The iPhone 5s Review

by Anand Lal Shimpi on September 17, 2013 9:01 PM EST

GPU Architecture

Dating back to the original iPhone, Apple has relied on GPU IP from Imagination Technologies. In recent years, the iPhone and iPad lines have pushed the limits of Img’s technology - integrating larger and higher performing GPUs than all other Img partners. Apple definitely attempted to obfuscate its underlying GPU architecture this time around for some reason.

As far back as a year ago I was getting tips that Apple would integrate Imagination Technologies’ PowerVR Series 6 GPU this generation, but I needed more proof.

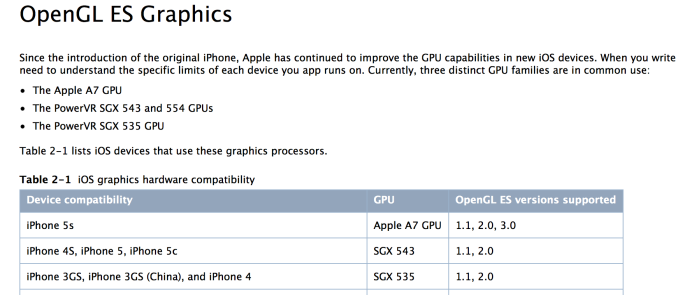

The first indication that this isn’t simply a Series 5XT part is the listed support for OpenGL ES 3.0. The only GPUs presently shipping with ES 3.0 support are Qualcomm’s Adreno 3xx (which is only integrated into Qualcomm silicon), ARM’s Mali-T6xx series and PowerVR Series 6. NVIDIA’s Tegra 4 GPU doesn’t support ES 3.0, and it’s too early for Logan/mobile Kepler. With Qualcomm out of the running that leaves Mali and PowerVR Series 6.

“All GPUs used in iOS devices use tile-based deferred rendering (TBDR).”

Apple’s developer documentation lists all of its SoCs as supporting Tile Based Deferred Rendering (TBDR). If you ask Imagination, they will tell you that they are the only ones with a true TBDR implementation. However if you look at ARM’s Mali-T6xx documentation, ARM also claims its GPU is a TBDR.

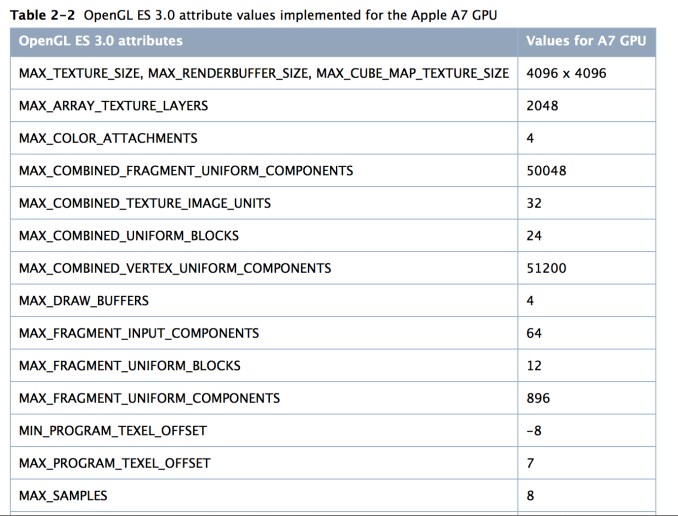

The real hint comes with anti-aliasing support:

Look at the last line in the screenshot above: MAX_SAMPLES = 8. That’s a reference to 8 sample MSAA, a mode that isn’t supported by ARM’s Mali-T6xx hardware (which supports 4x and 16x AA modes), only by PowerVR Series 6.

There are some other hints here that Apple is talking about PowerVR Series 6 when it references the A7’s GPU:

“The A7 GPU processes all floating-point calculations using a scalar processor, even when those values are declared in a vector. Proper use of write masks and careful definitions of your calculations can improve the performance of your shaders. For more information, see “Perform Vector Calculations Lazily” in OpenGL ES Programming Guide for iOS.

Medium- and low-precision floating-point shader values are computed identically, as 16-bit floating point values. This is a change from the PowerVR SGX hardware, which used 10-bit fixed-point format for low-precision values. If your shaders use low-precision floating point variables and you also support the PowerVR SGX hardware, you must test your shaders on both GPUs.”

As you’ll see below, both of the highlighted statements apply directly to PowerVR Series 6. With Series 6 Imagination moved to a scalar architecture, and ImgTec’s developer documentation confirms that the lowest precision mode supported is FP16.

All of this leads me to confirm what I heard would be the case a while ago: Apple’s A7 is the first shipping mobile silicon to integrate ImgTec’s PowerVR Series 6 GPU.

Now let’s talk about hardware.

The A7’s GPU Configuration: PowerVR G6430

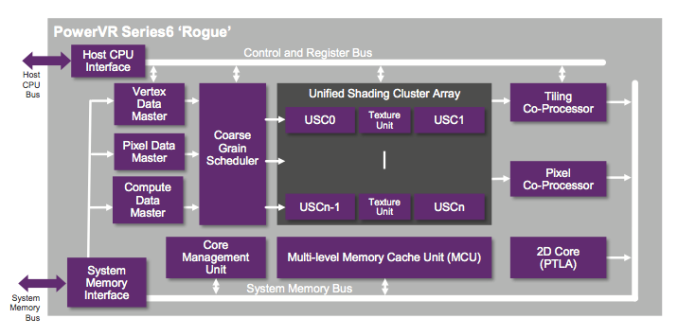

Previously known by the codename Rogue, Series 6 has been announced in the following configurations:

PowerVR Series 6 "Rogue"

| GPU | # of Clusters | # of FP32 Ops per Cluster | Total FP32 Ops | Optimization |
| --- | --- | --- | --- | --- |
| G6100 | 1 | 64 | 64 | Area |
| G6200 | 2 | 64 | 128 | Area |
| G6230 | 2 | 64 | 128 | Performance |
| G6400 | 4 | 64 | 256 | Area |
| G6430 | 4 | 64 | 256 | Performance |
| G6630 | 6 | 64 | 384 | Performance |

Based on the delivered performance, as well as some other products coming down the pipeline, I believe Apple’s A7 features a variant of the PowerVR G6430 - a 4 cluster Rogue design optimized for performance (vs. area).

Rogue is a significant departure from the Series 5XT architectures that were used in the iPhone 5, iPad mini and iPad 4. The biggest change? A switch to a fully scalar architecture, similar to the present day AMD and NVIDIA GPUs.

Whereas with 5XT designs we talked about multiple cores, the default replication unit in Rogue is a “cluster”. Each core in 5XT replicated all hardware, while each cluster in Rogue only replicates the shader ALUs and texture hardware. Rogue is still a unified architecture, but the front end no longer scales 1:1 with shading hardware. In many ways this approach is a lot more sensible, as it is typically how you build larger GPUs.

In 5XT, each core featured a number of USSE2 pipelines. Each pipeline was capable of a Vec4 multiply+add plus one additional FP operation that could be dual-issued under the right circumstances. Img never detailed the latter so I always counted flops by looking at the number of Vec4 MADs. If you count each MAD as two FP operations, that’s 8 FLOPS per USSE2 pipe. Each USSE2 was a SIMD, so that’s one instruction across all 4 slots and not some combination of instructions. If you had 3 MADs and something else, the USSE2 pipe would act as a Vec3 unit instead. The same goes for 1 or 2 MADs.

With Rogue the USSE2 pipe is gone and replaced by a Unified Shading Cluster (USC). Each USC is a 16-wide scalar SIMD, with each slot capable of up to 4 FP32 ops per clock. Doing the math, a single USC implementation can do a total of 64 FP32 ops per clock - the equivalent of a PowerVR SGX 543MP2. Efficiency obviously goes up with a scalar design, so realizable performance will likely be higher on Rogue than 5XT.

The A7 is a four cluster design, so that’s four USCs or a total of 256 FP32 ops per clock. At 200MHz that would give the A7 twice the peak theoretical performance of the GPU in the iPhone 5. And from what I’ve heard, the G6430 is clocked much higher than that.
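The per-clock arithmetic in the last few paragraphs can be sketched in a few lines of Python. This is only a back-of-the-envelope check built from the figures quoted in this article; the helper names are made up for illustration, and the iPhone 5 GPU clock it prints is inferred from the "twice the peak" claim, not a confirmed specification.

```python
# Back-of-the-envelope peak FP32 throughput, using only figures quoted in the article.
# The helper names are hypothetical; they are not part of any Imagination or Apple API.

def usse2_ops_per_clock(pipes: int) -> int:
    """Series 5XT: one Vec4 MAD per USSE2 pipe per clock, each MAD counted as 2 ops."""
    return pipes * 4 * 2

def usc_ops_per_clock(clusters: int) -> int:
    """Rogue: each USC is 16 lanes wide, with up to 4 FP32 ops per lane per clock."""
    return clusters * 16 * 4

def gflops(ops_per_clock: int, mhz: float) -> float:
    """Peak GFLOPS for a given per-clock throughput and clock speed."""
    return ops_per_clock * mhz / 1000.0

sgx543mp2 = usse2_ops_per_clock(8)   # 8 USSE2 pipes (iPad 2/iPhone 4S)
sgx543mp3 = usse2_ops_per_clock(12)  # 12 USSE2 pipes (iPhone 5)
one_usc = usc_ops_per_clock(1)
a7 = usc_ops_per_clock(4)

print(one_usc, sgx543mp2)  # a single USC matches a 543MP2 per clock
print(a7)                  # four USCs in the A7

a7_at_200 = gflops(a7, 200)
# Inference only: if 200MHz gives twice the iPhone 5's peak, the implied
# iPhone 5 GPU clock follows from its 12 pipes.
implied_iphone5_mhz = (a7_at_200 / 2) * 1000.0 / sgx543mp3
print(a7_at_200, round(implied_iphone5_mhz, 1))
```

These are peak numbers only. They say nothing about how often the extra dual-issued FP operation on 5XT or all four ops per slot on Rogue can actually be used.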

There’s more graphics horsepower under the hood of the iPhone 5s than there is in the iPad 4. While I don’t doubt the iPad 5 will once again widen that gap, keep in mind that the iPhone 5s has less than 1/4 as many pixels as the iPad 4. If I were a betting man, I’d say that the A7 was designed not only to drive the 5s’ 1136 x 640 display, but also a higher res panel in another device. Perhaps an iPad mini with Retina Display? There’s no solving the memory bandwidth requirements, but the A7 surely has enough compute power to get there. There's also the fact that Apple has prior history of delivering an SoC that wasn’t perfect for the display (e.g. iPad 3).
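For a sense of scale, here is the pixel math as a quick sketch. The iPhone 5s resolution comes from the text; the iPad 4's 2048 x 1536 panel is not stated in this article and is supplied here as a known spec.

```python
# Pixel counts for the two panels being compared.
# Assumption: the iPad 4 resolution (2048 x 1536) is supplied from its published
# spec; only the iPhone 5s resolution appears in the article itself.
iphone_5s = 1136 * 640
ipad_4 = 2048 * 1536

ratio = iphone_5s / ipad_4
print(iphone_5s, ipad_4, round(ratio, 3))
```

The ratio lands a little under one quarter, which is why a GPU with iPad 4 class throughput has so much headroom at the 5s' native resolution.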

GPU Performance

As I mentioned earlier, the iPhone 5s is the first Apple device (and the first consumer device in the world) to ship with a PowerVR Series 6 GPU. The G6430 inside the A7 is a 4 cluster configuration, with each cluster featuring a 16-wide array of SIMD pipelines. Whereas the 5XT generation of hardware used a 4-wide vector architecture (1 pixel per clock, all 4 color components per SIMD), Series 6 moves to a scalar design (think 16 pixels per clock, one color component per clock). Each pipeline is capable of two FP32 MADs per clock, for a total of 64 FP32 operations per clock, per cluster. With the A7's 4 cluster GPU, that works out to be the same throughput per clock as the 4th generation iPad.

Imagination claims its new scalar architecture is not only more computationally dense, but also far more efficient. With the transition to scalar GPU architectures in the PC space we generally saw efficiency go up, so I'm inclined to believe Imagination's claims here.

Apple claims up to a 2x increase in GPU performance compared to the iPhone 5, but just looking at the raw numbers in the table below there's far more shading power under the hood of the A7 than only "2x" the A6.

Mobile SoC GPU Comparison

| | PowerVR SGX 543 | PowerVR SGX 543MP2 | PowerVR SGX 543MP3 | PowerVR SGX 543MP4 | PowerVR SGX 554 | PowerVR SGX 554MP2 | PowerVR SGX 554MP4 | PowerVR G6430 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Used In | - | iPad 2/iPhone 4S | iPhone 5 | iPad 3 | - | - | iPad 4 | iPhone 5s |
| SIMD Name | USSE2 | USSE2 | USSE2 | USSE2 | USSE2 | USSE2 | USSE2 | USC |
| # of SIMDs | 4 | 8 | 12 | 16 | 8 | 16 | 32 | 4 |
| MADs per SIMD | 4 | 4 | 4 | 4 | 4 | 4 | 4 | 32 |
| Total MADs | 16 | 32 | 48 | 64 | 32 | 64 | 128 | 128 |
| GFLOPS @ 300MHz | 9.6 GFLOPS | 19.2 GFLOPS | 28.8 GFLOPS | 38.4 GFLOPS | 19.2 GFLOPS | 38.4 GFLOPS | 76.8 GFLOPS | 76.8 GFLOPS |
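The GFLOPS row in the table above follows directly from the SIMD and MAD counts. As a rough sketch, assuming each MAD counts as two floating point operations and every part runs at the table's 300MHz normalization clock (which is not any device's real GPU clock), the row can be reproduced like this:

```python
# Reproduce the "GFLOPS @ 300MHz" row from the SIMD and MAD counts in the table.
# One MAD is counted as two floating point operations, as in the article.
CLOCK_GHZ = 0.3  # the table's normalization clock, not a real device clock

gpus = {
    # name: (number of SIMDs, MADs per SIMD)
    "SGX 543": (4, 4),
    "SGX 543MP2": (8, 4),
    "SGX 543MP3": (12, 4),
    "SGX 543MP4": (16, 4),
    "SGX 554": (8, 4),
    "SGX 554MP2": (16, 4),
    "SGX 554MP4": (32, 4),
    "G6430": (4, 32),
}

def peak_gflops(simds: int, mads_per_simd: int, clock_ghz: float = CLOCK_GHZ) -> float:
    """Peak GFLOPS = total MADs per clock x 2 ops per MAD x clock in GHz."""
    return simds * mads_per_simd * 2 * clock_ghz

table = {name: round(peak_gflops(*cfg), 1) for name, cfg in gpus.items()}
for name, value in table.items():
    print(f"{name:12s} {value:5.1f} GFLOPS")
```

At the same clock the G6430 and the iPad 4's SGX 554MP4 come out identical, so any real world gap between them has to come from clock speed and architectural efficiency, not raw ALU count.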

GFXBench 2.7

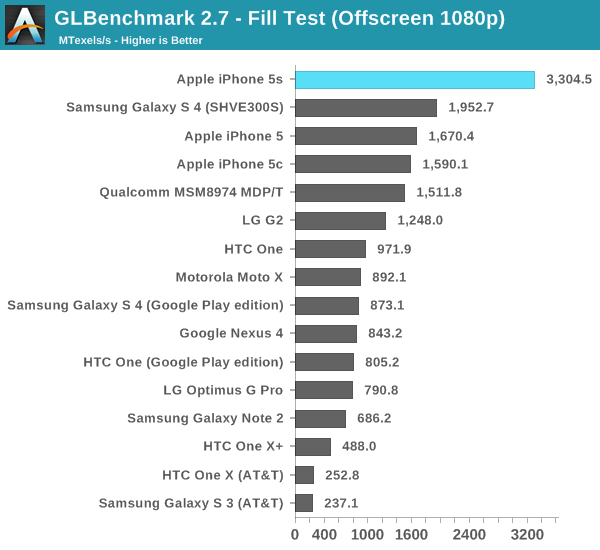

As always, we'll start with GFXBench (formerly GLBenchmark) 2.7 to get a feel for the theoretical performance of the new GPU. GFXBench 2.7 tends to be more computationally bound than most games as it is frequently used by silicon vendors to stress hardware, not by game developers as an actual performance target. Keep that in mind as we get to some of the actual game simulation results.

Twice the fill rate of the iPhone 5, and clearly higher than anything else we've tested. Rogue is off to a good start.

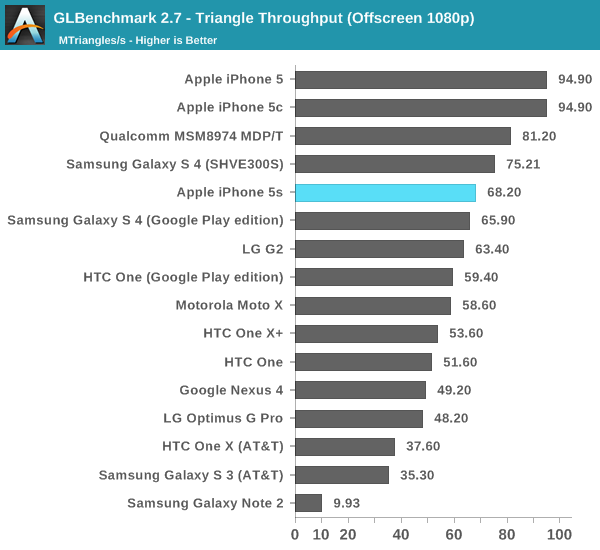

What's this? A performance regression? Remember what I said earlier in the description of Rogue. Whereas 5XT replicated nearly the entire GPU for "multi-core" versions, multi-cluster versions of Rogue only replicate at the shader array. The result? We don't see the same sort of peak triangle setup scaling we did back on multi-core 5XT parts. I don't suppose this will be a big issue in actual games (and likely a better balance between triangle setup/rasterization and shading hardware), but it's worth pointing out.

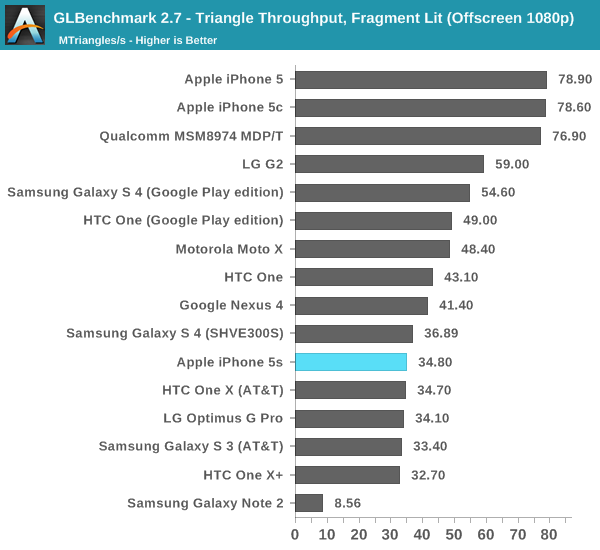

This is the worst case regression we've seen from 5XT to Rogue. It's clear that per chip triangle rates are much higher on Rogue, but with a many core implementation of 5XT there's just no competing. I suspect this change is part of how Img was able to increase the overall density of Rogue vs. 5XT. Now the question is whether or not this regression will actually appear in games. To find out we turn to the two game simulation tests in GFXBench 2.7, starting with the most stressful one: T-Rex HD.

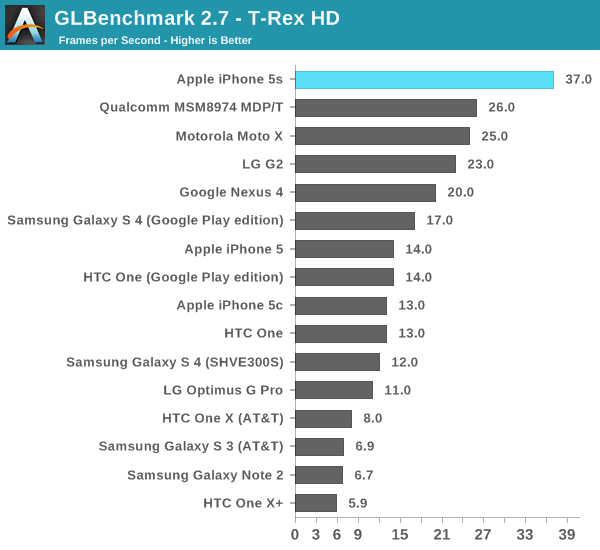

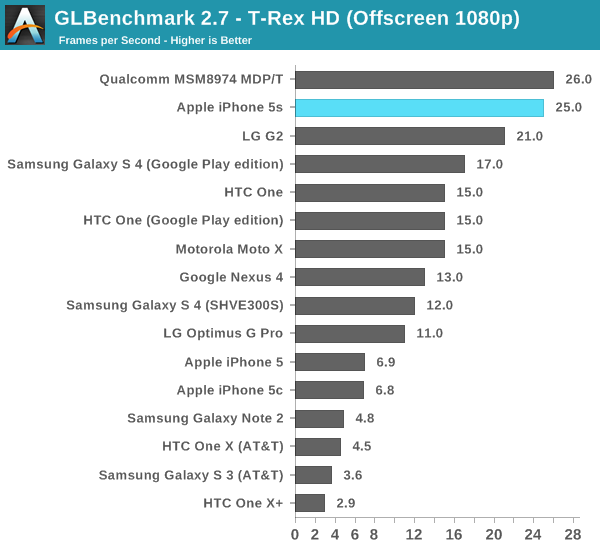

As always, the onscreen tests run at a device's native resolution with v-sync enabled, while the offscreen results happen at 1080p and v-sync disabled.

As expected, the G6430 in the iPhone 5s is more than twice the speed of the part in the iPhone 5. It is also the first device we've tested capable of breaking the 30 fps barrier in T-Rex HD at its native resolution. Given just how ridiculously intense this test is, I think it's safe to say that the iPhone 5s will probably have a longer shelf life from a gaming perspective than any previous iPhone.

The offscreen test helps put the G6430's performance in perspective. Here we show the 5s barely falling behind Qualcomm's Adreno 330 (Snapdragon 800). There are obvious thermal differences between the two platforms, but if we look at the G2's performance (another S800/A330 part) we get a better indication of an apples to apples comparison. Looking at the leaked Nexus 5 (also S800/A330) T-Rex HD scores confirms what we're seeing above. In a phone, it looks like the G6430 is a bit quicker than Qualcomm's Adreno 330.

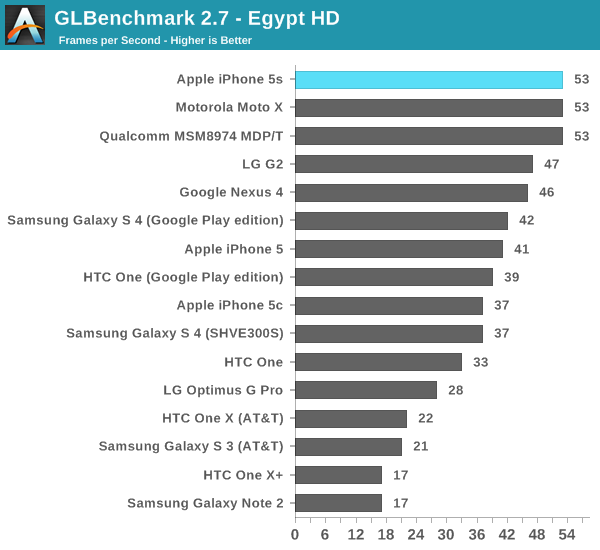

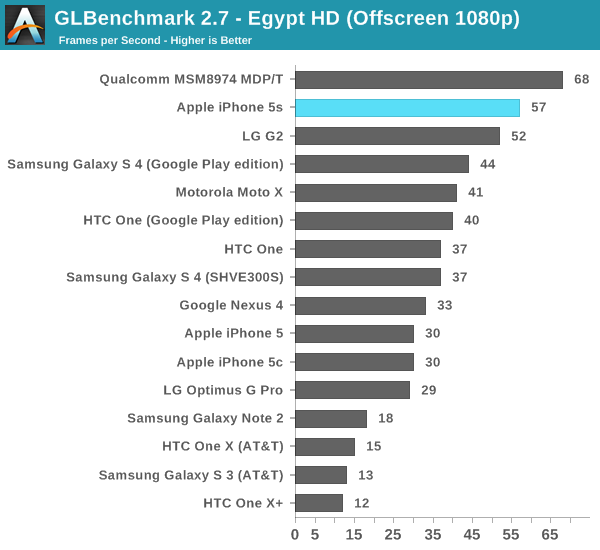

The Egypt HD tests are much lighter and a lot closer to the workload of a lot of games on the store today, although admittedly they are getting a little light.

Onscreen we're at Vsync already, something the iPhone 5 wasn't capable of doing. The 5s should have no issues running most games at 30 fps.

Offscreen, even at 1080p, performance doesn't really change. Qualcomm's Adreno 330 is definitely faster, at least in the MDP/T. In the G2, its performance lags behind the G6430. I really want to measure power on these things.

3DMark

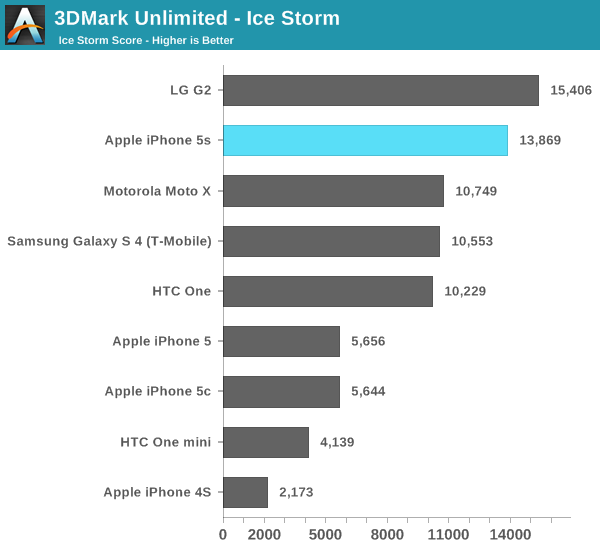

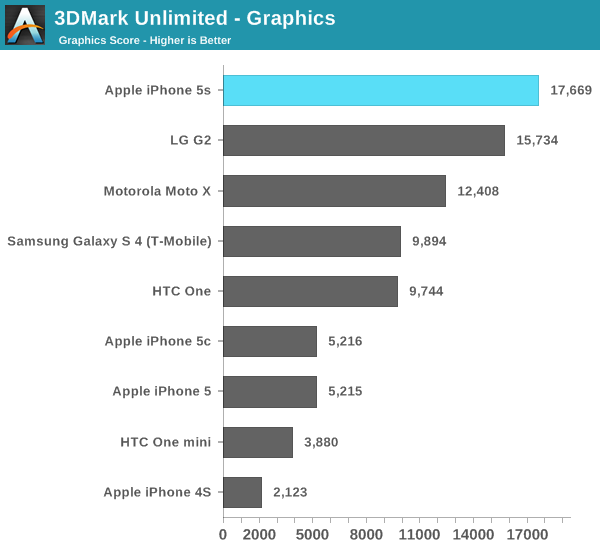

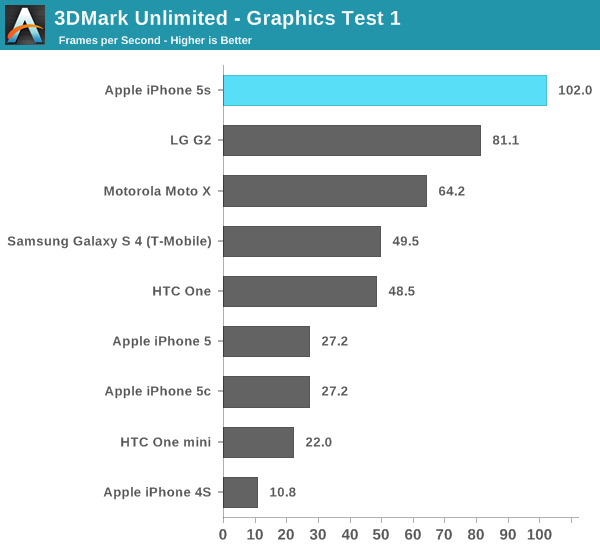

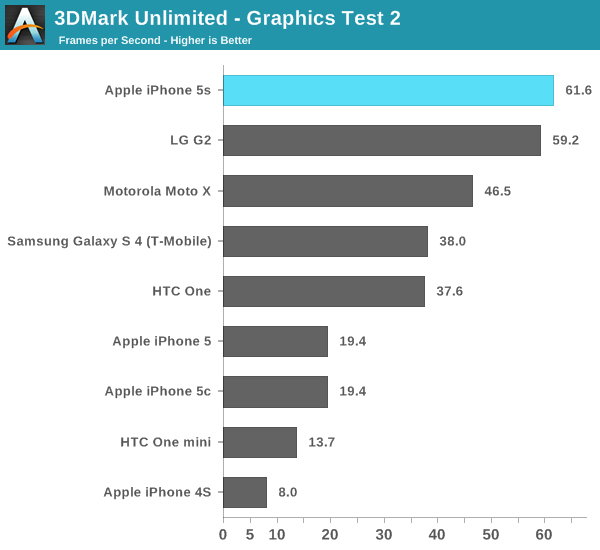

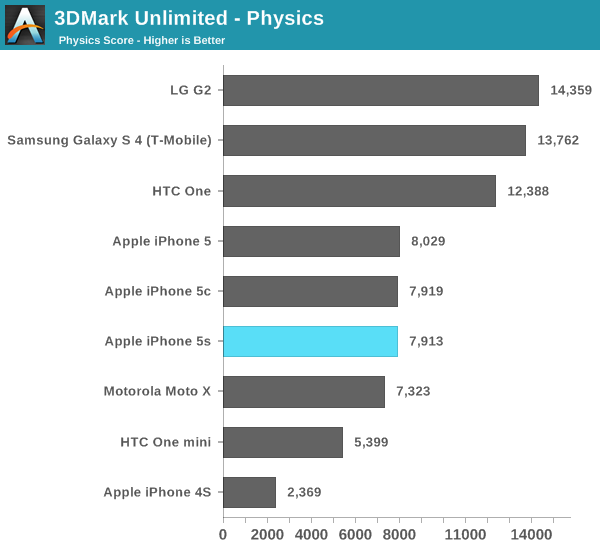

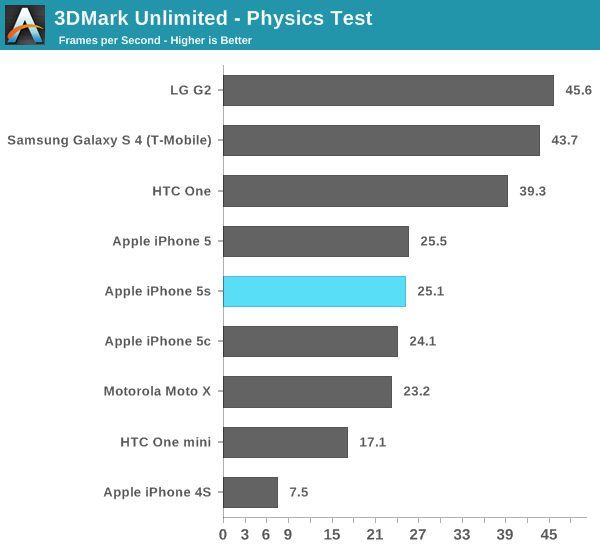

3DMark finally released an iOS version of its benchmark, enabling us to run the 5s through on yet another test. As we've discovered in the past, 3DMark is far more of a CPU test than GFXBench. While CPU load will range from 6 - 25% during GFXBench, we'll see usage greater than 50% on 3DMark - even during the graphics tests. 3DMark is also heavily threaded, with its physics test taking advantage of quad-core CPUs.

With the iOS release of the benchmark comes a new offscreen rendering mode called Unlimited. The benchmark is the same but it renders offscreen at 720p with the display only being updated once every 100 frames to somewhat get around vsync. Because of the new test we don't have a ton of comparison data, so I've included whatever we've got at this point.

3DMark ends up being more of a CPU and memory bandwidth test than a raw shader performance test like GFXBench and Basemark X. The 5s falls behind the Snapdragon 800/Adreno 330 based G2 in overall performance. To find out how much of that is GPU performance and how much is a lack of four cores, let's look at the subtests.

The graphics test is more GPU bound than CPU bound, and here we see the G6430 based iPhone 5s pull ahead. Note how well the Moto X does because of its very high clocked CPU cores rather than its GPU. Although this is a graphics test, it's still heavily influenced by CPU performance.

The physics test hits all four cores in a quad-core chip and explains the G2 pulling ahead in overall performance. Note that I saw no improvement in this largely CPU bound test, leading me to believe that we've hit some sort of a bug with 3DMark and the new Cyclone core.

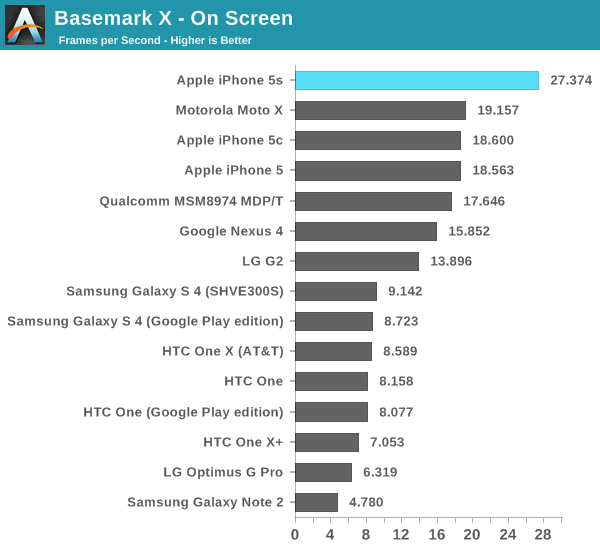

Basemark X

Basemark X is a new addition to our mobile GPU benchmark suite. There are no low level tests here, just some game simulation tests run at both onscreen (device resolution) and offscreen (1080p, no vsync) settings. The scene complexity is far closer to GLBenchmark 2.7 than the new 3DMark Ice Storm benchmark, so frame rates are pretty low.

Unfortunately I ran into a bug with Basemark X under iOS 7 on the iPhone 5/5c/5s that prevented the off screen test from completing, leaving me only with on-screen results at native resolution.

Once again we're seeing greater than 2x scaling comparing the iPhone 5s to the 5.

464 Comments


Dug - Wednesday, September 18, 2013

"maybe you should hire a developer to write native cross platform benchmark tools"

WHY? It is not going to make any difference. Developers aren't writing native cross platform programs. If they can take advantage of anything that's in the system, then show it off.

That would be like telling car manufacturers to redesign a hybrid to gas only to compare with all the other gas only cars.

ddriver - Wednesday, September 18, 2013

"Developers aren't writing native cross platform programs"

Maybe it is about time you crawl from under the rock you are living under... Any even remotely concerned with performance and efficiency application pretty much mandates it is a native application. It would be incredibly stupid to not do it, considering the "closest" to native language Java is like 2-3 times slower and uses 10-20 times as much memory.

Dug - Wednesday, September 18, 2013

Exactly my point! "native cross platform" Each cross-platform solution can only support a subset of the functionality included in each native platform.

It doesn't get you anywhere to produce a native cross platform benchmark tool.

Again you have to mitigate to names and snide comments because you are wrong.

ddriver - Wednesday, September 18, 2013

What you talk about is I/O, events and stuff like that. When it comes to pure number crunching the same code can execute perfectly well for every platform it is compiled against. Actually, some modern frameworks go even further than that and provide ample abstractions. For example, the same GUI application can run on Windows, Linux, MacOS, iOS and Android, apart from a few other minor platforms.

Anand Lal Shimpi - Wednesday, September 18, 2013

Ultimately the benchmarking problem is being fixed, just not on the time scale that we want it to. I figured we'd be better off by now, and in many ways we are (WebXPRT, Browsermark are both steps in the right direction, we have more native tools under Android now) but part of the problem is there was a long period of uncertainty around what OSes would prevail. Now that question is finally being answered and we're seeing some real investment in benchmarks. Trust me, I tried to do a lot behind the scenes over the past 4 years (some of which Brian and I did recently) but this stuff takes time. I remember going through this in the early days of the PC industry too though, I know how it all ends - it'll just take a little time to get there.

Actually I think 128-bit registers might've been optional on v7.

The only reason encryption results are in that table is because that's how Geekbench groups them. There's no nefarious purpose there (note that it's how we've always reported the Geekbench results, as they are reported in the test themselves).

In my experience with the 5s I haven't noticed any performance regressions compared to the 5/5c. I'm not saying they don't exist and I'll continue to hunt, it's just that they aren't there now. I believe I established the reasoning for why you'd want to do this early, and again we're talking about at most 12 months before they should start the move to 64-bit anyways. Apple tends to like its ISA transitions to be as quick and painless as possible, and moving early to ARMv8 makes a lot of sense in that light. Sure they are benefiting from the marketing benefits of having a feature that no one else does, but what company doesn't do that?

I don't believe the move to 64-bit with Cyclone was driven first and foremost by marketing. Keep in mind that this architecture was designed when a bunch of certain ex-AMDers were over there too...

Take care,

Anand

BrooksT - Wednesday, September 18, 2013

Why would Anand write cross-platform benchmarks that have no connection to real world usage? Especially when you then complain that the 64 bit coverage isn't real world enough?

ddriver - Wednesday, September 18, 2013

For starters, putting the encryption results in their own graph, like every other review before that, and side to side comparison between geekbench ST/MT scores for A7 and competing v7 chips would be a good start toward a more objective and less biased article.

And I know I am asking a lot, but an edit feature in the comment section is long overdue...

TheBretz - Wednesday, September 18, 2013

For what it's worth this is NOT a case of LITERALLY comparing "Apples" and "Oranges" - it is a case of comparing "Apple" and many other manufacturers, but there was no fruit involved in the comparison, only smartphones and tablets.

ddriver - Wednesday, September 18, 2013

Apples to oranges is a figure of speech, it has nothing to do with the company apple... It concerns comparing incomparable objects which is the case of completely different JS implementations on iOS and Android.

Arbee - Wednesday, September 18, 2013

Please name any case when AT's benchmarks and reviews have been proven to be biased or inaccurate. There's a reason the writers at other sites consider AT the gold standard for solid technical commentary (Engadget, Gizmodo, and the Verge all regularly credit AT on technical stories). As far as bias, have you *heard* Brian cooing about practically wanting to marry the Nexus 5? ;-)

I think what actually happened here is that apparently Apple engineers listen to the AT podcast, because aside from 802.11ac and the screen size the 5S is designed almost perfectly to AT's well-known and often-stated specifications. It hits all of Anand's chip architecture geekery hot buttons in a way that Samsung's mashups of off-the-shelf parts never will, and they used Brian's exact line "Bigger pixels means better pictures" in the presentation. And naturally, if someone gives you what you want, you're likely to be happy with it. This is why people have Amazon gift lists ;-)

Krait's 128 bit SIMD definitely helps, but it won't match true v8 architecture designs. I've written commercially shipping ARM assembly, and there's a *lot* of cruft in the older ISA that v8 cleans right up. And it lets compilers generate *much* more favorable code. I'll be surprised if the next Snapdragons aren't at least 32-bit v8. Qualcomm has been pretty forward-looking aside from their refusal to cooperate with the open-source community (Freedreno FTW).

As far as 64 bit on less than 4 GB of RAM, it enables applications to more freely operate on files in NAND without taking up huge amounts of RAM (via mmap(), which the Linux kernel in Android of course also has). Apps like Loopy HD and MultiTrack DAW (not to mention Apple's own iMovie and GarageBand) will definitely be able to take advantage.