Samsung SSD 840 EVO Review: 120GB, 250GB, 500GB, 750GB & 1TB Models Tested

by Anand Lal Shimpi on July 25, 2013 1:53 PM EST

Posted in: Storage, SSDs, Samsung, TLC, Samsung SSD 840

Endurance

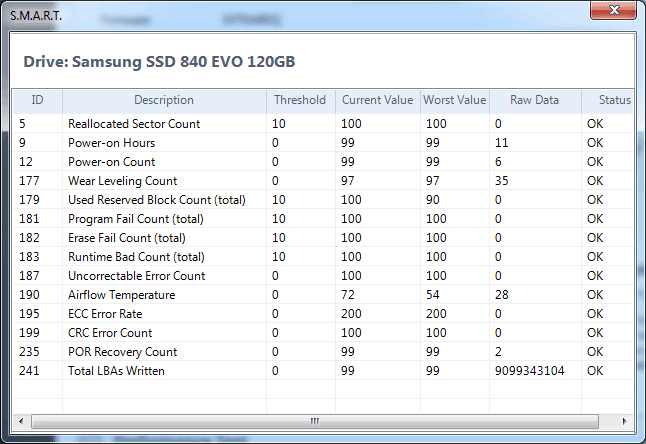

Samsung isn't quoting any specific TB written values for how long it expects the EVO to last, although the drive comes with a 3-year warranty. Samsung doesn't explicitly expose total NAND writes in its SMART details, but we do get a wear level indicator (SMART attribute 177). The wear level indicator starts at 100 and decreases linearly down to 1 from what I can tell. At 1 the drive will have exceeded all of its rated p/e cycles, but in reality the drive's total endurance can significantly exceed that value.

Kristian calculated around 1000 p/e cycles using the wear level indicator on his 840 sample last year, or roughly 242TB of writes, but we've seen reports of much more than that (e.g. this XtremeSystems user who saw around 432TB of writes to a 120GB SSD 840 before it died). I used Kristian's method of mapping sequential writes to the wear level indicator to determine the rated number of p/e cycles on my 120GB EVO sample:

| Samsung SSD 840 EVO Endurance Estimation | Samsung SSD EVO 120GB |
| --- | --- |
| Total Sequential Writes | 4338.98 GiB |
| Wear Level Counter Decrease | -3 (raw value = 35) |
| Estimated Total Writes | 144632.81 GiB |
| Estimated Rated P/E Cycles | 1129 cycles |
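The arithmetic behind this estimate can be sketched in a few lines of Python. This is only an illustration under two assumptions: that the wear level indicator falls linearly with writes, and that one p/e cycle corresponds to one full write of the 128 GiB of NAND on the 120GB drive. The function names are invented for this example and are not part of any Samsung tool.

```python
import math


def estimate_total_writes_gib(writes_gib: float, wear_points_used: int) -> float:
    """Extrapolate the writes needed to consume all 100 indicator points.

    Assumes the wear level indicator falls linearly with writes.
    """
    if wear_points_used <= 0:
        raise ValueError("the indicator must have dropped by at least 1 point")
    return writes_gib * 100 / wear_points_used


def estimate_rated_pe_cycles(total_writes_gib: float, nand_capacity_gib: float) -> int:
    """Treat one p/e cycle as one full write of the raw NAND capacity."""
    return math.floor(total_writes_gib / nand_capacity_gib)


# Figures from the 120GB EVO sample: 4338.98 GiB written, indicator down by 3.
total = estimate_total_writes_gib(writes_gib=4338.98, wear_points_used=3)
cycles = estimate_rated_pe_cycles(total, nand_capacity_gib=128)
print(f"Estimated total writes: {total:.2f} GiB")
print(f"Estimated rated p/e cycles: {cycles}")
```

With these inputs the sketch lands on the same 1129 cycles. Its total-writes figure comes out a fraction of a GiB below the 144632.81 GiB quoted in the table, presumably because the published sequential write total is itself rounded.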

Using the 1129 cycle estimate (which is an improvement compared to last year's 840 sample), I put together the table below to put any fears of endurance to rest. I even upped the total NAND writes per day to 50 GiB just to be a bit more aggressive than the typically quoted 10 - 30 GiB for consumer workloads:

| Samsung SSD 840 EVO Estimated Lifespan vs. Capacity | 120GB | 250GB | 500GB | 750GB | 1TB |
| --- | --- | --- | --- | --- | --- |
| NAND Capacity | 128 GiB | 256 GiB | 512 GiB | 768 GiB | 1024 GiB |
| NAND Writes per Day | 50 GiB | 50 GiB | 50 GiB | 50 GiB | 50 GiB |
| Days per P/E Cycle | 2.56 | 5.12 | 10.24 | 15.36 | 20.48 |
| Estimated P/E Cycles | 1129 | 1129 | 1129 | 1129 | 1129 |
| Estimated Lifespan in Days | 2890 | 5780 | 11560 | 17341 | 23121 |
| Estimated Lifespan in Years | 7.91 | 15.83 | 31.67 | 47.51 | 63.34 |
| Estimated Lifespan in Years @ 100 GiB of Writes per Day | 3.95 | 7.91 | 15.83 | 23.75 | 31.67 |

Endurance scales linearly with NAND capacity, and the worst-case scenario at 50 GiB of writes per day is just under 8 years of constant write endurance. Keep in mind that this assumes a write amplification of 1. If you're doing 50 GiB of 4KB random writes, you'll blow through this a lot sooner. For a client system, however, you're probably looking at something much lower than 50 GiB per day of total writes to NAND, random IO included.
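To make the write amplification caveat concrete, here is a rough Python sketch of the lifespan calculation with write amplification as an explicit parameter. It assumes NAND writes equal host writes multiplied by the write amplification factor, and uses 365-day years. The function name and the factor of 3 are hypothetical, chosen only to show how the estimate scales; they are not measured values for the EVO.

```python
def lifespan_years(
    nand_capacity_gib: float,
    host_writes_gib_per_day: float,
    pe_cycles: int = 1129,
    write_amplification: float = 1.0,
) -> float:
    """Estimate the years until the rated p/e cycles are used up."""
    # NAND sees more writes than the host issues when amplification exceeds 1.
    nand_writes_per_day = host_writes_gib_per_day * write_amplification
    days_per_pe_cycle = nand_capacity_gib / nand_writes_per_day
    return days_per_pe_cycle * pe_cycles / 365


capacities_gib = {"120GB": 128, "250GB": 256, "500GB": 512, "750GB": 768, "1TB": 1024}

for model, capacity in capacities_gib.items():
    ideal = lifespan_years(capacity, 50)
    # write_amplification=3.0 is a made-up value, used only to show the scaling.
    amplified = lifespan_years(capacity, 50, write_amplification=3.0)
    print(f"{model}: {ideal:.2f} years at WA=1, {amplified:.2f} years at WA=3")
```

The estimate is inversely proportional to write amplification, so a factor of 3 cuts every figure to a third. The values in the table appear to be truncated rather than rounded, so the last digit printed here can differ by one.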

I also threw in a line of lifespan estimates at 100 GiB of writes per day. It's only in this configuration that we see the 120GB drive drop below 4 years of endurance, again based on a conservative p/e estimate. Even with 100 GiB of NAND writes per day, once you get beyond the 250GB EVO we're back into absolutely ridiculous endurance estimates.

Keep in mind that all of this is based on 1129 p/e cycles, which is likely less than half of the practical p/e cycle limit of Samsung's 19nm TLC NAND. Go ahead and double those numbers and you're probably looking at reality. Endurance isn't a concern for client systems using the 840 EVO.

137 Comments

verjic - Thursday, February 13, 2014

I'm talking about the 120GB version.

verjic - Thursday, February 13, 2014

Also, what are Write/Read IOMeter Bootup and Write/Read IOMeter IOMix, and what do their speeds mean? Thank you.

AhDah - Thursday, May 15, 2014

The TRIM validation graph shows a tremendous performance drop after a few gigs of writes; even after a TRIM pass, the write speed is only 150MBps. Does this mean that once the drive is 75%-85% filled up, the write speed will always be slow?

I'm tempted to get the Crucial M550 because of this downfall.

njwhite2 - Wednesday, October 15, 2014

Kudos to Anand Lal Shimpi! This is one of the finest reviews I have ever read! No jargon. No unexplained acronyms. Quantitative testing of compared items instead of reviewer bias. Explanation of why the measured criteria are important to the end user! Just fabulous! I read dozens of reviews each week, so I'm surprised I had not stumbled upon Anandtech before. I'm (for sure) going to check out their smartphone reviews. Most of those on other sites are written by Apple fans or Android fans and really don't tell the potential purchaser what they need to know to make the best choice for them.

IT_Architect - Thursday, October 22, 2015

I would be interested in how reliable they are. The reason I ask is that one time, when the Intel SLC technology was just under two years old and there was no MLC or TLC, I needed speed to load a database from scratch 6 times an hour during incredible traffic times. I was getting requests from users at the rate of 66 times a second per server, each of which required many reads of the database. I couldn't swap databases without breaking sessions, and mirror and unmirror did not work well. I would have had to pay a ton to duplicate a redundant array in SSDs. Then I asked the data center how many of these drives they had out there. They (SoftLayer) queried and came back with 700+. Then I asked them how many they'd had go bad. They queried their records and it was none, not so much as a DOA. I reasoned from that that I would be just as likely to have a chassis or disk controller go bad. None of them have any moving parts, and the drives are low power. Those were enterprise drives of course, because that's all there was at that time.

In 2011 I bought a Dell M6600. Dell was shipping them with the Micron SSD. I was concerned about the lifespan, since I do a lot of reading and writing with it and work constantly with virtual machines while prototyping, and VM files are huge. It calculated out to 4 years. While researching, I came across the situation where Dell had "cold feet" about OEMing them due to lifespan. Micron/Intel demonstrated to them 10x the rated lifespan, which convinced Dell. There was plenty of other trouble with consumer-level SSDs at the time, which gave the technology a bad name. The Micron/Intel was one of the very few solid citizens at the time. I went with it, although I didn't buy my M6600 with it because Dell had such a premium on them. I had two problems with the drive, which by the way is still in service today. The first was that the drive just stopped doing anything one day. I called Micron and it turned out to be a bug in the firmware. If I had had two drives arrayed, it would have stopped both at the same time. I upgraded the firmware and never had that problem again. The second time, I was troubleshooting the laptop and putting the battery in and out, and the computer would no longer boot. I again called Micron. It was by design. They said to disconnect the power, pull the battery, and wait one hour. I did, and it has worked perfectly since. If I had had an array, it would have stopped both at the same time.

Today, the market is much more mature and the technology no longer has a bad name. A redundant array is no substitute for a backup anyway. A redundant array brings business continuity and speed. Are we just as likely, or more so, to have a motherboard go out? We don't have redundant motherboards without having another entire computer. Unlike power supplies and CPUs, SSDs are low-current devices. I'm considering the possibility that we may be at the point, even for consumer-level drives, where redundant arrays for SSDs are just plain silly.

Gothmoth - Sunday, January 8, 2017

In real life my RAPID test showed no benefits AT ALL!! All it does is make low-level benchmarks look better.

You should test with real applications. RAPID is a useless feature.

jeyjey - Friday, June 7, 2019

I have one of these drives. A small part on it has burned out, and I need to find a replacement for it so I can try to get at the data inside. Please help.