Intel's Haswell - An HTPC Perspective: Media Playback, 4K and QuickSync Evaluated

by Ganesh T S on June 2, 2013 8:15 PM EST

Decoding and Rendering Benchmarks

Our decoding and rendering benchmarks consist of standardized test clips (varying codecs, resolutions and frame rates) played back through MPC-HC. GPU usage is tracked through GPU-Z logs, and power consumption at the wall is also reported. The former hints at whether frame drops could occur, while the latter indicates how efficiently the platform handles the most common HTPC task: video playback.
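The article does not describe how the GPU-Z logs are summarized. As a minimal sketch, assuming the log is comma-separated text with a header row and a load column labeled `GPU Load [%]` (the exact label is an assumption and may differ between GPU-Z versions and GPUs), average and peak utilization for a clip could be computed as below. The `busy_threshold` heuristic for flagging possible frame drops is hypothetical, and the sample log contains illustrative values, not measurements from this review.

```python
import csv
import io
from statistics import mean

# Assumed column label; check the header of your own GPU-Z log.
LOAD_COLUMN = "GPU Load [%]"

def summarize_gpu_load(log_text, column=LOAD_COLUMN, busy_threshold=90.0):
    """Return (average load, peak load, fraction of samples >= busy_threshold)."""
    reader = csv.reader(io.StringIO(log_text), skipinitialspace=True)
    header = [name.strip() for name in next(reader)]
    idx = header.index(column)  # raises ValueError if the column is missing
    loads = []
    for row in reader:
        if len(row) <= idx:
            continue  # skip blank or truncated lines
        try:
            loads.append(float(row[idx]))  # float() tolerates surrounding spaces
        except ValueError:
            continue  # skip non-numeric entries
    if not loads:
        raise ValueError("no load samples found")
    busy = sum(1 for load in loads if load >= busy_threshold)
    return mean(loads), max(loads), busy / len(loads)

# Illustrative log excerpt (made-up values)
sample_log = (
    "Date , GPU Load [%] , Memory Used [MB]\n"
    "2013-06-01 10:00:00 , 40 , 200\n"
    "2013-06-01 10:00:01 , 60 , 201\n"
    "2013-06-01 10:00:02 , 95 , 202\n"
    "2013-06-01 10:00:03 , 45 , 200\n"
)
avg, peak, busy_share = summarize_gpu_load(sample_log)
print(f"avg {avg:.1f}%, peak {peak:.0f}%, busy {busy_share:.0%}")
```

A sustained high share of "busy" samples would be one rough signal to go back and check the renderer's dropped-frame counter; the threshold itself is a judgment call.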

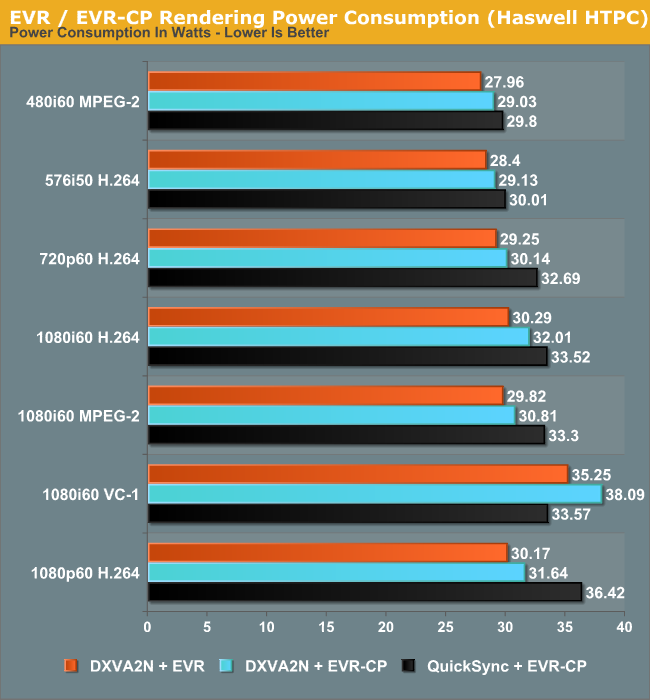

Enhanced Video Renderer (EVR) / Enhanced Video Renderer - Custom Presenter (EVR-CP)

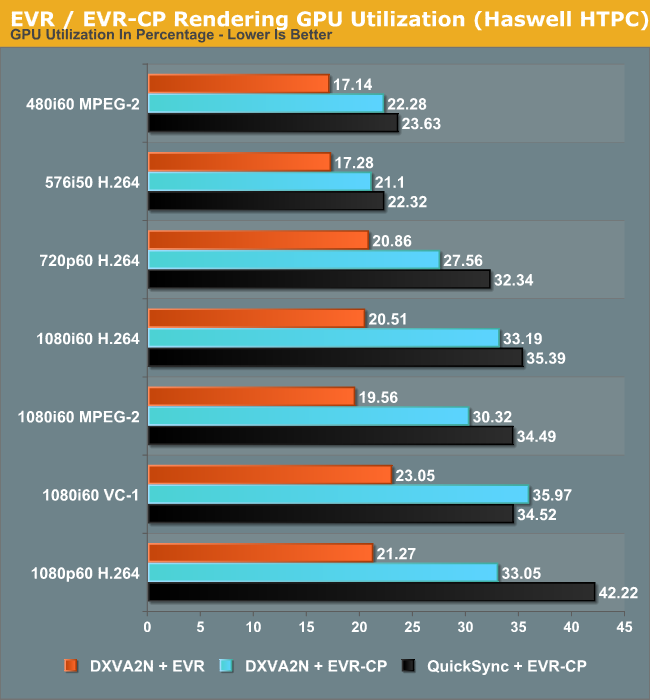

The Enhanced Video Renderer is the default renderer made available by Windows 8. It is lean in terms of system resource usage, since most of the work is offloaded directly to the GPU drivers. EVR is mostly used in conjunction with native DXVA2 decoding, and it does not tax the GPU much even though hardware decoding is also taking place. Deinterlacing and other post-processing settings were left at their defaults in the Intel HD Graphics Control Panel (these settings apply when EVR is chosen as the renderer).

EVR-CP is the default renderer used by MPC-HC. It is usually paired with MPC-HC's own video decoders, some of which are DXVA-enabled. For our tests, however, we used the DXVA2 mode provided by the LAV Video Decoder. In addition to DXVA2 Native, we also used the QuickSync decoder developed by Eric Gur (an Intel applications engineer) and released to the open source community. It makes use of the specialized decoder blocks in the GPU's QuickSync engine.

Power consumption shows a tremendous decrease across all streams compared to the passive Ivy Bridge HTPC. Admittedly, that system uses a 55W TDP Core i3-3225, but, as we will see later, the power consumption of the Haswell build at full load is very close to that of the Core i3-3225 build despite the lower (35W) TDP of the Core i7-4765T.

In general, using the QuickSync decoder results in higher power consumption because the decoded frames are copied back to DRAM before being sent to the renderer. With native DXVA2 decoding, the frames are passed directly to the renderer without the copy-back step. The odd man out in the power numbers is the interlaced VC-1 clip, where QuickSync decoding is more efficient than native DXVA2. This is because the open source native DXVA2 decoders currently do not support interlaced VC-1 on Intel GPUs, so that clip is decoded in software. The QuickSync decoder, on the other hand, handles it with the GPU's VC-1 bitstream decoder.
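To get a feel for the extra memory traffic the copy-back step implies, here is a back-of-the-envelope sketch. It assumes the decoder outputs 8-bit 4:2:0 frames in the NV12 layout (a full-resolution luma plane plus a half-size interleaved chroma plane, i.e. 1.5 bytes per pixel) and counts only one copy of each decoded frame into system memory. The helper names are hypothetical, and real drivers may move more data than this.

```python
def nv12_frame_bytes(width, height):
    # NV12: width*height bytes of luma plus width*height/2 bytes of
    # interleaved 2x2-subsampled chroma, i.e. 1.5 bytes per pixel
    return width * height * 3 // 2

def copy_back_bytes_per_second(width, height, fps):
    # One copy of every decoded frame into system memory (a lower bound)
    return nv12_frame_bytes(width, height) * fps

for label, w, h, fps in [("1080p24", 1920, 1080, 24), ("1080p60", 1920, 1080, 60)]:
    rate = copy_back_bytes_per_second(w, h, fps)
    print(f"{label}: {rate / 1e6:.1f} MB/s")
```

For a 1080p60 stream this comes to roughly 186.6 MB/s in one direction. It is only a lower bound on the added traffic and does not translate directly into watts.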

The GPU utilization numbers follow a similar track to the power consumption numbers. As discussed earlier, EVR is very lean on the GPU, and the utilization numbers confirm this. QuickSync appears to stress the GPU more, possibly because of the copy-back step for the decoded frames.

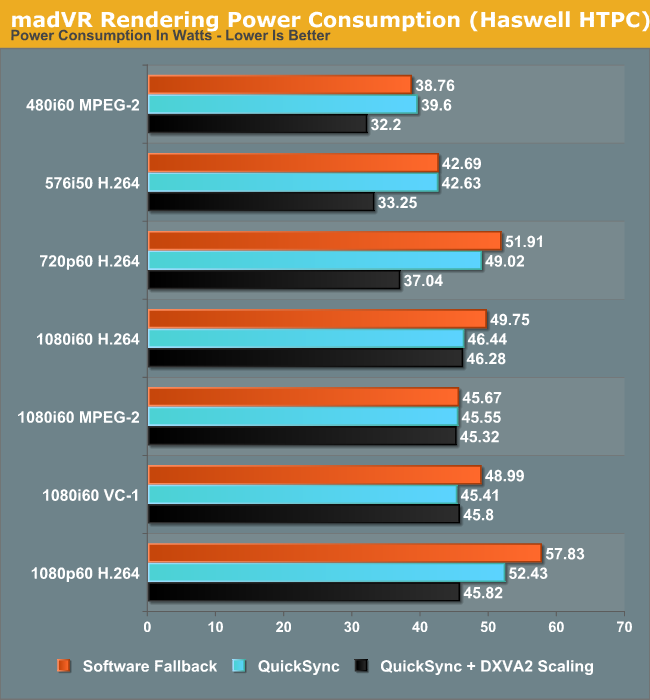

madVR

Videophiles often prefer madVR as their renderer because of its choice of scaling algorithms as well as myriad other features. In our recent Ivy Bridge HTPC review, we found that with DDR3-1600 DRAM, it was straightforward to get madVR working with the default scaling algorithms for all material at 1080p60 or below. In the meantime, Mathias Rauen (the developer of madVR) has added more features. To alleviate the ringing artifacts introduced by the Lanczos algorithm, an optional anti-ringing filter was introduced. A more intensive scaling algorithm (Jinc) was also added. Unfortunately, enabling either the anti-ringing filter with Lanczos or any variant of Jinc resulted in a lot of dropped frames. Haswell's HD4600 is simply not powerful enough for these madVR features.
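madVR's own scaler and anti-ringing code are not described here, so the following is only an illustration of the underlying problem, not madVR's implementation. It evaluates a standard 3-lobe Lanczos kernel, L(x) = sinc(x) · sinc(x/a) for |x| < a, on a one-dimensional row and shows that the kernel's negative lobes overshoot at a hard edge, which is what appears on screen as ringing. The `clamp_anti_ringing` helper is a generic clamping approach swapped in for illustration; madVR's filter may work quite differently. Jinc is omitted because it needs a Bessel function.

```python
import math

def sinc(x):
    # Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) = 1
    if x == 0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)

def lanczos(x, a=3):
    # Lanczos kernel with `a` lobes; zero outside |x| < a
    if abs(x) >= a:
        return 0.0
    return sinc(x) * sinc(x / a)

def lanczos_weights(frac, a=3):
    # 2*a taps for an output sample at offset `frac` (0 <= frac < 1) past
    # source pixel k; tap i (from -a+1 to a) weights source pixel k + i
    taps = [lanczos(frac - i, a) for i in range(-a + 1, a + 1)]
    total = sum(taps)
    return [t / total for t in taps]  # normalize so flat areas stay flat

def sample_at(src, k, frac, a=3):
    # Interpolate a 1-D row at position k + frac, repeating the border pixels
    weights = lanczos_weights(frac, a)
    last = len(src) - 1
    return sum(w * src[min(max(k + i, 0), last)]
               for w, i in zip(weights, range(-a + 1, a + 1)))

def clamp_anti_ringing(value, neighbors):
    # Hypothetical generic anti-ringing: clamp the result to the range of the
    # nearest source pixels so it cannot overshoot them
    return min(max(value, min(neighbors)), max(neighbors))

edge = [0, 0, 0, 0, 1, 1, 1, 1]           # a hard dark-to-bright edge
ringing = sample_at(edge, 4, 0.5)          # halfway between edge[4] and edge[5]
fixed = clamp_anti_ringing(ringing, [edge[4], edge[5]])
print(f"Lanczos3: {ringing:.3f}, clamped: {fixed}")
```

Here the interpolated value next to the edge comes out at about 1.11 instead of 1.0; clamping removes the overshoot at the cost of some extra per-pixel work, which hints at why such filters add GPU load.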

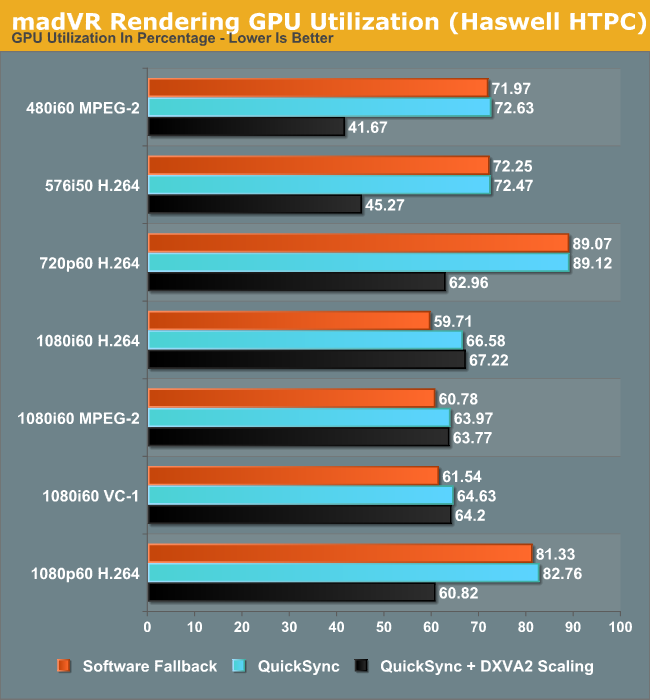

It is not possible to use native DXVA2 decoding with madVR because the decoded frames are not made available directly to an external renderer. (Update: It is possible to use DXVA2 Native with madVR since v0.85. Future HTPC articles will carry updated benchmarks.) To work around this issue, LAV Video Decoder offers three options. The first is software decoding. The other two are QuickSync and DXVA2 Copy-Back; in either case, the decoded frames are brought back to system memory for madVR to take over.

One of the interesting features integrated into recent madVR releases is the option to perform DXVA scaling. This is particularly interesting for HTPCs with Intel GPUs, because the Intel HD Graphics engine uses dedicated hardware to implement the DXVA scaling API calls. AMD and NVIDIA apparently implement those calls using pixel shaders. To obtain a frame of reference, we repeated our benchmark process using DXVA2 scaling for both luma and chroma instead of the default settings.
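The decoder/renderer constraints described above can be summarized as a small lookup, shown here as a sketch. The `DecodePath` type, `usable_with_madvr` and `preferred_path` are hypothetical illustrations of the article's reasoning, not part of LAV Video Decoder or madVR; the only version fact encoded is the update note that DXVA2 Native works with madVR from v0.85 onward. The preference order (avoid the copy-back when possible) is an assumption drawn from the power discussion, not a recommendation made in the article.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DecodePath:
    name: str
    gpu_decode: bool        # decoding done by the GPU's fixed-function blocks
    frames_in_sysmem: bool  # decoded frames end up in system memory

# LAV Video Decoder modes discussed in the text (hypothetical model)
PATHS = [
    DecodePath("Software", gpu_decode=False, frames_in_sysmem=True),
    DecodePath("QuickSync", gpu_decode=True, frames_in_sysmem=True),
    DecodePath("DXVA2 Copy-Back", gpu_decode=True, frames_in_sysmem=True),
    DecodePath("DXVA2 Native", gpu_decode=True, frames_in_sysmem=False),
]

def usable_with_madvr(path, madvr_version):
    # Before v0.85 madVR needed frames in system memory; per the update
    # note, DXVA2 Native works from v0.85 onward.
    return path.frames_in_sysmem or madvr_version >= (0, 85)

def preferred_path(madvr_version):
    # Assumed order: GPU decode without copy-back, then GPU decode with
    # copy-back, then software. sort() is stable, so QuickSync stays ahead
    # of DXVA2 Copy-Back as listed in PATHS.
    candidates = [p for p in PATHS if usable_with_madvr(p, madvr_version)]
    candidates.sort(key=lambda p: (not p.gpu_decode, p.frames_in_sysmem))
    return candidates[0]

print(preferred_path((0, 84)).name)
print(preferred_path((0, 85)).name)
```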

One interesting aspect to note here is that the power consumption numbers shift much further toward the lower end when DXVA2 scaling is used. This suggests that the GPU's video post-processing logic has been made more power-efficient.

DXVA scaling results in much lower GPU usage, for SD material in particular, with a corresponding decrease in average power consumption. Users with Intel GPUs can continue to enjoy other madVR features while giving up the choice of a wide variety of scaling algorithms.

95 Comments


meacupla - Monday, June 3, 2013

there's like... exactly one mITX FM2 mobo even worth considering out of a grand total of two. One of them catches on fire and neither of them have bluetooth or wifi.

LGA1155 and LGA1150 have at least four each.

TomWomack - Monday, June 3, 2013

The mITX FM2 motherboard that I bought last week has bluetooth and wifi; they're slightly kludged in (they are USB modules apparently glued into extra USB ports that they've added), but I don't care.

The Haswell mITX boards aren't available from my preferred supplier yet, so I've gone for micro-ATX for that machine.

BMNify - Monday, June 3, 2013

I know you're trolling, but the fact is more people are content with converting their 5-year-old C2D cookie-cutter desktop into an HTPC ($50 video card + case + IR receiver = job done) than buying all-new kit.

We reached the age of "good enough" years ago. Money is tight, and with all the available gadgets on the market (and more to come), people are looking to make it go as far as possible. Intel is going to find it harder and harder to get their high-margin silicon into the homes of the average family. Good-enough ARM mobile + good-enough x86 allows people to own more devices and still pay the bills. It looks like AMD has accepted this; they've taken their lumps and are moving forward in this "new world". I'm not sure what Intel's long-term strategy is, but I'm a bit concerned.

Veroxious - Tuesday, June 4, 2013

Agreed 100%. I am using an old Dell SFF with an E2140 LGA775 CPU running XBMCbuntu. It works like a charm. I can watch movies while simultaneously adding content. That PC is near silent. What incentive do I have for upgrading to a Haswell based system? None whatsoever.

kallogan - Monday, June 3, 2013

2.0 GHz, seriously??? The 45W Sandy Bridge Core i5-2500T was at 2.3 GHz with 3.3 GHz turbo. LOL at this useless CPU gen.

kallogan - Monday, June 3, 2013

Forget my comment, I didn't see it was an i7 with 8 threads. 35W TDP is not bad either. But the 45W Core i7-3770T would still smoke this.

Montago - Monday, June 3, 2013

I must be blind... I don't see the regression you are talking about.

HD4000 QSV output usually gets smudgy and blocky, and I don't see that in HD4600... so I think you are wrong in your statements.

Comparing the frames, there is little difference, and none I would ever notice while watching the movie on a handheld device like a tablet or smartphone.

The biggest problem with QSV is not the quality, but the filesize :-(

QSV is usually 2x larger than x264

ganeshts - Monday, June 3, 2013

Montago,

Just one example of the many that can be unearthed from the galleries:

Look at Frame 4 in the 720p encodes in full size here:

HD4600: http://images.anandtech.com/galleries/2836/QSV-720...

HD4000: http://images.anandtech.com/galleries/2839/QSV-HD4...

Look at the horns of the cattle in the background to the right of the horse. The HD4000 version is sharper and more faithful to the original compared to the HD4600 version, even though the target bitrate is the same.

In general, when looking at the video being played back, these differences added up to a very evident quality loss.

Objectively, even the FPS took a beating with the HD4600 compared to the HD4000. There is definitely some driver issue in handling the new Haswell QuickSync modes.

nevcairiel - Monday, June 3, 2013 - link

The main Haswell performance test from Anand at least showed improved QuickSync performance over Ivy, as well as something called the "Better Quality" mode (which was slower than Ivy, though it was never specified what it really meant).

ganeshts - Monday, June 3, 2013

Anand used MediaEspresso (CyberLink's commercial app), while I used HandBrake. As far as I remember, MediaEspresso doesn't allow specifying a target bitrate (at least when I used it a year or so back), just better quality or better performance. HandBrake allows setting a target bitrate, so the modes being used by HandBrake might be completely different from those used by MediaEspresso.

As we theorize, some new Haswell modes which are probably not being used by MediaEspresso are making the transcodes longer and worse in quality.