Intel's Haswell - An HTPC Perspective: Media Playback, 4K and QuickSync Evaluated

by Ganesh T S on June 2, 2013 8:15 PM EST

Network Streaming Performance - Netflix and YouTube

The move from Windows 7 to Windows 8 as our platform of choice for HTPCs has made Silverlight unnecessary. The Netflix app on Windows 8 supports high definition streams (up to a bit rate of 3.85 Mbps for all ISPs, more if the ISP is Super HD enabled) as well as 5.1-channel Dolby Digital Plus audio on selected titles.
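To put the 3.85 Mbps figure in perspective, the following is a rough sketch of how much data such a stream moves over time. It assumes a constant bit rate, decimal units (1 Mbps = 1,000,000 bits per second, 1 GB = 1,000,000,000 bytes) and ignores protocol overhead; the function name `stream_gigabytes` is made up for illustration. Netflix streams are adaptive, so actual usage can differ.

```python
def stream_gigabytes(bitrate_mbps: float, minutes: float) -> float:
    """Approximate data transferred (decimal GB) by a constant-bitrate stream.

    Assumption: the bit rate stays fixed for the whole duration and
    protocol overhead is ignored.
    """
    total_bits = bitrate_mbps * 1_000_000 * minutes * 60  # bits per second * seconds
    total_bytes = total_bits / 8                           # 8 bits per byte
    return total_bytes / 1_000_000_000                     # bytes -> decimal gigabytes


# One hour at the 3.85 Mbps HD bit rate quoted above.
one_hour_gb = stream_gigabytes(3.85, 60)
print(f"{one_hour_gb:.2f} GB per hour")
```

Under these assumptions, an hour of the HD stream works out to roughly 1.7 GB.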

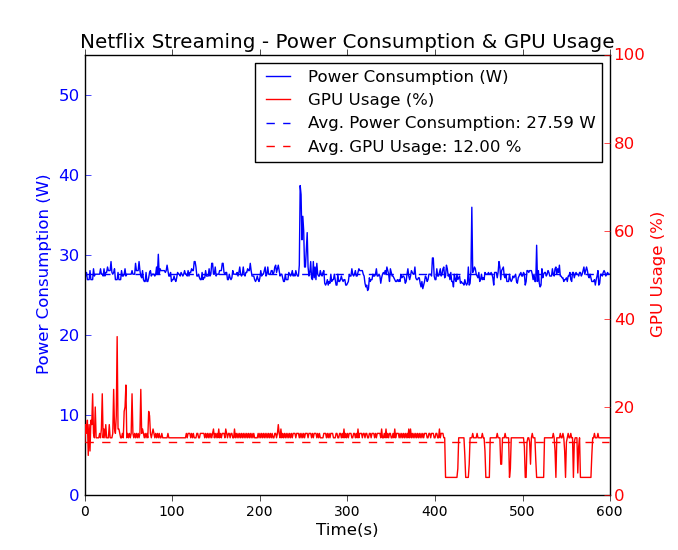

It is not immediately evident whether GPU acceleration is available or not from the OSD messages. However, GPU-Z reported an average GPU utilization of 12% throughout the time that the Netflix app was playing back video. The average power consumption is around 28 W.

Unlike Silverlight, Adobe Flash continues to maintain some relevance right now. YouTube continues to use Adobe Flash to serve FLV (at SD resolutions) and MP4 (at both SD and HD resolutions) streams. YouTube's debug OSD indicates whether hardware acceleration is being used or not.

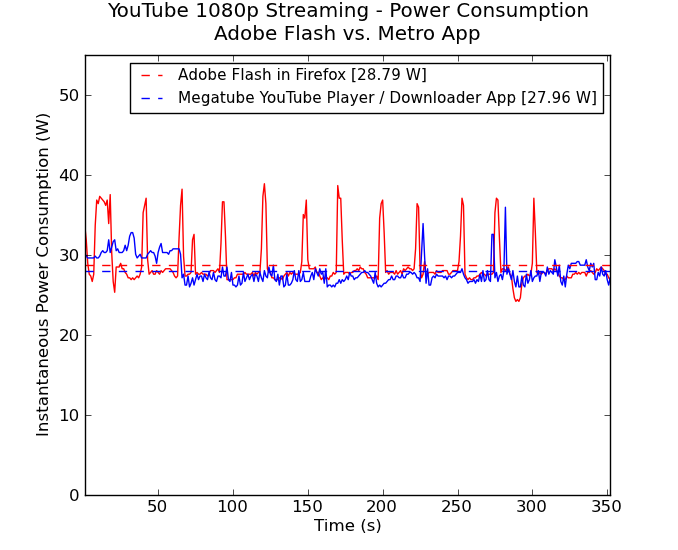

Windows 8 has plenty of YouTube apps. We chose the Megatube YouTube Player / Downloader which allows for stream selection. For our power measurement experiments, we chose the 1080p MP4 stream.

We can't be sure whether hardware acceleration is being used with the app, as there is no debug OSD. However, a look at the power consumption numbers reveals that both approaches consume less than 30 W on average. The difference in how the stream is cached is also visible in this graph, with the Flash approach preferring to download data in bursts while the app prefers to download the whole stream as quickly as possible. Streaming was done over Wi-Fi.

Comparing these numbers with what was obtained using the i3-3225 in a passive build shows that the Haswell build manages to be more efficient even when active cooling (with one big Antec Skeleton chassis fan and a CPU fan) is employed.

On the image quality front, Haswell doesn't seem to change anything here vs. Ivy Bridge. Performance was acceptable before, and it continues to be so here. The big difference is really the additional power savings.

95 Comments

HisDivineOrder - Tuesday, June 4, 2013 - link

I've heard this song and dance before. It never happens. Plus, limiting people to GDDR5 of pre-determined amounts for a HTPC seems like an exercise in being stupid.

Spunjji - Tuesday, June 4, 2013 - link

Yeah, I'm not buying that rumour. Doesn't make much sense.

JDG1980 - Sunday, June 2, 2013 - link

It's good to see that Intel finally got around to fixing the 23.976 fps bug, which was the biggest show-stopper for using their integrated graphics in a HTPC.

Regarding MadVR, I'd be interested to see more benchmarks. How good can you run the settings before hitting a wall with GPU utilization? How about on the GT3e - if this ever shows up in an all-in-one Mini-ITX board or NUC, it might be a great choice for HTPCs. Can it handle the good scaling algorithms?

My own experience is that anti-ringing doesn't add that much GPU load. I recently upgraded to a Radeon HD 7750, and it can handle anti-ringing filters on both luma and chroma with no problem. Chroma upscaling works fine with 3-tap Jinc, and luma also can do this with SD content (even interlaced), but for the most demanding test clip I have (1440x1080 interlaced 60 fields per second) I have to downgrade luma scaling to either Lanczos 3-tap or SoftCubic 80 to avoid dropping frames. (The output destination is a 1080p TV.) I suspect a 7790 or 7850 could handle 3-tap Jinc for both chroma and luma at all resolutions and frame rates up to full HD.

By the way, I found a weird problem with madVR - when I ran GPU-Z in the background to monitor load, all interlaced content dropped frames. Didn't matter what settings I used. Closing GPU-Z ended the problem. I was still able to monitor GPU load with Microsoft's "Process Explorer" application and this did not cause any problems.

Regarding 4K output, did you test whether DisplayPort 60 Hz 4K works properly? This might be of interest to some users, especially if the upcoming Asus 4K monitor is released at a reasonable price point. I know people have had to use some odd tricks to get the Sharp 4K monitor to do native resolution at 60 Hz with existing cards.

ganeshts - Monday, June 3, 2013 - link

This is very interesting.. What version of GPU-Z were you using? I will check whether my Jinc / anti-ringing dropped frames were due to GPU-Z running in the background. I did do the initial setup when GPU-Z wasn't active, but obviously the benchmark runs were run with GPU-Z active in the background. Did you see any difference in GPU load between GPU-Z and Process Explorer when playing interlaced content with dropped frames?

JDG1980 - Monday, June 3, 2013 - link

I was using the latest version (0.7.1) of GPU-Z. The strange part is that the GPU load calculation was correct - it was just dropping frames for no reason, it wasn't showing the GPU as being maxed out. For the video card, I was using the newest stable Catalyst driver (13.4, I believe) from AMD's website. The OS is Windows 7 Ultimate (64-bit).

The only reason I suspected GPU-Z is because after searching a bunch of forums to try to find out why interlaced content (even SD with low madVR settings) wouldn't play properly, I found one other user who said he had to turn off GPU-Z. I cannot say if this is a widespread issue and it's possible it may be limited to certain system configurations or certain GPUs. Still worth trying, though. Thanks for the follow-up!

tential - Sunday, June 2, 2013 - link

I don't understand the H.264 Transcoding Performance chart at all can someone help?

QuickSync does more FPS at 720p than 1080p. This makes sense.

The x264 on the Core i3 and core i7 post higher FPS in 1080p but lower in 720p. Why is this?

ganeshts - Monday, June 3, 2013 - link

Maybe the downscaling of the frame from 1080p to 720p sucks up more resources, causing the drop in FPS? Remember that the source is 1080p...

tential - Monday, June 3, 2013 - link

Ok so if I'm downscaling to 720p, why does FPS increase with quicksync, but decrease with the processor?

It's OPPOSITE directions one increases (quicksync) one decreases (cpu). Wouldn't it be the same both ways?

ganeshts - Monday, June 3, 2013 - link

Downscaling is also hardware accelerated in QS mode. Hardware transcode is faster for 720p decoded frames rather than 1080p decoded frames. The time taken to downscale is much lower than the time taken to transcode the 'extra pixels' in a 1080p version.

elian123 - Monday, June 3, 2013 - link

Ganesh, you mention "The Iris Pro 5200 GPUs are reserved for BGA configurations and unavailable to system builders". Does that imply that there won't be motherboards for sale with the 4770R integrated? Will the 4770R only be available in complete systems?