Intel's Haswell - An HTPC Perspective: Media Playback, 4K and QuickSync Evaluated

by Ganesh T S on June 2, 2013 8:15 PM EST

Decoding and Rendering Benchmarks

Our decoding and rendering benchmarks consist of standardized test clips (varying codecs, resolutions and frame rates) played back through MPC-HC. GPU usage is tracked through GPU-Z logs, and power consumption at the wall is also reported. The former hints at whether frame drops could occur, while the latter indicates the efficiency of the platform for the most common HTPC task: video playback.
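For readers who want to compute similar GPU usage averages from their own GPU-Z sensor logs, a minimal Python sketch follows. It assumes the log is a comma-separated text file whose header contains a column named `GPU Load [%]`; that column name, the function name `average_gpu_load` and the sample rows are illustrative assumptions (the rows are made-up values, not measurements from this review).

```python
import csv
import io


def average_gpu_load(log_text, column="GPU Load [%]"):
    """Return the mean of one sensor column from a GPU-Z style CSV log.

    The column name is an assumption; check the header of your own log.
    """
    reader = csv.reader(io.StringIO(log_text))
    # Header cells are padded with spaces, so strip them before matching.
    header = [name.strip() for name in next(reader)]
    idx = header.index(column)

    samples = []
    for row in reader:
        if len(row) <= idx:
            continue  # skip short or empty rows
        try:
            samples.append(float(row[idx].strip()))
        except ValueError:
            continue  # skip blank or non-numeric cells
    if not samples:
        raise ValueError("no numeric samples found")
    return sum(samples) / len(samples)


# Made-up sample rows for illustration only, not data from this review.
SAMPLE_LOG = """Date , GPU Load [%] ,
2013-06-02 20:15:01 , 12 ,
2013-06-02 20:15:02 , 18 ,
2013-06-02 20:15:03 , 15 ,
"""

print(average_gpu_load(SAMPLE_LOG))
```

An average close to 100% over a clip would suggest that frame drops are likely, which is how the utilization numbers are read in this section.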

Enhanced Video Renderer (EVR) / Enhanced Video Renderer - Custom Presenter (EVR-CP)

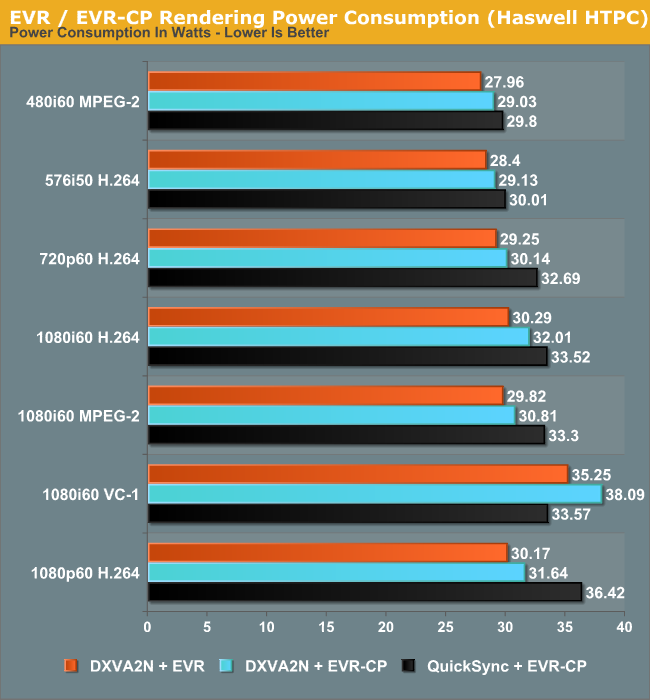

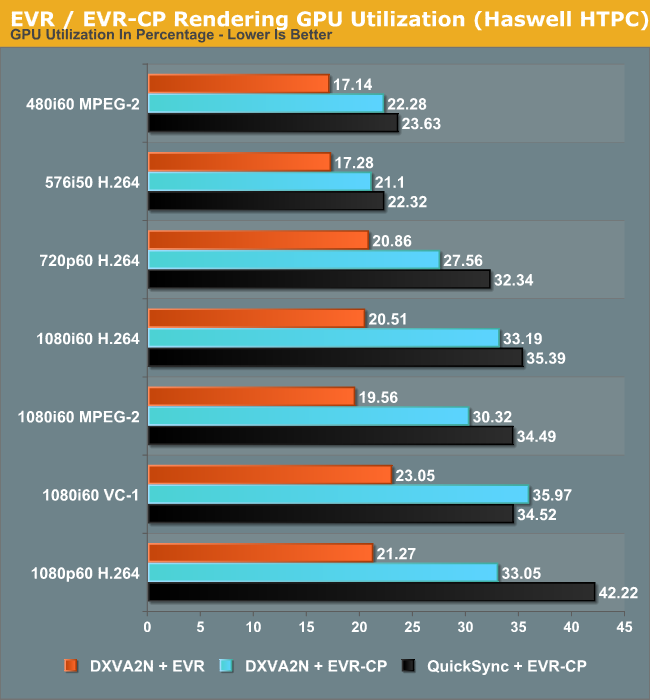

The Enhanced Video Renderer is the default renderer made available by Windows 8. It is a lean renderer in terms of usage of system resources since most of the aspects are offloaded to the GPU drivers directly. EVR is mostly used in conjunction with native DXVA2 decoding. The GPU is not taxed much by the EVR despite hardware decoding also taking place. Deinterlacing and other post processing aspects were left at the default settings in the Intel HD Graphics Control Panel (and these are applicable when EVR is chosen as the renderer).

EVR-CP is the default renderer used by MPC-HC. It is usually used in conjunction with MPC-HC's video decoders, some of which are DXVA-enabled. However, for our tests, we used the DXVA2 mode provided by the LAV Video Decoder. In addition to DXVA2 Native, we also used the QuickSync decoder developed by Eric Gur (an Intel applications engineer) and made available to the open source community. It makes use of the specialized decoder blocks available as part of the QuickSync engine in the GPU.

Compared to the Ivy Bridge build, power consumption shows a tremendous decrease across all streams. Admittedly, the passive Ivy Bridge HTPC uses a 55W TDP Core i3-3225, but, as we will see later, the power consumption at full load for the Haswell build is very close to that of the Core i3-3225 build despite the lower TDP of the Core i7-4765T.

In general, using the QuickSync decoder results in higher power consumption because the decoded frames are copied back to the DRAM before being sent to the renderer. With native DXVA decoding, the frames are passed directly to the renderer without the copy-back step. The odd man out in the power numbers is the interlaced VC-1 clip, where QuickSync decoding is more efficient than 'native DXVA2'. This is because the open source native DXVA2 decoders currently have no support for interlaced VC-1 on Intel GPUs, and hence, the decoding is done in software. The QuickSync decoder, on the other hand, is able to handle it with the VC-1 bitstream decoder in the GPU.
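To get a feel for the extra memory traffic that the copy-back step implies, here is a rough back-of-the-envelope calculation in Python. It assumes the decoded frames are 8-bit 4:2:0 in NV12 layout (1.5 bytes per pixel) and counts only a single copy from the decoder output to system memory; the function names are hypothetical, and the result says nothing about how many watts that traffic costs.

```python
def nv12_frame_bytes(width, height):
    """Size of one 8-bit NV12 frame (assumed format): a full-resolution
    luma plane plus an interleaved chroma plane at half that size."""
    return width * height * 3 // 2


def copy_back_rate_mb_s(width, height, fps):
    """Bytes copied per second for one copy-back pass, in MB/s (10^6 bytes)."""
    return nv12_frame_bytes(width, height) * fps / 1e6


for label, (w, h, fps) in {
    "1080p24": (1920, 1080, 24),
    "1080p60": (1920, 1080, 60),
}.items():
    print(f"{label}: {copy_back_rate_mb_s(w, h, fps):.1f} MB/s")
```

Under these assumptions a 1080p60 stream copies roughly 187 MB of frame data per second that native DXVA2 decoding would not have to move.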

The GPU utilization numbers follow a similar track to the power consumption numbers. EVR is very lean on the GPU, as discussed earlier. The utilization numbers provide proof of the same. QuickSync appears to stress the GPU more, possibly because of the copy-back step for the decoded frames.

madVR

Videophiles often prefer madVR as their renderer because of the choice of scaling algorithms available as well as myriad other features. In our recent Ivy Bridge HTPC review, we found that with DDR3-1600 DRAM, it was straightforward to get madVR working with the default scaling algorithms for all material at 1080p60 or lower. In the meantime, Mathias Rauen (developer of madVR) has developed more features. In order to alleviate the ringing artifacts introduced by the Lanczos algorithm, an option to enable an anti-ringing filter was introduced. A more intensive scaling algorithm (Jinc) was also added. Unfortunately, enabling either the anti-ringing filter with Lanczos or choosing any variant of Jinc resulted in a lot of dropped frames. Haswell's HD4600 is simply not powerful enough for these madVR features.
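The ringing mentioned above comes from the negative lobes of the Lanczos kernel, which cause overshoot next to sharp edges. The sketch below evaluates the standard Lanczos window in Python; it illustrates the textbook kernel only and is not madVR's implementation, and the function name `lanczos` and the default of 3 taps are our own choices.

```python
import math


def lanczos(x, taps=3):
    """Textbook Lanczos kernel: sinc(x) * sinc(x / taps) for |x| < taps."""
    if x == 0:
        return 1.0
    if abs(x) >= taps:
        return 0.0
    px = math.pi * x
    return taps * math.sin(px) * math.sin(px / taps) / (px * px)


# Sample the kernel at half-pixel steps; negative weights mark the lobes
# that produce overshoot (ringing) around sharp edges.
for i in range(0, 7):
    x = i / 2
    print(f"x = {x:.1f}  weight = {lanczos(x):+.4f}")
```

The weight between one and two pixels from the center is negative, which is why a bright edge can leave a faint dark halo next to it after scaling.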

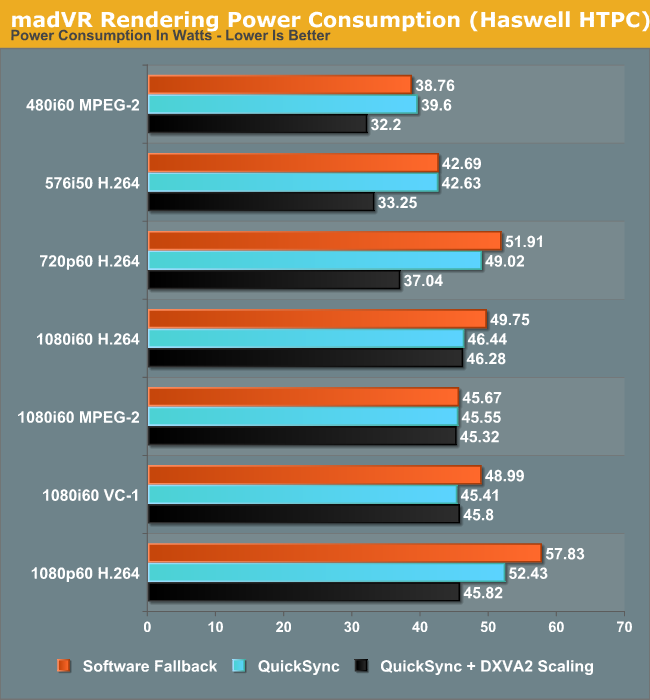

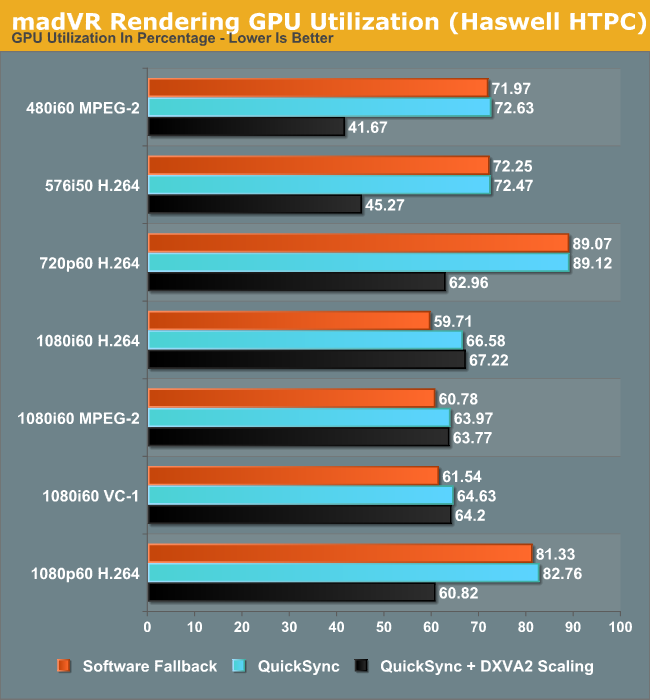

It is not possible to use native DXVA2 decoding with madVR because the decoded frames are not made available to an external renderer directly. (Update: It is possible to use DXVA2 Native with madVR since v0.85. Future HTPC articles will carry updated benchmarks.) To work around this issue, LAV Video Decoder offers three options. The first is software decoding. The other two are QuickSync and DXVA2 Copy-Back; in either case, the decoded frames are brought back to the system memory for madVR to take over.

One of the interesting features integrated into the recent madVR releases is the option to perform DXVA scaling. This is particularly interesting for HTPCs running Intel GPUs because the Intel HD Graphics engine uses dedicated hardware to implement support for the DXVA scaling API calls. AMD and NVIDIA apparently implement those calls using pixel shaders. In order to obtain a frame of reference, we repeated our benchmark process using DXVA2 scaling for both luma and chroma instead of the default settings.

One of the interesting aspects to note here is the fact that the power consumption numbers show a much larger shift towards the lower end when using DXVA2 scaling. This points to more power efficient updates in the GPU video post processing logic.

DXVA scaling results in much lower GPU usage for SD material in particular with a corresponding decrease in average power consumption too. Users with Intel GPUs can continue to enjoy other madVR features while giving up on the choice of a wide variety of scaling algorithms.

95 Comments

HisDivineOrder - Tuesday, June 4, 2013 - link

I've heard this song and dance before. It never happens. Plus, limiting people to GDDR5 of pre-determined amounts for a HTPC seems like an exercise in being stupid.

Spunjji - Tuesday, June 4, 2013 - link

Yeah, I'm not buying that rumour. Doesn't make much sense.

JDG1980 - Sunday, June 2, 2013 - link

It's good to see that Intel finally got around to fixing the 23.976 fps bug, which was the biggest show-stopper for using their integrated graphics in a HTPC.

Regarding MadVR, I'd be interested to see more benchmarks. How good can you run the settings before hitting a wall with GPU utilization? How about on the GT3e - if this ever shows up in an all-in-one Mini-ITX board or NUC, it might be a great choice for HTPCs. Can it handle the good scaling algorithms?

My own experience is that anti-ringing doesn't add that much GPU load. I recently upgraded to a Radeon HD 7750, and it can handle anti-ringing filters on both luma and chroma with no problem. Chroma upscaling works fine with 3-tap Jinc, and luma also can do this with SD content (even interlaced), but for the most demanding test clip I have (1440x1080 interlaced 60 fields per second) I have to downgrade luma scaling to either Lanczos 3-tap or SoftCubic 80 to avoid dropping frames. (The output destination is a 1080p TV.) I suspect a 7790 or 7850 could handle 3-tap Jinc for both chroma and luma at all resolutions and frame rates up to full HD.

By the way, I found a weird problem with madVR - when I ran GPU-Z in the background to monitor load, all interlaced content dropped frames. Didn't matter what settings I used. Closing GPU-Z ended the problem. I was still able to monitor GPU load with Microsoft's "Process Explorer" application and this did not cause any problems.

Regarding 4K output, did you test whether DisplayPort 60 Hz 4K works properly? This might be of interest to some users, especially if the upcoming Asus 4K monitor is released at a reasonable price point. I know people have had to use some odd tricks to get the Sharp 4K monitor to do native resolution at 60 Hz with existing cards.

ganeshts - Monday, June 3, 2013 - link

This is very interesting.. What version of GPU-Z were you using? I will check whether my Jinc / anti-ringing dropped frames were due to GPU-Z running in the background. I did do the initial setup when GPU-Z wasn't active, but obviously the benchmark runs were run with GPU-Z active in the background. Did you see any difference in GPU load between GPU-Z and Process Explorer when playing interlaced content with dropped frames?

JDG1980 - Monday, June 3, 2013 - link

I was using the latest version (0.7.1) of GPU-Z. The strange part is that the GPU load calculation was correct - it was just dropping frames for no reason, it wasn't showing the GPU as being maxed out. For the video card, I was using the newest stable Catalyst driver (13.4, I believe) from AMD's website. The OS is Windows 7 Ultimate (64-bit).

The only reason I suspected GPU-Z is because after searching a bunch of forums to try to find out why interlaced content (even SD with low madVR settings) wouldn't play properly, I found one other user who said he had to turn off GPU-Z. I cannot say if this is a widespread issue and it's possible it may be limited to certain system configurations or certain GPUs. Still worth trying, though. Thanks for the follow-up!

tential - Sunday, June 2, 2013 - link

I don't understand the H.264 Transcoding Performance chart at all can someone help?

QuickSync does more FPS at 720p than 1080p. This makes sense.

The x264 on the Core i3 and core i7 post higher FPS in 1080p but lower in 720p. Why is this?

ganeshts - Monday, June 3, 2013 - link

Maybe the downscaling of the frame from 1080p to 720p sucks up more resources, causing the drop in FPS? Remember that the source is 1080p...

tential - Monday, June 3, 2013 - link

Ok so if I'm downscaling to 720p, why does FPS increase with quicksync, but decrease with the processor?

It's OPPOSITE directions one increases (quicksync) one decreases (cpu). Wouldn't it be the same both ways?

ganeshts - Monday, June 3, 2013 - link

Downscaling is also hardware accelerated in QS mode. Hardware transcode is faster for 720p decoded frames rather than 1080p decoded frames. The time taken to downscale is much lower than the time taken to transcode the 'extra pixels' in a 1080p version.

elian123 - Monday, June 3, 2013 - link

Ganesh, you mention "The Iris Pro 5200 GPUs are reserved for BGA configurations and unavailable to system builders". Does that imply that there won't be motherboards for sale with the 4770R integrated? Will the 4770R only be available in complete systems?