Intel Iris Pro 5200 Graphics Review: Core i7-4950HQ Tested

by Anand Lal Shimpi on June 1, 2013 10:01 AM EST

Haswell GPU Architecture & Iris Pro

In 2010, Intel dropped the GMA (Graphics Media Accelerator) label from the integrated graphics in its Clarkdale and Arrandale CPUs. From that point on, all Intel graphics would be known as Intel HD Graphics. With certain versions of Haswell, Intel once again parts ways with its old brand and introduces a new one. This time the change is much more significant.

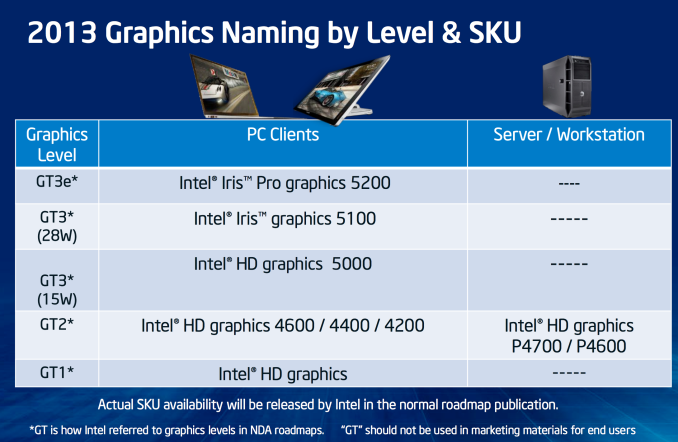

Intel attempted to simplify the naming confusion with this slide:

While Sandy and Ivy Bridge featured two different GPU implementations (GT1 and GT2), Haswell adds a third (GT3).

Basically it boils down to this: Haswell GT1 is just called Intel HD Graphics, and Haswell GT2 is HD 4200/4400/4600. Haswell GT3 at or below 1.1GHz is called HD 5000. Haswell GT3 capable of hitting 1.3GHz is called Iris 5100, and finally Haswell GT3e (GT3 + embedded DRAM) is called Iris Pro 5200.
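The naming rules above can be written down as a small lookup. This is only a sketch of the scheme as described in this article: the function name `haswell_gpu_brand` and its parameters are hypothetical, and treating every GT3 part above 1.1GHz as Iris 5100 is an assumption, since the text only names the 1.1GHz and 1.3GHz cases.

```python
def haswell_gpu_brand(tier: str, max_ghz: float = 0.0) -> str:
    """Map a Haswell GPU tier (and max clock for GT3) to its brand name.

    Hypothetical helper that encodes the naming rules described in the text.
    """
    if tier == "GT1":
        return "Intel HD Graphics"
    if tier == "GT2":
        # The exact model number depends on the SKU, which the text does not detail.
        return "HD 4200/4400/4600"
    if tier == "GT3e":
        # GT3 plus embedded DRAM.
        return "Iris Pro 5200"
    if tier == "GT3":
        # Assumption: anything above 1.1GHz is treated as the 1.3GHz-capable part.
        return "HD 5000" if max_ghz <= 1.1 else "Iris 5100"
    raise ValueError(f"unknown Haswell GPU tier: {tier!r}")


for tier, ghz in [("GT1", 0.0), ("GT2", 0.0), ("GT3", 1.1), ("GT3", 1.3), ("GT3e", 1.3)]:
    print(f"{tier:5s} @ {ghz:.1f}GHz -> {haswell_gpu_brand(tier, ghz)}")
```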

The fundamental GPU architecture hasn’t changed much between Ivy Bridge and Haswell. There are some enhancements, but for the most part what we’re looking at here is a dramatic increase in the amount of die area allocated for graphics.

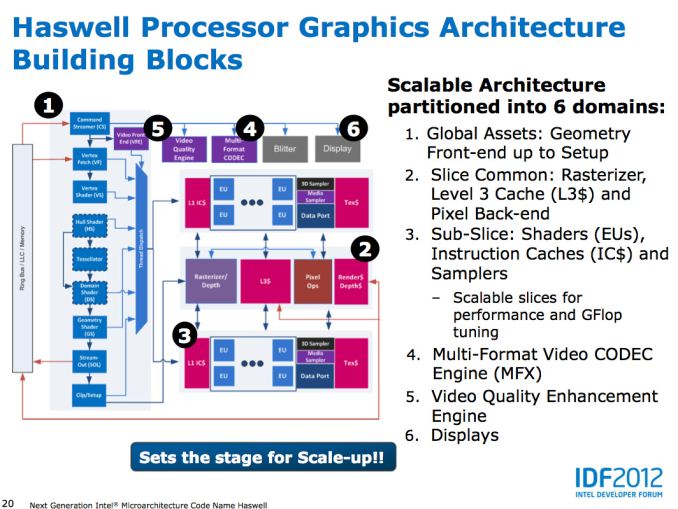

All GPU vendors have some fundamental building block they scale up/down to hit various performance/power/price targets. AMD calls theirs a Compute Unit, NVIDIA’s is known as an SMX, and Intel’s is called a sub-slice.

In Haswell, each graphics sub-slice features 10 EUs. Each EU is a dual-issue SIMD machine with two 4-wide vector ALUs:

| Low Level Architecture Comparison | AMD GCN | Intel Gen7 Graphics | NVIDIA Kepler |
|---|---|---|---|
| Building Block | GCN Compute Unit | Sub-Slice | Kepler SMX |
| Shader Building Block | 16-wide Vector SIMD | 2 x 4-wide Vector SIMD | 32-wide Vector SIMD |
| Smallest Implementation | 4 SIMDs | 10 SIMDs | 6 SIMDs |
| Smallest Implementation (ALUs) | 64 | 80 | 192 |

There are limitations as to what can be co-issued down each EU’s pair of pipes. Intel addressed many of the co-issue limitations last generation with Ivy Bridge, but there are still some that remain.

Architecturally, this makes Intel’s Gen7 graphics core a bit odd compared to AMD’s GCN and NVIDIA’s Kepler, both of which feature much wider SIMD arrays without any co-issue requirements. A single Haswell sub-slice, however, delivers a number of ALUs that is competitive with the smallest AMD and NVIDIA implementations.
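The ALU counts in the comparison table follow from multiplying the number of SIMD units in each building block by the width of each unit. A minimal sketch of that arithmetic, using only the figures from the table (the names `BLOCKS` and `alus_per_block` are illustrative):

```python
# (SIMD units per building block, ALU lanes per SIMD unit), taken from the table.
# For Intel, each EU counts as one unit with two 4-wide vector ALUs (2 x 4 lanes).
BLOCKS = {
    "AMD GCN Compute Unit": (4, 16),
    "Intel Gen7 Sub-Slice": (10, 2 * 4),
    "NVIDIA Kepler SMX": (6, 32),
}


def alus_per_block(simd_units: int, lanes_per_unit: int) -> int:
    """Total ALUs in one building block."""
    return simd_units * lanes_per_unit


for name, (units, lanes) in BLOCKS.items():
    print(f"{name:22s} {units:2d} x {lanes:2d} = {alus_per_block(units, lanes)} ALUs")
```

This reproduces the 64, 80 and 192 ALU figures listed for the smallest implementations.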

Intel had a decent building block with Ivy Bridge, but it chose not to scale it up as far as it would go. With Haswell that changes. In its highest performing configuration, Haswell implements four sub-slices or 40 EUs. Doing the math reveals a very competent looking part on paper:

| Peak Theoretical GPU Performance | Cores/EUs | Peak FP ops per Core/EU | Max GPU Frequency | Peak GFLOPS |
|---|---|---|---|---|
| Intel Iris 5100 / Iris Pro 5200 | 40 | 16 | 1300MHz | 832 GFLOPS |
| Intel HD Graphics 5000 | 40 | 16 | 1100MHz | 704 GFLOPS |
| NVIDIA GeForce GT 650M | 384 | 2 | 900MHz | 691.2 GFLOPS |
| Intel HD Graphics 4600 | 20 | 16 | 1350MHz | 432 GFLOPS |
| Intel HD Graphics 4000 | 16 | 16 | 1150MHz | 294.4 GFLOPS |
| Intel HD Graphics 3000 | 12 | 12 | 1350MHz | 194.4 GFLOPS |
| Intel HD Graphics 2000 | 6 | 12 | 1350MHz | 97.2 GFLOPS |
| Apple A6X | 32 | 8 | 300MHz | 76.8 GFLOPS |
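Each peak GFLOPS figure in the table above is the product of the three columns before it: cores or EUs, peak FP ops per core or EU per clock, and maximum frequency. A short sketch that redoes the math from the table's own numbers (the names `PARTS` and `peak_gflops` are illustrative):

```python
# (name, cores or EUs, peak FP ops per core/EU per clock, max frequency in MHz),
# copied from the table above.
PARTS = [
    ("Intel Iris 5100 / Iris Pro 5200", 40, 16, 1300),
    ("Intel HD Graphics 5000", 40, 16, 1100),
    ("NVIDIA GeForce GT 650M", 384, 2, 900),
    ("Intel HD Graphics 4600", 20, 16, 1350),
    ("Intel HD Graphics 4000", 16, 16, 1150),
    ("Intel HD Graphics 3000", 12, 12, 1350),
    ("Intel HD Graphics 2000", 6, 12, 1350),
    ("Apple A6X", 32, 8, 300),
]


def peak_gflops(units: int, ops_per_clock: int, mhz: int) -> float:
    """Peak throughput in GFLOPS: units x ops per clock x clock (MHz -> GHz)."""
    return units * ops_per_clock * mhz / 1000


for name, units, ops, mhz in PARTS:
    print(f"{name:32s} {peak_gflops(units, ops, mhz):6.1f} GFLOPS")
```

These are theoretical peaks only. They assume every ALU issues its maximum number of operations on every clock, which real workloads rarely achieve.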

In its highest end configuration, Iris has more raw compute power than a GeForce GT 650M, and even more than a GeForce GT 750M. We’re comparing across architectures here, so this won’t necessarily translate into a performance advantage in games, but the takeaway is that with HD 5000, Iris 5100 and Iris Pro 5200, Intel is finally walking the walk of a GPU company.

Peak theoretical performance falls off steeply as soon as you start looking at the GT2 and GT1 implementations. With 1/4 to 1/2 of the execution resources of the GT3 implementation, and no corresponding increase in frequency to offset the loss, the slower parts are substantially less capable. The good news is that Haswell GT2 (HD 4600) is at least more capable than Ivy Bridge GT2 (HD 4000).

Taking a step back and looking at the rest of the theoretical numbers gives us a more well-rounded look at Intel’s graphics architectures:

| Peak Theoretical GPU Performance | Peak Pixel Fill Rate | Peak Texel Rate | Peak Polygon Rate | Peak GFLOPS |
|---|---|---|---|---|
| Intel Iris 5100 / Iris Pro 5200 | 10.4 GPixels/s | 20.8 GTexels/s | 650 MPolys/s | 832 GFLOPS |
| Intel HD Graphics 5000 | 8.8 GPixels/s | 17.6 GTexels/s | 550 MPolys/s | 704 GFLOPS |
| NVIDIA GeForce GT 650M | 14.4 GPixels/s | 28.8 GTexels/s | 900 MPolys/s | 691.2 GFLOPS |
| Intel HD Graphics 4600 | 5.4 GPixels/s | 10.8 GTexels/s | 675 MPolys/s | 432 GFLOPS |
| AMD Radeon HD 7660D (Desktop Trinity, A10-5800K) | 6.4 GPixels/s | 19.2 GTexels/s | 800 MPolys/s | 614 GFLOPS |
| AMD Radeon HD 7660G (Mobile Trinity, A10-4600M) | 3.97 GPixels/s | 11.9 GTexels/s | 496 MPolys/s | 380 GFLOPS |

Intel may have more raw compute, but NVIDIA invested more everywhere else in the pipeline. Triangle, texturing and pixel throughput capabilities are all higher on the 650M than on Iris Pro 5200. Compared to AMD's Trinity however, Intel has a big advantage.
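One way to see where NVIDIA invested more is to divide each peak rate by the clock speed, which gives an implied per-clock throughput. The sketch below does this only for the parts whose maximum frequency appears in the GFLOPS table earlier. It rests on an assumption that the article does not state explicitly, namely that the peak rates are quoted at those maximum frequencies, so the per-clock figures are derived here and are not vendor specifications. The names `RATES` and `per_clock` are illustrative.

```python
# (name, max MHz, GPixels/s, GTexels/s, MPolys/s), from the two tables above.
# Assumption: the peak rates are quoted at the listed maximum frequency.
RATES = [
    ("Intel Iris 5100 / Iris Pro 5200", 1300, 10.4, 20.8, 650),
    ("Intel HD Graphics 5000", 1100, 8.8, 17.6, 550),
    ("NVIDIA GeForce GT 650M", 900, 14.4, 28.8, 900),
    ("Intel HD Graphics 4600", 1350, 5.4, 10.8, 675),
]


def per_clock(mhz: int, gpixels: float, gtexels: float, mpolys: float):
    """Implied pixels, texels and polygons per clock, rounded to 2 decimals."""
    return (
        round(gpixels * 1000 / mhz, 2),  # G/s -> M/s, then divide by MHz
        round(gtexels * 1000 / mhz, 2),
        round(mpolys / mhz, 2),
    )


for name, mhz, gpix, gtex, mpoly in RATES:
    pix, tex, poly = per_clock(mhz, gpix, gtex, mpoly)
    print(f"{name:32s} {pix:5.1f} pixels/clk {tex:5.1f} texels/clk {poly:4.2f} polys/clk")
```

Under that assumption, the 650M's implied per-clock pixel, texel and polygon throughput is twice that of the 40 EU Intel parts, which is consistent with the observation above that its fixed-function throughput is higher despite the lower peak GFLOPS.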

177 Comments


Elitehacker - Tuesday, September 24, 2013

Even for a given power usage the 650M isn't even at the top of the list for highest end discrete GPU.... The top at the moment for lowest wattage to power ratio would be the 765M, even the Radeon HD 7750 has less wattage and a tad more power than the 650M. Clearly someone did not do their research before opening their mouth.

I'm gonna go out on a limb and say that vFunct is one of those Apple fanboys that knows nothing about performance. You can get a PC laptop in the same size and have better performance than any Macbook available for $500 less. Hell you can even get a Tablet with an i7 and 640M that'll spec out close to the 650M for less than a Macbook Pro with 650M.

Eric S - Tuesday, June 25, 2013

The Iris Pro 5200 would be ideal for both machines. Pro users would benefit from ECC memory for the GPU. The Iris chip uses ECC memory making it ideal for OpenCL workloads in Adobe CS6 or Final Cut X. Discrete mobile chips may produce errors in the OpenCL output. Gamers would probably prefer a discrete chip, but that isn't the target for these machines.

Eric S - Monday, July 1, 2013

I think Apple cares more about the OpenCL performance which is excellent on the Iris. I doubt the 15" will have a discrete GPU. There isn't one fast enough to warrant it over the Iris 5200. If they do ever put a discrete chip back in, I hope they go with ECC GDDR memory. My guess is space savings will be used for more battery. It is also possible they may try to reduce the display bezel.

emptythought - Tuesday, October 1, 2013

It's never had the highest end chip, just the best "upper midrange" one. Above the 8600m GT was the 8800m GTX and GTS, and above the 650m there was the 660, a couple 670 versions, the 675 versions, and the 680.

They chose the highest performance part that hit a specific TDP, stretching a bit from time to time. It was generally the case that anything which outperformed the MBP was either a thick brick, or had perpetual overheating issues.

CyberJ - Sunday, July 27, 2014

Not even close, but whatever floats your boat.

emptythought - Tuesday, October 1, 2013

It wouldn't surprise me if the 15in just had the "beefed up" iris pro honestly. They might even get their own, special even more overclocked than 55w version.

Mainly, because it wouldn't be without precedent. Remember when the 2009 15in macbook pro had a 9400m still? Or when they dropped the 320m for the hd3000 even though it was slightly slower?

They sometimes make lateral, or even slightly backwards moves when there are other motives at play.

chipped - Sunday, June 2, 2013

That's just crazy talk, they won't drop dedicated graphics. The difference is still too big, plus you can't sell a $2000 laptop without a dedicated GFX.

shiznit - Sunday, June 2, 2013

considering Apple specifically asked for eDRAM and since there is no dual core version yet for the 13", I'd say there is very good chance.

mavere - Sunday, June 2, 2013

"The difference is still too big"

The difference in what?

Something tells me Apple and its core market is more concerned with rendering/compute performance more than Crysis 3 performance...

iSayuSay - Wednesday, June 5, 2013

If it plays Crysis 3 well, it can render/compute/do whatever intensive fine.