Intel Iris Pro 5200 Graphics Review: Core i7-4950HQ Tested

by Anand Lal Shimpi on June 1, 2013 10:01 AM EST

Addressing the Memory Bandwidth Problem

Integrated graphics solutions have always bumped into a glass ceiling because they lack the high-speed memory interfaces of their discrete counterparts. Since Haswell is predominantly a mobile-focused architecture, designed to span TDPs from 10W to 84W, relying on a power-hungry, high-speed external memory interface wasn’t going to cut it. Intel’s solution to the problem, like most of Intel’s solutions, involves custom silicon. As an owner of several bleeding-edge foundries, would you expect anything less?

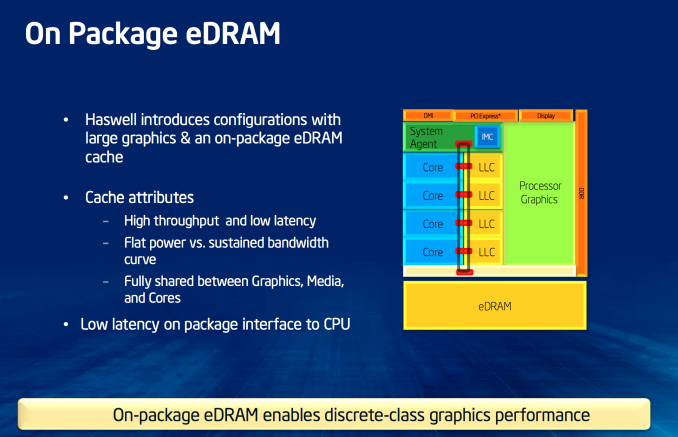

As we’ve been discussing for a while now, the highest-end Haswell graphics configuration includes 128MB of eDRAM on-package. The eDRAM itself is a custom Intel design, built on a variant of Intel’s P1271 22nm SoC process (not P1270, the CPU process). Intel needed low-leakage 22nm transistors rather than the ability to drive very high frequencies, which is why it’s using the mobile SoC variant of its 22nm process here.

Despite its name, the eDRAM silicon is actually separate from the main microprocessor die - it’s simply housed on the same package. Intel’s reasoning here is obvious. By making Crystalwell (the codename for the eDRAM silicon) a discrete die, it’s easier to respond to changes in demand. If Crystalwell demand is lower than expected, Intel still has a lot of quad-core GT3 Haswell die that it can sell and vice versa.

Crystalwell Architecture

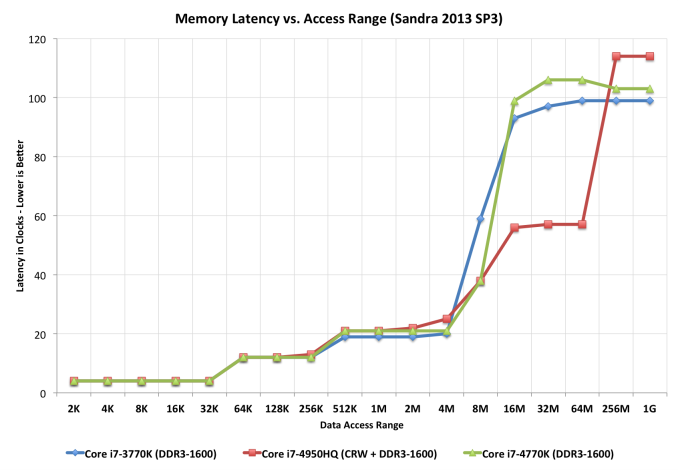

Unlike previous eDRAM implementations in game consoles, Crystalwell is a true 4th level cache in the memory hierarchy. It acts as a victim buffer to the L3 cache, meaning anything evicted from L3 immediately goes into the L4 cache. Both CPU and GPU requests are cached, and the cache can dynamically adjust how it is partitioned between CPU and GPU use. If you don’t use the GPU at all (e.g. a discrete GPU is installed), Crystalwell will still cache CPU requests. That’s right: Haswell CPUs equipped with Crystalwell effectively have a 128MB L4 cache.
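To make the victim-buffer behavior concrete, here’s a minimal Python sketch of a two-level hierarchy in which every line evicted from L3 is written into L4, and an L4 hit moves the line back up into L3. It assumes fully associative LRU caches sized in lines and an exclusive victim policy; the class names `LRUCache` and `VictimHierarchy` are hypothetical, CPU/GPU partitioning isn’t modeled, and Intel hasn’t disclosed Crystalwell’s actual replacement or allocation policy.

```python
from __future__ import annotations

from collections import OrderedDict


class LRUCache:
    """Fully associative LRU cache that tracks line addresses only (no data)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.lines: OrderedDict[int, None] = OrderedDict()

    def lookup(self, addr: int) -> bool:
        """Return True on a hit and mark the line as most recently used."""
        if addr in self.lines:
            self.lines.move_to_end(addr)
            return True
        return False

    def insert(self, addr: int) -> int | None:
        """Insert a line and return the address of the evicted LRU line, if any."""
        self.lines[addr] = None
        self.lines.move_to_end(addr)
        if len(self.lines) > self.capacity:
            victim, _ = self.lines.popitem(last=False)
            return victim
        return None

    def remove(self, addr: int) -> None:
        self.lines.pop(addr, None)


class VictimHierarchy:
    """An L3 backed by an exclusive L4 victim cache (simplified model)."""

    def __init__(self, l3_lines: int, l4_lines: int) -> None:
        self.l3 = LRUCache(l3_lines)
        self.l4 = LRUCache(l4_lines)

    def access(self, addr: int) -> str:
        """Return which level served the access: 'L3', 'L4' or 'DRAM'."""
        if self.l3.lookup(addr):
            return "L3"
        if self.l4.lookup(addr):
            # Victim-cache hit: move the line back up into L3.
            self.l4.remove(addr)
            source = "L4"
        else:
            source = "DRAM"
        evicted = self.l3.insert(addr)
        if evicted is not None:
            # Anything evicted from L3 goes straight into L4; L4's own
            # LRU victim (if any) is simply dropped, i.e. left to DRAM.
            self.l4.insert(evicted)
        return source


h = VictimHierarchy(l3_lines=2, l4_lines=4)
print([h.access(a) for a in [1, 2, 3, 1, 2, 1]])
# ['DRAM', 'DRAM', 'DRAM', 'L4', 'L4', 'L3']
```

On its second access, line 1 is served from L4 rather than DRAM because accessing line 3 pushed it out of the two-line L3 and into the victim cache.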

Intel isn’t providing much detail on the connection to Crystalwell other than to say that it’s a narrow, double-pumped serial interface capable of delivering 50GB/s of bandwidth in each direction (100GB/s aggregate). Access latency after an L3 miss is 30 - 32ns, nicely in between an L3 access and a main memory access.

The eDRAM clock tops out at 1.6GHz.

There’s only a single size of eDRAM offered this generation: 128MB. Since it’s a cache and not a buffer (and a giant one at that), Intel found that the hit rate rarely dropped below 95%. It turns out that for current workloads, Intel didn’t see much benefit beyond 32MB of eDRAM; however, it wanted the design to be future-proof. Intel doubled the size to deal with any increases in game complexity, and doubled it again just to be sure. I believe the exact words Intel’s Tom Piazza used when explaining why it chose 128MB were “go big or go home”. It’s very rare that we see Intel be so liberal with die area, which makes me think this 128MB design is going to stick around for a while.
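The hit rate is what makes the eDRAM latency figure matter. As a rough back-of-the-envelope sketch, assume lookups are serial (a request that misses in eDRAM pays the eDRAM latency and then goes on to DRAM); the average latency of an L3 miss is then a hit-rate-weighted mix. The 31ns eDRAM latency is the midpoint of the 30 - 32ns quoted above and 95% is Intel’s stated floor; the extra DRAM latency `dram_extra_ns` and the helper name are hypothetical placeholders, not measured figures.

```python
def avg_l3_miss_latency_ns(hit_rate: float, edram_ns: float, dram_extra_ns: float) -> float:
    """Average latency of an L3 miss when an eDRAM L4 sits in front of DRAM.

    Assumes serial lookup: an eDRAM miss pays edram_ns and then dram_extra_ns.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    miss_rate = 1.0 - hit_rate
    return hit_rate * edram_ns + miss_rate * (edram_ns + dram_extra_ns)


# Hypothetical 60ns of extra DRAM latency; only the 31ns and 95% come from the text.
print(round(avg_l3_miss_latency_ns(0.95, 31.0, 60.0), 2))  # 34.0
```

With a 95% hit rate, the DRAM penalty is paid on only 1 in 20 L3 misses, so the average stays close to the eDRAM latency.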

The 32MB number is particularly interesting because it’s the same number Microsoft arrived at for the embedded SRAM on the Xbox One silicon. If you felt that I was hinting heavily at the Xbox One being OK if its eSRAM was indeed a cache, this is why. I’d also like to point out the difference in future-proofing between the two designs.

The Crystalwell-enabled graphics driver can choose to keep certain data out of the eDRAM. The frame buffer, for example, isn’t stored in eDRAM.

Peak Theoretical Memory Bandwidth

| | Memory Interface | Memory Frequency | Peak Theoretical Bandwidth |
|---|---|---|---|
| Intel Iris Pro 5200 | 128-bit DDR3 + eDRAM | 1600MHz + 1600MHz eDRAM | 25.6GB/s + 50GB/s eDRAM (bidirectional) |
| NVIDIA GeForce GT 650M | 128-bit GDDR5 | 5016MHz | 80.3GB/s |
| Intel HD 5100/4600/4000 | 128-bit DDR3 | 1600MHz | 25.6GB/s |
| Apple A6X | 128-bit LPDDR2 | 1066MHz | 17.1GB/s |
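The DDR3, GDDR5 and LPDDR2 figures in the table follow from bus width times transfer rate. The sketch below assumes the “Memory Frequency” column is the effective data rate in MT/s (so the GDDR5 5016MHz figure is already the effective rate) and uses 1 GB = 10^9 bytes; `peak_bandwidth_gbs` is a hypothetical helper name.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mts: float) -> float:
    """Peak theoretical bandwidth in GB/s (10**9 bytes per second).

    transfer_rate_mts is the effective data rate in megatransfers per second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfer_rate_mts / 1000  # MB/s -> GB/s


rows = {
    "Intel HD 5100/4600/4000 (128-bit DDR3)": (128, 1600),
    "NVIDIA GeForce GT 650M (128-bit GDDR5)": (128, 5016),
    "Apple A6X (128-bit LPDDR2)": (128, 1066),
}
for name, (width_bits, rate_mts) in rows.items():
    print(f"{name}: {peak_bandwidth_gbs(width_bits, rate_mts):.1f}GB/s")
# Intel HD 5100/4600/4000 (128-bit DDR3): 25.6GB/s
# NVIDIA GeForce GT 650M (128-bit GDDR5): 80.3GB/s
# Apple A6X (128-bit LPDDR2): 17.1GB/s
```

The same arithmetic can’t be applied to the eDRAM entry, since Intel only quotes the 50GB/s figure and not the interface width or transfer rate.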

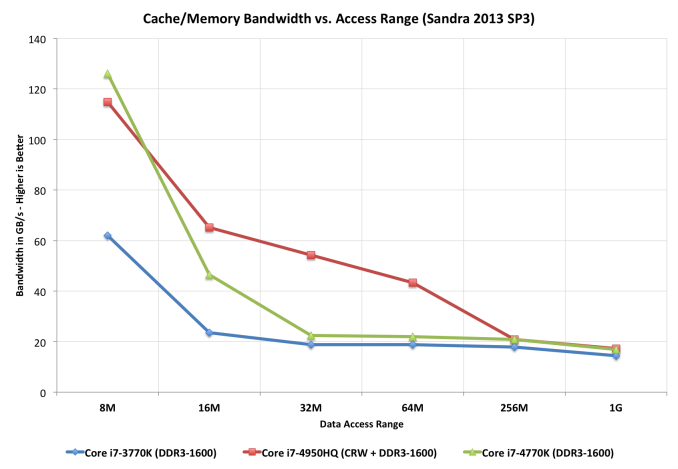

Intel claims that it would take a 100 - 130GB/s GDDR memory interface to deliver effective performance similar to Crystalwell’s, since the latter is a cache. Workloads that access the same data over and over again (e.g. texture reads) benefit greatly from having a large L4 cache on-package.

I get the impression that the plan might be to keep the eDRAM on an n-1 process going forward. When Intel moves to 14nm with Broadwell, it’s entirely possible that Crystalwell will remain at 22nm. Doing so would help Intel put older fabs to use, especially if there’s no need for a near-term increase in eDRAM size. I asked about the potential to integrate eDRAM on-die, but was told that it’s far too early for that discussion. Given the size of the 128MB eDRAM on 22nm (~84mm²), I can understand why. Intel did float an interesting idea by me, though: in the future it could integrate 16 - 32MB of eDRAM on-die for specific use cases (e.g. storing the frame buffer).
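For a sense of scale, the ~84mm² figure can be turned into a rough per-megabyte density. This sketch assumes area scales linearly with capacity at the same 22nm density, which ignores fixed costs such as I/O and control logic, so treat the results as ballpark numbers for the 16 - 32MB on-die idea rather than anything Intel has stated. The constant and function names are hypothetical.

```python
CRYSTALWELL_AREA_MM2 = 84.0   # approximate die area quoted for 128MB on 22nm
CRYSTALWELL_CAPACITY_MB = 128


def scaled_edram_area_mm2(capacity_mb: float) -> float:
    """Estimate eDRAM area by scaling Crystalwell's density linearly (rough assumption)."""
    mm2_per_mb = CRYSTALWELL_AREA_MM2 / CRYSTALWELL_CAPACITY_MB
    return capacity_mb * mm2_per_mb


for size_mb in (16, 32):
    print(f"{size_mb}MB -> ~{scaled_edram_area_mm2(size_mb):.1f}mm^2")
# 16MB -> ~10.5mm^2
# 32MB -> ~21.0mm^2
```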

Intel settled on eDRAM because of its high bandwidth and low power characteristics. According to Intel, Crystalwell’s bandwidth curve is very flat, far more workload-independent than GDDR5’s. The power consumption also sounds very good. At idle, simply refreshing whatever data is stored within it, the Crystalwell die consumes between 0.5W and 1W. Under load, operating at full bandwidth, the power usage is 3.5 - 4.5W. The idle figures might sound a bit high, but keep in mind that since Crystalwell caches both CPU and GPU memory, it’s entirely possible to shut off the main memory controller and operate completely on-package, depending on the workload. At the same time, I suspect there’s room for future power improvements, especially as Crystalwell (or a lower-power derivative) heads towards ultra mobile silicon.
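The load figures can be converted into a rough energy-per-bit number. This sketch assumes “full bandwidth” means the 100GB/s aggregate figure Intel quotes for the interface; if Intel meant 50GB/s in one direction, the per-bit energy doubles. `picojoules_per_bit` is a hypothetical helper.

```python
def picojoules_per_bit(power_w: float, bandwidth_gbs: float) -> float:
    """Energy per transferred bit, in pJ, for a given power draw and bandwidth."""
    bits_per_second = bandwidth_gbs * 1e9 * 8
    joules_per_bit = power_w / bits_per_second
    return joules_per_bit * 1e12


for watts in (3.5, 4.5):
    print(f"{watts}W at 100GB/s: {picojoules_per_bit(watts, 100.0):.3f} pJ/bit")
```

Under that assumption, the 3.5 - 4.5W range works out to roughly 4.4 - 5.6 pJ per bit transferred.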

Crystalwell is tracked by Haswell’s PCU (Power Control Unit) just like the CPU cores, GPU, L3 cache, etc. Paying attention to thermals, workload and even eDRAM hit rate, the PCU can shift power budget between the CPU, GPU and eDRAM.
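Intel hasn’t described how the PCU actually weighs these inputs, so the following is only a hypothetical toy model of “shifting power budget”: every block keeps a minimum floor, and the remaining watts are split in proportion to a demand weight that stands in for whatever signals (thermals, workload, eDRAM hit rate) the real controller uses. The function name, the 40W budget, the floors and the weights are all illustrative assumptions; only the 1W eDRAM floor echoes the idle figure above.

```python
from __future__ import annotations


def split_power_budget(
    total_w: float, floors_w: dict[str, float], weights: dict[str, float]
) -> dict[str, float]:
    """Toy allocator: give each block its floor, then share the rest by weight."""
    if floors_w.keys() != weights.keys():
        raise ValueError("floors_w and weights must name the same blocks")
    spare_w = total_w - sum(floors_w.values())
    if spare_w < 0:
        raise ValueError("total budget is below the sum of the floors")
    weight_sum = sum(weights.values())
    alloc = {}
    for block, floor in floors_w.items():
        # Fall back to an even split if every weight is zero.
        share = weights[block] / weight_sum if weight_sum > 0 else 1 / len(floors_w)
        alloc[block] = floor + spare_w * share
    return alloc


# Hypothetical GPU-heavy moment: 40W package budget, illustrative floors and weights.
budget = split_power_budget(
    40.0,
    floors_w={"cpu": 5.0, "gpu": 5.0, "edram": 1.0},
    weights={"cpu": 1.0, "gpu": 3.0, "edram": 0.5},
)
print({block: round(watts, 1) for block, watts in budget.items()})
# {'cpu': 11.4, 'gpu': 24.3, 'edram': 4.2}
```

A real power controller would also react to temperature over time rather than computing a one-shot split; the proportional share here just illustrates the idea of one budget being redistributed across the CPU, GPU and eDRAM.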

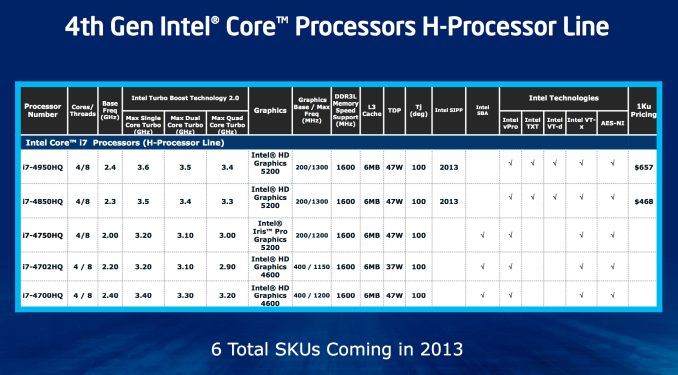

Crystalwell is only offered alongside quad-core GT3 Haswell. Unlike previous generations of Intel graphics, high-end socketed desktop parts do not get Crystalwell. Only mobile H-SKUs and desktop (BGA-only) R-SKUs have Crystalwell at this point. Given the potential use as a very large CPU cache, it’s a bit insane that Intel won’t even offer a single K-series SKU with Crystalwell on-board.

As for why lower-end parts don’t get it, they simply don’t have high enough memory bandwidth demands, particularly in GT1/GT2 graphics configurations. According to Intel, once you get to about 18W, GT3e starts to make sense, but you run into die size constraints there. An Ultrabook SKU with Crystalwell would make a ton of sense, but given where Ultrabooks are headed (price-wise) I’m not sure Intel could get any takers.

177 Comments


Phrontis - Wednesday, June 5, 2013

I can't wait for one of these on a mITX board with 3 decent monitor outputs. There's enough power for the sort of things I do, if not for gaming.

Phrontis

khanov - Friday, June 7, 2013

Without a direct comparison between HD 5000/5100 and Iris Pro 5200 with Crystalwell, how can we conclude that Crystalwell has any effect in any of the game benchmarks? While it clearly benefits some compute tasks, in the game benchmarks you only compare to HD 4600, with half as many EUs, and to Nvidia and AMD with their different architectures.

We really need to see Iris Pro 5200 vs HD 5100 to get an apples-to-apples comparison and be able to determine if Crystalwell is worth the extra money.

MODEL3 - Sunday, June 9, 2013

Haswell ULT GT3 (Dual-Core+GT3) = 181mm² and the 40 EU Haswell GPU is 174mm². 7mm² for everything else except GT3?

n13L5 - Tuesday, June 11, 2013

"An Ultrabook SKU with Crystalwell would make a ton of sense, but given where Ultrabooks are headed (price-wise) I’m not sure Intel could get any takers."

They sure seem to be going up in price, rather than down, at the moment...

anandfan86 - Tuesday, June 18, 2013

Intel has once again made their naming so confusing that even their own marketing weasels can't get it right. Notice that the Intel slide titled "4th Gen Intel Core Processors H-Processors Line" calls the graphics in the i7-4950HQ and i7-4850HQ "Intel HD Graphics 5200" instead of the correct name, which is "Intel Iris Pro Graphics 5200". This slide calls the graphics in the i7-4750HQ "Intel Iris Pro Graphics 5200", which indicates that the slide was made after the creation of that name. It is little wonder that most media outlets are acting as if the biggest tech news of the month is the new pastel color scheme in iOS 7.

Myoozak - Wednesday, June 26, 2013

The peak theoretical GPU performance calculations shown are wrong for Intel's GFLOPS numbers. Correct numbers are half of what is shown. The reason is that Intel's execution units are made up of an integer vec4 processor and a floating-point vec4 processor. This article correctly states it has a 2xvec4 SIMD, but does not point out that half is integer and half is floating-point. For a GFLOPS computation, one should only include the floating-point operations, which means only half of that execution unit's silicon is getting used. The reported compute performance would only be correct if you had an algorithm with a perfect mix of integer & float math that could be co-issued. To compare apples to apples, you need to stick to GFLOPS numbers and divide all the Intel numbers in the table by 2. For example, peak FP ops on the Intel HD4000 would be 8, not 16. Compared this way, Intel is not stomping all over AMD & nVidia for compute performance, but it does appear they are catching up.

alexcyn - Tuesday, August 6, 2013

I heard that Intel's 22nm process equals TSMC's 26nm, so the difference is not that big.

Doughboy(^_^) - Friday, August 9, 2013

I think Intel could push their yield way up by offering 32MB and 64MB versions of Crystalwell for i3 and i5 processors. They could charge the same markup for the 128, but sell the 32/64 for cheaper. It would cost Intel less and probably let them take even further market share from low-end dGPUs.

krr711 - Monday, February 10, 2014

It is funny how a non-PC company changed the course of Intel forever for the good. I hope that Intel is wise enough to use this to spring-board the PC industry to a new, grand future. No more tick-tock nonsense arranged around sucking as many dollars out of the customer as possible, but give the world the processing power it craves and needs to solve the problems of tomorrow. Let this be your heritage and your profits will grow to unforeseen heights. Surprise us!