AMD’s Jaguar Architecture: The CPU Powering Xbox One, PlayStation 4, Kabini & Temash

by Anand Lal Shimpi on May 23, 2013 12:00 AM EST

Microprocessor architectures these days are largely limited, and thus defined, by power consumption. When it comes to designing an architecture around a power envelope, the rule of thumb is that any given microprocessor architecture can scale to cover roughly an order of magnitude of TDPs. For example, Intel’s Core architectures (Sandy/Ivy Bridge) effectively target the 13W - 130W range. They can certainly be used in parts that consume less or more power, but at those extremes it’s more efficient to build another microarchitecture to target those TDPs instead.

Both AMD and Intel feel similarly about this order of magnitude rule, and thus both have two independent microprocessor architectures that they leverage to build chips for the computing continuum. From Intel we have Atom for low power, and Core for high performance. In 2010 AMD gave us Bobcat for its low power roadmap, and Bulldozer for high performance.

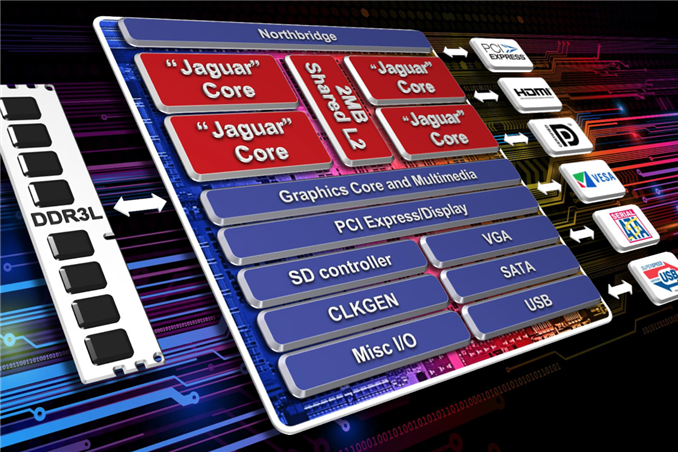

Both the Bobcat and Bulldozer lines would see annual updates. In 2011 we saw Bobcat used in Ontario and Zacate SoCs, as a part of the Brazos platform. Last year AMD announced Brazos 2.0, using slightly updated versions of those very same Bobcat based SoCs. Today AMD officially launches Kabini and Temash, APUs based on the first major architectural update to Bobcat: the Jaguar core.

Jaguar: Improved 2-wide Out-of-Order

At the core level, Jaguar still looks a lot like Bobcat. The same dual-issue, out-of-order architecture that AMD introduced in 2010 remains intact with Jaguar. The same L1 cache, front end and execution blocks are all still here. Given ARM’s transition from a dual-issue, out-of-order core with the Cortex A9 to a three-issue, OoO design with the Cortex A15, I expected something similar from AMD. Instead, despite moving to a smaller manufacturing process (28nm), AMD kept the machine 2-wide and focused on increasing performance within the same or lower TDP. The driving motivator? While Bobcat ended up in netbooks, nettops and other low-cost but thick machines, Jaguar needed to go into even thinner form factors: tablets. AMD still has no intention of getting into the smartphone SoC space, but the Windows 8 (and Android?) tablet market is fair game. Cellular connectivity isn’t a requirement there, particularly at the lower price points, and AMD can easily be a second-source alternative to Intel Atom based designs.

The average number of instructions executed per clock (IPC) is still below 1 for most client workloads. There’s a certain amount of burst traffic to be expected but given the types of dependencies you see in most use cases, AMD felt the gain from making the machine wider wasn’t worth the power tradeoff. There’s also the danger of making the cat-cores too powerful. While just making them 3-issue to begin with wouldn’t dramatically close the gap between the cat-cores and the Bulldozer family, there’s still a desire for there to be clear separation between the two microarchitectures.
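To make the width argument concrete, here is a minimal sketch of a toy issue model. It is not AMD's simulator: it assumes an unlimited scheduler window, a uniform result latency, and no cache misses or branch mispredicts. The function name `simulate_ipc` and the three synthetic dependency patterns are hypothetical, chosen only for illustration.

```python
def simulate_ipc(deps, width, latency=1):
    """Return instructions per clock for a toy out-of-order core.

    deps[i] lists the earlier instructions whose results instruction i needs.
    Up to `width` ready instructions issue per cycle, oldest first.
    """
    n = len(deps)
    done_at = [None] * n          # cycle at which each result becomes available
    pending = list(range(n))
    cycle = 0
    while pending:
        issued = []
        for i in pending:
            if len(issued) == width:
                break
            # Ready only if every producer has already delivered its result.
            if all(done_at[d] is not None and done_at[d] <= cycle for d in deps[i]):
                issued.append(i)
        for i in issued:
            done_at[i] = cycle + latency
        pending = [i for i in pending if done_at[i] is None]
        cycle += 1
    return n / max(done_at)


N = 12
independent = [[] for _ in range(N)]                           # no dependencies
serial_chain = [[] if i == 0 else [i - 1] for i in range(N)]   # each needs the previous
two_chains = [[] if i < 2 else [i - 2] for i in range(N)]      # two interleaved chains

for name, deps in [("independent", independent),
                   ("serial chain", serial_chain),
                   ("two chains", two_chains)]:
    print(name, simulate_ipc(deps, width=2), simulate_ipc(deps, width=3))
```

In this model only the fully independent stream benefits from the third issue slot. The serial chain and the two interleaved chains deliver the same IPC at 2-wide and 3-wide, and raising `latency` on the serial chain pushes IPC below 1 regardless of width, which is the kind of behavior the paragraph above describes.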

The move to a three-issue design would certainly increase performance, but AMD’s tablet ambitions and power sensitivity meant it would save that transition for another day. I should point out that ARM is increasingly looking like the odd-man-out here, with both Jaguar and Intel’s Silvermont retaining the dual-issue design of their predecessors. Part of this has to do with the fact that while AMD and Intel are very focused on driving power down, ARM has aspirations of moving up in the performance/power chain.

The width of the front end is only one lever AMD could have used to increase performance. While it was a pretty big lever that AMD chose not to pull, there are other smaller levers that were exercised in Jaguar.

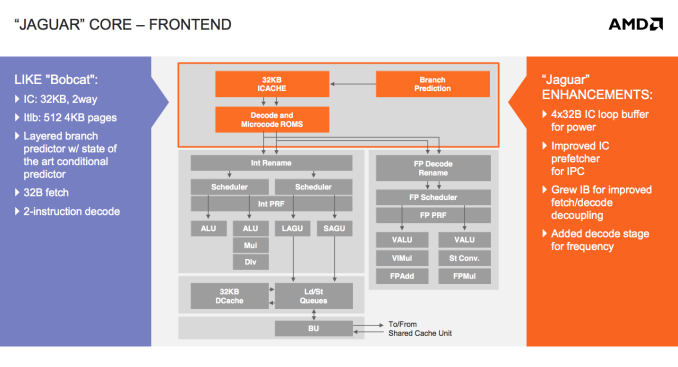

There’s now a 4 x 32-byte loop buffer for the instruction cache. Whenever a loop is detected, instead of fetching the instructions executed in the loop from the L1 I-cache over and over again, they’re serviced from this small loop buffer. If this sounds like a trace cache or decoded micro-op cache, don’t get too excited: Jaguar’s loop buffer is neither of these things. There are no pipeline savings or powered down fetch/decode units. The only benefit of the new loop buffer is that the instruction cache doesn’t have to be fired up during every iteration of a buffered loop. In other words, this is a very specific play to reduce power consumption, not to improve performance.
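A rough way to see the power argument is to count I-cache accesses for a loop with and without the buffer. The sketch below is a simplification under stated assumptions: it treats the buffer as four entries that each hold one 32-byte-aligned block, and it assumes a loop is captured only if its whole body fits. The article does not describe the detection logic or alignment rules, so `icache_accesses` and its parameters are hypothetical.

```python
LINE_BYTES = 32     # size of one loop buffer entry, from the 4 x 32-byte figure
BUFFER_LINES = 4    # number of entries

def icache_accesses(loop_start, loop_bytes, iterations, use_loop_buffer):
    """Count 32-byte I-cache fetches needed to run a loop `iterations` times."""
    first_line = loop_start // LINE_BYTES
    last_line = (loop_start + loop_bytes - 1) // LINE_BYTES
    lines_per_iteration = last_line - first_line + 1
    fits = lines_per_iteration <= BUFFER_LINES
    if use_loop_buffer and fits:
        # Assumption: the first pass fills the buffer, later passes hit it.
        return lines_per_iteration
    return lines_per_iteration * iterations

# A 96-byte loop body starting on a 32-byte boundary, run 1000 times.
print(icache_accesses(0x1000, 96, 1000, use_loop_buffer=False))
print(icache_accesses(0x1000, 96, 1000, use_loop_buffer=True))
# A 128-byte body that starts mid-line spans five lines and no longer fits.
print(icache_accesses(0x1010, 128, 1000, use_loop_buffer=True))
```

The cycle count is the same in every case in this model. Only the number of times the instruction cache has to be accessed changes, which matches the point that the buffer is a power feature rather than a performance one.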

All microprocessors see tons of simulation work before they’re ever brought to market. Even once a design is done, additional profiling is used to identify bottlenecks, which are then prioritized for addressing in future designs. All bottleneck removal has to be vetted against power, cost and schedule constraints. Given an infinite budget across all vectors you could eliminate all bottlenecks, but you’d likely take an infinite amount of time to complete the design. Taking all of those realities into account usually means making tradeoffs, even when improving a design.

We saw the first example of a clear tradeoff when AMD stuck with a 2-issue front end for Jaguar. Not including a decoded micro-op cache and opting for a simpler loop buffer instead is an example of another. AMD likely noticed a lot of power being wasted during loops, and the addition of a loop buffer was probably the best balance of complexity, power savings and cost.

AMD also improved the instruction cache prefetcher, not by exploiting an overabundance of bandwidth but by revisiting the Bobcat design and spending more time on the implementation in Jaguar. The IC prefetcher improvements are simply AMD doing things better in Jaguar, since it was not under the same pressure to introduce a brand new architecture as it was with Bobcat.

The instruction buffer between the instruction cache and decoders grew in size with Jaguar, a sort of half step towards the more heavily decoupled fetch/decode stages in Bulldozer.

Jaguar adds support for new instructions (SSE4.1/4.2, AES, CLMUL, MOVBE, AVX, F16C, BMI1) as well as 40-bit physical addressing.
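For readers who want to check a given machine for these extensions, here is a small sketch that parses the text of Linux's `/proc/cpuinfo`. The flag spellings are the ones the Linux kernel reports on x86. The helper names `missing_features` and `addressable_bytes` are hypothetical, and the sample text is made up for the example.

```python
# Article's instruction set names mapped to Linux /proc/cpuinfo flag names.
JAGUAR_NEW_FLAGS = {
    "SSE4.1": "sse4_1",
    "SSE4.2": "sse4_2",
    "AES": "aes",
    "CLMUL": "pclmulqdq",
    "MOVBE": "movbe",
    "AVX": "avx",
    "F16C": "f16c",
    "BMI1": "bmi1",
}

def missing_features(cpuinfo_text):
    """Return the listed extensions that do not appear in the cpuinfo flags."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            flags.update(line.split(":", 1)[1].split())
    return sorted(name for name, flag in JAGUAR_NEW_FLAGS.items()
                  if flag not in flags)

def addressable_bytes(physical_address_bits):
    """Size of the physical address space for a given address width."""
    return 1 << physical_address_bits

# Made-up sample line for illustration; on Linux, pass open("/proc/cpuinfo").read().
sample = "flags\t\t: fpu sse4_1 sse4_2 aes avx"
print(missing_features(sample))
print(addressable_bytes(40) // 2**40, "TiB")
```

A 40-bit physical address works out to 2^40 bytes, or 1 TiB of addressable memory.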

The final change to the front end of Jaguar was the addition of another decode stage, purely for frequency gains. It turns out that in Bobcat the decoder was one of the critical paths limiting maximum frequency. Adding another decode stage simply gave AMD enough wiggle room to hit its frequency targets for Jaguar at 28nm.
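The reasoning behind the extra stage can be sketched with a simple timing model: the clock period can be no shorter than the slowest pipeline stage, so splitting that stage raises the ceiling. The stage names and picosecond delays below are invented for illustration and are not Bobcat or Jaguar figures. The model also ignores the latch overhead that a real extra stage adds.

```python
def max_frequency_ghz(stage_delays_ps):
    """Highest clock the pipeline can run at, limited by its slowest stage."""
    return 1000.0 / max(stage_delays_ps.values())

def split_stage(stage_delays_ps, name):
    """Return a new pipeline with stage `name` split into two equal halves."""
    out = dict(stage_delays_ps)
    half = out.pop(name) / 2
    out[name + "_1"] = half
    out[name + "_2"] = half
    return out

# Hypothetical delays in picoseconds; decode is the critical path here.
base = {"fetch": 520, "decode": 700, "schedule": 550, "execute": 540}
deeper = split_stage(base, "decode")

print(round(max_frequency_ghz(base), 2), "GHz with", len(base), "stages")
print(round(max_frequency_ghz(deeper), 2), "GHz with", len(deeper), "stages")
```

Once decode is split, the next slowest stage sets the limit. The cost, not modeled here, is a longer pipeline, which typically adds a cycle to the branch mispredict penalty.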

78 Comments

Krysto - Friday, May 24, 2013

Still don't see why OEM's would choose AMD's APU's in Android tablets over ARM, though.

It's weaker CPU wise, and most likely weaker GPU wise, too. We'll see when they come out if their GPU's can stand up to Adreno 330, PowerVR Series 6 and Mali T628. Plus, it requires quite a bit of power.

In my book no chip that can't be used in a smartphone (and I'm talking about the exact same model, not the "brand") should be called a "mobile chip".

This idea about "tablet chips" is nonsense. Tablet chips is just another way of saying our chip is not efficient enough, so we're just going to compensate for that with a much larger battery, that adds more to weight, charging time, and of course price.

ReverendDC - Monday, May 27, 2013

Even an Atom chip is more powerful per cycle than ARM, and AMD's stuff is more powerful than Atom. I'm not exactly sure what you are using to state that ARM is more powerful, but AnandTech did a great comparison themselves.

By the way, the comparisons in some cases are for quad core ARM vs. single core Atom at comparable speeds. Again, really not sure where your "facts" come from.

BernardBlack - Wednesday, May 29, 2013

ARM actually isn't all that...and it's quite the other way around.

As I have seen it stated elsewhere, "Simply: x86 IPC eats ARM for lunch while actual performance and power usage will scale together. That is why ARM currently has no real business competing against x86"

BernardBlack - Wednesday, May 29, 2013

It's all about instructions per cycle and that is what AMD and Intel do best.

BernardBlack - Wednesday, May 29, 2013

not to mention, the IPC's in these processors follow suit with their server Opteron processors, which means, they achieve even greater IPC's per cycle. This is how you are able to have 1.6ghz CPU's that can compete with many common 3-4ghz desktop processors.

eanazag - Friday, May 31, 2013

They could use the exact same hardware design to sell Android and Windows tablets.

Wolfpup - Wednesday, June 12, 2013

Huh? AMD's Bobcat parts are more powerful than ARM's stuff. ARM's only just now managing to sort of compete with first gen Atom at best, and that with a CPU that's not actually used in much.

And "tablet chips" is NOT nonsense. You have more power budget, and higher expectations for performance in a tablet. If it's "nonsense", why does Apple put bigger chips in their tablets? Why can tablets run Core i CPUs? Why do they typically get bigger chips first even with Android?

Wolfpup - Wednesday, June 12, 2013

To add to that, THIS article explicitly says "Jaguar is presently without competition...nothing from ARM is quick enough."

So really, where the heck are you getting the idea ARM has more powerful chips?

kyuu - Thursday, May 23, 2013

I think the main point of this is getting into the mobile market. Temash looks like a great chip for a tablet. The only problem is OEMs not biting because they think they have to put an Intel sticker on the box for it to sell.

Personally, I'm waiting on a good tablet with Temash to finally jump into the Win8 tablet club. Whatever OEM makes a good one first will be getting my money.

mikato - Friday, May 24, 2013

I think with tablets, OEMs are even less likely to think they need to put an Intel sticker on it. Joe Schmo knows Intel doesn't mean as much for tablets. The most well known tablets aren't Intel. This is an opening for AMD to be able to get in the game late if they want to.

Actually, do they even put stickers on tablets?