NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Crysis 3

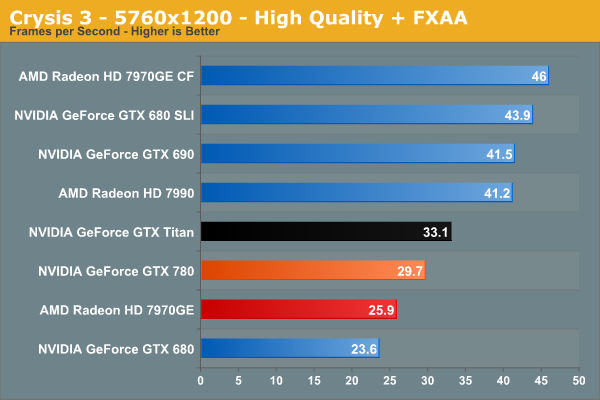

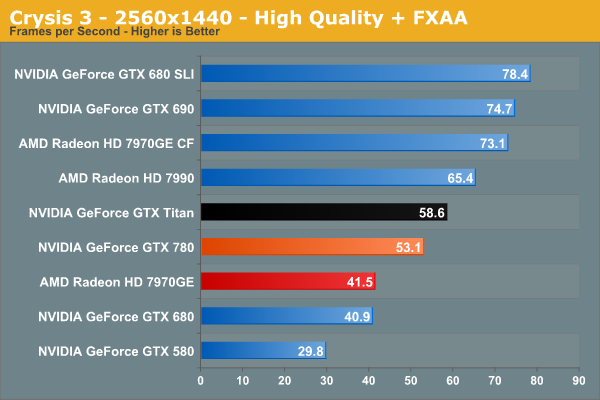

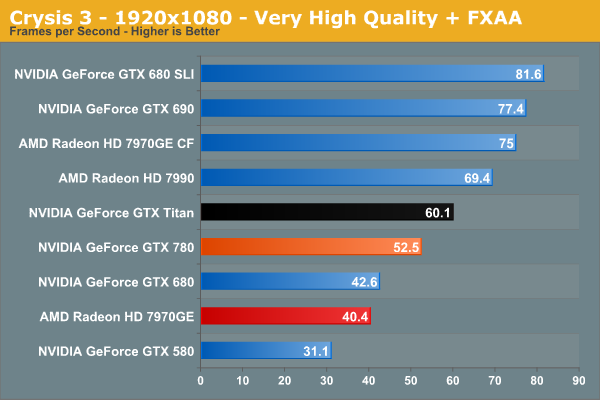

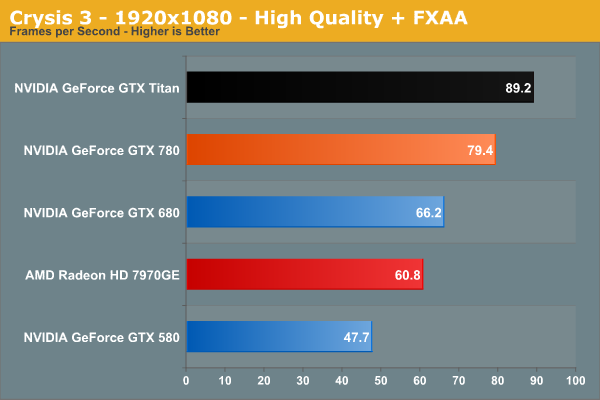

Our final benchmark in our suite needs no introduction. With Crysis 3, Crytek has gone back to trying to kill computers, taking back the “most punishing game” title in our benchmark suite. Only in a handful of setups can we even run Crysis 3 at its highest (Very High) settings, and that’s still without AA. Crysis 1 was an excellent template for the kind of performance required to drive games for the next few years, and Crysis 3 looks to be much the same for 2013.

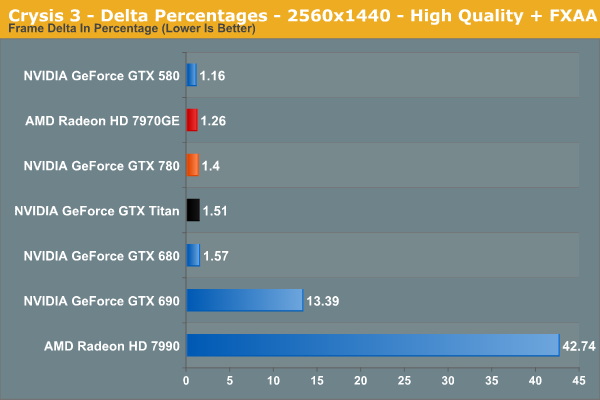

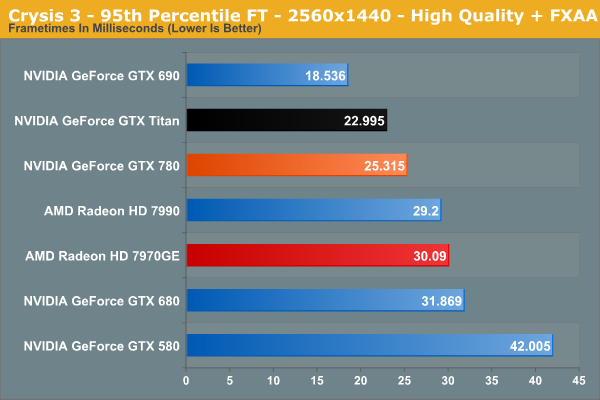

Even with just FXAA and High quality settings, Crysis 3 quashes any hope of running at 2560 with a single card at the game’s higher quality settings. 53.1fps is plenty playable, but GTX 780 users would need to give up a bit more if they want to push the averages above 60fps. Meanwhile looking at our percentages it’s another strong showing for the GTX 780, with the GTX 780 leading the GTX 680 by 30% and the 7970GE by 28%.

155 Comments

Stuka87 - Thursday, May 23, 2013 - link

The video card does handle the decoding and rendering for the video. Anand has done several tests over the years comparing their video quality. There are definite differences between AMD/nVidia/Intel.

JDG1980 - Thursday, May 23, 2013 - link

Yes, the signal is digital, but the drivers often have a bunch of post-processing options which can be applied to the video: deinterlacing, noise reduction, edge enhancement, etc.

Both AMD and NVIDIA have some advantages over the other in this area. Either is a decent choice for a HTPC. Of course, no one in their right mind would use a card as power-hungry and expensive as a GTX 780 for a HTPC.

In the case of interlaced content, either the PC or the display device *has* to apply post-processing or else it will look like crap. The rest of the stuff is, IMO, best left turned off unless you are working with really subpar source material.

Dribble - Thursday, May 23, 2013 - link

To both of you above, on DVD yes, not on bluray - there is no interlacing, noise or edges to reduce - bluray decodes to a perfect 1080p picture which you send straight to the TV.

All the video card has to do is decode it, which why a $20 bluray player with $5 cable will give you exactly the same picture and sound quality as a $1000 bluray player with $300 cable - as long as TV can take the 1080p input and hifi can handle the HD audio signal.

JDG1980 - Thursday, May 23, 2013 - link

You can do any kind of post-processing you want on a signal, whether it comes from DVD, Blu-Ray, or anything else. A Blu-Ray is less likely to get subjective quality improvements from noise reduction, edge enhancement, etc., but you can still apply these processes in the video driver if you want to.

The video quality of Blu-Ray is very good, but not "perfect". Like all modern video formats, it uses lossy encoding. A maximum bit rate of 40 Mbps makes artifacts far less common than with DVDs, but they can still happen in a fast-motion scene - especially if the encoders were trying to fit a lot of content on a single layer disc.

Most Blu-Ray content is progressive scan at film rates (1080p23.976) but interlaced 1080i is a legal Blu-Ray resolution. I believe some variants of the "Planet Earth" box set use it. So Blu-Ray playback devices still need to know how to deinterlace (assuming they're not going to delegate that task to the display).

Dribble - Thursday, May 23, 2013 - link

I admit it's possible to post process but you wouldn't, a real time post process is highly unlikely to add anything good to the picture - fancy bluray players don't post process, they just pass on the signal. As for 1080i that's a very unusual case for bluray, but as it's just the standard HD TV resolution again pass it to the TV - it'll de-interlace it just like it does all the 1080i coming from your cable/satelight box.

Galidou - Sunday, May 26, 2013 - link

''All the video card has to do is decode it, which why a $20 bluray player with $5 cable will give you exactly the same picture and sound quality as a $1000 bluray player with $300 cable - as long as TV can take the 1080p input and hifi can handle the HD audio signal.''

I'm an audiophile and a professional when it comes to hi-end home theater, I myself built tons of HT system around PCs and/or receivers and I have to admit this is the funniest crap I've had to read. I'd just like to know how many blu-ray players you've personally compared up to let's say the OPPO BDP-105 (I've dealt with pricier units than this mere 1200$ but still awesome Blu-ray player).

While I can certainly say that image quality is not affected by much, the audio on the other side sees DRASTIC improvements. Hardware not having an effect on sound would be like saying: there's no difference between a 200$ and a 5000$ integrated amplifier/receiver, pure nonsense.

''the same picture and sound quality''

The part speaking about sound quality should really be removed from your comment as it really astounds me to think you can believe what you said is true.

eddman - Thursday, May 23, 2013 - link

http://i.imgur.com/d7oOj7d.jpg

EzioAs - Thursday, May 23, 2013 - link

If I were a Titan owner (and I actually purchase the card, not some free giveaway or something), I would regret that purchase very, very badly. $650 is still a very high price for the normal GTX x80 cards but it makes the Titan basically a product with incredibly bad pricing (not that we don't know that already). Still, I'm no Titan owner, so what do I know...

On the other hand, when I look at the graphs, I think the HD7970 is an even better card than ever despite it being 1.5 years older. However, as Ryan pointed out for previous GTX500 users who plan on sticking with Nvidia and are considering high end cards like this, it may not be a bad card at all since there are situations (most of the time) where the performance improvements are about twice the GTX580.

JeffFlanagan - Thursday, May 23, 2013 - link

I think $350 is almost pocket-change to someone who will drop $1000 on a video card. $1K is way out of line with what high-quality consumer video cards go for in recent years, so you have to be someone who spends to say they spent, or someone mining one of the bitcoin alternatives in which case getting the card months earlier is a big benefit.

mlambert890 - Thursday, May 23, 2013 - link

I have 3 Titans and don't regret them at all. While I wouldn't say $350 is "pocket change" (or in this case $1050 since its x3), it also is a price Im willing to pay for twice the VRAM and more perf. With performance at this level "close" doesn't count honestly if you are looking for the *highest* performance possible. Gaming in 3D surround even 3xTitan actually *still* isn't fast enough, so no way I'd have been happy with 3x780s for $1000 less.