Intel’s Silvermont Architecture Revealed: Getting Serious About Mobile

by Anand Lal Shimpi on May 6, 2013 1:00 PM EST- Posted in

- CPUs

- Intel

- Silvermont

- SoCs

The Silvermont Module and Caches

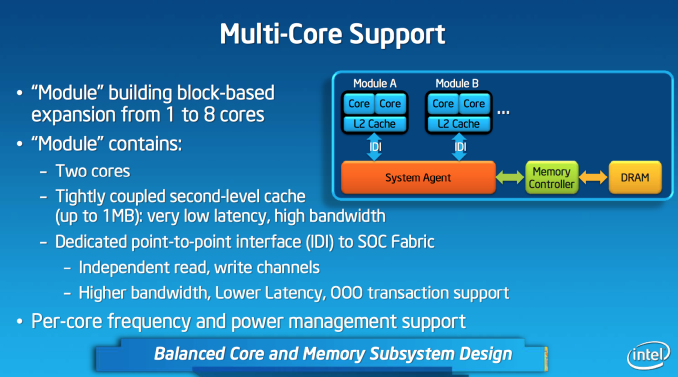

Like AMD’s Bobcat and Jaguar designs, Silvermont is modular. The default Silvermont building block is a two-core/two-thread design. Each core is equally capable and there’s no shared execution hardware. Silvermont supports up to 8-core configurations by placing multiple modules in an SoC.

Each module features a shared 1MB L2 cache, a 2x increase over the core:cache ratio of existing Atom based processors. Despite the larger L2, access latency is reduced by 2 clocks. The default module size gives you clear indication as to where Intel saw Silvermont being most useful. At the time of its inception, I doubt Intel anticipated such a quick shift to quad-core smartphones otherwise it might’ve considered a larger default module size.

L1 cache sizes/latencies haven’t changed. Each Silvermont core features a 32KB L1 data cache and 24KB L1 instruction cache.

Silvermont Supports Independent Core Frequencies: Vindication for Qualcomm?

In all Intel Core based microprocessors, all cores are tied to the same frequency - those that aren’t in use are simply shut off (power gated) to save power. Qualcomm’s multi-core architecture has always supported independent frequency planes for all CPUs in the SoC, something that Intel has always insisted was a bad idea. In a strange turn of events, Intel joins Qualcomm in offering the ability to run each core in a Silvermont module at its own independent frequency. You could have one Silvermont core running at 2.4GHz and another one running at 1.2GHz. Unlike Qualcomm’s implementation, Silvermont’s independent frequency planes are optional. In a split frequency case, the shared L2 cache always runs at the higher of the two frequencies. Intel believes the flexibility might be useful in some low cost Silvermont implementations where the OS actively uses core pinning to keep threads parked on specific cores. I doubt we’ll see this on most tablet or smartphone implementations of the design.

From FSB to IDI

Atom and all of its derivatives have a nasty secret: they never really got any latency benefits from integrating a memory controller on die. The first implementation of Atom was a 3-chip solution, with the memory controller contained within the North Bridge. The CPU talked to the North Bridge via a low power Front Side Bus implementation. This setup should sound familiar to anyone who remembers Intel architectures from the late 90s up to the mid 2000s. In pursuit of integration, Intel eventually brought the memory controller and graphics onto a single die. Historically, bringing the memory controller onto the same die as the CPU came with a nice reduction in access latency - unfortunately Atom never enjoyed this. The reasoning? Atom never ditched the FSB interface.

Even though Atom integrated a memory controller, the design logically looked like it did before. Integration only saved Intel space and power, it never granted it any performance. I suspect Intel did this to keep costs down. I noticed the problem years ago but completely forgot about it since it’s been so long. Thankfully, with Silvermont the FSB interface is completely gone.

Silvermont instead integrates the same in-die interconnect (IDI) that is used in the big Core based processors. Intel’s IDI is a lightweight point to point interface that’s far lower overhead than the old FSB architecture. The move to IDI and the changes to the system fabric are enough to improve single threaded performance by low double digits. The gains are even bigger in heavily threaded scenarios.

Another benefit of moving away from a very old FSB to IDI is increased flexibility in how Silvermont can clock up/down. Previously there were fixed FSB:CPU ratios that had to be maintained at all times, which meant the FSB had to be lowered significantly when the CPU was running at very low frequencies. In Silvermont, the IDI and CPU frequencies are largely decoupled - enabling good bandwidth out of the cores even at low frequency levels.

The System Agent

Silvermont gains an updated system agent (read: North Bridge) that’s much better at allowing access to main memory. In all previous generation Atom architectures, virtually all memory accesses had to happen in-order (Clover Trail had some minor OoO improvements here). Silvermont’s system agent now allows reordering of memory requests coming in from all consumers/producers (e.g. CPU cores, GPU, etc...) to optimize for performance and quality of service (e.g. ensuring graphics demands on memory can regularly pre-empt CPU requests when necessary).

174 Comments

View All Comments

extide - Wednesday, May 8, 2013 - link

What does Tegra 4 do 1.9Ghz in?Wilco1 - Wednesday, May 8, 2013 - link

Rumour is that it goes in the next ZTE phone out in a few months.phoenix_rizzen - Tuesday, May 7, 2013 - link

Note: Tegra 4i does *not* use Cortex-A15 CPUs, it uses Cortex-A9 CPUs! In fact, there's very little "Tegra 4" in the "Tegra 4i" other than the name.lmcd - Monday, May 13, 2013 - link

And the GPU is closer to the 4 than 3.And the process node. Oh yeah, that.

name99 - Monday, May 6, 2013 - link

You're willfully missing the point (and I say that as someone who's not convinced it will be easy for Intel to get ahead).What is the value of high speed CPUs in a phone (or for that matter a tablet, or a desktop machine)? For most users it is NOT that it allows some long computation to take a shorter time; rather it's that it provides snappiness --- it allows something that would have taken 1/40th of a sec to take 1/60th of a sec, or that would have taken 1/3rd of a sec to take 1/4 of a sec.

In this world, where snappiness is what matters, the ability to run your CPU at very high speeds for very short bursts of time (as long as this does not cost you long-run power) is an exceedingly valuable asset. You're being very stupid to dismiss it.

dig23 - Thursday, May 9, 2013 - link

I think so too. This article sounds totally biased :(bkiserx7 - Monday, May 6, 2013 - link

I wish they would go all out and lay it all on the table. I think it would drive great competition through the industry.Gigaplex - Tuesday, May 7, 2013 - link

Agreed. And if it does come out "too good", just downclock it and get even better battery life.jamesb2147 - Monday, May 6, 2013 - link

This is, by far, the worst article I've ever read on Anandtech. I'm pulling you out of my RSS feed specifically because of this article.Post when you have specs, guys, not Intel slides. I don't want to see the word "should."

Homeles - Monday, May 6, 2013 - link

AnandTech's architectural analyses are some of the best in the industry. It's your loss.