Choosing a Gaming CPU: Single + Multi-GPU at 1440p, April 2013

by Ian Cutress on May 8, 2013 10:00 AM EST

Metro 2033

Our first analysis is with the perennial reviewers’ favorite, Metro 2033. It appears in a lot of reviews for a couple of reasons: its benchmark GUI is easy for anyone to use, and it is often very GPU limited, at least in single GPU mode. Metro 2033 is a strenuous DX11 benchmark that can challenge most systems that try to run it at any high-end settings. The game was developed by 4A Games and released in March 2010; we use the inbuilt DirectX 11 Frontline benchmark to test the hardware at 1440p with full graphical settings. Results are given as the average frame rate from a second batch of four runs, as Metro has a tendency to inflate the scores for the first batch by up to 5%.
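As a rough illustration of that reporting method, the sketch below discards the first batch and averages the second. The function name `reported_fps` and all frame rates are hypothetical placeholders chosen for the example, not measurements from this review.

```python
from statistics import mean


def reported_fps(batches):
    """Return the mean FPS of the second batch, ignoring the first batch.

    `batches` is a list of batches, each a list of per-run average FPS values.
    """
    if len(batches) < 2:
        raise ValueError("need at least two batches of runs")
    return mean(batches[1])


# Hypothetical per-run averages (illustrative only, not review data).
first_batch = [42.0, 41.5, 42.5, 42.0]   # first batch, scores inflated
second_batch = [40.0, 40.5, 39.5, 40.0]  # second batch, used for reporting

result = reported_fps([first_batch, second_batch])

# How much higher the first batch scored relative to the reported figure.
inflation_pct = (mean(first_batch) - result) / result * 100

print(f"reported: {result:.1f} FPS, first batch inflated by {inflation_pct:.1f}%")
```

With these made-up numbers the first batch sits 5% above the reported figure, which is the upper end of the inflation described above.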

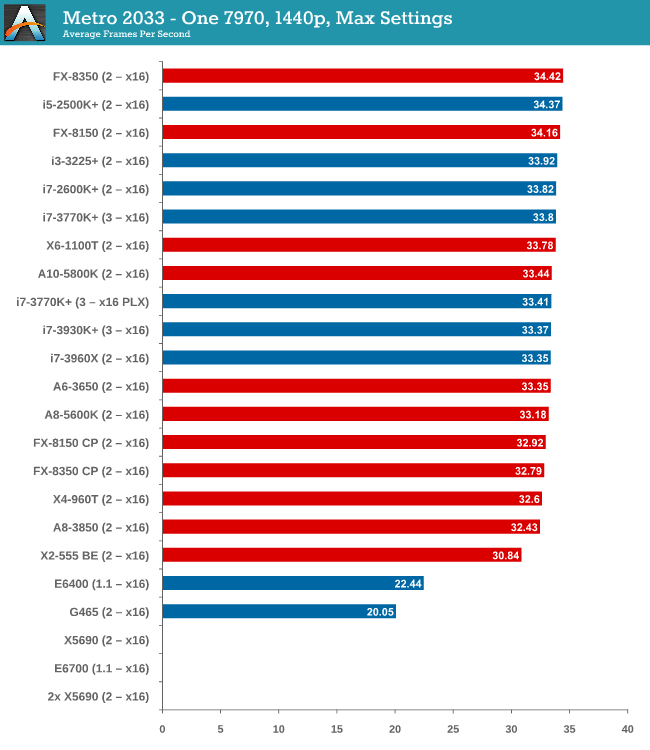

One 7970

With one 7970 at 1440p, every processor is in full x16 allocation and there seems to be no split between any processor with 4 threads or above. Processors with two threads fall behind, but not by much, as the X2-555 BE still gets 30 FPS. There seems to be no split between PCIe 3.0 and PCIe 2.0, or with respect to memory.

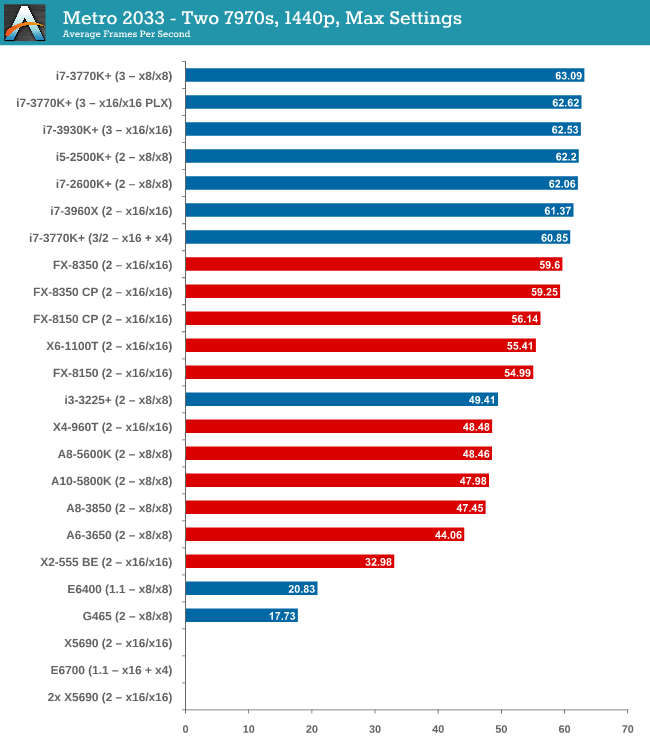

Two 7970s

When we start using two GPUs in the setup, the Intel processors have an advantage, with those running PCIe 2.0 a few FPS ahead of the FX-8350. Both cores and single thread speed seem to have some effect (i3-3225 is quite low, FX-8350 > X6-1100T).

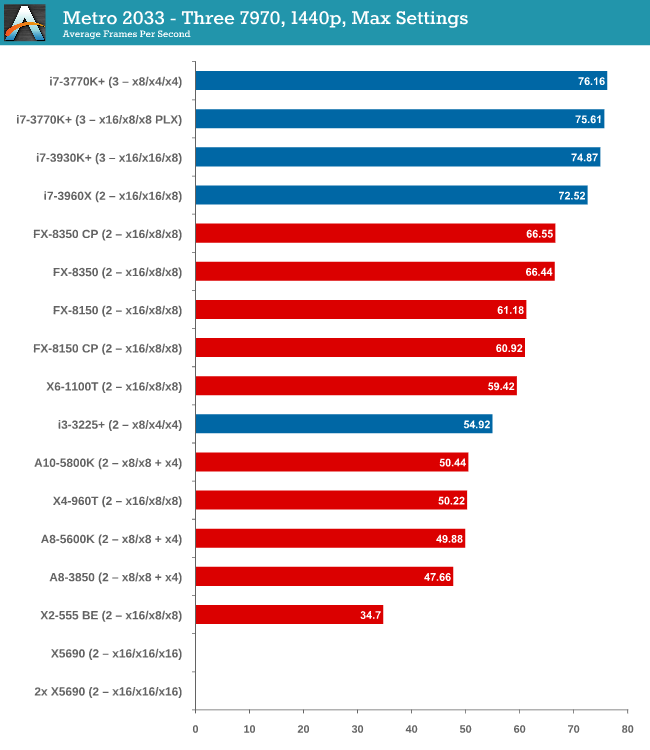

Three 7970s

More results in favour of Intel processors and PCIe 3.0: the i7-3770K in an x8/x4/x4 configuration surpasses the FX-8350 in an x16/x16/x8 configuration by almost 10 frames per second. There seems to be no advantage to having a Sandy Bridge-E setup over an Ivy Bridge one so far.

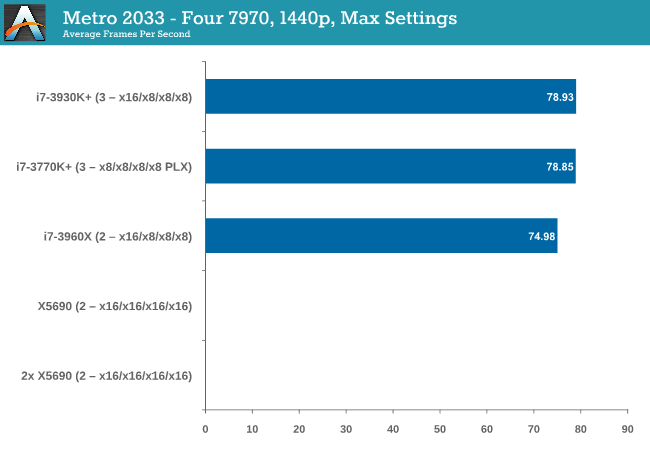

Four 7970s

While we have limited results, PCIe 3.0 wins against PCIe 2.0 by 5%.

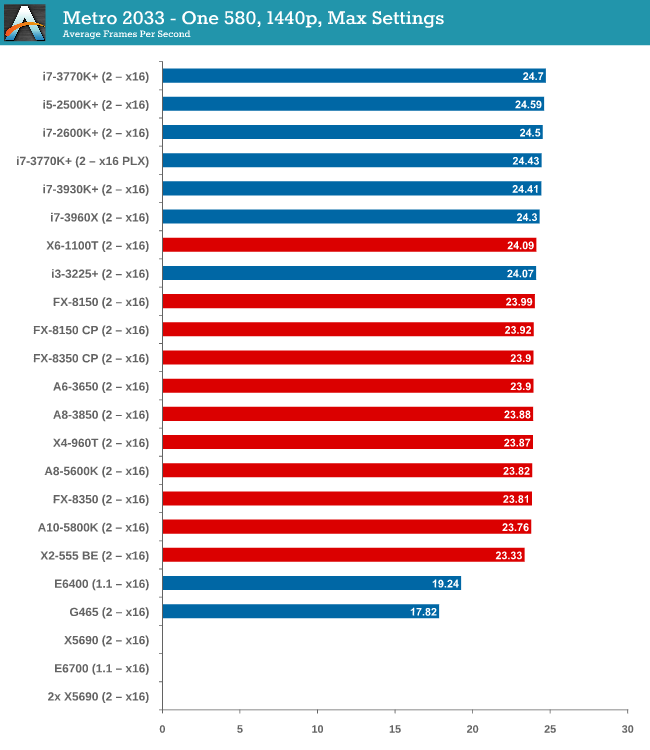

One 580

From dual core AMD all the way up to the latest Ivy Bridge, results for a single GTX 580 are all roughly the same, indicating a GPU throughput limited scenario.

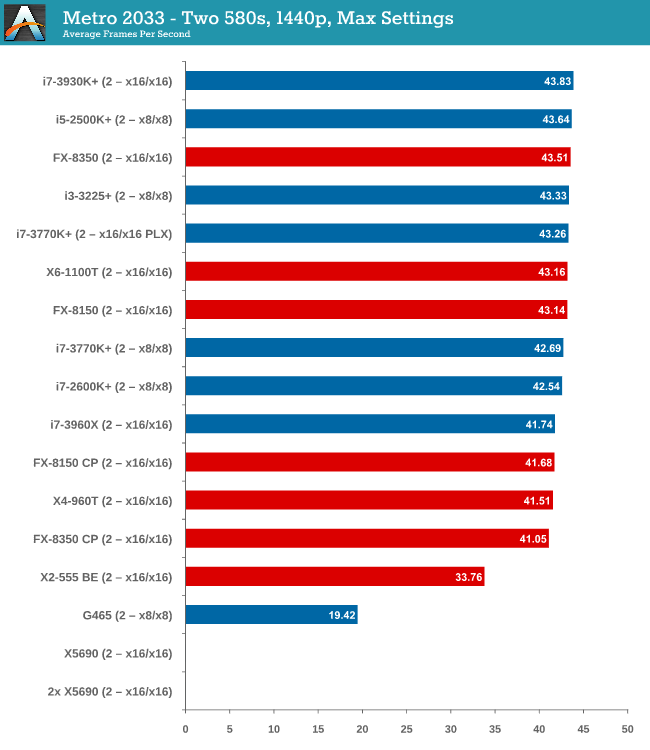

Two 580s

Similar to one GTX 580, we are still GPU limited here.

Metro 2033 conclusion

A few points are readily apparent from the Metro 2033 tests: the more powerful the GPU setup, the more important the CPU choice is, and CPU choice does not matter until you get to at least three 7970s. In that case, you want a PCIe 3.0 setup more than anything else.

242 Comments


Dribble - Wednesday, May 8, 2013 - link

Mmm, not done by a true gamer as it doesn't address a number of things:

1) Not everyone wants to run the game at max settings getting 30fps. Many want 60, or in my case 120fps as that's what my monitor can do. To do this we turn down graphics a bit, but this makes us much more likely to be cpu bound. Remember generally you can turn down the graphics settings to ease strain on gpu for higher fps, but cpu settings are much more fixed - you can't lower the resolution or turn off AA to fix cpu bottlenecks!

2) Min fps is key, not average fps. This I learned years ago playing ut2004. That game might return 60fps most of the time while admiring the scenery, but when you were in the middle of an intense fight with multiple players fps could halve or even quarter. It's obviously in the middle of a firefight that you most need the high fps to win.

3) There's a huge difference between single player games and online. Basically most single player games also run on consoles so they run like a dream on most PC cpu's as even the slower ones are more powerful. However go onto a 64 player server (which a console can't do) and watch the fps tank - suddenly the cpu is being worked much harder. BF3, UT engined games all do this when you get on a large server.

Hence your conclusions are wrong imo. You want an o/c intel quad core - i5 750 o/c to about 4ghz+ or better really. Why that - because basically it's still not far off as fast as you'll get - the latest intel cpu's still have 4 cores, ipc isn't much better and they only clock a little higher than that.

maximumGPU - Wednesday, May 8, 2013 - link

i'm pretty sure there's a sizeable jump moving from an i5 750 to 3570K, in both ipc and potential for overclock.

Dribble - Wednesday, May 8, 2013 - link

I suppose it depends on what you define "sizable" as? Perhaps a i2500K would be better, but even with a i5 750 @4ghz vs a i3570K@4.5ghz we aren't talking huge increases in cpu power - 25-30% maybe (hyperthreading aside which generally isn't much help in games).

IanCutress - Wednesday, May 8, 2013 - link

I very much played a lot of clan-based BF2/BF2142 for a long while. 'True Gamer' is often a misnomer anyway, perpetuated by those who want to categorize others or want to announce their own true nature.

1) The push will always be towards the highest settings at which you can hit that 60-120 FPS ideal. If some of the games we see today can't hit 60 on a single GPU at 1440p, at 4K it's all going to tank. Many games tested in this review hit 60+ above two GPUs which was the point of this article to begin with.

2) Min FPS falls under the issue of statistical reporting. If you run a game benchmark (Dirt3) and in one scene of genuine gameplay there is a 6-car pileup, it would show the min FPS of that one scene. So if that happened on an FX-8350 and min-FPS was down to 20 FPS, while others that didn't have this scene were around 90 FPS for minimum, how is that easily reported and conveyed in a reasonable way to the public? A certain amount of acknowledgement is made on the fact that we're taking overall average numbers, and that users would apply brain matter with regard to an 'average minimum'.

3) This is a bit obvious, but try doing 1400 tests on 64 player servers and keeping any level of consistency. If this is your usage scenario, then you'll know what concessions you will have to make.

An i5-750 using an older chipset also suffers from less of the newer features - native SATA 6Gbps for example for an awesome RAID-0 setup. This could be the limiting factor in your gaming PC. We will be testing that generation for the next update of this testing :)

As written in the review, the numbers we have taken are but a small subset of everything that is possible, and we can only draw conclusions from the numbers we have taken. There are other numbers available online which may be more relevant to you, but these are the ones under our test-bed situations. Your setup is different from someone else's, which is a different usage scenario from others - testing them all would require a few years in Narnia. But suggestions are more than welcome!

Ian

darckhart - Wednesday, May 8, 2013 - link

I agree with Dribble's post above, but your reply was also well thought and written, just like your article. Keep up the good work. Thanks!

Dribble - Wednesday, May 8, 2013 - link

I suppose "true gamer" does sound a bit elitist, by that I really meant someone who plays not benchmarks. I agree it's hard to test min fps in 64 player BF3 matches, but that's the sort of moment when your choice of cpu matters, not, for example, in a canned off-line BF3 benchmark. As you are advising on cpu buying choices for gaming it is pretty important.

My personal experience is the offline canned benchmarks giving average fps say you require a cpu a lot less powerful than you really do when you take your fancy new rig online in the latest super popular multi player game. Particularly as in that game you pretty quickly start playing to win and are willing to sacrifice some fancy settings to get the fps up so you don't lose again as you try to hit that annoying fast moving 15 year old while your fps is tanking :)

Therefore while it's fine to advise those people who only want to play offline console ports using benchmarking as you did, it just doesn't work for the rest of us.

JarredWalton - Wednesday, May 8, 2013 - link

It sounds more than a bit elitist: it is elitist. For every gamer that spends 10-20 hours of time each week in multiplayer gaming (MMORPG, or whatever FPS you want to name, or World of Tanks, etc.), there are likely at least ten times as many gamers that generally stick to single player games. What's more, that sort of definition of "true gamer" may as well just say "high school or early 20s with little life outside of the digital realm." Yes, that's a relatively big demographic, but there are many 20, 30, 40, and even 50-somethings that still play a fair amount of games, but never bother with the multiplayer stuff. In fact, I'd say that of the 30+ year old people I know well, less than 1% would meet your "true gamer" requirement, while 5% would still be "gamers".

Says the 39 year old fuddy duddy.

Spunjji - Wednesday, May 8, 2013 - link

The purpose of this article is to give a scientific basis for comparison within the boundaries of realistic testing deadlines. I would be interested to see you produce something as statistically rigorous based on performance numbers taken from online gaming. If you managed to do it before said numbers became irrelevant due to changes to the game code I would be utterly flabbergasted.

Dribble - Thursday, May 9, 2013 - link

No, the purpose of this article is to recommend cpu's for gaming.

frozen ox - Thursday, May 9, 2013 - link

There is no way to recreate or capture all the variables/scenarios to repeatedly benchmark a firefight in BF3 across multiple systems. The results from this hardware review are relevant, because they are easily repeatable by others and provide a fair baseline to compare systems. The point of this study is not what CPU do I need to play BF3 or Crysis at max settings, it's how much bandwidth bottleneck is going on with a single GPU setup? What happens in reality with multi-GPU setups? How well does the new AMD architecture (because "true gamers" want to save $$ to buy games) compare to Intel?

What you have to do, as a "true gamer" and someone who has enough wits about them, is extrapolate the results to your scenario because everyone's will be different. And honestly, anyone who plays FPS...the "true gamers", will know what you pointed out. It's insanely obvious even the first time you play a demanding FPS MMPOG like BF3.

I however, play single player 99% of the time. Only online FPS I'll play now is CS.