FCAT: The Evolution of Frame Interval Benchmarking, Part 1

by Ryan Smith on March 27, 2013 9:00 AM EST

In the last year, stuttering, micro-stuttering, and frame interval benchmarking have become a very big deal in the world of GPUs, and for good reason. Through the hard work of the Tech Report’s Scott Wasson and others, significant stuttering issues were uncovered involving AMD’s video cards, breaking long-standing perceptions on stuttering, where the issues lie, and which GPU manufacturer (if anyone) does a better job of handling the problem. The end result of these investigations has seen AMD embarrassed and rightfully so, as it turned out they were stuttering far worse than they thought, and more importantly far worse than NVIDIA.

The story does not stop there however. As AMD has worked on fixing their stuttering issues, the methodologies pioneered by Scott have gone on to gain wide acceptance across the reviewing landscape. This has the benefit of putting more eyes on the problem and helping AMD find more of their stuttering issues, but as it turns out it has also created some problems. As we laid out in detail yesterday in a conversation with AMD, the current methodologies rely on coarse tools that don’t have a holistic view of the entire rendering pipeline. And as such while these tools can see the big problems that started this wave of interest, their ability to see small problems and to tell apart stuttering from other issues is very limited. Too limited.

In their conversation AMD laid out their argument for a change in benchmarking. A rationale for why benchmarking should move from using tools like FRAPS that can see the start of the rendering pipeline, and towards other tools and methods that can see the end of the rendering pipeline. And AMD was not alone in this; NVIDIA too has shown concern about tools like FRAPS, and has wanted to see testing methodologies evolve.

That brings us to this week. Often evolution is best left to occur naturally. But other times evolution needs a swift kick in the pants. This week NVIDIA has decided to give evolution that swift kick in the pants. This week NVIDIA is introducing FCAT.

FCAT, the Frame Capture Analysis Tool, is NVIDIA’s take on what the evolution of frame interval benchmarking should look like. By moving the measurements of frame intervals from the start of the rendering pipeline to the end of the pipeline, FCAT evolves the state of benchmarking by giving reviewers and consumers alike a new way to measure frame intervals. A year and a half ago the use of FRAPS brought a revolution to the 3D game benchmarking scene, and today NVIDIA seeks to bring about that revolution all over again.

FCAT is a powerful, insightful, and perhaps above all else labor-intensive tool. For these reasons we are going to be splitting up our coverage on FCAT into two parts. Between trade shows and product launches we simply have not had enough time to put together a complete and proper dataset for FCAT, so rather than do this poorly, we’re going to hold back our results until we’ve had a chance to run all of the FCAT tests and scenarios that we want to run.

In part one of our series on FCAT, today we will be taking a high-level overview of FCAT. How it works, why it’s different from FRAPS, and why we are so excited about this tool. Meanwhile next week will see the release of part two of our series, in which we’ll dive into our FCAT results, utilizing FCAT to its full extent to look at where FCAT sees stuttering and under what conditions. So with that in mind, let’s dive into FCAT.

Reprise: When FRAPS Isn’t Enough

Since we covered the subject of FRAPS in great detail yesterday, we’re not going to completely rehash it. But for those of you who have not had the time to read yesterday’s article, here’s a quick rundown of how FRAPS measures frame intervals, and why at times this can be insufficient.

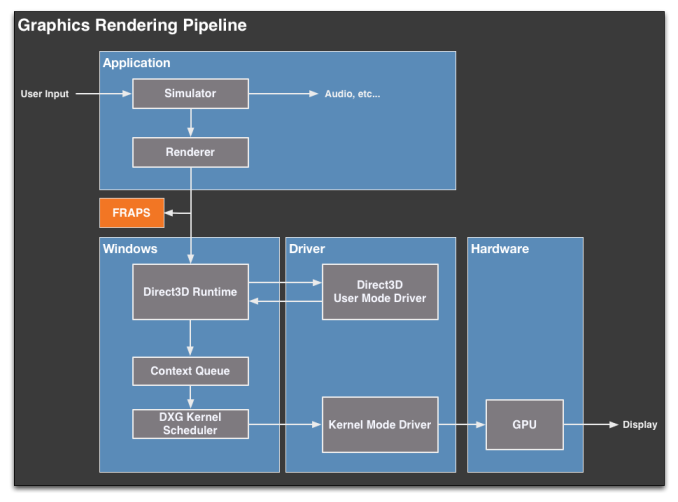

Direct3D (and OpenGL) uses a complex rendering pipeline that spans several different mechanisms and stages. When a frame is generated by an application, it must travel through the pipeline to Direct3D, the video drivers, a frame queue (the context queue), a GPU scheduler, the video drivers again, the GPU, and finally after that a frame can be displayed. The pipeline analogy is used here because that’s exactly what it is, with the added complexity of the context queue sitting in the middle of that pipeline.

FRAPS for its part exists at almost the very beginning of this pipeline. It interfaces with individual applications and intercepts the Present calls made to Direct3D that mark the end of each frame. By counting Present calls FRAPS can easily tell how many frames have gone into the pipeline, making it a simple and effective tool for measuring average framerates.
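As a rough illustration of that counting approach, the sketch below derives an average framerate from a list of Present call timestamps. The function name and the timestamp values are hypothetical and chosen for illustration only; this is not FRAPS's actual implementation.

```python
def average_fps(present_times_ms):
    """Average framerate from the timestamps (in ms) of successive Present calls."""
    if len(present_times_ms) < 2:
        raise ValueError("need at least two Present calls")
    elapsed_ms = present_times_ms[-1] - present_times_ms[0]
    frames = len(present_times_ms) - 1  # number of intervals between calls
    return 1000.0 * frames / elapsed_ms


# Hypothetical logs: evenly paced frames vs. alternating 10 ms / 40 ms gaps.
smooth = [0, 25, 50, 75, 100, 125, 150]
stuttery = [0, 10, 50, 60, 100, 110, 150]

print(average_fps(smooth))    # 40.0
print(average_fps(stuttery))  # 40.0
```

Both made-up logs average out to the same 40 fps even though their pacing is very different, which is why an average framerate alone says little about stuttering.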

The problem with FRAPS, as it were, is that while it can also be used to measure the intervals between frames, it can only do so at the start of the rendering pipeline, by measuring the time between Present calls. This, while better than nothing, is far removed from the end of the pipeline where the actual buffer swaps take place, and ultimately is equally removed from the end-user experience. Furthermore, because FRAPS is so far up the rendering pipeline, it’s insulated from what’s going on elsewhere; the context queue in particular can hold up to 3 frames, which means the rate of flow into the context queue can at times be very different from the rate of flow out of the context queue.
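To make the effect of the context queue concrete, here is a minimal toy model, not a description of how Direct3D or any driver actually schedules work. It assumes a single application thread, a single GPU that renders frames in order, and a queue slot that stays occupied until the GPU finishes the frame; the function names and all timings are hypothetical.

```python
def simulate_pipeline(cpu_ms, gpu_ms, queue_depth=3):
    """Toy model: return (present_times, display_times) in ms.

    cpu_ms[i] is the time the application needs before calling Present for
    frame i; gpu_ms[i] is the time the GPU needs to render frame i.
    Assumption: Present blocks while queue_depth frames are still in flight.
    """
    present, display = [], []
    app_clock = 0.0
    gpu_free = 0.0
    for i, (cpu, gpu) in enumerate(zip(cpu_ms, gpu_ms)):
        app_clock += cpu
        if i >= queue_depth:
            # Wait until the frame queue_depth positions earlier has finished.
            app_clock = max(app_clock, display[i - queue_depth])
        present.append(app_clock)
        start = max(app_clock, gpu_free)  # GPU handles one frame at a time
        gpu_free = start + gpu
        display.append(gpu_free)
    return present, display


def intervals(times):
    """Differences between consecutive timestamps."""
    return [b - a for a, b in zip(times, times[1:])]


# Hypothetical workload: steady 20 ms of CPU work per frame, uneven GPU cost.
present, display = simulate_pipeline([20] * 6, [5, 30, 5, 30, 5, 30])
print(intervals(present))  # what a Present-based tool would see
print(intervals(display))  # what reaches the end of the pipeline
```

In this made-up run the intervals between Present calls are a constant 20 ms, while the intervals at the end of the pipeline swing between 5 ms and 45 ms. A tool watching only the Present calls would report perfectly even pacing.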

As a result FRAPS is best described as a coarse tool. It can see particularly egregious stuttering situations – like what AMD has been experiencing as of late – but it cannot see everything. It cannot see stuttering issues the context queue hides, and it’s particularly blind to what’s going on in multi-GPU scenarios.

88 Comments


ARealAnand - Tuesday, April 9, 2013 - link

I'm not sure you realize how development of silicon-based semiconductor products works. This is not on the scale of a planting season where you put down some seed and later that year you harvest your crop. You start off with the specification phase of the product, you move to development of the hdl and verification of everything and then you go to nre and silicon samples. This is a multi-year process. AMD may well have known about this issue just as long as nvidia but silicon products don't go from specification to product overnight. That is why vendors offer driver/firmware/microcode updates. As to taking a pot shot at AMD laying off R&D people, it's called a business decision. Sometimes you need to let people go so that the rest of the employees can remain employed. Otherwise you can end up facing bankruptcy and massive layoffs. I don't know if it's the right move at this time or not, and I'm no financial analyst. Currently nvidia has no gpu chips that directly share a piece of silicon with the cpu, unlike AMD and intel. The next generation of consoles seem to have gone with AMD. I haven't heard of any big chipsets from nvidia. Anyway, sorry for the long response. I believe AMD, Intel, and nvidia all have strong points and areas where they can improve. This seems like it might be largely a driver issue and I'll admit that AMD seem to have had issues with drivers. Your post just seemed like an easy shot against AMD. I am not affiliated with AMD, nvidia, or Intel and the views expressed in this post are my own opinions and not those of any current or previous employer.

arbiter9605 - Wednesday, April 17, 2013 - link

From what I heard/read somewhere, Nvidia knew of such a problem back in their 8000 series cards (8800gtx/gts/gt). So it's something they knew about for a long time. Nvidia has hardware built into their cards to keep the cards in sync frame-wise, whereas AMD doesn't. It may take some 2 years for AMD to fix it properly, and a software driver fix will most likely cost some fps.

name99 - Thursday, March 28, 2013 - link

I'd like an AnandTech investigation of a slightly different issue:

How fast does Apple update the screen on iPads and iPhones when displaying movies? Specifically, when displaying movies, do they switch to updating the screen only every 24th of a second?

The reason I think this is an interesting question is that, in my experience, movies displayed on iPad show none of the stuttering when panning that is so obvious on both TVs and computers, stuttering which is, as far as I can tell, generated by the 3:2 pulldown. (I don't have a 120Hz TV, so I can't comment on how well they deal with this.)

So we have the visual suggestion that this is what Apple is doing, along with the obvious point that it would presumably save power to only refresh the screen at 24Hz (though the power savings may be negligible).

I must admit I would find very interesting an investigation of this (perhaps by similar techniques to what are being used here, like a movie consisting of a color coded sequence of frames, and time-stamped video capture; though you'd probably have to use an external video camera.)

And this is not just an Apple specific issue; it would be interesting to know if Android and MS likewise are capable of displaying stutter-free movies on their mobile devices (unlike on the desktop where, sure, you have far less control, but for fsck's sake --- can't you at least do the job right in full screen mode?)

mayankleoboy1 - Thursday, March 28, 2013 - link

So where does VirtuPro and VirtuHyperformance etc. come in this picture?

wingless - Friday, March 29, 2013 - link

Great question. Enough Z77 mobos alone support this to warrant investigation.

cactusdog - Thursday, March 28, 2013 - link

Wow, so this issue of stuttering has been talked about amongst users for at least a decade, then Scott Wasson from techreport decides to run a test of latency just weeks before Nvidia releases their super duper new tool to test latency?......and Nvidia have been working on it for 2 years? What a coincidence!! ...and NVidia cards perform better in this regard? Double coincidence!! So does that mean Nvidia is a benevolent company who wants to help AMD fix their stuttering issues so AMD can sell more cards?? Wow they must be saints!! We can add this to Nvidia's long list of open, honest and transparent business practices.

cobalt42 - Thursday, March 28, 2013 - link

Not sure if joking or troll.... Scott's latency tests started in their August 23, 2012 article entitled "Inside the second: Gaming performance with today's CPUs". That's not "just weeks" ago. Second, if NVIDIA thinks Scott's FRAPS tests are so awesome for them, why would they bother to release a tool that measures at an entirely different point in the pipeline? Your conspiracy theory is not only factually wrong, it doesn't even make a good conspiracy theory.

JPForums - Thursday, March 28, 2013 - link

I also agree with you and Spoelie. Simulation step stutter is also an issue that should be covered. It would be really nice to get simulation timestamps directly from the output of the simulator and match them up to their corresponding output frames. However, this would probably require collaboration with game developers that you probably won't get. Until then, using a tool that works at the output of the renderer (like FRAPS) and can associate simulation steps with output frames would be nice.

That said, there is really little that AMD or Nvidia can do to fix issues in the application other than trick it into working correctly. These results would be more useful for game developers developing new engines. Also keep in mind, simulation steps are tied loosely (through queues) to the GPU's processing capabilities (unless the bottleneck happens to be before the command is dispatched to D3D). Simulation steps should be roughly equal to frame times on average. If the GPU processing were completely consistent, then the latency between the simulation step and output would be fixed and the output would appear smooth. It is variations in frame times that cause variations in simulation steps. On average, the variations of each should be roughly equal. The worst-case stutter, which should be something like double the frame time variation (when the simulator is compensating in the opposite direction as the frame time variation), is what we need to look out for. That said, variation in frame time is generally smaller than frame time itself. I would suggest that simulation step stuttering is a smaller problem than frame time stuttering and becomes smaller as frame times get shorter. Point of interest, Nvidia's Frame metering may actually increase simulation step stuttering.

Unwinder - Thursday, March 28, 2013 - link

I've just added FCAT overlay rendering support to OSD server of MSI Afterburner and EVGA Precision. Still need some time to discuss exact RGB color sequence with NVIDIA, then I guess we'll release it to public.

Ryan Smith - Friday, March 29, 2013 - link

Hi Unwinder;

Thank you for the update and for adding support for this. I think it's a great relief all-around having someone besides NVIDIA providing the overlay.

It's too big to post in our comments, but if you need the colors hit me up. They're not secret. I can send you the list of precise colors in hex form.