SanDisk Ultra Plus SSD Review (256GB)

by Anand Lal Shimpi on January 7, 2013 9:00 AM EST

AnandTech Storage Bench 2011

Two years ago we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes, and typically only 4GB of that was writes. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.
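To put the 4GB figure in perspective, here is the spare-area arithmetic for a hypothetical drive. It assumes 256 GiB of raw NAND sold as 256 GB (decimal) of user capacity, a common arrangement for consumer SSDs but not a figure stated in this article. The binary/decimal gap alone leaves roughly 18.9 GB of spare area, more than four times the 4GB the old traces wrote.

```python
# Hypothetical example drive (assumption, not a measured figure from this review):
# 256 GiB of physical NAND exposed to the OS as 256 GB (decimal).
RAW_NAND_BYTES = 256 * 2**30     # 256 GiB of physical flash
USER_BYTES = 256 * 10**9         # 256 GB of user-visible capacity
OLD_SUITE_WRITES = 4 * 10**9     # ~4GB of writes in the older Storage Bench traces


def spare_area_bytes(raw: int, user: int) -> int:
    """Flash the controller keeps back from the user (garbage collection, wear leveling)."""
    return raw - user


spare = spare_area_bytes(RAW_NAND_BYTES, USER_BYTES)
print(f"spare area: {spare / 10**9:.2f} GB ({spare / USER_BYTES:.1%} of user capacity)")
print(f"4GB of writes exceeds spare area: {OLD_SUITE_WRITES > spare}")
```

Drives that reserve extra capacity (for example, 240GB models built on 256 GiB of NAND) have even more spare area, which only widens the gap.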

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
| --- | --- |
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo-random to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place at a queue depth of 1.
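The operation counts and write volume quoted for the heavy workload can be turned into a few derived figures. This is a quick sketch that assumes "106.32GB" means decimal gigabytes and that all of it is attributed to the 1,783,447 write operations; neither assumption is spelled out in the test description.

```python
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447
BYTES_WRITTEN = 106.32e9  # assumption: decimal GB, all attributed to write ops
# Share of total operations per IO size, copied from the table above
IO_SIZE_SHARE = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}

total_ops = READ_OPS + WRITE_OPS
read_share = READ_OPS / total_ops
avg_write_kb = BYTES_WRITTEN / WRITE_OPS / 1000  # mean bytes per write, in KB
listed = sum(IO_SIZE_SHARE.values())             # % of ops covered by the table rows

print(f"total ops: {total_ops:,}")
print(f"read/write mix: {read_share:.1%} / {1 - read_share:.1%}")
print(f"average write size: ~{avg_write_kb:.1f} KB")
print(f"IO sizes listed in the table: {listed}% of operations")
```

That works out to 3,952,340 operations, a split of roughly 54.9% reads to 45.1% writes, and a mean write of about 59.6 KB. The sizes in the table account for 52% of operations, so the remainder use sizes the table doesn't break out. A mean is pulled upward by large transfers, so it says little about the many 4KB operations.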

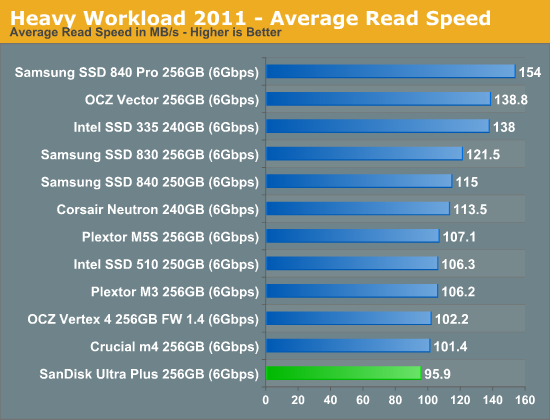

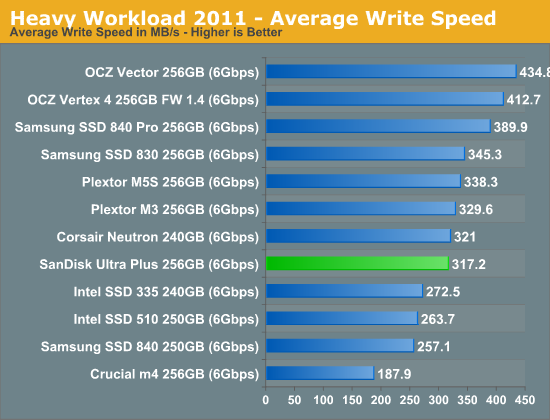

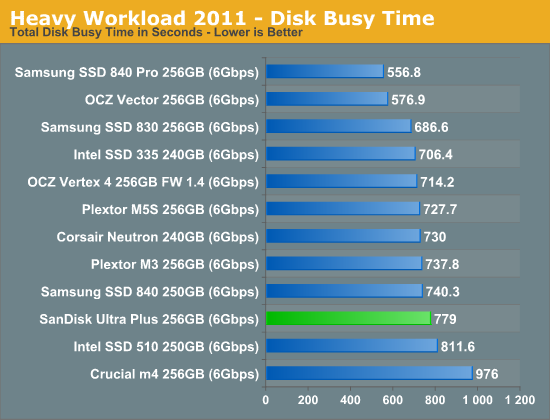

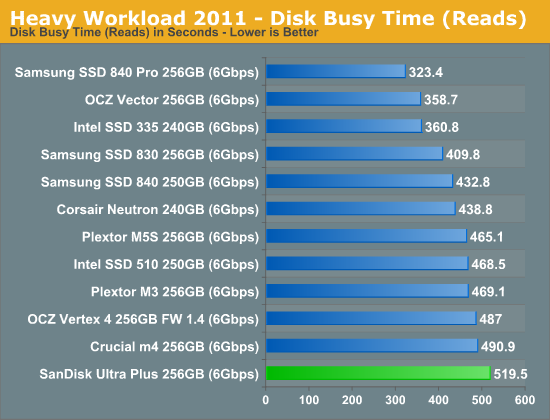

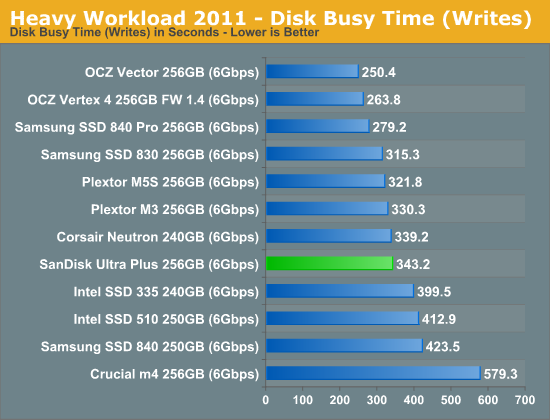

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
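One way to derive both metrics from an IO trace is sketched below. It is a minimal illustration, not the actual Storage Bench tooling: the `IO` record, the toy trace and the choice to divide bytes by busy time are all assumptions. Busy time is taken as the union of intervals during which at least one IO is outstanding, so idle gaps are excluded and overlapping queued IOs are counted only once. Reads, writes and combined traffic are reported separately.

```python
from dataclasses import dataclass


@dataclass
class IO:
    start: float   # seconds when the IO was issued (hypothetical trace format)
    end: float     # seconds when the IO completed
    nbytes: int
    is_read: bool


def busy_time(ios):
    """Length of the union of in-flight intervals: idle gaps excluded, overlaps counted once."""
    total = 0.0
    cur_start = cur_end = None
    for io in sorted(ios, key=lambda x: x.start):
        if cur_end is None or io.start > cur_end:
            # Gap before this IO: close out the previous busy stretch
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = io.start, io.end
        else:
            # Overlaps the current busy stretch: extend it if needed
            cur_end = max(cur_end, io.end)
    if cur_end is not None:
        total += cur_end - cur_start
    return total


def avg_mb_per_s(ios):
    """Bytes transferred divided by busy time, in MB/s (decimal)."""
    t = busy_time(ios)
    return sum(io.nbytes for io in ios) / t / 1e6 if t > 0 else 0.0


def breakdown(ios):
    reads = [io for io in ios if io.is_read]
    writes = [io for io in ios if not io.is_read]
    return {"read": avg_mb_per_s(reads), "write": avg_mb_per_s(writes), "combined": avg_mb_per_s(ios)}


# Toy trace with made-up numbers: two overlapping reads, an idle gap, then one write.
trace = [
    IO(0.00, 0.02, 2_000_000, True),
    IO(0.01, 0.03, 2_000_000, True),
    IO(1.00, 1.05, 5_000_000, False),
]
print(round(busy_time(trace), 3))  # 0.08 s busy; the idle gap from 0.03 s to 1.00 s is excluded
print(breakdown(trace))
```

Reporting reads separately matters here because, in a write-heavy trace, a drive's strong read performance can be swamped in the combined figure.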

There's also a new light workload for 2011. This is a far more reasonable benchmark of typical everyday use: lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback, as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB, but it's still several times more write intensive than what we were running in 2010.

As always, I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully, along with the rest of our tests, they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests.

AnandTech Storage Bench 2011 - Heavy Workload

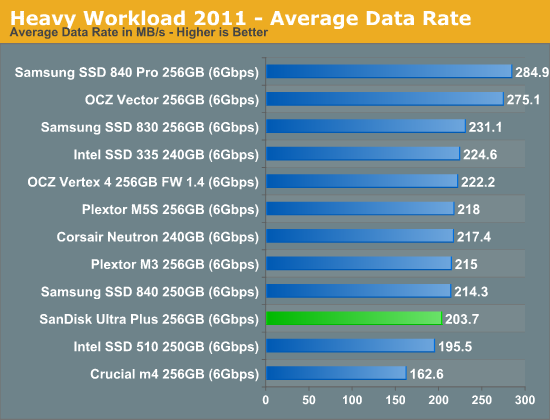

We'll start out by looking at average data rate throughout our new heavy workload test:

The Ultra Plus does OK in our heavy workload, hot on the heels of Samsung's SSD 840. By no means is this the fastest drive we've tested, but it's not abysmal either. We're fans of the vanilla 840, and the Ultra Plus performed similarly. Architecturally, though, it's a bit of a concern when Samsung's TLC drive is able to outperform your 2bpc MLC drive.
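The concern follows from how multi-level NAND stores data: a cell holding n bits must be programmed to, and read back from, one of 2^n threshold-voltage levels. The sketch below simply tabulates that relationship using the standard definitions of SLC, MLC and TLC. With twice as many levels packed into the same voltage window, TLC has tighter margins and generally slower programming than 2bpc MLC, so a TLC drive keeping pace with an MLC one is notable.

```python
# Bits stored per cell for common NAND types (standard definitions)
NAND_BITS_PER_CELL = {"SLC": 1, "MLC (2bpc)": 2, "TLC (3bpc)": 3}


def voltage_states(bits_per_cell: int) -> int:
    """Distinct threshold-voltage levels a cell must resolve to encode n bits."""
    return 2 ** bits_per_cell


for name, bits in NAND_BITS_PER_CELL.items():
    print(f"{name}: {voltage_states(bits)} states per cell")
```

SLC needs 2 levels, 2bpc MLC needs 4, and TLC needs 8.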

The next three charts represent the same data in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during the entire test. Note that disk busy time excludes any and all idles; this is simply how long the SSD was busy doing something:

38 Comments

Flunk - Monday, January 7, 2013

If you want real performance, you could make a version of this that features two of these PCBs along with a RAID chip for enhanced performance. All in a 2.5" form factor, that would be quite compelling.

bmgoodman - Monday, January 7, 2013

Sorry, but after the way SanDisk handled the TRIM issue on their SanDisk Extreme SSDs last year, I will NEVER buy from them again. I understand mistakes are made and things don't go as expected, but for the longest time they simply would not comment at all on the problem. Their response plan of burying their heads in the sand is NOT a strategy for good customer support. Quoth the raven, "nevermore!"

magnetar - Monday, January 7, 2013

IMO, SanDisk handled the SandForce 5.0 firmware TRIM issue the way it needed to be dealt with: carefully and professionally. The weak statement about it from a SanDisk forum moderator, buried in a thread started by a SanDisk customer, was apparently missed by most of those concerned about this issue. That was a mistake. But responding to angry demands for a release date is not professional.

The other SSD manufacturers that provided the fixed firmware very quickly were not doing their customers a favor. That indicated to me that those manufacturers did very little testing and verification of the new firmware. Considering that all the SSD manufacturers that used the 5.0 firmware, and SandForce itself, missed the problem, a careful approach with the new firmware was warranted.

The firmware update SanDisk provided not only included the fixed firmware, but other fixes as well, including a fix for the incorrect temperature reading some of the Extreme SSDs had. SanDisk chose to provide one update with multiple fixes, rather than multiple firmware updates, which was the better option IMO. The more firmware updates necessary, the less professional the product is.

The R211 firmware update program SanDisk provided was the best one I've ever used. Running in Windows, it worked with the SanDisk Extreme connected to the Marvell 9128 SATA chipset! Can any other firmware update programs do that? The lack of complaints about that firmware update program in the SanDisk forum also indicates how good it is.

No blemish on SanDisk IMO, actually exactly the opposite.

Kevin G - Monday, January 7, 2013

Looking at the PCB for this drive makes me feel that this was a precursor to an mSATA version down the road. Hack off the SATA and power connectors, add an edge connector, and it looks like it'd be the right size. Kinda makes one wonder why they didn't just use an mSATA-to-SATA adapter in a 2.5" enclosure and launch both products simultaneously.

Kristian Vättö - Monday, January 7, 2013

The SanDisk X110, which is essentially an mSATA version of the Ultra Plus, was launched alongside it.

perrydoell - Tuesday, January 8, 2013

How about making the PCB 4x larger... 1TB SSD drive!

vanwazltoff - Monday, January 7, 2013

Most companies purposely put out a high-performing SSD and a low-performing SSD. This is probably their low end, or more likely they are trying to shrink power consumption and size for smaller form factors such as tablets. SanDisk's Extreme SSD went toe to toe with an 830 and proved itself a worthy component, with more even results than an 830. I am sure they will have an answer to the 840 Pro soon enough.

Death666Angel - Monday, January 7, 2013

The Extreme was a normal SF-2281 offering with Toggle Mode NAND. Nothing fancy about that, IMHO.

iaco - Monday, January 7, 2013

64 GB packages. Verrrry nice. Tells me what we've known all along: Apple has no excuse for charging obscene prices for NAND on their iPads, iPhones and Macs. 64 GB probably costs, what, $50 at retail? Apple wants $200 to upgrade from 16 to 64 GB.

Maybe they'll finally get around to updating the iPod classic.

Kristian Vättö - Monday, January 7, 2013

The contract price of 8GB 2-bit-per-cell MLC is currently $4.58 (about $0.57 per GB) on average, according to inSpectrum. That would put the cost of 64GB of MLC NAND at roughly $36.50. Price depends on quality, though, and smartphones/tablets in general don't use the highest-quality NAND (the best dies are usually reserved for SSDs; second-tier NAND goes to phones/tablets).
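The NAND cost estimate above can be reproduced in a few lines. This sketch takes only the quoted $4.58 average contract price for an 8GB die as input; it says nothing about retail or quality-tiered pricing. Scaling the unrounded die price gives about $36.64 for 64GB, while the rounded $0.57/GB figure gives $36.48, which matches the quoted ~$36.50.

```python
CONTRACT_PRICE_8GB = 4.58  # USD per 8GB 2bpc MLC die (average contract price quoted above)
DIE_GB = 8
TARGET_GB = 64

per_gb = CONTRACT_PRICE_8GB / DIE_GB                  # unrounded price per GB
exact = CONTRACT_PRICE_8GB * (TARGET_GB // DIE_GB)    # eight 8GB dies
from_rounded = round(per_gb, 2) * TARGET_GB           # using the rounded $0.57/GB

print(f"per GB: ${per_gb:.4f}")
print(f"64GB from the 8GB die price: ${exact:.2f}")
print(f"64GB from the rounded per-GB price: ${from_rounded:.2f}")
```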