SanDisk Ultra Plus SSD Review (256GB)

by Anand Lal Shimpi on January 7, 2013 9:00 AM EST

Performance Consistency

In our Intel SSD DC S3700 review I introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. SSDs don't deliver consistent IO latency because all controllers inevitably have to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

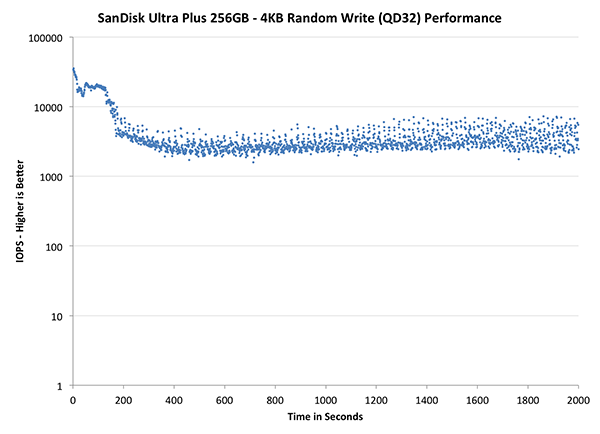

To generate the data below I took a freshly secure erased SSD and filled it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next I kicked off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near as long as our steady state tests run, but enough to give me a good look at drive behavior once all spare area had filled up.
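The review doesn't say which tool generated this workload. As a hedged sketch, assuming the open-source fio benchmark stands in for it, the two phases (sequential fill, then 4KB random writes at QD32 with incompressible data and one IOPS sample per second) could be written as an fio job file. The helper `build_fio_job` below is a hypothetical name that only renders the job text; `/dev/sdX` is a placeholder, and running such a job destroys everything on the target device.

```python
from typing import Optional


def build_fio_job(device: str, runtime_s: int = 2000,
                  region_bytes: Optional[int] = None) -> str:
    """Render an fio job file approximating the test described above.

    Assumption: fio replaces whatever tool the review actually used.
    `region_bytes` optionally confines both phases to the first N bytes of
    the device, leaving the remainder untouched as extra spare area.
    """
    size_line = f"size={region_bytes}\n" if region_bytes else ""
    return (
        "[global]\n"
        f"filename={device}\n"
        "ioengine=libaio\n"
        "direct=1\n"            # bypass the OS page cache
        "iodepth=32\n"          # queue depth 32 for both phases
        f"{size_line}"
        "\n"
        "[precondition]\n"
        "rw=write\n"            # sequential fill so every LBA holds data
        "bs=128k\n"
        "\n"
        "[randwrite]\n"
        "stonewall\n"           # wait for the fill job to finish first
        "rw=randwrite\n"
        "bs=4k\n"
        "refill_buffers\n"      # fresh random (incompressible) data per IO
        "norandommap\n"
        "randrepeat=0\n"
        "time_based\n"
        f"runtime={runtime_s}\n"
        "write_iops_log=randwrite\n"
        "log_avg_msec=1000\n"   # average IOPS into one sample per second
    )


print(build_fio_job("/dev/sdX"))
```

Writing the returned text to a file and passing it to `fio` would run both phases back to back. Secure erasing the drive beforehand is a separate, vendor-specific step that this sketch does not cover.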

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
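If a tool reports individual IO completion times rather than per-second IOPS, the scatter-plot data described above can be derived by counting completions in one-second bins. A minimal sketch, assuming completion timestamps in seconds measured from the start of the random-write phase; `iops_per_second` is a hypothetical helper, not part of any benchmark's API.

```python
from collections import Counter
from typing import Iterable, List


def iops_per_second(completion_times_s: Iterable[float], duration_s: int) -> List[int]:
    """Count IO completions in each one-second bin [t, t+1) for t = 0..duration_s-1."""
    counts = Counter(int(t) for t in completion_times_s if 0 <= t < duration_s)
    # A second with no completions is reported as 0 IOPS (a stall), not skipped.
    return [counts.get(second, 0) for second in range(duration_s)]


print(iops_per_second([0.1, 0.5, 1.2, 2.9, 2.95], 4))
```

Each (second, IOPS) pair becomes one scatter point; plotting them with a logarithmic y-axis (for example matplotlib's `ax.set_yscale("log")`) gives the log-scale chart style, and leaving the axis linear gives the linear one.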

The high-level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, I did vary the percentage of the drive that I filled/tested depending on the amount of spare area I was trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case, but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives I've tested here, but not all controllers necessarily behave the same way.
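The partition-sizing step is simple arithmetic. As a sketch, assuming "25% spare area" means leaving 25% of the advertised user capacity unpartitioned (so a 256GB drive gets roughly a 192GB partition), and rounding down to a 1 MiB boundary because that is a common partition alignment, the hypothetical helpers below compute the partition size and the resulting fraction of raw NAND the host never touches. The 256 GiB raw NAND figure is likewise an assumption, not a number from this review.

```python
MIB = 1024 * 1024


def partition_bytes(user_capacity_bytes: int, extra_spare_fraction: float) -> int:
    """Partition size that leaves `extra_spare_fraction` of user capacity unpartitioned."""
    target = int(user_capacity_bytes * (1.0 - extra_spare_fraction))
    return target - target % MIB            # round down to a 1 MiB boundary


def effective_spare(raw_nand_bytes: int, used_bytes: int) -> float:
    """Fraction of raw NAND never written by the host (assumes unused space stays TRIMmed)."""
    return 1.0 - used_bytes / raw_nand_bytes


user = 256 * 10**9      # advertised 256GB, decimal
raw = 256 * 2**30       # assumed 256GiB of NAND on the PCB
part = partition_bytes(user, 0.25)
print(part / 10**9)     # just under 192 GB
print(effective_spare(raw, user), effective_spare(raw, part))
```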

The first set of graphs shows the performance data over the entire 2000-second test period. In these charts you'll notice an early period of very high performance followed by a sharp drop-off. What you're seeing is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
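The drop-off can be reproduced qualitatively with a toy model. This sketch is not SanDisk's (or any vendor's) firmware: it assumes a page-mapped flash translation layer with greedy garbage collection (always reclaim the block holding the fewest valid pages), a sequential precondition fill, and uniform random single-page writes. Write amplification stays at exactly 1.0 while erased spare blocks remain, then rises once each new write forces valid pages to be copied out of a victim block. `GreedyFTL`, `wa_trace`, the block geometry, and the `user_fraction` values are all illustrative assumptions.

```python
import random
from typing import List


class GreedyFTL:
    """Toy page-mapped flash translation layer with greedy garbage collection."""

    def __init__(self, num_blocks: int, pages_per_block: int, user_pages: int):
        # Pigeonhole guarantee: GC can always find a block with an invalid page.
        assert user_pages < (num_blocks - 2) * pages_per_block, "not enough spare area"
        self.ppb = pages_per_block
        self.l2p = [None] * user_pages                     # logical page -> (block, slot)
        self.p2l = [[None] * pages_per_block for _ in range(num_blocks)]  # reverse map
        self.valid = [0] * num_blocks                      # valid pages per block
        self.free = list(range(num_blocks))                # erased blocks
        self.active = self.free.pop()                      # block currently being filled
        self.next_slot = 0
        self.host_writes = 0
        self.nand_writes = 0

    def _program(self, lpn: int) -> None:
        """Write one logical page to the next free slot, invalidating its old copy."""
        if self.next_slot == self.ppb:                     # active block full: open another
            self.active = self.free.pop()
            self.next_slot = 0
        old = self.l2p[lpn]
        if old is not None:
            blk, slot = old
            self.p2l[blk][slot] = None
            self.valid[blk] -= 1
        self.p2l[self.active][self.next_slot] = lpn
        self.l2p[lpn] = (self.active, self.next_slot)
        self.valid[self.active] += 1
        self.next_slot += 1
        self.nand_writes += 1

    def _collect(self) -> None:
        """Greedy GC: copy valid pages out of the block with the fewest, then erase it."""
        free = set(self.free)
        victim = min((b for b in range(len(self.valid))
                      if b != self.active and b not in free),
                     key=lambda b: self.valid[b])
        for lpn in list(self.p2l[victim]):
            if lpn is not None:
                self._program(lpn)                         # each copy is an extra NAND write
        assert self.valid[victim] == 0
        self.p2l[victim] = [None] * self.ppb               # erase the victim
        self.free.append(victim)

    def write(self, lpn: int) -> None:
        """Host write: reclaim space first if fewer than two erased blocks remain."""
        while len(self.free) < 2:
            self._collect()
        self._program(lpn)
        self.host_writes += 1


def wa_trace(user_fraction: float, num_blocks: int = 128, pages_per_block: int = 32,
             passes: int = 6, window: int = 128, seed: int = 1) -> List[float]:
    """Sequentially fill every LBA, then issue random writes and return the
    write amplification measured over each `window` host writes."""
    rng = random.Random(seed)
    user_pages = int(num_blocks * pages_per_block * user_fraction)
    ftl = GreedyFTL(num_blocks, pages_per_block, user_pages)
    for lpn in range(user_pages):                          # precondition fill
        ftl.write(lpn)
    trace = []
    for _ in range(passes * user_pages // window):
        h0, n0 = ftl.host_writes, ftl.nand_writes
        for _ in range(window):
            ftl.write(rng.randrange(user_pages))
        trace.append((ftl.nand_writes - n0) / (ftl.host_writes - h0))
    return trace
```

With these assumed parameters, `wa_trace(0.93)` starts at 1.0 and settles far higher, while `wa_trace(0.70)` (roughly the same model with an extra 25% left unused) settles much lower, which mirrors why added spare area helps.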

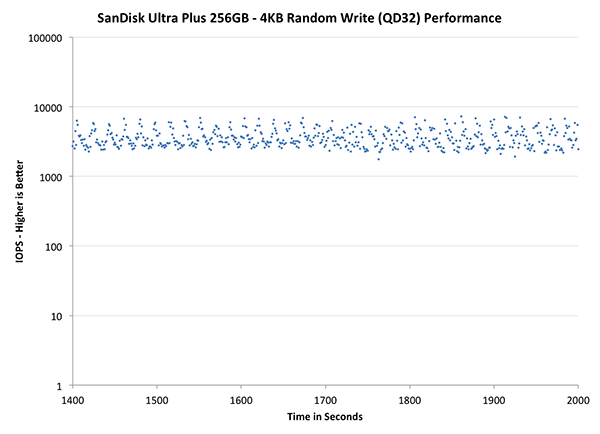

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale.
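Zooming in to t=1400s amounts to discarding the early samples. To put a number on where a drive's steady-state results cluster, here is a hedged sketch assuming a list of per-second IOPS samples indexed by second; the helper name and the choice of median, 10th/90th percentiles, and minimum are illustrative, not the review's method.

```python
import statistics
from typing import Dict, Sequence


def steady_state_summary(iops_by_second: Sequence[float],
                         start_s: int = 1400) -> Dict[str, float]:
    """Summarize per-second IOPS samples from `start_s` onward."""
    window = list(iops_by_second[start_s:])
    if len(window) < 2:
        raise ValueError("need at least two samples after start_s")
    deciles = statistics.quantiles(window, n=10)   # 9 cut points: p10 .. p90
    return {
        "median": statistics.median(window),
        "p10": deciles[0],
        "p90": deciles[-1],
        "min": min(window),                        # worst second in the window
    }
```

The minimum matters as much as the median here: a drive whose worst seconds sit far below its median is exactly the inconsistency these graphs are meant to expose.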

[Graph: Impact of Spare Area, full 2000-second test (log scale). Drives: Intel SSD DC S3700 200GB, Corsair Neutron 240GB, OCZ Vector 256GB, Samsung SSD 840 Pro 256GB, SanDisk Ultra Plus 256GB. Configurations: Default, 25% Spare Area.]

The Ultra Plus' performance consistency, at least in the default configuration, looks a bit better than that of Samsung's SSD 840 Pro. The 840 Pro is by no means the gold standard here, so that's not saying too much. What is interesting, however, is that the 840 Pro gains much more from an additional 25% spare area than the Ultra Plus does. SanDisk definitely benefits from more spare area, just not as much as we're used to seeing.

The next set of charts looks at the steady state (for most drives) portion of the curve. Here we'll get better visibility into how each drive will perform over the long run.

[Graph: Impact of Spare Area, steady state from t=1400s (log scale). Drives: Intel SSD DC S3700 200GB, Corsair Neutron 240GB, OCZ Vector 256GB, Samsung SSD 840 Pro 256GB, SanDisk Ultra Plus 256GB. Configurations: Default, 25% Spare Area.]

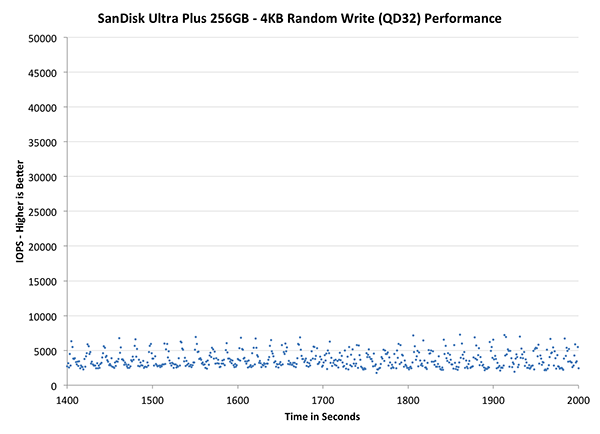

The final set of graphs abandons the log scale entirely and just looks at a linear scale that tops out at 50K IOPS. We're also only looking at steady state (or close to it) performance here:

[Graph: Impact of Spare Area, steady state (linear scale). Drives: Intel SSD DC S3700 200GB, Corsair Neutron 240GB, OCZ Vector 256GB, Samsung SSD 840 Pro 256GB, SanDisk Ultra Plus 256GB. Configurations: Default, 25% Spare Area.]

Here we see just how far performance degrades. Performance clusters around 5K IOPS, which is hardly good for a modern SSD. Increasing spare area helps considerably, but generally speaking this isn't a drive that you're going to want to fill.
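For context, 4KB random-write IOPS convert directly to throughput. Assuming 4KB means 4096 bytes per IO, the ~5K IOPS cluster works out to only about 20 MB/s:

$$5000\ \tfrac{\text{IO}}{\text{s}} \times 4096\ \tfrac{\text{B}}{\text{IO}} = 20{,}480{,}000\ \tfrac{\text{B}}{\text{s}} \approx 20.5\ \text{MB/s} \approx 19.5\ \text{MiB/s}$$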

38 Comments

Samus - Monday, January 7, 2013

I'm just dying for a mainstream Intel S3700 to hit the consumer corner...

Beenthere - Monday, January 7, 2013

Few if anyone would be able to differentiate a noticeable actual system performance change no matter which one of the listed SSDs they chose. SanDisk hasn't yet learned how to dupe the benches but in due time their numbers will increase similar to the others.

If you're going to buy an SSD you should do your homework so you know the liabilities and realities including reliability and compatibility issues, lost data, drive size change, etc. If you want an eye opener read the warranties on SSDs at the respective SSD mfg. websites.

mrdude - Monday, January 7, 2013

"The drive PCB itself is very small, potentially paving the way for some interesting, smaller-than-2.5" form factors."

That's the most interesting bit, I find. Those things are absolutely tiny. So tiny that it kind of makes you wonder if standardizing the NGFF cards is even worth it going forward. If you need small storage then you can just stick with the standard SATA connectors on an itsy bitsy drive.

Very cool :)

vol7ron - Monday, January 7, 2013

n-TB sizes that much more of a coming reality

SmCaudata - Monday, January 7, 2013

I picked up a SanDisk Extreme 240 last year for about 50¢ per GB and I'm happy. Even during Black Friday I didn't see anything cheaper and this drive is fast. The difference between most SSDs now is academic. User experience is nearly the same for average consumers.

mayankleoboy1 - Monday, January 7, 2013

Isn't it the same thing OCZ implemented in Vertex4 f/w 1.4?

blowfish - Monday, January 7, 2013

So for XP users, would a drive that doesn't support Trim be the way to go, since MS decided not to add Trim to XP in order to push Win7?

randinspace - Monday, January 7, 2013

Upgrading to Windows 7 (and pretending like 8 didn't happen even though it has enviable features...) is the way to go. Seriously speaking (upgrade), it's not like a drive that has TRIM support is going to be a bad thing even if you can't use it, but see the above comments about Sandforce controllers in general/Intel's 335 series SSDs in particular. Or even see the comments about hacking in TRIM support, IF YOU DARE!

FWIW I'm very happy with the performance of my (240GB) 335. I'd probably buy another one to put in my laptop if they weren't going for $40 more than what I paid last month...

dave_the_nerd - Monday, January 7, 2013

They all support TRIM. None REQUIRE it.

If your OS doesn't support TRIM commands, you just have to find the drive with the best built-in GC routines. That used to be Sandforce, but I don't know anymore.

kmmatney - Monday, January 7, 2013

With Samsung and Intel SSDs you can just run their toolbox software to do a manual TRIM. I know you can schedule it automatically with the Intel SSD Toolbox, and I think you can do that with the Samsung software as well. I've had a 40GB Intel SSD running on Windows XP for 2+ years and the TRIM scheduler works great.