The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST - Posted in

- Tablets

- Intel

- Samsung

- Arm

- Cortex A15

- Smartphones

- Mobile

- SoCs

Cortex A15: SunSpider 0.9.1

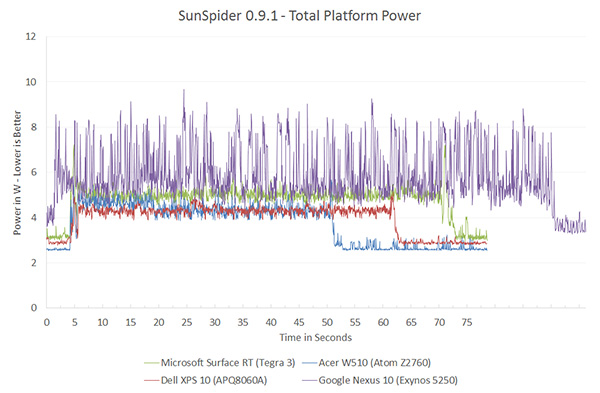

SunSpider performance in Chrome on the Nexus 10 isn't all that great to begin with, so the Exynos 5250 curve is longer than the competition's. I wouldn't pay too much attention to overall performance, as that's more of a Chrome optimization issue, but we begin to shed some light on Cortex A15's power consumption:

Although these line graphs are neat to look at, it's tough to quantify exactly what's going on here. From here on, following every graph I'll present a bar chart that integrates power over the benchmark time period (excluding idle) and shows the total energy used during the task in joules.
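A minimal sketch of that integration, assuming the power trace is available as parallel lists of timestamps (seconds) and power samples (watts), and that the benchmark's start and end times are known. The function name and sampling model are hypothetical; this is not the actual measurement tooling used for these charts.

```python
from typing import Sequence


def energy_joules(times_s: Sequence[float], power_w: Sequence[float],
                  start_s: float, end_s: float) -> float:
    """Integrate a sampled power trace (W) over [start_s, end_s] with the
    trapezoidal rule and return the energy in joules (W * s)."""
    if len(times_s) != len(power_w):
        raise ValueError("times and power samples must have equal length")
    # Keep only samples inside the benchmark window, dropping idle lead-in/tail.
    window = [(t, p) for t, p in zip(times_s, power_w) if start_s <= t <= end_s]
    energy = 0.0
    # Sum the area of each trapezoid between consecutive samples.
    for (t0, p0), (t1, p1) in zip(window, window[1:]):
        energy += 0.5 * (p0 + p1) * (t1 - t0)
    return energy


# Idle samples at t=0 and t=5 are excluded; 2.5 + 3.0 + 2.5 = 8.0 J.
print(energy_joules([0, 1, 2, 3, 4, 5], [0.5, 2.0, 3.0, 3.0, 2.0, 0.5], 1, 4))
```

A device that finishes sooner at slightly higher power can still use less total energy, which is why the bar charts integrate over time rather than comparing peak power alone.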

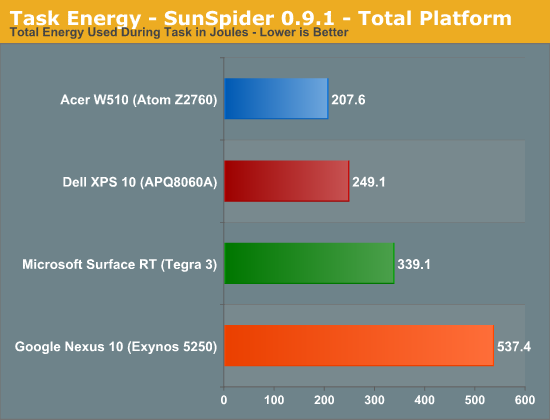

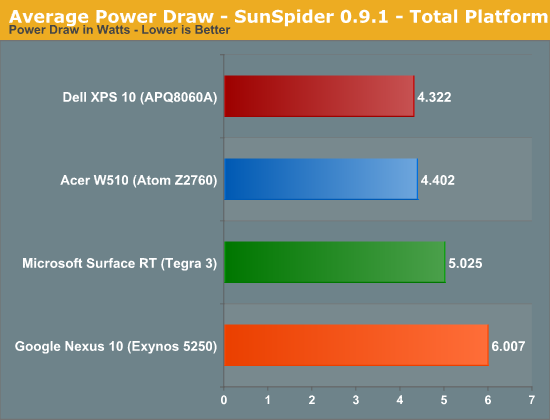

The data here reflects what you see in the chart above fairly well. Acer/Intel manage to get the edge over Dell/Qualcomm when it comes to total energy consumed during the test. The Nexus 10 doesn't do so well here, but that's likely a software issue more than anything else.

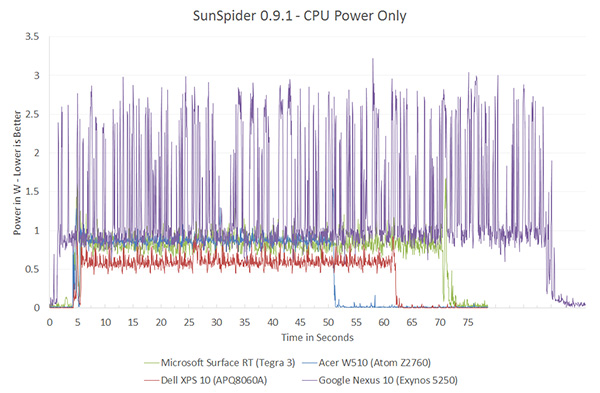

CPU power is just insane. Peak CPU power consumption is around 3W, compared to around 1W for the competition.

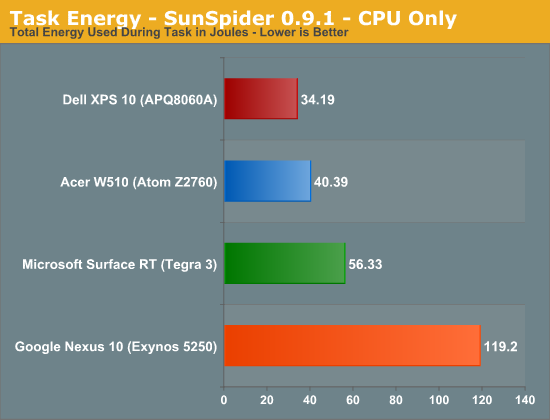

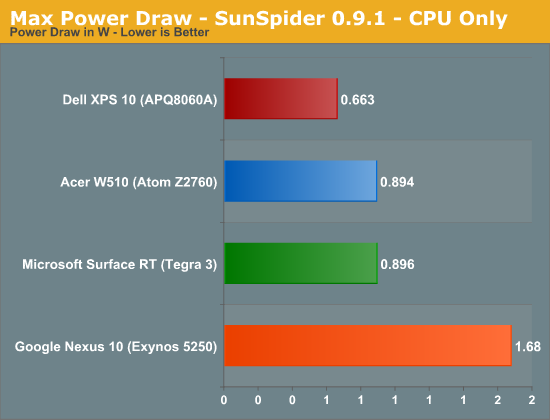

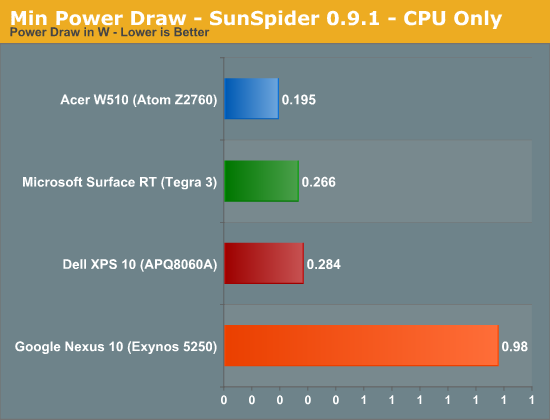

Looking at the CPU core itself, Qualcomm appears to have the advantage here, but keep in mind that we aren't yet tracking L2 cache power on Krait (we are on Atom). Regardless, Atom and Krait are very close.

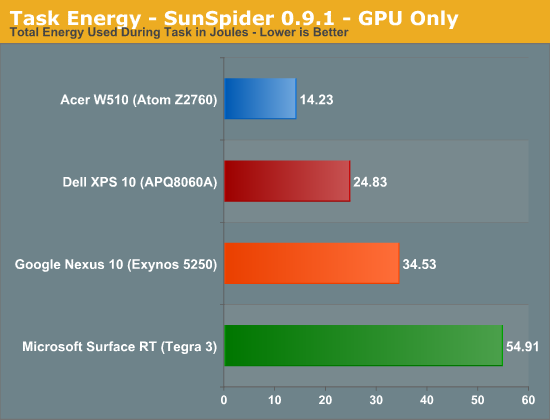

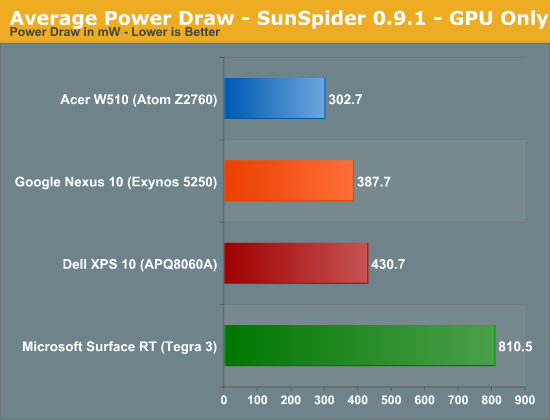

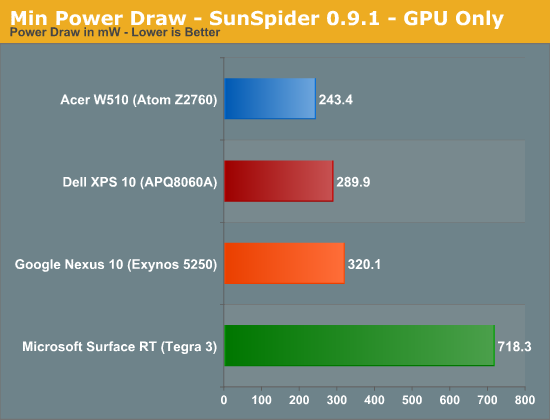

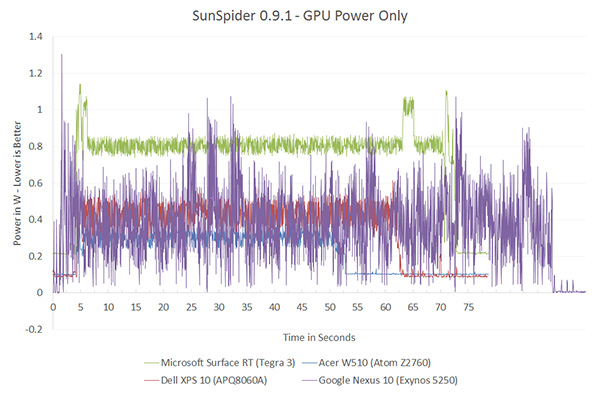

Even GPU power consumption is pretty high compared to everything else (minus Tegra 3).

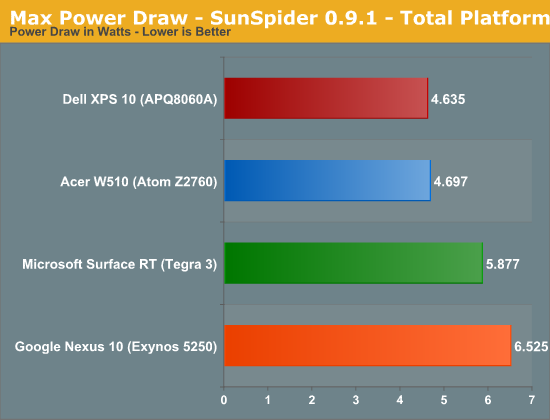

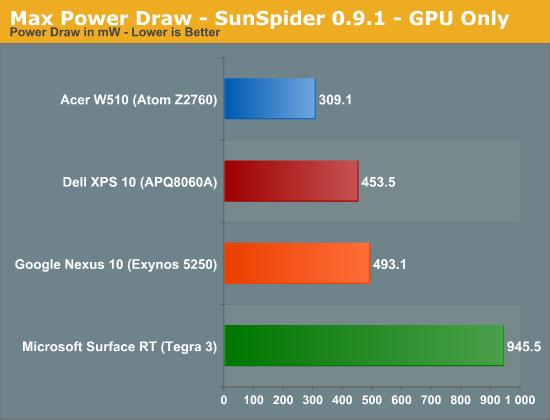

SunSpider - Max, Avg, Min Power

For your reference, the remaining graphs present max, average and min power draw throughout the course of the benchmark (excluding beginning/end idle times).

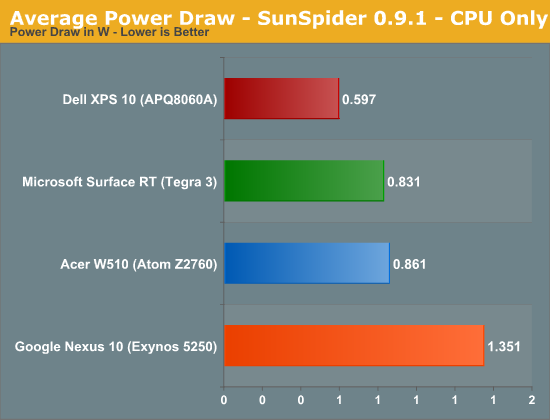

Average Power Draw

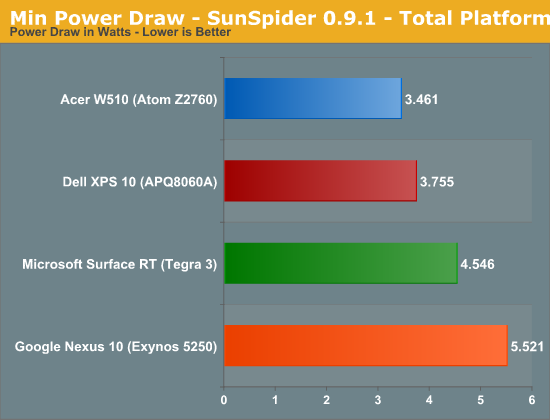

Minimum Power Draw
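The max, average and min figures above can be derived from a power trace roughly as follows. This is a sketch assuming a fixed sampling interval (so the plain mean of the samples equals the time-averaged power) and known benchmark start/end times; `power_stats` and `PowerStats` are hypothetical names.

```python
from statistics import mean
from typing import NamedTuple, Sequence


class PowerStats(NamedTuple):
    max_w: float
    avg_w: float
    min_w: float


def power_stats(times_s: Sequence[float], power_w: Sequence[float],
                start_s: float, end_s: float) -> PowerStats:
    """Return max/avg/min power over the benchmark window, excluding idle."""
    window = [p for t, p in zip(times_s, power_w) if start_s <= t <= end_s]
    if not window:
        raise ValueError("no samples inside the benchmark window")
    # Assumes uniform sampling, so the arithmetic mean is the time average.
    return PowerStats(max(window), mean(window), min(window))


print(power_stats([0, 1, 2, 3, 4, 5], [0.5, 2.0, 3.0, 4.0, 1.0, 0.5], 1, 4))
```

With non-uniform sampling, dividing the integrated energy by the window duration gives the correct average instead.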

140 Comments


A5 - Friday, January 4, 2013

Even if you just look at SunSpider power draw (SunSpider draws nothing on the screen), it's pretty clear that the A15 draws more power. There have been a ton of OEMs complaining about A15's power draw, too.

madmilk - Friday, January 4, 2013

Since when did screen resolution matter for CPU power consumption in CPU benchmarks? Platform power might change, yes, but this doesn't invalidate facts such as Cortex-A15 using twice as much power on average as Krait, Atom or Cortex-A9.

Wolfpup - Friday, January 4, 2013

Good lord. Do you have some evidence for any of this? If neither Windows nor Android is the "right platform" for ARM, then...are you waiting for Blackberry benchmarks? That's a whole lot of spin you're doing, presumably to fit the data to your preconceived "ARM IS BETTER!" faith.

Veteranv2 - Friday, January 4, 2013

Hahaha, the Nexus 10 has almost 4 times the pixels of the Atom tablet. And the conclusion is that it draws more power in benchmarks? Of course it does; those pixels aren't going to fill themselves. Way to draw a conclusion.

How big was that Intel PR cheque?
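Checking the commenter's pixel arithmetic, assuming the Atom tablet is Acer's Iconia W510 with a 1366×768 panel (the model and its resolution are assumptions, not stated in this section) and the Nexus 10 runs at 2560×1600:

$$\frac{2560 \times 1600}{1366 \times 768} = \frac{4{,}096{,}000}{1{,}049{,}088} \approx 3.9$$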

iwod - Saturday, January 5, 2013

While I wouldn't say it was Intel PR, I think they should definitely have left system-level power usage out of the question. There is no point telling me that a 100" screen with ARM is using X amount of power compared to a 1" screen with Haswell. It is confusing.

But they did include CPU and GPU benchmarks. So saying it is Intel PR is just trolling.

AlB80 - Friday, January 4, 2013

Architectures with variable-length instructions are doomed. Actually, there is only one remaining: x86. Intel has stepped back into a happy past when CISC had an advantage over RISC, when superscalarity was just a theory.

Cortex A57 is coming. ARM cores will easily outperform Atom in effective instruction rate, with minimal overhead.

Wolfpup - Friday, January 4, 2013

How is x86 doomed when it has an absolute stranglehold on real PCs, and is now competitive on ultramobile platforms?

The only disadvantage it holds is the need for a larger decoder on the front end, which has been proportionally shrinking since 1995.

djgandy - Friday, January 4, 2013

Plus effing one! I think some people heard their uni lecturers say something once in 1999 and just keep repeating it as if it is still true!

AlB80 - Friday, January 4, 2013

Shrinking decoder... nice myth. Of course the complicated scheduler and ALUs matter for performance too, but do not forget how the decoded-instruction queues are filled. The decoder is the only real difference.

1. There are fundamental limits on how many variable-length instructions can be decoded per clock. CISC has instruction cross-interference at the decoder stage: one logical block has to determine the total length of the instructions being decoded.

2. There is a trick where the CISC decoder is split into 2-3 parts with dedicated inputs, so it looks like a few independent decoders, but not every part can decode every instruction.

Now compare it with RISC.

And as I said, what happens when Cortex can decode 4, 5, 6, 7 or 8 instructions per clock?
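To illustrate point 1, here is a toy sketch of why finding instruction boundaries is sequential in a variable-length stream but trivial with fixed-width encodings such as 32-bit ARM. The `toy_length` encoding (low two bits of the first byte plus one gives a length of 1 to 4 bytes) is entirely hypothetical and far simpler than real x86 length decoding; no real decoder is built this way.

```python
from typing import Callable, List


def boundaries_variable(code: bytes,
                        length_of: Callable[[bytes, int], int]) -> List[int]:
    """Find instruction start offsets in a variable-length stream.
    Each start depends on the decoded length of the previous instruction,
    so this walk is inherently serial."""
    starts: List[int] = []
    pc = 0
    while pc < len(code):
        starts.append(pc)
        pc += length_of(code, pc)  # must know this length before the next start
    return starts


def boundaries_fixed(code: bytes, width: int = 4) -> List[int]:
    """With fixed-width instructions every start is known up front, so
    N decoders could each take one slot independently."""
    return list(range(0, len(code), width))


def toy_length(code: bytes, pc: int) -> int:
    # Hypothetical toy encoding: (low 2 bits of first byte) + 1 = length.
    return (code[pc] & 0b11) + 1


stream = bytes([0x00, 0x03, 0xAA, 0xBB, 0xCC, 0x01, 0xDD, 0x02, 0x11, 0x22])
print(boundaries_variable(stream, toy_length))  # [0, 1, 5, 7]
print(boundaries_fixed(bytes(8)))               # [0, 4]
```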

Kogies - Friday, January 4, 2013

Don't be so quick to prophesy the death of a' that. What happens when a Cortex decodes 8 instructions... I don't know, it uses 8W?

Also, didn't Apple choose CISC (Intel) chips over RISC (PowerPC)? Interestingly, I believe Apple made the switch to Intel because the PowerPC chips had too high a power premium for mobile computers.