The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST - Posted in

- Tablets
- Intel
- Samsung
- Arm
- Cortex A15
- Smartphones
- Mobile
- SoCs

Determining the TDP of Exynos 5 Dual

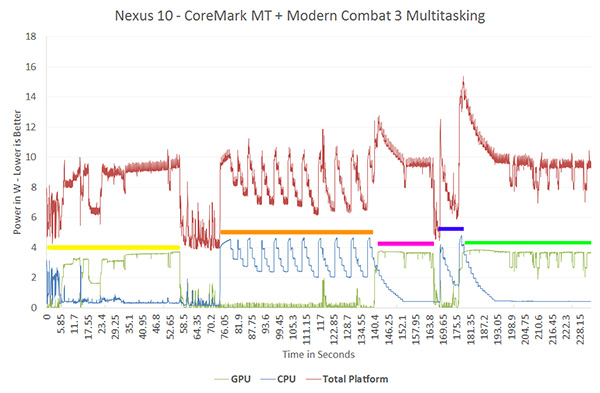

Throughout all of our Cortex A15 testing we kept bumping into that 4W ceiling with both the CPU and GPU, but we rarely saw both blocks use that much power at the same time. Intel actually tipped me off to this test, which shows what happens if we try to force both the CPU and GPU to run at max performance simultaneously. The graph below is divided into five distinct sections, denoted by colored bars above them. It has individual lines for GPU power consumption (green), CPU power consumption (blue) and total platform power consumption, including the display, measured at the battery (red).

In the first section (yellow), we begin playing Modern Combat 3, a GPU-intensive first-person shooter. GPU power consumption is just shy of 4W, while CPU power consumption remains below 1W. After about a minute of play we switch away from MC3, and you can see both CPU and GPU power consumption drop considerably. In the next section (orange), we fire up a multithreaded instance of CoreMark, a small CPU benchmark, and allow it to loop indefinitely. CPU power draw peaks at just over 4W, while GPU power consumption is understandably very low.

Next, while CoreMark is still running on both cores, we switch back to Modern Combat 3 (pink section of the graph). GPU voltage ramps way up and GPU power consumption is around 4W, but note what happens to CPU power consumption: the CPU cores step down to a much lower voltage/frequency for the background task (~800MHz, down from 1.7GHz). Total SoC power jumps above 4W, but the power controller quickly responds by reducing CPU voltage/frequency to keep things under control at ~4W. To confirm that CoreMark is still running, we then switch back to the benchmark (blue segment), and you can see CPU performance ramp up as GPU performance winds down. Finally, when we switch back to MC3, combined CPU + GPU power is around 8W for a short period of time before the CPU is throttled.
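The behavior in the pink segment amounts to a power cap: when CPU + GPU power would exceed the ~4W target, the controller drops the CPU to a lower operating point. The Python sketch below illustrates that idea only; it is not Samsung's actual algorithm. The intermediate operating points (1.4 and 1.1GHz), the cubic power-vs-frequency model and the simple budget check are all assumptions. Only the 1.7GHz, ~800MHz and ~4W figures come from the measurements above.

```python
# ASSUMPTION: hypothetical CPU operating points in GHz, fastest first. Only
# 1.7 GHz and ~800 MHz appear in the measurements; 1.4 and 1.1 are made up.
CPU_OPPS_GHZ = [1.7, 1.4, 1.1, 0.8]

CPU_MAX_WATTS = 4.0     # CPU power at 1.7 GHz, roughly as seen under CoreMark
SOC_BUDGET_WATTS = 4.0  # the ~4 W target the power controller appears to enforce


def cpu_power(freq_ghz: float) -> float:
    """Rough CPU power estimate in watts.

    ASSUMPTION: dynamic power ~ f * V^2 with V scaling roughly linearly with f,
    so power scales with the cube of frequency.
    """
    return CPU_MAX_WATTS * (freq_ghz / CPU_OPPS_GHZ[0]) ** 3


def pick_cpu_freq(gpu_watts: float, budget: float = SOC_BUDGET_WATTS) -> float:
    """Return the fastest CPU operating point whose estimated CPU + GPU power fits the budget.

    If even the slowest point exceeds the budget, stay at the slowest point, so the
    background task keeps running at reduced clocks instead of stopping.
    """
    for freq in CPU_OPPS_GHZ:  # ordered fastest to slowest
        if cpu_power(freq) + gpu_watts <= budget:
            return freq
    return CPU_OPPS_GHZ[-1]


if __name__ == "__main__":
    # GPU idle, GPU moderately busy, and GPU near 4 W as in Modern Combat 3
    for gpu_w in (0.0, 2.0, 4.0):
        print(f"GPU {gpu_w:.1f} W -> CPU at {pick_cpu_freq(gpu_w):.1f} GHz")
```

With the GPU idle, the sketch lets the CPU run at 1.7GHz; with the GPU drawing around 4W, no operating point fits and it falls back to the 800MHz floor, loosely matching the step down seen in the graph.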

Now this is a fairly contrived scenario, but it's necessary for understanding the behavior of the Exynos 5250. The SoC is allowed to reach 8W, making that its max TDP by conventional definitions, but it seems to strive for around 4W as its typical power under load. Why are these two numbers important? With Haswell, Intel has demonstrated the interest in (and ability to deliver) a part with an 8W TDP. In practice, Intel would need to deliver about half that to really fit into a device like the Nexus 10, but all of a sudden that seems a lot more feasible. Samsung hits 4W by throttling its CPU cores when both the CPU and GPU subsystems are being taxed; I wonder what an 8W Haswell would look like in a similar situation...
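To make the gap between the two numbers concrete, the Python sketch below summarizes a sampled SoC power trace: the peak corresponds to the 8W "max TDP" sense used above, while the mean and the share of time spent over budget describe typical behavior. The sample trace is made up for illustration and is not data from the Exynos 5250 measurements.

```python
from collections.abc import Sequence


def summarize_trace(samples_w: Sequence[float], budget_w: float = 4.0) -> tuple[float, float, float]:
    """Summarize evenly spaced SoC power samples in watts.

    Returns (peak, mean, fraction_over_budget):
    - peak: the highest sample, i.e. the "max TDP" sense used in the article
    - mean: average power, closer to "typical power under load"
    - fraction_over_budget: share of samples strictly above budget_w
    """
    if not samples_w:
        raise ValueError("need at least one power sample")
    peak = max(samples_w)
    mean = sum(samples_w) / len(samples_w)
    over = sum(1 for p in samples_w if p > budget_w) / len(samples_w)
    return peak, mean, over


if __name__ == "__main__":
    # Hypothetical trace, one sample per second: a brief 8 W spike before throttling
    trace = [4.0, 8.0, 4.0, 4.0, 4.0, 4.0, 4.0, 4.0]
    peak, mean, over = summarize_trace(trace)
    print(f"peak {peak:.1f} W, mean {mean:.2f} W, over budget {over:.1%} of the time")
```

In this made-up trace the single 8W spike sets the peak but barely moves the mean, which is the distinction between max TDP and typical power under load that the paragraph above draws.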

140 Comments


djgandy - Friday, January 4, 2013

People care about battery life though. If you can run faster and go idle lower, you can save more power. The next few years will be interesting, and once everyone is on the same process, there will be fewer variables when determining who has the most efficient SoC.
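The race-to-idle argument in this comment can be checked with simple arithmetic: over a fixed time window, energy is active power times active time plus idle power times the remaining time. The Python sketch below compares two hypothetical chips; all of the wattages and durations are made-up illustration values, not measurements from this article.

```python
def window_energy_j(active_w: float, active_s: float, idle_w: float, window_s: float) -> float:
    """Energy in joules over a fixed window: busy for active_s seconds, idle for the rest."""
    if not 0 <= active_s <= window_s:
        raise ValueError("task must finish inside the window")
    return active_w * active_s + idle_w * (window_s - active_s)


if __name__ == "__main__":
    # Hypothetical chips running the same task inside a 10 s window.
    # Fast chip: twice the active power, finishes in 2 s, lower idle power.
    fast = window_energy_j(active_w=4.0, active_s=2.0, idle_w=0.05, window_s=10.0)
    # Slow chip: half the active power, but takes 5 s and idles higher.
    slow = window_energy_j(active_w=2.0, active_s=5.0, idle_w=0.10, window_s=10.0)
    print(f"fast chip: {fast:.2f} J, slow chip: {slow:.2f} J")
```

With these numbers the faster chip uses less energy despite drawing twice the power while active; the result flips if its speed advantage or its idle-power advantage shrinks enough, so the argument depends on the actual figures.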

DesktopMan - Friday, January 4, 2013

"and once everyone is on the same process"

Intel will keep their fabs, so unless everybody else suddenly starts using theirs, it doesn't look like this will ever happen. Even at the same transistor size there are large differences between fab methods.

jemima puddle-duck - Friday, January 4, 2013

Everyone cares about battery life, but it would take orders of magnitude of improvement for people to actually go out of their way and demand it.

Wolfpup - Friday, January 4, 2013

No, it wouldn't. People want new devices all the time for far less. And Atom swaps in for ARM pretty easily on Android, and is actually a huge selling point on the Windows side, given it can just plain do a lot more than ARM.

DesktopMan - Friday, January 4, 2013

The same power tests during hardware-based video playback would also be very useful. I'm disappointed in the playback time I get on the Nexus 10, and I'm not sure if I should blame the display, the SoC, or both.

djgandy - Friday, January 4, 2013

It's probably the display. Video decode usually shuts most things off except the video decoder. Anand has already done video decode analysis in other articles.

jwcalla - Friday, January 4, 2013

You can check your battery usage meter to verify, but in typical usage the display takes up by far the largest share of power. In standby, it's the Wi-Fi and cellular radios that hit the battery the most. So SoC power efficiency is important, but the SoC is rarely the top offender.

Drazick - Friday, January 4, 2013

Why don't you keep it updated?

iwod - Friday, January 4, 2013

I don't think anyone, or at least any AnandTech reader with some technical knowledge, has ever doubted what Intel is able to come up with: a low-power SoC that matches or even beats the competition in both power and performance. Give it time and Intel will get there. I don't think anyone would disagree with that.

But I don't think that is Intel's problem at all. The problem is how they are going to sell this chip when Apple and Samsung are making their own for less than $20. Samsung owns nearly a majority of the Android market, which means there is zero chance they will use an Intel SoC, since they design AND manufacture the chip themselves. And with Samsung owning the top end of the market, the lower end is being filled by EVEN cheaper ARM SoCs.

So while Intel may have the best SoC 5 years down the road, I just don't see how they fit into the smartphone market. (Tablets would be a different story, and they should do alright there...)

jemima puddle-duck - Friday, January 4, 2013

Exactly. Sometimes, whilst I enjoy reading these articles, it feels like the "How many angels can dance on the head of a pin" argument. Everyone knows Intel will come up with the fastest processor eventually. But why are we always told to wait for the next generation? It's just PR. Enjoyable PR, but PR nonetheless.