Plextor Updates The Firmware on M5 Pro: Promises Increased Performance, We Test It

by Kristian Vättö on December 10, 2012 2:30 PM EST

Earlier this week Plextor put out a press release about a new firmware for the M5 Pro SSD. The new 1.02 firmware is branded "Xtreme" and Plextor claims increases in both sequential write and random read performance. We originally reviewed the M5 Pro back in August and it did well in our tests, but with new firmware out it's time to revisit the drive. It's still the only consumer SSD based on Marvell's 88SS9187 controller, and it's one of the few that uses Toshiba's 19nm MLC NAND, as most manufacturers are sticking with Toshiba's 24nm MLC for now.

It's not unheard of for manufacturers to release firmware updates that improve performance even after the product has made it to market. OCZ's Vertex 4 is among the best known examples because OCZ provided not just one but two firmware updates that increased performance by a healthy margin. The SSD space is no stranger to aggressive launch schedules that force products out before they're fully baked. Fortunately, a lot can be done via firmware updates.

The new M5 Pro firmware is already available at Plextor's site and the update should not be destructive, although we still strongly suggest that you have an up-to-date backup before flashing the drive. I've compiled the differences between the new 1.02 firmware and older versions in the table below:

Plextor M5 Pro with Firmware 1.02 Specifications (older firmware -> 1.02)

| Capacity | 128GB | 256GB | 512GB |
| --- | --- | --- | --- |
| Sequential Read | 540MB/s | 540MB/s | 540MB/s |
| Sequential Write | 340MB/s -> 330MB/s | 450MB/s -> 460MB/s | 450MB/s -> 470MB/s |
| 4KB Random Read | 91K IOPS -> 92K IOPS | 94K IOPS -> 100K IOPS | 94K IOPS -> 100K IOPS |
| 4KB Random Write | 82K IOPS | 86K IOPS | 86K IOPS -> 88K IOPS |

On paper, the 1.02 firmware doesn't bring any major performance increases; the most you'll be getting is a 6% boost in random read speed. To test whether there are any other changes, I ran the updated M5 Pro through our regular test suite. I'm only including the most relevant tests in the article, but you can find all results in our Bench. The test system and benchmark explanations can be found in any of our SSD reviews, such as the original M5 Pro review.
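For readers who want to check the arithmetic behind that figure, here is a minimal Python sketch that computes the percentage change for each rated spec in the table above. The names `SPECS`, `pct_change` and `all_changes` are made up for this illustration, and cells that list only a single value are assumed to be unchanged between firmware versions.

```python
# Rated figures copied from the spec table: (older firmware, 1.02) per capacity.
# Assumption: a cell with a single value means the rating did not change.
SPECS = {
    "Sequential Write (MB/s)": {
        "128GB": (340, 330), "256GB": (450, 460), "512GB": (450, 470),
    },
    "4KB Random Read (K IOPS)": {
        "128GB": (91, 92), "256GB": (94, 100), "512GB": (94, 100),
    },
    "4KB Random Write (K IOPS)": {
        "128GB": (82, 82), "256GB": (86, 86), "512GB": (86, 88),
    },
}


def pct_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


def all_changes(specs=SPECS):
    """Map (metric, capacity) to the rated percentage change."""
    return {
        (metric, capacity): pct_change(before, after)
        for metric, capacities in specs.items()
        for capacity, (before, after) in capacities.items()
    }


if __name__ == "__main__":
    for (metric, capacity), change in all_changes().items():
        print(f"{metric:26} {capacity}: {change:+.1f}%")
```

The largest rated gain is the 94K to 100K IOPS random read figure on the 256GB and 512GB models, which works out to roughly 6%. The only regression on paper is the 128GB model's sequential write rating.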

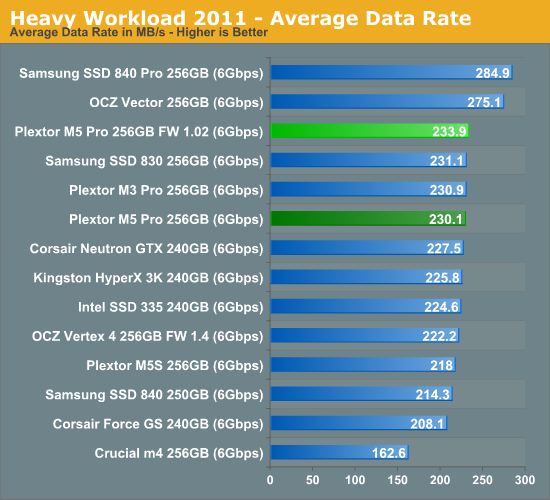

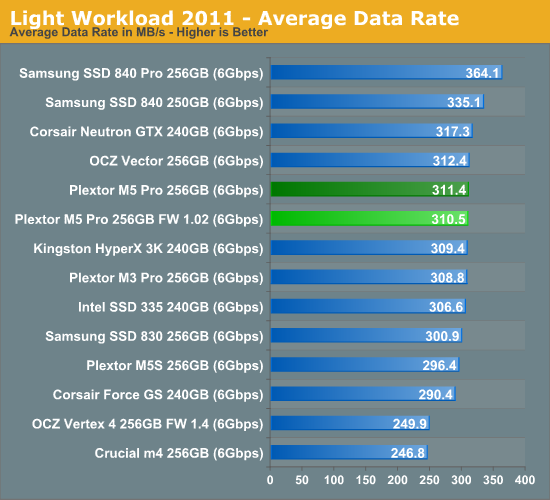

AnandTech Storage Bench

In our storage suites, the 1.02 firmware isn't noticeably faster. In our Heavy suite the new firmware pulls 3.8MB/s (1.7%) higher throughput, but that falls within the range of normal run-to-run variance. The same applies to the Light suite, where the new firmware is actually slightly slower.

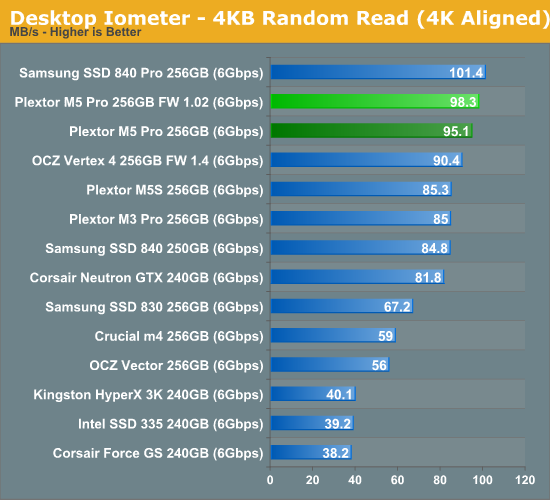

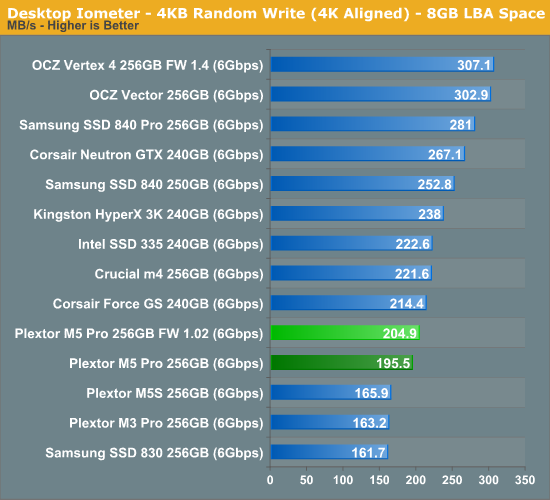

Random & Sequential Read/Write Speed

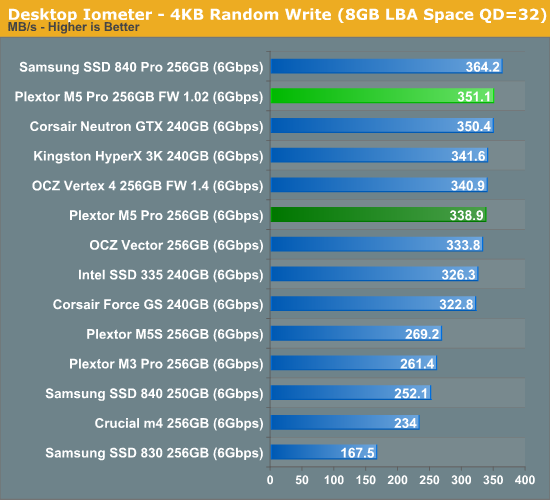

Random speeds are all up by 3-5%, though that's hardly going to impact real world performance.

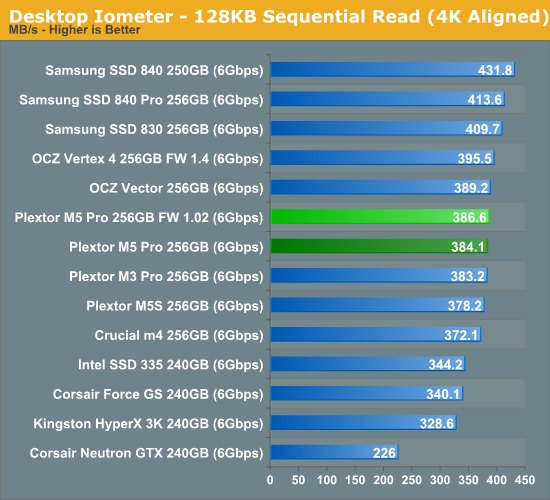

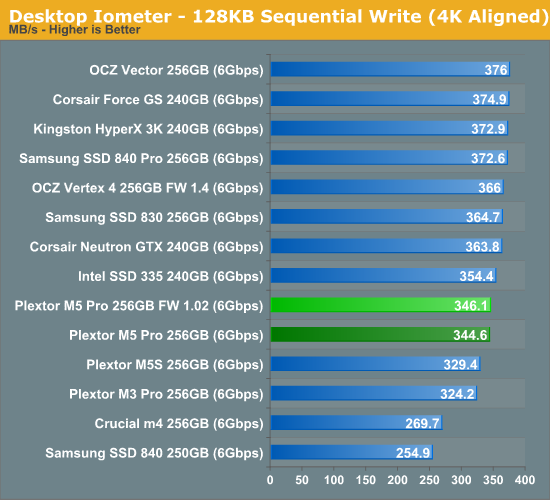

Sequential speeds are essentially unchanged and the M5 Pro is still a mid-range performer.

46 Comments

jwilliams4200 - Thursday, December 13, 2012

If SSDs were not to be used for heavy workloads, then the warranty would state the prohibited workloads. But they do not, other than (most of them) giving a maximum total amount of TB written.

The fact is that SSDs are used for many different applications and many different workloads.

Just because you favor certain workloads does not mean that everyone does.

Fortunately, not everyone thinks like you do on this subject.

JellyRoll - Thursday, December 13, 2012

I would ask you to explain why there is a difference between Enterprise class SSDs and consumer SSDs.

If there is no difference in the design, firmware and expectations between these two devices then I am sure that the multi-billion dollar companies such as Google are awaiting your final word on this with bated breath. Why, they have been paying much more for these SSDs that are designed for enterprise workloads for no reason!

You would think that these massive companies, such as Facebook, Amazon, Yahoo, and Google with all of their PhDs and humongous datacenters would have figured out that there is NO difference between these SSDs!

Why for their datacenters (which average 12 football fields in size) they can just deploy Plextor M5s! By the tens of thousands!

*hehe*

JellyRoll - Wednesday, December 12, 2012

Also, operating systems and applications that delete data, such as temp and swap files, that do not end up in the recycle bin are subject to immediate TRIM command issuance. These files, thousands of them, are deleted every user session and are never seen in the recycle bin. Care to guess how many temp and cache files Firefox deletes in an hour?

jwilliams4200 - Thursday, December 13, 2012

So now every SSD user must run a web browser and keep the temporary files on the SSD?

Who named you SSD overlord?

skytrench - Thursday, December 13, 2012

Though I side with JR in that the published IO consistency check doesn't show anything useful, a modified version might have been an interesting test (different block sizes, taking breaks from only writing, maybe a reduction of load to see at which level the drives still perform well, etc.).

I find the test disrespectful to Plextor, who after all have delivered an improved firmware, and Anand comes out of this one lacking in credibility.

JellyRoll - Thursday, December 13, 2012

The point is that, since these files are subject to immediate issuance of a TRIM command and this cannot be done when testing without a filesystem, you cannot attempt to emulate application performance with trace-based testing and the utility they are using. This doesn't just apply to browsers either; it applies to ALL applications, from games to office docs to Win7 (or 8) to a simple media player.

All applications, and operating systems, have these types of files that are subject to immediate TRIM commands.

gostan - Tuesday, December 11, 2012

Hi Vatto,

Is it possible to get the Samsung 830 through the performance consistency test? I know it's been discontinued but it's still available for sale in many places.

Cheers!

Kristian Vättö - Tuesday, December 11, 2012

I don't have any Samsung 830s at my place but I'll ask Anand to run it on the 830. He's currently travelling so it will probably take a while but I'll try to include it in an upcoming SSD review.

lunadesign - Tuesday, December 11, 2012

WOW! My jaw hit the floor when I first saw the 1st graph.

I totally understand that the consistency test isn't realistic for consumer usage. But what about people using the M5 Pro in low-to-moderate use servers? For example, I'm about to set up a new VMware dev server with four 256GB M5 Pros in RAID 10 (using LSI 9260-8i) supporting 8-10 VMs. In this config, I won't have TRIM support at all.

If I understand this consistency test, the drive is being filled and then it's being sent non-stop requests for 30 mins that overwrite data in 4KB chunks. This *seems* to be an ultra-extreme case. In reality, most server drives are getting a choppy, fluctuating mixture of read and write operations with random pauses so the GC has much more time to do its work than the consistency test allowed, right?

Should I be concerned about using the M5 Pro in low-to-moderate use server situations? Should I over-provision the drives by 25% to ameliorate this? Or, worse yet, are these drives so bad that I should return them while I can? Or is this test case so far away from what I'll likely be seeing that I should use the drives normally with no extra over-provisioning?

JellyRoll - Tuesday, December 11, 2012

For a non-TRIM environment this would be especially bad. You are constrained to the lowest speed of each SSD. In practical use in a scenario such as yours you will be receiving the bottom line of performance constantly. The RAID is only as fast as the slowest member, and with each drive dropping into the lower state frequently, one SSD will surely be in this low range constantly. Therefore your RAID will suffer.

These SSDs are simply not designed for this usage. They are tailored for low QD, and the test results show that clearly.