Inside the Titan Supercomputer: 299K AMD x86 Cores and 18.6K NVIDIA GPUs

by Anand Lal Shimpi on October 31, 2012 1:28 AM EST - Posted in CPUs, IT Computing, Cloud Computing, HPC, GPUs, NVIDIA

Introduction

Earlier this month I drove out to Oak Ridge, Tennessee to pay a visit to the Oak Ridge National Laboratory (ORNL). I'd never been to a national lab before, but my ORNL visit was for a very specific purpose: to witness the final installation of the Titan supercomputer.

ORNL is a US Department of Energy laboratory that's managed by UT-Battelle. Oak Ridge has a core competency in computational science, which makes it not only unique among DoE labs but also a natural home for a big supercomputer.

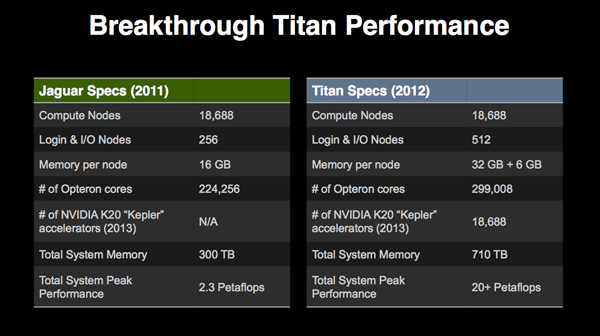

Titan is the latest supercomputer to be deployed at Oak Ridge, although it's technically a significant upgrade rather than a brand new installation. Jaguar, the supercomputer being upgraded, featured 18,688 compute nodes - each with a 12-core AMD Opteron CPU. Titan takes the Jaguar base, maintaining the same number of compute nodes, but moves to 16-core Opteron CPUs paired with an NVIDIA Kepler K20X GPU per node. The result is 18,688 CPUs and 18,688 GPUs, all networked together to make a supercomputer that should be capable of landing at or near the top of the TOP500 list.

We won't know Titan's final position on the list until the SC12 conference in the middle of November (position is determined by the system's performance in Linpack), but the recipe for performance is all there. At this point, its position on the TOP500 is dependent on software tuning and how reliable the newly deployed system has been.

Rows upon rows of cabinets make up the Titan supercomputer

Over the course of a day in Oak Ridge I got a look at everything from how Titan was built to the types of applications that are run on the supercomputer. Having seen a lot of impressive technology demonstrations over the years, I have to say that my experience at Oak Ridge with Titan is probably one of the best. Normally I cover compute as it applies to making things look cooler or faster on consumer devices. I may even dabble in talking about how better computers enable more efficient datacenters (though that's more Johan's beat). But it's very rare that I get to look at the application of computing to better understanding life, the world and universe around us. It's meaningful, impactful compute.

The Hardware

In the 15+ years I've been writing about technology, I've never actually covered a supercomputer. I'd never actually seen one until my ORNL visit. I have to say, the first time you see a supercomputer it's a bit anticlimactic. If you've ever toured a modern datacenter, it doesn't look all that different.

More Titan, the metal pipes carry coolant

Titan in particular is built from 200 custom 19-inch cabinets. These cabinets may look like standard 19-inch x 42RU datacenter racks, but what's inside is quite custom. Together, the cabinets that make up Titan require a room that's about the size of a basketball court.

The hardware comes from Cray. The Titan installation uses Cray's new XK7 cabinets, but it's up to the customer to connect together however many they want.

ORNL is actually no different from any other compute consumer: its supercomputers are upgraded on a regular basis to keep them from becoming obsolete. The pressure to stay up to date is even greater for supercomputers: after a period of time it actually costs more to run an older supercomputer than it would to upgrade the machine. Like modern datacenters, supercomputers are entirely power limited. Titan in particular will consume around 9 megawatts of power when fully loaded.

The upgrade cycle for a modern supercomputer is around 4 years. Titan's predecessor, Jaguar, was first installed back in 2005 but regularly upgraded over the years. Whenever these supercomputers are upgraded, old hardware is traded back in to Cray and a credit is issued. Although Titan reuses much of the same cabinetry and interconnects as Jaguar, the name change felt appropriate given the significant departure in architecture. The Titan supercomputer makes use of both CPUs and GPUs for compute. Whereas the latest version of Jaguar featured 18,688 12-core AMD Opteron processors, Titan keeps the total number of compute nodes the same (18,688) but moves to 16-core AMD Opteron 6274 CPUs. What makes the Titan move so significant, however, is that each 16-core Opteron is paired with an NVIDIA K20X (Kepler GK110) GPU.

A Titan compute board: 4 AMD Opteron (16-core CPUs) + 4 NVIDIA Tesla K20X GPUs

The transistor count alone is staggering. Each 16-core Opteron is made up of two 8-core dies in a single package, totaling 2.4B transistors built using GlobalFoundries' 32nm process. In CPU transistors alone, that works out to be 44.85 trillion transistors for Titan. Now let's talk GPUs.

NVIDIA's K20X is the server/HPC version of GK110, a part that never had a need to go to battle in the consumer space. The K20X features 2688 CUDA cores, totaling 7.1 billion transistors per GPU built using TSMC's 28nm process. With a 1:1 ratio of CPUs and GPUs, Titan adds another 132.68 trillion transistors to the bucket, bringing the total transistor count up to over 177 trillion transistors - for a single supercomputer.
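For anyone who wants to check the transistor math, here is a minimal Python sketch. It uses only the node count and per-chip transistor figures quoted above; the function and variable names are my own and purely illustrative.

```python
# Figures quoted in the article; names are illustrative.
NODES = 18_688                      # compute nodes, one CPU and one GPU each
CPU_TRANSISTORS = 2_400_000_000     # per 16-core Opteron
GPU_TRANSISTORS = 7_100_000_000     # per K20X


def transistor_totals(nodes=NODES, cpu=CPU_TRANSISTORS, gpu=GPU_TRANSISTORS):
    """Return (CPU total, GPU total, combined total) transistor counts."""
    cpu_total = nodes * cpu
    gpu_total = nodes * gpu
    return cpu_total, gpu_total, cpu_total + gpu_total


cpu_total, gpu_total, total = transistor_totals()
print(f"CPU transistors: {cpu_total / 1e12:.2f} trillion")   # 44.85
print(f"GPU transistors: {gpu_total / 1e12:.2f} trillion")   # 132.68
print(f"Combined:        {total / 1e12:.2f} trillion")       # 177.54
```

The combined figure lands at roughly 177.5 trillion, which matches the "over 177 trillion" total above.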

I often use Moore's Law to give me a rough idea of when desktop compute performance will make its way into notebooks and then tablets and smartphones. With Titan, I can't even begin to connect the dots. There's just a ton of computing horsepower available in this installation.

Transistor counts are impressive enough, but when you do the math on the number of cores it's even more insane. Titan has a total of 299,008 AMD Opteron cores. ORNL doesn't break down the number of GPU cores but if I did the math correctly we're talking about over 50 million FP32 CUDA cores. The total computational power of Titan is expected to be north of 20 petaflops.
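The core counts follow from the same per-node figures. The sketch below assumes, as the article does, one 16-core Opteron and one 2688-core K20X in every one of the 18,688 nodes; the names are illustrative.

```python
# Per-node figures quoted in the article; names are illustrative.
NODES = 18_688
CPU_CORES_PER_NODE = 16       # one 16-core Opteron per node
CUDA_CORES_PER_NODE = 2_688   # one K20X per node


def core_totals(nodes=NODES):
    """Return (total x86 cores, total CUDA cores) across the machine."""
    return nodes * CPU_CORES_PER_NODE, nodes * CUDA_CORES_PER_NODE


x86_cores, cuda_cores = core_totals()
print(f"x86 cores:  {x86_cores:,}")    # 299,008
print(f"CUDA cores: {cuda_cores:,}")   # 50,233,344
```

That is where the "over 50 million" CUDA core estimate comes from.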

Each compute node (CPU + GPU) features 32GB of DDR3 memory for the CPU and a dedicated 6GB of GDDR5 (ECC enabled) for the K20X GPU. Do the math and that works out to be 710TB of memory.
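The memory total can be reproduced the same way. One assumption worth flagging: the 710TB figure only works out if you treat 1TB as 1000GB. With 1024GB per TB the same capacity reads as about 693.5TB. A quick sketch, with illustrative names:

```python
# Per-node memory quoted in the article; names are illustrative.
NODES = 18_688
DDR3_GB_PER_NODE = 32    # CPU memory
GDDR5_GB_PER_NODE = 6    # GPU memory on the K20X


def memory_totals_gb(nodes=NODES):
    """Return (DDR3 total, GDDR5 total, combined total) in GB."""
    ddr3 = nodes * DDR3_GB_PER_NODE
    gddr5 = nodes * GDDR5_GB_PER_NODE
    return ddr3, gddr5, ddr3 + gddr5


ddr3_gb, gddr5_gb, total_gb = memory_totals_gb()
print(f"DDR3:  {ddr3_gb:,} GB")
print(f"GDDR5: {gddr5_gb:,} GB")
print(f"Total: {total_gb / 1000:.0f} TB (1 TB = 1000 GB)")   # 710
print(f"Total: {total_gb / 1024:.1f} TB (1 TB = 1024 GB)")   # 693.5
```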

System storage is equally impressive: there's a total of 10 petabytes of storage in Titan. The underlying storage hardware isn't all that interesting - ORNL uses 10,000 standard 1TB 7200 RPM 2.5" hard drives. The IO subsystem is capable of pushing around 240GB/s of data. ORNL is considering including some elements of solid state storage in future upgrades to Titan, but for its present needs there is no more cost effective solution for IO than a bunch of hard drives. The next round of upgrades will take Titan to around 20 - 30PB of storage, at peak transfer speeds of 1TB/s.
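To put the storage numbers in perspective, here is a rough back-of-the-envelope sketch. It takes the drive count, capacity and 240GB/s aggregate figure from above and assumes decimal units and an even spread of bandwidth across all drives. That is an idealization of mine, not something ORNL stated, and the names are illustrative.

```python
# Storage figures quoted in the article; decimal units assumed throughout.
DRIVES = 10_000
TB_PER_DRIVE = 1
AGGREGATE_GB_PER_S = 240


def storage_estimates(drives=DRIVES, tb_per_drive=TB_PER_DRIVE,
                      gb_per_s=AGGREGATE_GB_PER_S):
    """Return (capacity in PB, per-drive MB/s share, hours to stream it all)."""
    capacity_tb = drives * tb_per_drive
    capacity_pb = capacity_tb / 1000
    per_drive_mb_s = gb_per_s * 1000 / drives       # even split, idealized
    hours = capacity_tb * 1000 / gb_per_s / 3600    # full capacity at peak rate
    return capacity_pb, per_drive_mb_s, hours


capacity_pb, per_drive_mb_s, hours = storage_estimates()
print(f"Capacity:           {capacity_pb:.0f} PB")
print(f"Per-drive share:    {per_drive_mb_s:.0f} MB/s")
print(f"Full read at peak:  {hours:.1f} hours")
```

Under those assumptions each drive only has to contribute about 24MB/s to reach the aggregate figure, and streaming the full 10PB at peak rate would take on the order of half a day.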

Most workloads on Titan will be run remotely, so network connectivity is just as important as compute. There are dozens of 10GbE links inbound to the machine. Titan is also linked to the DoE's Energy Sciences Network (ESNET) 100Gbps backbone.

130 Comments

piroroadkill - Wednesday, October 31, 2012

That sounds like a downgrade, no matter how you slice it..

extide - Wednesday, October 31, 2012

x2 I was thinking the same, especially at only 2.2Ghz!! I bet they are ~flat on CPU power and all the gain is from the GPU's.

SunLord - Friday, November 2, 2012

HPC is all highly multi-threaded by it's very nature which just happens to be about the only thing bulldozer is some what good at

Jorange - Wednesday, October 31, 2012

I wonder how many Petaflops this beast would have achieved if it used Sandy Bridge EP class chips? Anandtech's review of the Opteron 6276 vs Sandy Bridge Xeon EP showed that Intel was far more performant.

SunLord - Friday, November 2, 2012

I doubt will make enough of difference to be worth it given the main focus is all on the cuda gpu compute side

CeriseCogburn - Saturday, November 10, 2012

The AMD crap cores probably cause huge bottlenecks and lag the entire system and wind up as a large loss overall as they waste computer time.

Jorange - Wednesday, October 31, 2012

In a world in which millions of morons are enthralled by Honey Boo Boo and her band of genetic regressionists, it is great that scientists are advancing our understanding of the Universe. Without those 1%, one can only imagine the state our planet would be in.

IanCutress - Wednesday, October 31, 2012

I ported some Brownian motion code from CPU to GPU for my thesis and got a considerable increase (4000x over previously published data). Best thing was that the code scaled with GPUs. Having access to 20k GPUs with 2688 CUDA cores would just be gravy. Especially when simulating 10^12 and beyond independent particles.

maximumGPU - Wednesday, October 31, 2012

4000x ?! i don't think i've ever seen such a speedup, was that simply from 1 cpu to a 1 gpu? i ported a monte carlo risk simulation (which also uses brownian motion, although i suspect for different purposes than yours) and saw about 300-400X speed up, thought that was at the top end of what you can get in terms of speed increases.

IanCutress - Thursday, November 1, 2012

It helped that the previously published data was a few generations back, so I had some Moore's Law advantage. The type of simulation for that research was essentially dropped there and then because it was so slow, and no-one had ever bothered to do it on newer hardware. I think a 2.2 GHz Nehalem single core simulation of my code compared to a GTX480 version of the code was 350x jump or so. Make that 16 cores vs 1 GPU (for a DP system) and it makes it more like 23x.