Memory Performance: 16GB DDR3-1333 to DDR3-2400 on Ivy Bridge IGP with G.Skill

by Ian Cutress on October 18, 2012 12:00 PM EST - Posted in Memory, G.Skill, Ivy Bridge, DDR3

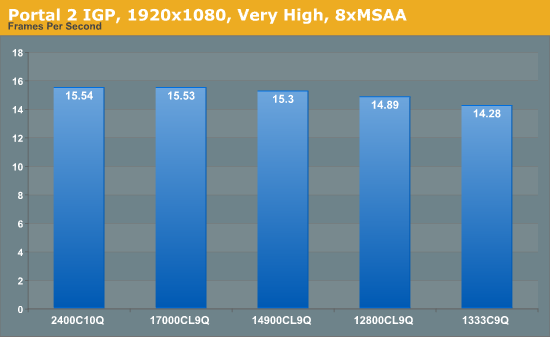

Portal 2

A stalwart of the Source engine, Portal 2 was the big hit of 2011, following on from the original award-winning Portal. In our testing suite, Portal 2 performance should be indicative of CS:GO performance to a certain extent. Here we test Portal 2 at 1920x1080 with High/Very High graphical settings.

Portal 2 mirrors previous testing, although the percentage increases in frame rate are modest: moving from 1333 to 1600 gives a 4.3% increase, but moving from 1333 to 2400 gives only an 8.8% increase.
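To put those two Portal 2 figures in context, the gain of the 1600 to 2400 step alone can be backed out from them, since both are quoted relative to the same 1333 baseline. The following is a minimal sketch of that arithmetic; the function name `relative_gain` is ours and purely illustrative, and the only inputs are the two percentages quoted above.

```python
def relative_gain(gain_a: float, gain_b: float) -> float:
    """Fractional gain going from configuration A to configuration B,
    given each one's fractional gain over a shared baseline."""
    return (1 + gain_b) / (1 + gain_a) - 1


# Portal 2: 1333 -> 1600 is +4.3%, 1333 -> 2400 is +8.8% (figures from the text).
step_1600_to_2400 = relative_gain(0.043, 0.088)
print(f"1600 -> 2400: {step_1600_to_2400:.1%}")
```

Under this reading, the whole climb from 1600 to 2400 is worth roughly as much as the single step from 1333 to 1600.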

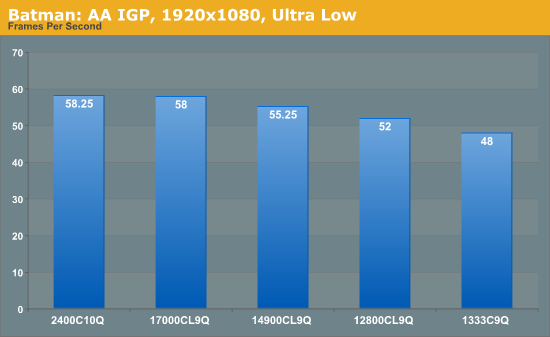

Batman: Arkham Asylum

Made in 2009, Batman: AA uses Unreal Engine 3 to create what was called “the Most Critically Acclaimed Superhero Game Ever”, as recognized in the Guinness World Records books with an average score of 91.67 from reviewers. The game boasts several awards including a BAFTA. Here we use the in-game benchmark at the lowest specification settings without PhysX at 1920x1080. Results are reported to the nearest FPS, so we take four runs and average the final three, as the first result is sometimes as much as 33% higher than normal.
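The run-averaging procedure described above can be sketched in a few lines. This is only an illustration of the method, not the script used for the review; the function name `benchmark_score` and the sample frame rates are hypothetical.

```python
from statistics import mean


def benchmark_score(runs_fps, discard=1):
    """Average the runs after dropping the first `discard` results,
    which may be inflated relative to the steady-state runs."""
    if len(runs_fps) <= discard:
        raise ValueError("need more runs than the number discarded")
    return mean(runs_fps[discard:])


# Hypothetical data: four runs, the first one about 33% above the rest.
sample_runs = [80, 60, 61, 59]
score = benchmark_score(sample_runs)
print(score)
```

Dropping the first run keeps a one-off outlier from skewing a result that is only reported to the nearest FPS.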

Batman: AA shows some of the best increases of any application in our testing. The jumps from 1333 C9 to 1600 C9 and then to 1866 C9 give an 8% boost followed by another 7%, ending with a 21% increase in frame rates moving from 1333 C9 to 2400 C10.

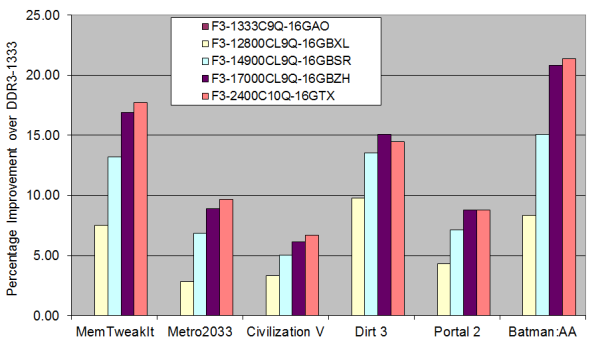

Overall IGP Results

Taking all our IGP results gives us the following graph:

The only game that beats the MemTweakIt predictions is Batman: AA, but most games follow a similar shape of increases, just scaled differently. Bearing in mind the price differences between the kits, if IGP is your goal then either the 1600 C9 or the 1866 C9 kit seems best in terms of bang-for-buck, but 2133 C9 will provide extra performance if the budget stretches that far.

114 Comments


Calin - Friday, October 19, 2012 - link

I remember the times when I had to select the speed of the processor (and even that of the processor's bus) with jumpers or DIP switches... It wasn't even so long ago, I'm sure anandtech.com has articles with mainboards with DIP switches or jumpers (jumpers were soooo Pentium :p but DIP switches were used in some K6 mainboards IIRC)

Ecliptic - Friday, October 19, 2012 - link

Great article comparing different speed RAM at similar timings, but I'd be interested in seeing results at different timings. For example, I have some DDR3-1866 RAM with these XMP timings:

1333 @ 6-6-6-18

1600 @ 8-8-8-24

1866 @ 9-9-9-27

The question I have is whether it is better to run it at full speed, or to lower the speed and use tighter timings?

APassingMe - Friday, October 19, 2012 - link

+1. +2, if I can get away with it. I've always wondered the same thing. I have seen some minor formulas designed to compare... something like frequency divided by timing, in order to get a comparable number. But that is pure theory for the most part; I would like to see how the differences affect different systems and loads in the real world.
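One common form of the "frequency versus timing" comparison mentioned above is to convert CAS latency from clock cycles into nanoseconds. The sketch below assumes that is the kind of formula meant; it uses the three XMP profiles quoted earlier in the thread, takes the first timing value as CL, and relies on the DDR convention that the memory clock is half the rated data rate. The function name is illustrative.

```python
def cas_latency_ns(data_rate_mts: float, cas_cycles: int) -> float:
    """CAS latency in nanoseconds.

    DDR transfers twice per clock, so the memory clock in MHz is half
    the rated data rate (for example, DDR3-1600 runs an 800 MHz clock).
    """
    clock_mhz = data_rate_mts / 2
    return cas_cycles / clock_mhz * 1000


# XMP profiles quoted in the thread: (data rate, CAS latency)
profiles = [(1333, 6), (1600, 8), (1866, 9)]
for rate, cl in profiles:
    print(f"DDR3-{rate} CL{cl}: {cas_latency_ns(rate, cl):.2f} ns")
```

This number captures access latency only. It says nothing about bandwidth, which rises with the data rate regardless of timings, so it complements real-world testing and does not replace it.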

Spunjji - Friday, October 19, 2012 - link

But in all seriousness, I would find that to be much more useful - it's more likely to actually be used for IGP gaming. If you could go as far as to show the possible practical benefits of the higher-speed RAM (e.g. new settings/resolutions that become playable) that would be spiffing.

vegemeister - Friday, October 19, 2012 - link

Stop using 2 pass for benchmarks. Nobody is trying to fit DVD rips onto CD-Rs anymore. Exact file size *does not matter*. Using the same CRF for every file in a set (say, a season of a television series) produces a much better result and takes less time (you pretty much avoid the first pass).

IanCutress - Friday, October 19, 2012 - link

The 2-pass is a feature of Greysky's x264 benchmark. Please feel free to email him if you would like him to stop doing 2-pass. Or, just look at the 1st pass results if the 2nd pass bothers you.

Ian

rigel84 - Friday, October 19, 2012 - link

Hi, I don't know if I somehow skipped it in the article, but if I buy a 3570K and some 1866MHz memory, wouldn't I have to overclock the CPU in order for them to run at that speed? I'm pretty sure I had to overclock my RAM on my P4 2.4GHz in order to use the extra MHz.. Does my memory fail me or have things changed?

IanCutress - Friday, October 19, 2012 - link

No, you do not have to overclock the CPU. This has not been the case since the early days :D. Modern systems have a BIOS option to adjust the memory strap (1333/1600/1866 et al.) as required. On Intel systems and these memory kits, all that is needed is to set XMP - you need not worry about voltages or sub-timings unless you are overclocking the memory.

Ian

CaedenV - Friday, October 19, 2012 - link

As there is an obvious difference with RAM speed for onboard graphics, the next obvious question is how much memory is needed to prevent the system from throwing things back on the HDD?

The reason I ask is that 16GB, while relatively cheap today, is still a TON of RAM by today's standards, and people on a budget where they are playing with an IGP are not going to be able to afford an i7, much less be willing to fork over ~$100 for system memory. However, if there is no performance hit moving down to 8GB of system memory, it becomes much more affordable for these users to purchase better performing RAM, because the price points are even closer together between the performance tiers. As I understand memory usage, there should be no performance hit so long as there is more memory available than is actively being used by the game, so the question is how much is really needed before hitting that need for more memory? Is the old standard of 4GB still enough? Or do people need to step up to 8GB? Or, if nothing is getting passed on to a dedicated GPU, do IGP users really need that glut of 16GB of RAM?

Lastly, I remember my first personal build being a Pentium 3 1GHz machine for a real time editing machine for college. I remember it being such an issue because the Pentium 4 was out, but was tied to Rambus memory which had a high burst rate, but terrible sustained performance, and so I agonized for a few months about sticking with the older but cheaper platform that had consistent performance, vs moving up to the newer (and terribly more expensive) P4 setup which would perform great for most tasks, but not as well for rendering projects. Anywho, I ended up getting the P3 with 1GB of DDR 133 memory. I cannot remember the actual price off hand (2001), but I do remember that the system memory was the 2nd most expensive part of the system (2nd to the real time rendering card which was $800). It really is mindblowing how much better things have gotten, and how much cheaper things are, and one wonders how long prices can remain this low with sales volumes dropping before companies start dropping out and we have 2-3 companies that all decide to up prices in lock step.

IanCutress - Friday, October 19, 2012 - link

With memory being relatively cheap, on a standard DDR3 system running Windows 7, 8 GB would be the minimum recommendation at this level. As I mentioned in my review, in my workload the most I have ever peaked at was 7.7 GB, and that was while playing a 1080p game with all the extras alongside lots of Chrome tabs and documents open at the same time.

Ideally this review and comparison should be taken from the perspective that you should know how much memory you are using. For 99.9% of the populace, that usually means 16GB or less. Most can get away with 8, and on a modern Windows OS I wouldn't suggest anything less than that. 4GB might be ok, but that's what I have in my netbook and I sometimes hit that.

Ian