The NVIDIA GeForce GTX 650 Ti Review, Feat. Gigabyte, Zotac, & EVGA

by Ryan Smith on October 9, 2012 9:00 AM EST

"Once more into the fray.

Into the last good fight I'll ever know."

-The Grey

At a pace just shy of a card a month, NVIDIA has been launching the GeForce 600 series part by part for a little over half a year now. What started with the GeForce GTX 680 in March and most recently saw the launch of the GeForce GTX 660 will finally be coming to an end today with the 8th and what is likely the final retail GeForce 600 series card, the GeForce GTX 650 Ti.

Last month we saw the introduction of NVIDIA’s 3rd Kepler GPU, GK106, which takes its place between the high-end GK104 and NVIDIA’s low-end/mobile gem, GK107. At the time NVIDIA launched just a single GK106 card, the GTX 660, but of course NVIDIA never launches just one product based on a GPU – if nothing else the economics of semiconductor manufacturing dictate a need for binning, and by extension products to attach to those bins. So it should come as no great surprise that NVIDIA has one more desktop GK106 card, and that card is the GeForce GTX 650 Ti.

The GTX 650 Ti is the aptly named successor to 2011’s GeForce GTX 550 Ti, and will occupy the same $150 price point that the GTX 550 Ti launched into. It will sit between the GTX 660 and the recently launched GTX 650, and despite the much closer similarities to the GTX 660 NVIDIA is placing the card into their GTX 650 family and pitching it as a higher performance alternative to the GTX 650. With that in mind, what exactly does NVIDIA’s final desktop consumer launch of 2012 bring to the table? Let’s find out.

| | GTX 660 | GTX 650 Ti | GTX 650 | GTX 550 Ti |
|---|---|---|---|---|
| Stream Processors | 960 | 768 | 384 | 192 |
| Texture Units | 80 | 64 | 32 | 32 |
| ROPs | 24 | 16 | 16 | 16 |
| Core Clock | 980MHz | 925MHz | 1058MHz | 900MHz |
| Boost Clock | 1033MHz | N/A | N/A | N/A |
| Memory Clock | 6.008GHz GDDR5 | 5.4GHz GDDR5 | 5GHz GDDR5 | 4.1GHz GDDR5 |
| Memory Bus Width | 192-bit | 128-bit | 128-bit | 192-bit |
| VRAM | 2GB | 1GB/2GB | 1GB | 1GB |
| FP64 | 1/24 FP32 | 1/24 FP32 | 1/24 FP32 | 1/12 FP32 |
| TDP | 140W | 110W | 64W | 116W |
| GPU | GK106 | GK106 | GK107 | GF116 |
| Transistor Count | 2.54B | 2.54B | 1.3B | 1.17B |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 40nm |
| Launch Price | $229 | $149 | $109 | $149 |

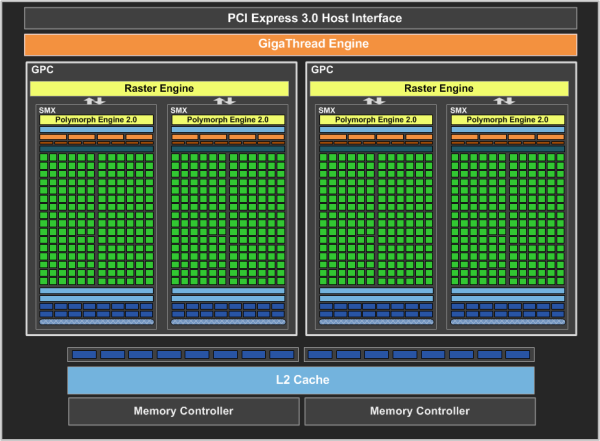

Coming from the GTX 660 and its fully enabled GK106 GPU, NVIDIA has cut several features and functional units in order to bring the GTX 650 Ti down to their desired TDP and price. As is customary for lower tier parts, the GTX 650 Ti ships with a binned GK106 GPU with some functional units disabled, and it unfortunately takes a big hit in the process. For the GTX 650 Ti NVIDIA has opted to disable both SMXes and ROP/L2/memory clusters, with a greater emphasis on the latter.

On the shader side of the equation NVIDIA is disabling just a single SMX, giving GTX 650 Ti 768 CUDA cores and 64 texture units. On the ROP/L2/memory side of things however NVIDIA is disabling one of GK106’s three clusters (the minimum granularity for such a change), so coming from the GTX 660 the GTX 650 Ti will have much less memory bandwidth and ROP throughput than its older sibling.

Taking a look at clockspeeds, along with the reduction in functional units there has also been a reduction in clockspeeds across the board. The GTX 650 Ti will ship at 925MHz, 55MHz lower than the GTX 660. Furthermore NVIDIA has decided to limit GPU boost functionality to the GTX 660 and higher families, so the GTX 650 Ti will actually run at 925MHz and no higher. Because the GTX 660 boosts to 1033MHz, the lack of a boost clock means the effective difference is closer to 100MHz. On the other hand the lack of min-maxing here by NVIDIA will have some good ramifications for overclocking, as we’ll see. Meanwhile the memory clock will be at 5.4GHz, a drop that at only 600MHz below NVIDIA’s standard-bearer Kepler memory clock of 6GHz is not nearly as significant as the loss of memory bandwidth from the memory bus width reduction.
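To put the memory numbers in perspective, theoretical peak bandwidth can be estimated as bus width multiplied by the effective memory data rate. The following is a back-of-the-envelope sketch using only the figures from the spec table above; the helper name `peak_bandwidth_gbs` is our own, and these are theoretical peaks rather than measured throughput.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_ghz: float) -> float:
    """Theoretical peak bandwidth in GB/s: bytes per transfer * effective GT/s."""
    return bus_width_bits / 8 * data_rate_ghz


# Figures taken from the spec table (bus width in bits, effective GDDR5 rate in GHz).
gtx_660_bw = peak_bandwidth_gbs(192, 6.008)
gtx_650_ti_bw = peak_bandwidth_gbs(128, 5.4)

# Split the overall loss into its two contributing factors.
clock_factor = 5.4 / 6.008   # effect of the lower memory clock alone
bus_factor = 128 / 192       # effect of the narrower memory bus alone

print(f"GTX 660:    {gtx_660_bw:.1f} GB/s")
print(f"GTX 650 Ti: {gtx_650_ti_bw:.1f} GB/s")
print(f"Clock alone: {clock_factor:.0%}, bus alone: {bus_factor:.0%}, "
      f"combined: {gtx_650_ti_bw / gtx_660_bw:.0%}")
```

By this estimate the memory clock reduction on its own costs roughly a tenth of the GTX 660's bandwidth, while the move from a 192-bit to a 128-bit bus costs a third, which is why the bus width is the far bigger factor.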

Overall this gives the GTX 650 Ti approximately 72% of the shading/texturing performance, 60% of the ROP throughput, and 60% of the memory bandwidth of the GTX 660. Meanwhile compared to the GTX 650 the GTX 650 Ti has 175% of the shading/texturing performance, 108% of the memory bandwidth, and 87% of the ROP throughput of its smaller sibling. For what little tradition there is, NVIDIA’s x50 parts are geared towards 1680x1050/1600x900 resolutions. And while NVIDIA is trying to stretch that definition due to the popularity of 1920x1080 monitors, the loss of the ROP/memory cluster all but closes the door on the GTX 650 Ti’s 1080p ambitions. The GTX 650 Ti will be for all intents and purposes NVIDIA’s fastest sub-1080p Kepler card.
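For readers who want to check the percentages, they can be approximated from the spec table as unit count multiplied by clockspeed. The sketch below is one way to do so; the `Card` class and `relative_throughput` helper are illustrative names of our own, and it assumes the GTX 660 is evaluated at its 1033MHz boost clock, which is the choice that lines up with the figures quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    cuda_cores: int
    rops: int
    clock_mhz: float   # clock used for comparison (boost clock where one exists)
    bus_bits: int
    mem_ghz: float     # effective GDDR5 data rate


def relative_throughput(card: Card, baseline: Card) -> dict:
    """Theoretical throughput of `card` as a fraction of `baseline`."""
    return {
        "shading": (card.cuda_cores * card.clock_mhz)
                   / (baseline.cuda_cores * baseline.clock_mhz),
        "rop": (card.rops * card.clock_mhz)
               / (baseline.rops * baseline.clock_mhz),
        "bandwidth": (card.bus_bits * card.mem_ghz)
                     / (baseline.bus_bits * baseline.mem_ghz),
    }


# Assumption: the GTX 660 is compared at its boost clock rather than its base clock.
gtx_660 = Card("GTX 660", 960, 24, 1033, 192, 6.008)
gtx_650_ti = Card("GTX 650 Ti", 768, 16, 925, 128, 5.4)
gtx_650 = Card("GTX 650", 384, 16, 1058, 128, 5.0)

vs_660 = relative_throughput(gtx_650_ti, gtx_660)
vs_650 = relative_throughput(gtx_650_ti, gtx_650)

for label, result in (("vs. GTX 660", vs_660), ("vs. GTX 650", vs_650)):
    summary = ", ".join(f"{metric} {value:.0%}" for metric, value in result.items())
    print(f"{label}: {summary}")
```

These are paper figures only; they say nothing about how any particular game balances shading, ROP, and memory load.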

Moving on, it was interesting to find out that NVIDIA is not going to be disabling SMXes for the GTX 650 Ti in a straightforward manner. Because of GK106’s asymmetrical design and the pigeonhole principle – 5 SMXes spread over 3 GPCs – NVIDIA is going to be shipping GTX 650 Ti certified GPUs with both 2 GPCs and 3 GPCs, depending on which GPC houses the defective SMX that NVIDIA will be disabling. To the best of our knowledge this is the first time NVIDIA has done something like this, particularly since Fermi cards had far more SMs per GPC. Despite the fact that 3 GPC versions of the GTX 650 Ti should technically have a performance advantage due to the extra Raster Engine, NVIDIA tells us that the performance is virtually identical to the 2 GPC version. Ultimately since GTX 650 Ti is going to be ROP bottlenecked anyhow – and hence lacking the ROP throughput to take advantage of that 3rd Raster Engine – the difference should be just as insignificant as NVIDIA claims.
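The 2 GPC versus 3 GPC outcome can be illustrated by enumerating the possibilities. The sketch below assumes GK106's five SMXes are arranged as 2 + 2 + 1 across its three GPCs, and that a GPC left with no working SMX is disabled entirely; both are our assumptions for illustration, and the function name is hypothetical.

```python
# Assumed layout: number of SMXes in each of GK106's three GPCs.
FULL_GK106 = (2, 2, 1)


def layouts_after_disabling_one_smx(layout):
    """Return (new_layout, active_gpc_count) for each GPC that could lose an SMX."""
    outcomes = []
    for gpc_index, smx_count in enumerate(layout):
        if smx_count == 0:
            continue  # nothing left to disable in this GPC
        new_layout = list(layout)
        new_layout[gpc_index] -= 1
        # Assumption: a GPC with zero active SMXes is switched off as a whole.
        active_gpcs = sum(1 for count in new_layout if count > 0)
        outcomes.append((tuple(new_layout), active_gpcs))
    return outcomes


outcomes = layouts_after_disabling_one_smx(FULL_GK106)
for new_layout, active_gpcs in outcomes:
    print(f"SMXes per GPC {new_layout}: {sum(new_layout)} SMXes, {active_gpcs} GPCs")
```

Under these assumptions, losing an SMX in either of the two-SMX GPCs leaves all three GPCs active, while losing the lone SMX in the third GPC leaves only two, so the same 4 SMX product can ship in either configuration.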

Meanwhile when it comes to power consumption the GTX 650 Ti is being given a TDP of 110W, some 30W lower than the GTX 660. Even compared to the GTX 550 Ti this is still a hair lower (116W vs. 110W), while the gap between the GTX 650 Ti and GTX 650 will be 46W. Idle power consumption on the other hand will be virtually unchanged, with the GTX 650 Ti maintaining the GTX 660’s 5W standard.

As NVIDIA’s final consumer desktop GeForce 600 card for the year, NVIDIA is setting the MSRP of the 1GB card at $150, between the $109 GTX 650 and the $229 GTX 660. This is another virtual launch, with partners going ahead with their own designs from the start. NVIDIA’s reference design will not be directly sold, but most of the retail boards will be very similar to NVIDIA’s reference card anyhow, implementing a single-fan open air cooler like NVIDIA’s. PCBs should also be similar; 2 of the 3 retail cards we’re looking at use the reference PCB, which on a side note is identical to the GTX 650 reference PCB as GTX 650 Ti and GTX 650 are pin compatible. Meanwhile similar to the GTX 660 Ti launch, partners will be going ahead with a mix of memory capacities, with many partners offering both 1GB and 2GB cards.

At launch the GTX 650 Ti will be facing competition from both last-generation GeForce cards and current-generation Radeon cards. The GeForce GTX 560 is currently going for almost exactly $150, making it direct competition for the GTX 650 Ti. The 560 cannot match the GTX 650 Ti’s power consumption, but thanks to its ROP performance and memory bandwidth it’s a potent competitor for rendering performance.

Meanwhile the Radeon competition will be the tag-team of the 7770 and the 7850. The 7770 is not nearly as powerful as the GTX 650 Ti, but with prices at-or-below $119 it significantly undercuts the GTX 650 Ti. Meanwhile the Pitcairn based 7850 1GB can more than give the GTX 650 Ti a run for its money, but is priced on average $20 higher at $169, and as the 1GB version is a bit of a niche product for AMD the card selection won’t be as great.

To sweeten the deal NVIDIA has a new game bundle promotion starting up for the GTX 650 Ti. Retailers will be bundling vouchers for Assassin’s Creed III with GTX 650 Ti cards in North America and in Europe. Unreleased games tend to be good deals value-wise, but in the case of Assassin’s Creed III this also means waiting nearly 2 months for the PC version of the game to ship.

Fall 2012 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| Radeon HD 7950 | $329 | |
| | $299 | GeForce GTX 660 Ti |
| Radeon HD 7870 | $239/$229 | GeForce GTX 660 |
| Radeon HD 7850 2GB | $189 | |
| Radeon HD 7850 1GB | $169 | GeForce GTX 650 Ti 2GB |
| | $149 | GeForce GTX 650 Ti 1GB |
| Radeon HD 7770 | $109 | GeForce GTX 650 |
| Radeon HD 7750 | $99 | GeForce GT 640 |

91 Comments

TheJian - Tuesday, October 9, 2012 - link

The 7850 is more money, it should perform faster. I'd expect nothing less. So what this person would end up with is 10-20% less perf (in the situation you describe) for 10-20% less money. ERGO, exactly what they should have got. So basically a free copy of AC3 :) Which is kind of the point. The 2GB beating the 650TI in that review is $20 more. It goes without saying you should get more perf for more $. What's your point?

Your wrong. IN the page you point to (just looking at that, won't bother going though them all), the 650TI 1GB scores 32fps MIN, vs. 7770 25fps min. So unplayable on 7770, but playable on 650TI. Nuff said. Spin that all you want all day. The card is worth more than 7770. That's OVER 20% faster 1920x1080 4xAA in witcher 2. You could argue for $139 maybe, but not with the AC3 AAA title, and physx support in a lot of games and more to come.

http://www.geforce.com/games-applications/physx

All games with physx so far. Usually had for free, no hit, see hardocp etc. Borderlands 2, Batman AC & AAsylum, Alice Madness returns, Metro2033, sacred2FA, etc etc...The list of games is long and growing. This isn't talked about much, nor what these effects at to the visual experience. You just can't do that on AMD. Considering these big titles (and more head to the site) use it, any future revs of these games (sequels etc) will likely use it also and the devs now have great experience with physx. This will continue to become a bigger issue as we move forward. What happens when all new games support this, and there's no hit for having it on (hardocp showed they were winning WITH it on for free)? There's quite a good argument even now that a LOT of good games are different in a good way on NV based cards. Soon it won't be a good argument, it will be THE argument. Unfortunately for AMD/Intel havok never took off and AMD has no money to throw at devs to inspire them to support it. NV continues to make games more fun on their hardware (either on android tegrazone stuff, or PC stuff). Much tighter connections with devs on the NV side. Money talks, unfortunately for AMD debt can't talk for you (accept to say don't buy my stock we're in massive debt) :)

jtenorj - Wednesday, October 10, 2012 - link

No, you are wrong. Lower end nvidia cards(whether this card falls into that category or not is debatable) generally cannot run physx on high, but require it to be set to medium, low or off. AMD cards can run physx in a number of games on medium by using the cpu without a massive performance hit. There hasn't been a lot of time since nvidia got physx tech from ageia for game developers to include it in titles because developement cycles are getting longer and longer. Still, I think most devs shy away from physx because it hurts the bottom line(more time to impliment= more money spend on salaries and later release, alienate 40% of potential market by making it so the full experience is not an option for them, losing more money). Take a look at the havok page on wikipedia vs the physx page(which is more extensive than what even nvidia lists on their own site). Havok and other software physics engines are used in the vast majority of released and soon to be released titles because they will work with anyone's card. I'm not saying HD7770 is better than gtx650ti(it is in fact worse than the new card), but the HD7850 is a far better value(especially the 2GB version). Finally, it is possible to add a low end geforce like gt610 to a higher end AMD primary as a dedicated physx card in some systems.

ocre - Thursday, October 11, 2012 - link

but it doest alienate 40% of the market.

You said this yourself:

"AMD cards can run physx in a number of games on medium by using the cpu without a massive performance hit."

Then try to turn it all around???? Clever? Doubtful!!

And this is what all the AMD fanboys cried about. Nvidia purposefully crippling physX on the CPU. Nvidia evil for making physX nvidia only. But now they have improved their physX code on the CPU and every single game as of late offers acceptable physX performance on AMD hardware via the CPU. Of course you will only get fully fledged GPU accelerated physX with Nvidia hardware but you cannot really expect more, can you?

Even if your not capable of seeing the improvements Nvidia made it is there. They have reached over and extended the branch to AMD users. They got physX to run better on multicore CPUs. They listened to complaints (even from AMD users) and made massive improvements.

This is the thing with nvidia. They are listening and steadily improving. Removing those negatives one at a time. Its gonna be hard for AMD fanboys to come up with negatives because nvidia is responding with every generation. PhysX is one example, the massive power efficiency improvement of kepler is another. Nvidia is proactive and looking for ways to improve their direction. All these things complaints on Nvidia are getting addressed. There is nothing you can really say except they are making good progress. But that will not stop AMD fans from desperately searching for any negative that they can grasp on to. But more and more people are taking note of this progress, if you havent noticed yourself.

CeriseCogburn - Friday, October 12, 2012 - link

Oh, so that's why the crybaby amd fans have shut their annoying traps on that, not to mention their holy god above all amd/radeon videocards apu holy trinity company after decades of foaming the fuming rage amd fanboys into mooing about "proprietary Physx! " like a sick monkey in heat and half dead, and extolling the pure glorious god like and friendly neighbor gamer love of "open source" and spewwwwwwwing OpenCL as if they had it sewed all over their private parts and couldn't stop staring and reading the teleprompter, their glorious god amd BLEW IT- and puked out their proprietary winzip !

R O F L

Suddenly the intense and insane constant moaning and complaining and attacking and dissing and spewing against nVidia "proprietary" was gone...

Now "winzip" is the big a compute win for the freak fanboy of we know which company. LOL

P R O P R I E T A R Y ! ! ! ! ! ! ! ! ! ! 1 ! 1 100100000

JC said it well : Looooooooooooooooooooooseeerrrr !

(that's Jim Carey not the Savior)

CeriseCogburn - Friday, October 12, 2012 - link

" You buy a GPU to play 100s of games not 1 game. "

Good for you, so the $50 games times 100 equals your $5,000.00 gaming budget for the card.

I guess you can stop moaning and wailing about 20 bucks in a card price now, you freaking human joke with the melted amd fanboy brain.

Denithor - Tuesday, October 9, 2012 - link

Hopefully your shiny new GTX 650 Ti will be able to run AC3 smoothly...:D

chizow - Thursday, October 11, 2012 - link

According to Nvidia, the 650Ti ran AC3 acceptably at 1080p with 4xMSAA on Medium settings: http://www.geforce.com/whats-new/articles/nvidia-g...

"In the case of Assassin’s Creed III, which is bundled with the GTX 650 Ti at participating e-tailers and retailers, we recorded 36.9 frames per second using medium settings."

That's not all that surprising to me though as the GTX 280 ran AC2/ACB Anvil engine games at around the same framerate. While AC3 will certainly be more demanding, the 650Ti is a good bit faster than the 280.

I'm not in the market though for a GTX 650Ti, I'm more interested in the AC3 bundle making its way to other GeForce parts as I'm interested in grabbing another 670. :D

HisDivineOrder - Tuesday, October 9, 2012 - link

Perhaps you might test without AA when dealing with cards in a sub-$200 price range as that would seem the more likely use for the card. Not saying you can't test with AA, too, but to have all tests include AA seems to be testing a new Volkswagon bug with a raw speed test through a live fire training exercise you'd test a humvee with.

RussianSensation - Tuesday, October 9, 2012 - link

AA testing is often used to stress the ROP and memory bandwidth of GPUs. Also, it's what separates consoles from PCs. If a $150 GPU cannot handle AA but a $160-180 competitor can, it should be discussed. When GTX650Ti and its after-market versions are so closely priced to 7850 1GB/7850 2GB, and it's clear that 650Ti is so much slower, the only one to blame here is NV for setting the price at $149, not the reviewer for using AA.

GTX560/560Ti/6870/6950 were all tested with AA and this card not only competes against HD7850 but gives owners of older cards a perspective of how much progress there has been with new generation of GPUs. Not using AA would not allow for such a comparison to be made unless you dropped AA from all the cards in this review.

It sounds like you are trying to find a way to make this card look good but sub-$200 GPUs are capable of running AA as long as you get a faster card.

HD7850 is 34% faster than GTX650Ti with 4xAA at 1080P and 49% faster with 8xAA at 1080P

http://www.computerbase.de/artikel/grafikkarten/20...

All that for $20-40 more. Far better value.

Mr Perfect - Tuesday, October 9, 2012 - link

I thought GTX was reserved for high end cards, with lower tier cards being GT. I guess they gave up on that?