Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

Haswell's GPU

Although Intel provided a good amount of detail on the CPU enhancements to Haswell, the graphics discussion at IDF was fairly limited. That being said, there's still something to talk about here.

Haswell builds on the same fundamental GPU architecture we saw in Ivy Bridge. We won't see a dramatic redesign/re-plumbing of the graphics hardware until Broadwell in 2014 (that one is going to be a big one).

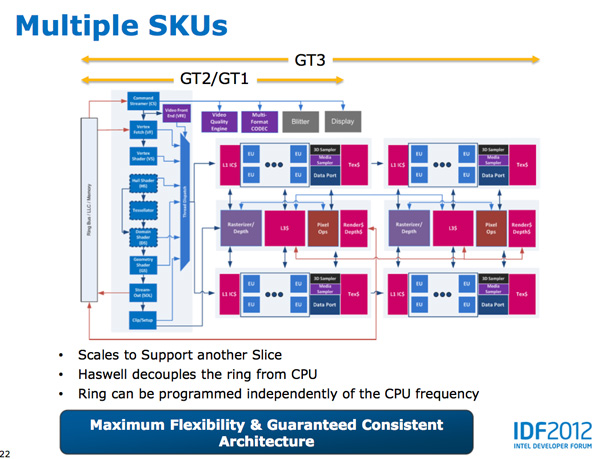

Haswell's GPU will be available in three physical configurations: GT1, GT2 and GT3. Although Intel mentioned that the Haswell GT3 config would have twice the shader count of Haswell GT2, it was careful not to disclose the total number of EUs in any of the versions. Based on the information we have at this point, GT3 should be a 40 EU configuration while GT2 should feature 20 EUs. Intel will also include up to one redundant EU to cover the case where an EU in the array is defective. This isn't an uncommon practice, but it does indicate just how much of the die will be dedicated to graphics in Haswell. The larger the area the GPU covers, the greater the likelihood that you'll see unrecoverable defects in the GPU. Redundancy at the EU level is one way of mitigating that problem.
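To see why a single spare EU is worth the die area, here is a minimal sketch using a simple Poisson defect model. The model is our assumption, not something Intel disclosed: each EU is treated as defective independently with probability `p = 1 - exp(-D * A)`, where the defect density `D` and per-EU area `A` are hypothetical placeholders rather than Haswell figures. Without a spare, all `n` EUs must be good; with one spare, any `n` of the `n + 1` EUs will do.

```python
import math


def eu_defect_probability(defect_density_per_mm2: float, eu_area_mm2: float) -> float:
    """Probability that a single EU contains at least one defect (Poisson model)."""
    return 1.0 - math.exp(-defect_density_per_mm2 * eu_area_mm2)


def array_yield(n_required: int, spares: int, p_defect: float) -> float:
    """Probability that at least n_required of (n_required + spares) EUs are defect-free."""
    total = n_required + spares
    p_good = 1.0 - p_defect
    # Sum the binomial probabilities of exactly k defective EUs, for k = 0..spares.
    return sum(
        math.comb(total, k) * p_defect**k * p_good ** (total - k)
        for k in range(spares + 1)
    )


if __name__ == "__main__":
    # Hypothetical inputs for illustration only; Intel has not published these numbers.
    p = eu_defect_probability(defect_density_per_mm2=0.002, eu_area_mm2=2.0)
    for n_eus in (20, 40):
        no_spare = array_yield(n_eus, spares=0, p_defect=p)
        one_spare = array_yield(n_eus, spares=1, p_defect=p)
        print(f"{n_eus} EUs: yield {no_spare:.3f} without a spare, {one_spare:.3f} with one")
```

Under any choice of inputs, the no-spare yield falls as the EU count grows, which is the article's point: the bigger the GPU's share of the die, the more a redundant EU buys back.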

Haswell's processor graphics extends API support to DirectX 11.1, OpenCL 1.2 and OpenGL 4.0.

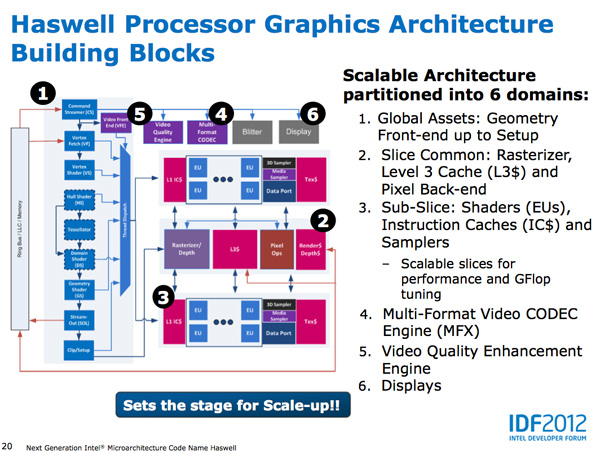

At the front of the graphics pipeline is a new resource streamer. The RS offloads some driver work that the CPU would normally handle, moving it to GPU hardware instead. Both AMD and NVIDIA have significant command processors, so this doesn't appear to be an Intel advantage, although the devil is in the (unshared) details. The point from Intel's perspective is that any processing it can shift away from general purpose CPU hardware and onto the GPU can save power (the CPU cores can sleep while the resource streamer and command streamer do their job).
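Intel's power argument comes down to energy = power × time: offloading pays off when the streamer's power plus the sleeping cores' power, over however long the streamer takes, is less than the energy the awake CPU would have spent. A minimal sketch of that comparison follows; the `ExecutionPath` type and every wattage and duration in it are hypothetical, since Intel has not published resource streamer power figures.

```python
from dataclasses import dataclass


@dataclass
class ExecutionPath:
    """Power draw and duration for one way of handling a batch of driver work."""
    active_power_w: float  # power of the unit doing the work
    idle_power_w: float    # power of the CPU cores meanwhile (0 if they do the work)
    duration_s: float

    def energy_j(self) -> float:
        # Energy = power x time, summed over the working unit and the idling cores.
        return (self.active_power_w + self.idle_power_w) * self.duration_s


# Hypothetical figures for illustration only.
cpu_path = ExecutionPath(active_power_w=5.0, idle_power_w=0.0, duration_s=0.002)
rs_path = ExecutionPath(active_power_w=0.5, idle_power_w=0.1, duration_s=0.003)

saving = cpu_path.energy_j() - rs_path.energy_j()
print(f"CPU path: {cpu_path.energy_j() * 1e3:.2f} mJ, RS path: {rs_path.energy_j() * 1e3:.2f} mJ")
print("offload saves energy" if saving > 0 else "offload costs energy")
```

The sketch deliberately lets the offloaded path run longer than the CPU path: dedicated hardware can be slower and still win on energy as long as its power draw is low enough.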

Beyond the resource streamer, most of the fixed function graphics hardware sees a doubling of performance in Haswell.

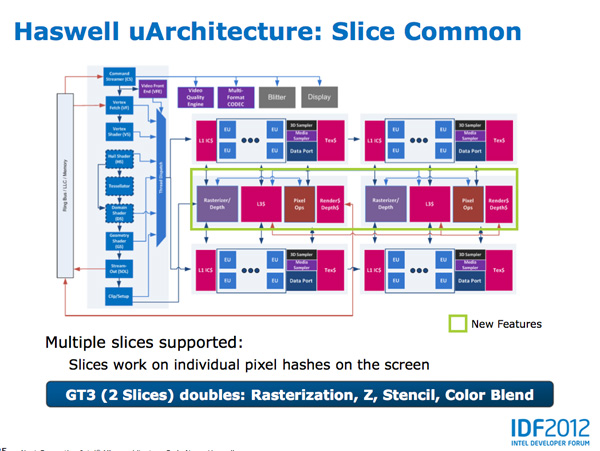

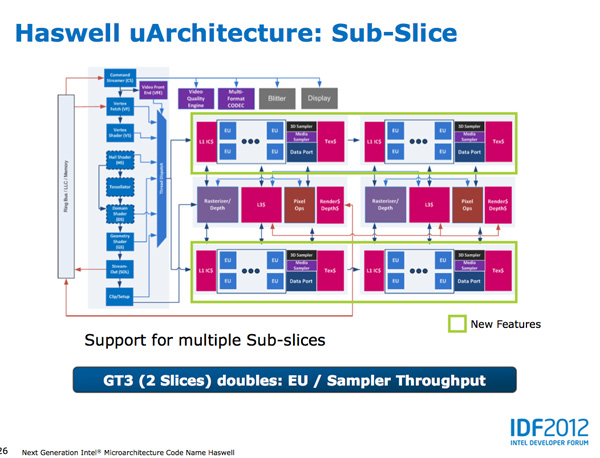

At the shader core level, Intel separates the GPU design into two sections: slice common and sub-slice. Slice common includes the rasterizer, pixel back end and GPU L3 cache. The sub-slice includes all of the EUs and instruction caches.

In Haswell GT1 and GT2 there's a single slice common, while GT3 sees a doubling of slice common. GT3 similarly has two sub-slices, although once again Intel isn't talking specifics about EU counts or clock speeds between GT1/2/3.

The final bit of detail Intel gave out about Haswell's GPU is that the texture sampler sees up to a 4x improvement in throughput over Ivy Bridge in some modes.

Now to the things that Intel didn't let loose at IDF. A GT3 part with some form of embedded DRAM was originally an option for Ivy Bridge, but higher-ups at Intel killed plans for it. Rumor has it that Apple was the only customer who really demanded it at the time, and Intel wasn't willing to build a SKU just for Apple.

Haswell will do what Ivy Bridge didn't. You'll see a version of Haswell with up to 128MB of embedded DRAM, with a lot of bandwidth available between it and the core. Both the CPU and GPU will be able to access this embedded DRAM, although there are obvious implications for graphics.
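One way to see why a large, high-bandwidth eDRAM matters for graphics is to blend its bandwidth with that of system memory according to how much traffic it absorbs. The sketch below is a simplified model of our own, not Intel's: it assumes requests are served one after another with no overlap between the two memories. The 25.6 GB/s figure is the peak of dual-channel DDR3-1600; the eDRAM bandwidth is a hypothetical placeholder, as Intel has not disclosed it.

```python
def effective_bandwidth_gbs(hit_rate: float, edram_gbs: float, dram_gbs: float) -> float:
    """Blended bandwidth when a fraction `hit_rate` of bytes comes from eDRAM.

    Assumes requests are served one after another (no overlap between the two
    memories), so the time per GB is the hit-rate-weighted sum of the two
    per-GB times.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    seconds_per_gb = hit_rate / edram_gbs + (1.0 - hit_rate) / dram_gbs
    return 1.0 / seconds_per_gb


# 25.6 GB/s is dual-channel DDR3-1600 peak; the eDRAM figure is hypothetical.
DDR3_1600_DUAL_CHANNEL_GBS = 25.6
HYPOTHETICAL_EDRAM_GBS = 50.0

for hit_rate in (0.0, 0.5, 0.9):
    bw = effective_bandwidth_gbs(hit_rate, HYPOTHETICAL_EDRAM_GBS, DDR3_1600_DUAL_CHANNEL_GBS)
    print(f"hit rate {hit_rate:.0%}: ~{bw:.1f} GB/s")
```

At 128MB, a large share of a GPU's working set (render targets, frequently sampled textures) could plausibly fit, which is what pushes the hit rate, and therefore the blended bandwidth, up.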

Overall performance gains should be about 2x for GT3 (presumably with eDRAM) over HD 4000 in a high TDP part. In Ultrabooks those gains will be limited to around 30% max given the strict power limits.
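Those two scaling figures translate into frame rates in a straightforward way. The sketch below assumes, purely for illustration, that frame rate scales linearly with the quoted gains, and it starts from a hypothetical 30 fps HD 4000 result; it is back-of-the-envelope arithmetic, not a benchmark.

```python
# Scaling factors quoted in the article; the baseline frame rate is hypothetical.
DESKTOP_GT3_SPEEDUP = 2.0    # "about 2x" over HD 4000 in a high TDP part
ULTRABOOK_MAX_SPEEDUP = 1.3  # "around 30% max" under Ultrabook power limits


def projected_fps(hd4000_fps: float, speedup: float) -> float:
    """Scale an HD 4000 frame rate by a relative performance gain (linear assumption)."""
    return hd4000_fps * speedup


baseline = 30.0  # hypothetical HD 4000 result in some game
print(f"High TDP GT3: ~{projected_fps(baseline, DESKTOP_GT3_SPEEDUP):.0f} fps")
print(f"Ultrabook:    up to ~{projected_fps(baseline, ULTRABOOK_MAX_SPEEDUP):.0f} fps")
```

Real games rarely scale perfectly with GPU throughput, so treat these as upper bounds under the stated assumption.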

As for why Intel isn't talking about embedded DRAM on Haswell, your guess is as good as mine. The likely release timeframe for Haswell is close to June 2013; there's still tons of time between now and then. It looks like Intel still has a desire to remain quiet on some fronts.

245 Comments


lmcd - Saturday, October 6, 2012

Interestingly, this might be the first chance in forever that AMD has at competing with Intel. If Haswell's sole goal is to hit lower power targets, and Piledriver hits its 15% and Steamroller its 15% over that, AMD is suddenly right up there with Intel's i5 series with its GPU-less chips, and with the upper i3 range with its APUs. That is absolutely perfect positioning: most i5 purchases are by people planning to pair them with discrete graphics, while most i3s seem to go to PC buyers looking for low price tags.

The one downside is that the i7 series is Intel's money-maker: the clueless people who think they're getting maximum performance but are really just feeding the binning system and buying an unbalanced PC.

milkod2001 - Sunday, October 7, 2012

You got it wrong, bro: Intel's money maker is not the i7, it's the i3 and i5 (low end and a bit of mainstream). As for Haswell, on paper it looks too good to be true, just as Ivy did last year, and Ivy ended up anything but impressive.

Since Intel's Conroe core (2006) there actually haven't been any significant improvements worth mentioning. There's not much that today's CPUs can do that the Pentium 4 couldn't a decade ago.

I would love to see some innovations users could really benefit from (something like a reattachable, thin, light, portable, firm solar panel hooked onto the back of the screen, or even built into the screen itself as its last layer) and not the crap Intel/AMD give us year after year.

xeizo - Sunday, October 7, 2012

Anand is very right: it's all about power savings, which in effect make smaller and more portable form factors possible!

As for mainstream performance, my Linux workstation still uses a Q9450 rev. C1 from 2008 clocked at 3.2GHz, and an SSD of course. That box feels in every way as snappy as my Windows box with Sandy Bridge at 4.8GHz, which means I really didn't need more performance than what the C2Q already gave. Of course the SB box benchmarks much faster, about twice as fast in most things, but the point is that I personally don't need that performance except for the occasional game.

But I could use a smaller, cooler running device instead!

Teknobug - Tuesday, October 16, 2012

LOL my Linux system still runs a Sempron and it's still fast.

oomjcv - Sunday, October 7, 2012

Very interesting article, enjoyed reading it.

Something I would like to see is a decent comparison between Intel's and AMD's plans. Many might be able to outline the basics, but a thorough article on the subject should be rather enlightening... Comparing their design philosophies, architectures, possible pitfalls and successes etc., pretty much what's been done with this article, only with both companies.

I know it might be time consuming but I imagine it could be quite a nice read.

zwillx - Monday, January 21, 2013

Agreed; it's difficult to find common ground with so many different chip architectures. x86 is a big enough competition, but now it's getting split wide open with ARM and big.LITTLE etc., so it's always helpful to have either more charts or real world examples, lol.

My take from this article, though: Haswell still won't have the prowess to beat the GT650. I have a GTX660 in my laptop w/ Optimus (TM). It works. A game runs at 17 FPS on the HD4000; on the GTX660 I get 100+ fps and am able to use higher anti-aliasing settings. So clearly a 100% improvement over Ivy Bridge only puts the chip into the "mediocre" category by the time it's released.

alexandrio - Sunday, October 7, 2012

"The bigger concern is whether or not the OEMs and ISVs will do their best to really take advantage of what Haswell offers. I know one will, but will the rest?"

I am curious: who is that one OEM that will do its best to really take advantage of what Haswell offers?

zwillx - Monday, January 21, 2013

Apple. Or are you joking? I personally hate Apple and have since the original iMac, but their engineering is top notch when it comes to getting ideal performance from silicon to user. So... guessing that's the reference.

Silma - Monday, October 8, 2012

A fine read, technically very comprehensive, but still overly melodramatic.

While it is true that it is crucial for Intel to set foot in the BYOD market, some things still hold true:

- In value and profit, the PC processor market is much bigger than the BYOD processor market and will stay so for years, because PCs, especially business PCs, won't disappear anytime soon.

- Nobody can touch Intel in this market, it has been proved for decades. Not AMD at the height of its success, not mighty IBM, not Sun, nobody.

- Contrary to what you say, Intel has a definite production advantage, and there are very few fabs able to compete. Note that Apple is incapable of producing processors; it is dependent on external manufacturers.

- What Apple does with its processor is interesting business wise for its iPods/Pads/Phones, but Apple doesn't have the research power Intel and others have in the chip space and I can't see how it will innovate better than Intel and other competitors.

- Intel is aware of its shortcomings, is pushing tremendously in the right direction. A competitor that doesn't rest on its laurels is a mighty threat, ARM beware.

- If Apple stops using Intel processors, it will of course wipe a few hundred million off Intel's turnover, but that won't be anything remotely dangerous for Intel.

- It remains to be seen whether Apple users will accept yet another platform change.

- It remains to be seen whether it would make sense business-wise for Apple.

- I am quite sure many phone companies will be open to renewed chip competition and to not letting a single platform become too powerful.

All in all it seems to me Intel is as dangerous as ever, executing very well in its core business and heading towards great things in the phone/pad space.

johnsmith9875 - Thursday, October 11, 2012

Why couldn't they at least stick to LGA2011?