Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

CPU Architecture Improvements: Background

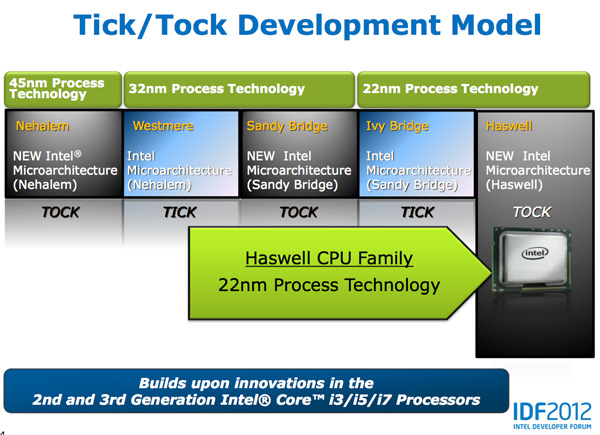

Despite all of this platform discussion, we must not forget that Haswell is the fourth tock since Intel instituted its tick-tock cadence. If you're not familiar with the terminology by now, a tock is a "new" microprocessor architecture on an existing manufacturing process. In this case we're talking about Intel's 22nm 3D transistors, which first debuted with Ivy Bridge. Although Haswell is clearly SoC focused, the designs we're talking about today all use Intel's 22nm CPU process, not the 22nm SoC process that has yet to debut for Atom. It's important not to give Intel too much credit on the manufacturing front. While it has a full node advantage over the competition in the PC space, it's currently only shipping a 32nm low power SoC process. Intel may still have a more power efficient process at 32nm than its competitors in the SoC space, but the full node advantage simply doesn't exist there yet.

Although Haswell is labeled as a new micro-architecture, it borrows heavily from those that came before it. Without going into the full details on how CPUs work I feel like we need a bit of a recap to really appreciate the changes Intel made to Haswell.

At a high level the goal of a CPU is to grab instructions from memory and execute those instructions. All of the tricks and improvements we see from one generation to the next just help to accomplish that goal faster.

The assembly line analogy for a pipelined microprocessor is overused, but that's because it is quite accurate. Rather than working on one instruction at a time, modern processors feature an assembly line of steps that breaks up the grab/execute process to allow for higher throughput.
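To put rough numbers on the assembly line idea, here is a minimal sketch that compares cycle counts with and without pipelining. It assumes an idealized pipeline (one cycle per stage, no stalls, no branch mispredictions), which real processors do not achieve; the function names are made up for illustration.

```python
def unpipelined_cycles(num_instructions: int, num_stages: int) -> int:
    """Each instruction passes through every stage before the next one starts."""
    return num_instructions * num_stages


def pipelined_cycles(num_instructions: int, num_stages: int) -> int:
    """Idealized pipeline: fill it once, then finish one instruction per cycle."""
    if num_instructions == 0:
        return 0
    return num_stages + (num_instructions - 1)


if __name__ == "__main__":
    n, stages = 1000, 4  # assumed workload size and a 4-stage toy pipeline
    print(unpipelined_cycles(n, stages))  # 4000
    print(pipelined_cycles(n, stages))    # 1003
```

Under these assumptions the pipelined design approaches one instruction per cycle once the pipeline is full, which is where the throughput gain comes from.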

The basic pipeline is as follows: fetch, decode, execute, commit to memory. You first fetch the next instruction from memory (a program counter, also called the instruction pointer, tells the CPU where to find the next instruction). You then decode that instruction into an internally understood format (this is key to enabling backwards compatibility). Next you execute the instruction (this stage, like most here, is itself split into smaller steps, such as fetching the data the instruction needs). Finally you commit the results of that instruction to memory and start the process over again.
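The four steps can be sketched as a toy interpreter. This is only an illustration: the three-operand instruction format, the `OPS` table and the `run` function are hypothetical, a dictionary of named values stands in for memory, and the steps run one after another rather than overlapping as they would in a real pipeline.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical instruction format: (opcode, destination, source 1, source 2)
Instruction = Tuple[str, str, str, str]

# Hypothetical opcode table mapping names to the work done at execute time
OPS: Dict[str, Callable[[int, int], int]] = {
    "add": lambda a, b: a + b,
    "sub": lambda a, b: a - b,
    "mul": lambda a, b: a * b,
}


def run(program: List[Instruction], initial: Dict[str, int]) -> Dict[str, int]:
    state = dict(initial)  # stands in for registers/memory
    pc = 0                 # program counter: where to find the next instruction
    while pc < len(program):
        raw = program[pc]                       # fetch
        pc += 1
        opcode, dest, src1, src2 = raw          # decode into internal fields
        operation = OPS[opcode]
        result = operation(state[src1], state[src2])  # execute
        state[dest] = result                    # commit the result
    return state


if __name__ == "__main__":
    program = [("add", "C", "A", "B"), ("add", "E", "C", "D")]
    print(run(program, {"A": 1, "B": 2, "D": 4}))
```

With A=1, B=2 and D=4, the run leaves C=3 and E=7 in the returned state.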

Modern CPU pipelines feature many more stages than what I've outlined here. Conroe featured a 14 stage integer pipeline, Nehalem increased that to 16 stages, while Sandy Bridge saw a shift to a 14 - 19 stage pipeline (depending on hit/miss in the decoded uop cache).

The front end is responsible for fetching and decoding instructions, while the back end deals with executing them. The division between the two halves of the CPU pipeline also separates the part of the pipeline that must execute in order from the part that can execute out of order. Instructions have to be fetched and completed in program order (can't click Print until you click File first), but they can be executed in any order possible so long as the result is correct.

Why would you want to execute instructions out of order? It turns out that many instructions are either dependent on one another (e.g. C=A+B followed by E=C+D) or they need data that's not immediately available and has to be fetched from main memory (a process that can take hundreds of cycles, or an eternity in the eyes of the processor). Being able to reorder instructions before they're executed allows the processor to keep doing work rather than just sitting around waiting.
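A toy scheduler makes the benefit concrete. The sketch below is an assumption-laden model, not how any Intel core actually works: it issues at most one instruction per cycle, each register is written only once (as if renaming had already happened), producers appear before their consumers in program order, and the 200 cycle load latency is just a stand-in for a trip to main memory. `Instr`, `simulate` and `PROGRAM` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Instr:
    name: str
    dest: str
    srcs: Tuple[str, ...]
    latency: int  # cycles from issue until the result is available


def simulate(program: List[Instr], out_of_order: bool) -> Tuple[int, List[str]]:
    """Return (cycle when the last result is ready, order of issue)."""
    written = {instr.dest for instr in program}  # registers produced by the program
    ready_at: Dict[str, int] = {}                # register -> cycle its value is ready
    pending = list(program)
    issue_order: List[str] = []
    cycle = 0
    last_done = 0

    def operands_ready(instr: Instr) -> bool:
        # Registers nobody writes are treated as available from the start.
        return all(
            src not in written or ready_at.get(src, float("inf")) <= cycle
            for src in instr.srcs
        )

    while pending:
        # In-order: only the oldest instruction may issue. Out-of-order: any ready one.
        window = pending if out_of_order else pending[:1]
        chosen = next((i for i in window if operands_ready(i)), None)
        if chosen is not None:
            pending.remove(chosen)
            done = cycle + chosen.latency
            ready_at[chosen.dest] = done
            last_done = max(last_done, done)
            issue_order.append(chosen.name)
        elif cycle >= last_done:
            raise ValueError("no instruction can ever become ready")
        cycle += 1
    return last_done, issue_order


PROGRAM = [
    Instr("load A", "A", (), 200),        # assumed main-memory miss
    Instr("C=A+B", "C", ("A", "B"), 1),   # depends on the load
    Instr("E=C+D", "E", ("C", "D"), 1),   # depends on C
    Instr("G=F+F", "G", ("F",), 1),       # independent work
    Instr("H=G+F", "H", ("G", "F"), 1),   # depends only on G
]

if __name__ == "__main__":
    print(simulate(PROGRAM, out_of_order=False))  # finishes at cycle 204
    print(simulate(PROGRAM, out_of_order=True))   # finishes at cycle 202
```

In this model the in-order machine sits idle behind the load, while the out-of-order one issues G and H during the wait. The saving here is small because the example has only two independent instructions; the more independent work there is to find, the more of the memory latency gets hidden.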

Sidebar on Performance Modeling

Microprocessor design is one giant balancing act. You model application performance and build the best architecture you can in a given die area for those applications. Tradeoffs are inevitably made as designers are bound by power, area and schedule constraints. You do the best you can this generation and try to get the low hanging fruit next time.

Performance modeling includes current applications of value, future algorithms that you expect to matter when the chip ships as well as insight from key software developers (if Apple and Microsoft tell you that they'll be doing a lot of realistic fur rendering in 4 years, you better make sure your chip is good at what they plan on doing). Obviously you can't predict everything that will happen, so you continue to model and test as new applications and workloads emerge. You feed that data back into the design loop and it continues to influence architectures down the road.

During all of this modeling, even once a design is done, you begin to notice bottlenecks in your design in various workloads. Perhaps you notice that your L1 cache is too small for some newer workloads, or that for a bunch of popular games you're seeing a memory access pattern that your prefetchers don't do a good job of predicting. More fundamentally, maybe you notice that you're decode bound more often than you'd like - or alternatively that you need more integer ALUs or FP hardware. You take this data and feed it back to the team(s) working on future architectures.

The folks working on future architectures then prioritize the wish list and work on including what they can.

245 Comments


Astarael - Monday, October 15, 2012 - link

Then get out of the comments section.

Old_Fogie_Late_Bloomer - Tuesday, October 9, 2012 - link

I finally made it through this article...hell, I took a course in organization and architecture earlier this year and I didn't come close to understanding everything written here.

Still, it was a great read. Thanks for going to the trouble, Anand. :-)

IKeelU - Friday, October 5, 2012 - link

What's great is that Anand's been doing this for 15 years, has hired new editors along the way, and the quality hasn't wavered. I'm glad they haven't polluted their front page with shallow tech blogging like other sites I once enjoyed.

I can't imagine this hobby without this site. I got into PC building just as it came online and have depended on it ever since.

TheJian - Monday, October 8, 2012 - link

I disagree. Ryan Smith's 660TI article had some ridiculous conclusions and went on and on about a bandwidth issue that isn't an issue at 1920x1200. As evidenced by the fact that in their own tests it beat the 7950B in 6 games by OVER 20% but lost in one game by less than 10 at 1920x1200. Read the comments section where I reduced his arguments to rubble. He went on about a dumb Korean monitor you'd have to EBAY to get (or amazon from a guy with ONE review, no phone, no faq page, no domain, and a gmail account for help...LOL), and runs in 2560x1440. If his conclusions were based on 1920x1200 like he said (which he repeated to me in the comments yet touts some "enthusiast 2560x1440" korean monitor as an excuse for his conclusions), he would have been forced to say the truth which was as his benchmarks showed and hardocp stated. It wipes the floor with the 7950B, just as the 680 does with the 7970ghz (yea, even in MSAA 8x) where they also proved only 1 in 4 games was even above 30fps...@2560x1600 with high AA which is why its pointless to draw conclusions based on 2560x1600 as Ryan did. Heck 2 of the 4 games at hardocp's high AA article didn't even reach above 20fps (15 & 17, and if bandwidth is an issue how come the 660TI won anyway?...LOL)

Ryan was reduced to being a fool when I was done with him, and then Jarred W. came in and insinuated I was a Ahole & uninformed...ROFL. I used all of his own data from the 660TI & 7970B & 7970ghz edition articles (all by Ryan!) to point out how ridiculous his conclusions were.
When a card loses 6 out of 7 games, you leave out Starcraft 2 (which you used for 2 previous articles 1 & 2 months before, then again IMMEDIATELY after) which would have shown it beating even the 7970ghz edition (as all the nv cards beat it in that game, hence he left it out), you claim some Korean Ebay'd monitor as a reason for your asinine conclusions (clear bias to me), in the 6 games it loses by an avg of 20% or more at the ONLY res 68 24in monitors on newegg use (or below, most 1920x1080, not even 1920x1200, only <2% in steampowered.com hardware survey have above 1920x1200 and most with dual cards in that case), you've clearly WAVERED in your QUALITY since Anand took up mac's/phones.

I'm all for trying to save AMD (quit lowering your prices idiots, maybe you'll make some money), but stooping to dumb conclusions when all of your own evidence points in the exact opposite direction is really shady. Worse it was BOTH editors, as Ryan gave up (the evidence was voluminous, he wisely ran and hid) Jarred stepped in to personally attack me instead of the data...ROFLMAO. You know you've lost when you say nothing about my numbers at all, and resort to personal attacks. Ryan nor Jarred are dumb. They should have just admitted the article was full of bias or just changed the conclusion and moved on. With all the evidence I pointed out I wouldn't have wanted it to be in print any longer. It's embarrassing if you read the comments section after the article. You go back and realize what they did and wonder what the heck Ryan was thinking. He said that same crap in his next article. Either he loves AMD, gets money/hardware or something or maybe he just isn't as smart as I thought :)

Anand's last hardware article on haswell said it would be a "MONSTER" but it's graphics won't catch AMD's integrated gpu and we only get 5-15% on the cpu side for a TOCK release. 2x gpu doesn't mean much with it being 9 months away and won't even catch AMD if they sit still. OUCH. So basically much ado about nothing on the desktop side, with a hope they can do something with it in mobile below 10w (only a tablet even then). I was pondering waiting for the "MONSTER" but now I know I'll just buy an Ivy at black friday...ROFL. What monster? In this article he says Broadwell is now the "monster"...heh. Bah...At least I got to read this before black friday. I would have been ticked had I read this after it hoping for the desktop monster. Since AMD now sucks on the cpu side we get speed bin bumps for microarchitecure TOCK's instead of 25-40% like the old days. I pray AMD stops the price war with NV and starts taking profits soon.

If it wasn't for their advantage on the integrated gpu, they'd be bankrupt already and they will be there by xmas 2014 at the current burn of 650mil/year losses (they only have 1.5Bil in the bank and billions in debt compared to 3.5B cash for NV and no debt, never mind giving up the race to Intel who dwarfs NV by 10x on all fronts). AMD's only choice will be to further reduce their stock value by dilution of shares (AGAIN!) which will finally put them out to pasture. Hopefully someone will pick up their IP, put a few billion in it and compete again with Intel (samsung, ibm, NV if amd stock drops to $1 by then, even they could do it). Otherwise, my next card/cpu upgrade after black friday will cost $1000 each as NV/INTC suck us all dry. There stock is already WAY down in credit rating (B+ last I checked, FAR from NV AAA), and they are listed as 50% chance of bankruptcy vs. all their competitors at 1% chance (intc, qcom, nvda, samsung etc). The idea they'll take over mobile is far fetched at best. I see nowhere but down for their share price. That sucks. I hate apple, but at this point I wouldn't even mind if they picked them up and ran with AMD's cpu mantle. We might start getting ivy 3770's (or the next king) at prices less than $329 then! The first sale I've seen was $309 in my email from newegg this weekend and that sucks in 7 months. No speed upgrades, no price drops, just the same thing for 7 months with no pressure from a competitive-less AMD. Their gpu sucks compared to 660ti (hotter, noisy, less perf), so no black friday discount. You either go AMD for worse but savings or pay through the nose for NV. Same with Intel and the cpu. In that respect I guess I get Ryan trying to save them...ROFL. But prolonging the inevitable isn't helping, I'd rather have them go belly up now and someone buy the cpu and run with it before it's so far behind Intel they can't fix it no matter who buys the IP. I digress...

Spunjji - Thursday, October 18, 2012 - link

God that was painful to even attempt to read. :/ Comparing AMD vs. nVidia to AMD vs. Intel is foolish in the extreme (there's a rather significant difference in the cost/performance balance, where AMD and nVidia are actually competitors) so I feel justified in not reading most of that screed.

ananduser - Friday, October 5, 2012 - link

Yes...Anand's quite the loss for the PC crowd. He's reviewing macs nowadays.

A5 - Friday, October 5, 2012 - link

If you owned a site and could delegate reviews you don't find interesting (oooh boy, another 15-pound overpriced gaming laptop!), wouldn't you do the same thing?

Kepe - Friday, October 5, 2012 - link

Mmh, I've also noticed how Anand seems to have become quite an Apple fan. Don't get me wrong, I love his reviews, and Anandtech as a whole. But the fact that Anand always keeps talking about Apple is an eyesore to me. Particularly annoying in this article was how he mentioned "iPad form factor" as if it was the only tablet out there. Why not say "tablet form factor" instead? Would have been a lot more neutral. Also it seemed to confuse someone in to thinking Apple might be putting Haswell in to a new iPad.

meloz - Friday, October 5, 2012 - link

Agreed. The Apple devotion has gone too far and the editorial balance has been lost. The podcasts -in particular- are basically an advertising campaign for Apple and a thinly disguised excuse for Anand & Friends to praise everything Apple. So I do not listen to them.

The articles though -like this one about Haswell- are still worth reading. You still get as much gratuitous Apple references as Anand can throw in but there is also plenty of substance for everyone else.

ravisurdhar - Friday, October 5, 2012 - link

It's not "devotion", it's simply an accurate description of the market. How many iPads are out there? 100 million. One tenth of a BILLION. One for every 70 people on the planet. Well over half of Fortune 500 companies use them. Hospitals use them. Pilots use them. Name one other tablet that comes close to that sort of market penetration. When Apple decides to make their own silicon for their devices, it's a big, big deal.

For the record, I don't have one. I just understand the significance of the 800 pound gorilla.