Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM ESTCPU Architecture Improvements: Background

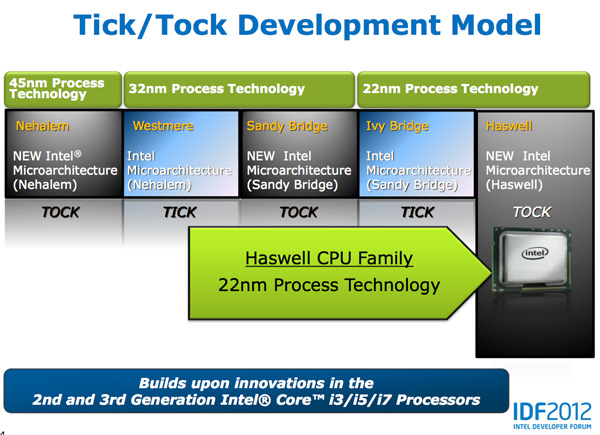

Despite all of this platform discussion, we must not forget that Haswell is the fourth tock since Intel instituted its tick-tock cadence. If you're not familiar with the terminology by now a tock is a "new" microprocessor architecture on an existing manufacturing process. In this case we're talking about Intel's 22nm 3D transistors, that first debuted with Ivy Bridge. Although Haswell is clearly SoC focused, the designs we're talking about today all use Intel's 22nm CPU process - not the 22nm SoC process that has yet to debut for Atom. It's important to not give Intel too much credit on the manufacturing front. While it has a full node advantage over the competition in the PC space, it's currently only shipping a 32nm low power SoC process. Intel may still have a more power efficient process at 32nm than its other competitors in the SoC space, but the full node advantage simply doesn't exist there yet.

Although Haswell is labeled as a new micro-architecture, it borrows heavily from those that came before it. Without going into the full details on how CPUs work I feel like we need a bit of a recap to really appreciate the changes Intel made to Haswell.

At a high level the goal of a CPU is to grab instructions from memory and execute those instructions. All of the tricks and improvements we see from one generation to the next just help to accomplish that goal faster.

The assembly line analogy for a pipelined microprocessor is over used but that's because it is quite accurate. Rather than seeing one instruction worked on at a time, modern processors feature an assembly line of steps that breaks up the grab/execute process to allow for higher throughput.

The basic pipeline is as follows: fetch, decode, execute, commit to memory. You first fetch the next instruction from memory (there's a counter and pointer that tells the CPU where to find the next instruction). You then decode that instruction into an internally understood format (this is key to enabling backwards compatibility). Next you execute the instruction (this stage, like most here, is split up into fetching data needed by the instruction among other things). Finally you commit the results of that instruction to memory and start the process over again.

Modern CPU pipelines feature many more stages than what I've outlined here. Conroe featured a 14 stage integer pipeline, Nehalem increased that to 16 stages, while Sandy Bridge saw a shift to a 14 - 19 stage pipeline (depending on hit/miss in the decoded uop cache).

The front end is responsible for fetching and decoding instructions, while the back end deals with executing them. The division between the two halves of the CPU pipeline also separates the part of the pipeline that must execute in order from the part that can execute out of order. Instructions have to be fetched and completed in program order (can't click Print until you click File first), but they can be executed in any order possible so long as the result is correct.

Why would you want to execute instructions out of order? It turns out that many instructions are either dependent on one another (e.g. C=A+B followed by E=C+D) or they need data that's not immediately available and has to be fetched from main memory (a process that can take hundreds of cycles, or an eternity in the eyes of the processor). Being able to reorder instructions before they're executed allows the processor to keep doing work rather than just sitting around waiting.

Sidebar on Performance Modeling

Microprocessor design is one giant balancing act. You model application performance and build the best architecture you can in a given die area for those applications. Tradeoffs are inevitably made as designers are bound by power, area and schedule constraints. You do the best you can this generation and try to get the low hanging fruit next time.

Performance modeling includes current applications of value, future algorithms that you expect to matter when the chip ships as well as insight from key software developers (if Apple and Microsoft tell you that they'll be doing a lot of realistic fur rendering in 4 years, you better make sure your chip is good at what they plan on doing). Obviously you can't predict everything that will happen, so you continue to model and test as new applications and workloads emerge. You feed that data back into the design loop and it continues to influence architectures down the road.

During all of this modeling, even once a design is done, you begin to notice bottlenecks in your design in various workloads. Perhaps you notice that your L1 cache is too small for some newer workloads, or that for a bunch of popular games you're seeing a memory access pattern that your prefetchers don't do a good job of predicting. More fundamentally, maybe you notice that you're decode bound more often than you'd like - or alternatively that you need more integer ALUs or FP hardware. You take this data and feed it back to the team(s) working on future architectures.

The folks working on future architectures then prioritize the wish list and work on including what they can.

245 Comments

View All Comments

TeXWiller - Friday, October 5, 2012 - link

Perhaps they also try to reach lower usable clock frequencies through performance upgrades and this way gain some additional voltage scaling, or what is left of it.vegemeister - Saturday, October 6, 2012 - link

>think loop counters which store an INT for loop iteration then perform some FP calcsIf updating the loop counter us taking a substantial fraction of the CPU time, doesn't that mean the compiler should have unrolled more?

Anand Lal Shimpi - Friday, October 5, 2012 - link

The high end desktop space was abandoned quite a while ago. The LGA-2011/Extreme platform remains as a way to somewhat address the market, but I think in reality many of those users simply shifted their sights downward with regards to TDPs. A good friend of mine actually opted for an S-series Ivy Bridge part when building his gaming mini-ITX PC because he wanted a cooler running system in addition to great performance.To specifically answer your question though - the common thread since Conroe/Merom was this belief that designing for power efficiency actually means designing for performance. All architectures since Merom have really been mobile focused, with versions built for the desktop. I like to think that desktop performance has continued to progress at a reasonable rate despite that, pretty much for the reason I just outlined.

Take care,

Anand

csroc - Friday, October 5, 2012 - link

Sandy Bridge E just seems to price itself out of being reasonable for a lot of people. The boards in particular are rather steep as well.dishayu - Friday, October 5, 2012 - link

Well, LGA2011 is bit of a halo product with no real substance. An ivy bridge 3770K will stand up to a quad core LGA2011 part nicely, not to mention it supports PCI e gen3, so even though it has lesser lanes, it doesn't have a bandwidth disadvantage. Moreover LGA2011 is still stuck at sandy based architecture, so that again isn't quite on the bleeding edge and as far as i understand, Haswell will come out before IB-E does, so it's 2 full cycles behind.Kevin G - Friday, October 5, 2012 - link

For a single discrete GPU, Ivy Bridge would be able to match the bandwidth of Sandy Bridge-E: a single 16 lane PCI-E 3.0 connection. Things get interesting when you scale the number of GPU's. There is a small but clear advantage to Sandy Bridge-E in a four GPU configuration. Ivy Bridge having fewer lanes does make a difference in such high end scenarios.For its target market (mobile, low end desktop), Ivy Bridge is 'good enough'.

vegemeister - Saturday, October 6, 2012 - link

Quad core LGA2011 is kind of a waste though. If you're already paying extra for the socket, my philosophy is go hexcore and 8 DIMMs or go home.Peanutsrevenge - Friday, October 5, 2012 - link

Given that desktop software's not really been pushing for better CPU performance, the direction intel has taken is not a bad one IMO either.It's now possible to build a mighty gaming rig in an mITX case (Bit Fenix Prodigy), think 3770K and GTX 690 gfx and watercooled.

A rig like that will likely last 3 years before settings have to be tweaked to keep 60+ fps.

What's really needed is for software to take advantage of GPUs more, (which would play into AMDs hands), but I fear many of the best coders have switched from windows to Android/iOS development, With windows 8 shipping shortly, that number will increase further.

j_newbie - Saturday, October 6, 2012 - link

I think that is quite sad.I for one always need more FLOPS, MCAD work and simulation work depends on two things memory bandwidth+size and flops, surprisingly AMD still offers a better vfm deal in this space thanks to avx instructions not being widely adopted into most FEA/CFD code yet and the additional ram slots you get with cheaper boards.

Server components are always overpriced as we dont need a system to last very long.

my 3930k setup is about 1.5 times faster than the x6 setup at 3 times the cost... :(

Peanutsrevenge - Saturday, October 6, 2012 - link

You're talking more of a workstation than a desktop. Hence my use of the word 'desktop'.