Samsung SSD 840 (250GB) Review

by Kristian Vättö on October 8, 2012 12:14 PM EST

AnandTech Storage Bench 2011

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. Anand assembled the traces out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally we kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system. Later, however, we created what we refer to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. This represents the load you'd put on a drive after nearly two weeks of constant usage. And it takes a long time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011—Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. Our thinking was that it's during application installs, file copies, downloading, and multitasking with all of this that you can really notice performance differences between drives.

2) We tried to cover as many bases as possible with the software incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II and WoW are both a part of the test), as well as general use stuff (application installing, virus scanning). We included a large amount of email downloading, document creation, and editing as well. To top it all off we even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011—Heavy Workload IO Breakdown

| IO Size | % of Total |
| --- | --- |
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest ranges from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
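As a rough illustration of the operation mix described above, the following Python sketch derives the read/write split and the approximate number of sequential operations from the figures quoted in the text. The function name `op_mix` and the constant names are ours and purely illustrative; the shares are by operation count, not by bytes transferred, which the text does not break down.

```python
# Figures quoted in the article for the Heavy Workload trace.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447
SEQUENTIAL_SHARE = 0.42  # "Only 42% of all operations are sequential"


def op_mix(read_ops: int, write_ops: int) -> dict:
    """Return total operations and the read/write split as percentages.

    Hypothetical helper for illustration; shares are by operation count.
    """
    total = read_ops + write_ops
    return {
        "total_ops": total,
        "read_pct": 100.0 * read_ops / total,
        "write_pct": 100.0 * write_ops / total,
    }


mix = op_mix(READ_OPS, WRITE_OPS)
sequential_ops = round(mix["total_ops"] * SEQUENTIAL_SHARE)

print(f"total operations: {mix['total_ops']:,}")
print(f"reads:  {mix['read_pct']:.1f}% of operations")
print(f"writes: {mix['write_pct']:.1f}% of operations")
print(f"sequential operations (approx.): {sequential_ops:,}")
```

By operation count the trace is roughly 55% reads and 45% writes, which is write-heavy compared with typical desktop use.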

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result we're going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time we'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, we will also break out performance into reads, writes, and combined. The reason we do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
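To make the relationship between the two metrics concrete, here is a minimal Python sketch of how an average data rate and a disk busy time could be derived from a trace. It is an assumption-laden illustration, not the tooling used for these charts: the `IO` record, its fields, the sample trace, and the choice of 1 MB = 10^6 bytes are all hypothetical. Busy time is taken as the union of the intervals during which at least one request is outstanding, so idle gaps are excluded and overlapping (queued) requests are not double-counted.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class IO:
    """One traced request (hypothetical record layout)."""
    start: float    # seconds, when the request was issued
    end: float      # seconds, when it completed
    size: int       # bytes transferred
    is_write: bool


def busy_time(ios: Iterable[IO]) -> float:
    """Seconds during which at least one request was outstanding."""
    total = 0.0
    cur_start = cur_end = None
    for io in sorted(ios, key=lambda r: r.start):
        if cur_end is None or io.start > cur_end:
            # Gap before this request: close the previous busy interval.
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = io.start, io.end
        else:
            # Overlaps the current busy interval: extend it if needed.
            cur_end = max(cur_end, io.end)
    if cur_end is not None:
        total += cur_end - cur_start
    return total


def average_mb_per_s(ios: List[IO]) -> float:
    """Bytes moved divided by busy time, in MB/s (1 MB = 10**6 bytes)."""
    busy = busy_time(ios)
    if busy == 0:
        return 0.0
    return sum(io.size for io in ios) / 1e6 / busy


# Made-up three-request trace: two overlapping requests, a long idle gap,
# then one more read.
trace = [
    IO(0.000, 0.010, 1_000_000, False),
    IO(0.005, 0.020, 2_000_000, True),
    IO(1.000, 1.010, 1_000_000, False),
]

reads = [io for io in trace if not io.is_write]
writes = [io for io in trace if io.is_write]

print(f"busy time: {busy_time(trace):.3f} s")
print(f"combined:  {average_mb_per_s(trace):.1f} MB/s")
print(f"reads:     {average_mb_per_s(reads):.1f} MB/s")
print(f"writes:    {average_mb_per_s(writes):.1f} MB/s")
```

Under this definition a faster drive shows up twice: its busy time shrinks and its average MB/s rises, while the idle second in the middle of the sample trace affects neither number.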

There's also a new light workload for 2011. This is a far more reasonable benchmark of typical everyday use. It has lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback, as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

We don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea. The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests.

AnandTech Storage Bench 2011—Heavy Workload

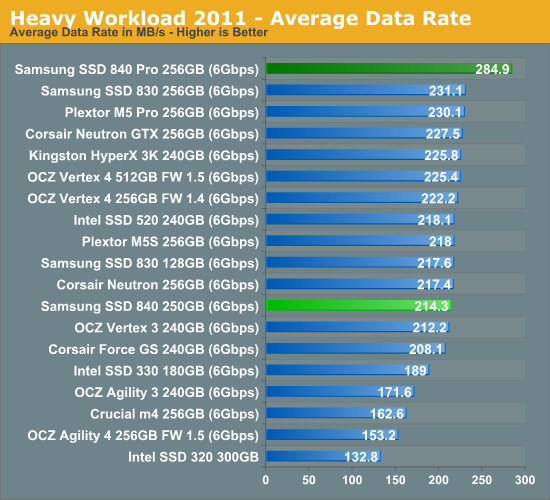

We'll start out by looking at average data rate throughout our new heavy workload test:

The 840 is quite average in our Heavy suite and performs similarly to most SandForce drives. The 840 Pro is a lot faster under heavy workloads, so it should be obvious by now why Samsung is offering two SSDs instead of one like they used to.

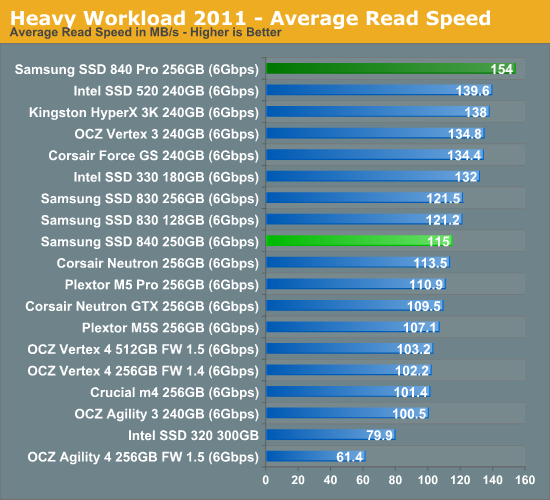

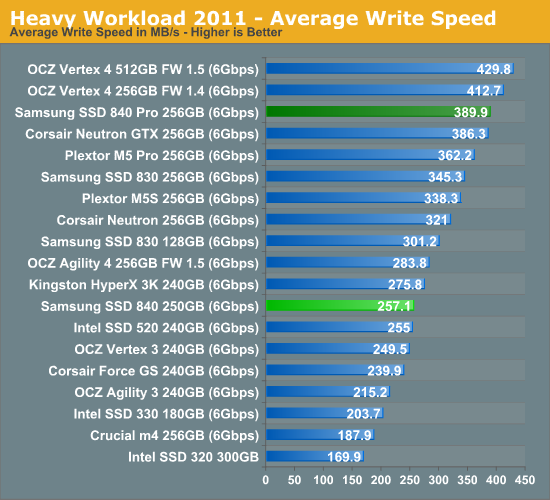

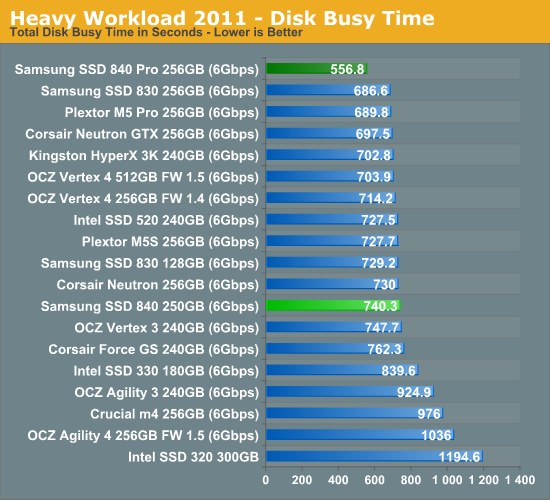

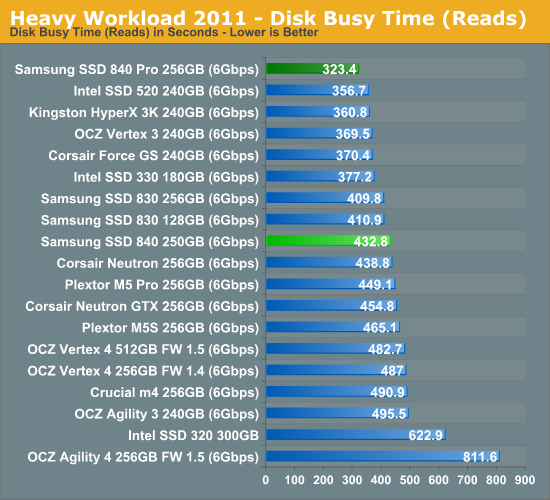

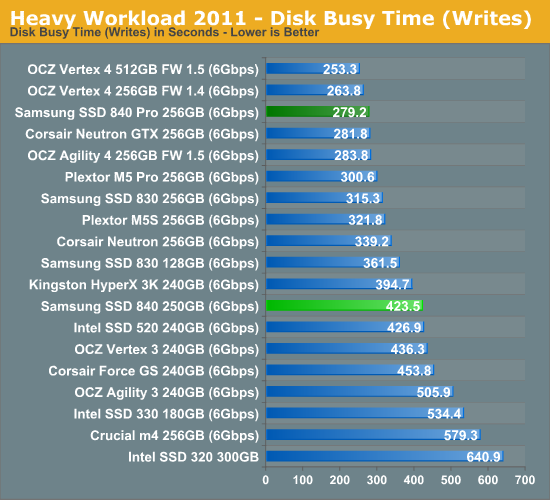

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

86 Comments


travbrad - Wednesday, October 10, 2012

I had an 80GB WD that lasted 8 years without failing. I eventually had to stop using it simply because it was too slow. I also had a 250GB WD drive that I used for 5 years (then switched to all SATA). Now I have a 640GB drive that I've been using for almost 4 years. My brother has a couple 500GB drives in his system that have been running for 4-5 years as well.

Maybe I've just been really lucky, but the only drive I've personally had fail in the last decade was a Hitachi drive (obviously selected for cost not quality) in my HP laptop.

Now at work it's a different story. Those pre-built machines cut every corner they can to bring costs down so they end up with low quality components (especially PSUs). Even in that situation there is a fairly low number of hard drive failures though (considering how old most of the machines are)

mapesdhs - Friday, October 12, 2012

I have SCSI disks that are more than 20 years old which still work fine. :D

Ian.

MarkLuvsCS - Monday, October 8, 2012

Considering Write Amplification has been significantly reduced compared to the initial SSD tech, I don't believe it's going to be a problem for the consumer market. Google xtremesystems Write Endurance to see a Samsung 830 256gb with 3000 P/E still running at 4.77 PETABYTES.

That page also shows you other brands and how they fare. I would trust Samsung wouldn't put this tech to use without truly understanding how it would pan out.

That is why the worry of the 1000 P/E 840 vs 3000 P/E 830 is overblown. Either way you have little to worry about with Samsung's controllers causing any fuss unlike Other CompanieZ.

Kjella - Monday, October 8, 2012

Not giving one fsck about wearing out the SSD I burned through a 10k-rated SSD in 1.5 years. Now with fairly normal SSD usage - a standard Win7 desktop with torrents etc. on other drives - I'm down to 57% health and looking at 3 years 10 months on a 5K-rated drive. I don't know exactly what is eating it but I'm guessing every log file, every time MSN or IRC logs a line of chat, every time something is cached or whatever it burns write cycles. I feel the official numbers are vastly *overstating* the actual lifespan, not understating it. TLC with 1K writes? Not in my machine, no sir.

madmilk - Monday, October 8, 2012

There's no way MSN/IRC can burn through an SSD in 1.5 years since they're all text. You must be doing something unusual, or at least your computer is without you knowing it. A good idea would be to open up Task Manager, and select the columns that count the number of bytes written by various programs. Maybe then you can find the source of your problem. Also make sure you have defragmentation off, and sufficient RAM so you're not constantly hitting the pagefile.

piiman - Tuesday, February 19, 2013

Better yet put the page file on a different drive and also move your temp folders to a different drive.

Notmyusualid - Tuesday, October 9, 2012

Absolutely hilarious ending there pal... I wonder how many people got it!

I got burned by them on a couple of drives, and promptly dumped them on some well-known auction site, sold as-is.

creed3020 - Tuesday, October 9, 2012

I see what you did there ;-)

Great review Kristian! I'll be looking at this drive as an option for a new office PC I am building.

B3an - Monday, October 8, 2012

Did you people even bother to read?? Because you're conveniently missing out the important fact in this article that you'd have to write 36.5TiB (almost 40TB) a year for it to last 3.5 years. I know for a fact that the average consumer does not write anywhere near that much a year, or even in 3 years. If anyone even comes close to 40TB a year they would be using a higher-end MLC SSD anyway as they would surely be using a workstation.

Most consumers don't even write 10GB a day, so at that rate the drive would easily last OVER 20 years. But of course it's highly likely something else would fail before that happens.

You're also forgetting about DSP, which is explained in this article as well. That can also nearly double the life.

I think Kristian should have made this all more clear because too many people don't bother to actually read stuff and just look at charts.

futrtrubl - Monday, October 8, 2012

Granted the usual use cases won't have so much data throughput. However those same usual use cases have the user filling 3/4 of the drive with static data (program/OS/photo archive etc) reducing the drive area it's able to wear level over. So that 20 years again becomes 5 years.

Also the 1000PE cycle stat means that there is a 50% chance for that sector to have become unusable by that time (ignoring DSP).

I'm not saying that TLC is bad, and I am certainly not saying this drive doesn't have great value. I'm just saying that we shouldn't understate the PE cycle issue.