The iPhone 5's A6 SoC: Not A15 or A9, a Custom Apple Core Instead

by Anand Lal Shimpi on September 15, 2012 2:15 PM EST

Posted in: Smartphones, Apple, Mobile, SoCs, iPhone 5

When Apple announced the iPhone 5, Phil Schiller officially announced what had leaked several days earlier: the phone is powered by Apple's new A6 SoC.

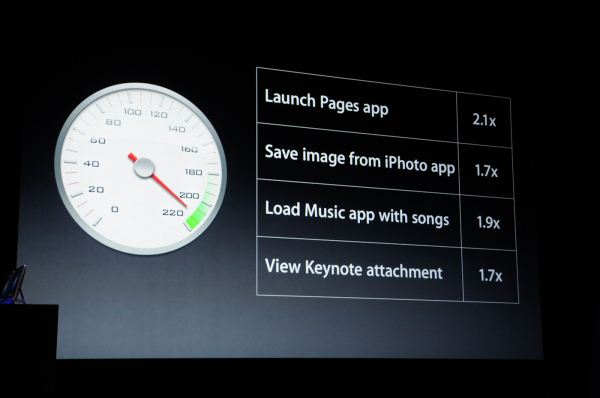

As always, Apple didn't announce clock speeds, CPU microarchitecture, memory bandwidth or GPU details. It did however give us an indication of expected CPU performance:

Prior to the announcement we speculated the iPhone 5's SoC would simply be a higher clocked version of the 32nm A5r2 used in the iPad 2,4. After all, Apple seems to like saving major architecture shifts for the iPad.

However, just prior to the announcement I received some information pointing to a move away from the ARM Cortex A9 used in the A5. Given Apple's reliance on fully licensed ARM cores in the past, the expected performance gains, and the unpublishable information that started all of this, I concluded Apple's A6 SoC likely featured two ARM Cortex A15 cores.

It turns out I was wrong. But pleasantly surprised.

The A6 is the first Apple SoC to use its own ARMv7 based processor design. The CPU core(s) aren't based on a vanilla A9 or A15 design from ARM IP, but instead are something of Apple's own creation.

Hints in Xcode 4.5

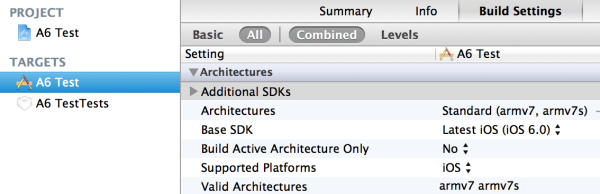

The iPhone 5 will ship with and only run iOS 6.0. To coincide with the launch of iOS 6.0, Apple has seeded developers with a newer version of its development tools. Xcode 4.5 makes two major changes: it drops support for the ARMv6 ISA (used by the ARM11 core in the iPhone 2G and iPhone 3G), and, while keeping support for ARMv7 (used by modern ARM cores), it adds a new architecture target designed to support the new A6 SoC: armv7s.

What's the main difference between the armv7 and armv7s architecture targets for the LLVM C compiler? The presence of VFPv4 support. The armv7s target supports it, the v7 target doesn't. Why does this matter?

Only the Cortex A5, A7 and A15 support the VFPv4 extensions to the ARMv7-A ISA. The Cortex A8 and A9 top out at VFPv3. If you want to get really specific, the Cortex A5 and A7 implement a 16 register VFPv4 FPU, while the A15 features a 32 register implementation. The point is, if your architecture supports VFPv4 then it isn't a Cortex A8 or A9.
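The elimination argument above can be sketched as a small lookup. This is only an illustration: the `Core` type and `candidates` helper are hypothetical names, and the table holds just the stock ARM cores and VFP details stated in this article (register counts are left as `None` where the article gives none).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Core:
    name: str
    vfp_version: int               # highest VFP revision, per the article
    fpu_registers: Optional[int]   # None where the article gives no figure


# Stock ARM cores mentioned in the article (custom cores are not listed).
CORES = [
    Core("Cortex A5", 4, 16),
    Core("Cortex A7", 4, 16),
    Core("Cortex A8", 3, None),
    Core("Cortex A9", 3, None),
    Core("Cortex A15", 4, 32),
]


def candidates(min_vfp: int) -> List[str]:
    """Return the names of listed cores whose FPU is at least VFPv<min_vfp>."""
    return [core.name for core in CORES if core.vfp_version >= min_vfp]


# A target that requires VFPv4 rules out the Cortex A8 and A9.
print(candidates(4))
```

Note that a table like this only covers ARM's own designs, so it says nothing about a core built by an architecture licensee.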

It's pretty easy to dismiss the A5 and A7 as neither of those architectures is significantly faster than the Cortex A9 used in Apple's A5. The obvious conclusion then is Apple implemented a pair of A15s in its A6 SoC.

For unpublishable reasons, I knew the A6 SoC wasn't based on ARM's Cortex A9, but I immediately assumed that the only other option was the Cortex A15. I foolishly cast aside the other major possibility: an Apple developed ARMv7 processor core.

Balancing Battery Life and Performance

There are two types of ARM licensees: those who license a specific processor core (e.g. Cortex A8, A9, A15), and those who license an ARM instruction set architecture for custom implementation (e.g. ARMv7 ISA). For a long time it's been known that Apple has both types of licenses. Qualcomm is in a similar situation; it licenses individual ARM cores for use in some SoCs (e.g. the MSM8x25/Snapdragon S4 Play uses ARM Cortex A5s) and also licenses the ARM instruction set for use by its own processors (e.g. Scorpion/Krait implement the ARMv7 ISA).

For a while now I'd heard that Apple was working on its own ARM based CPU core, but last I heard Apple was having issues making it work. I assumed that it was too early for Apple's own design to be ready. It turns out that it's not. Based on a lot of digging over the past couple of days, and conversations with the right people, I've confirmed that Apple's A6 SoC is based on Apple's own ARM based CPU core and not the Cortex A15.

Implementing VFPv4 tells us that this isn't simply another Cortex A9 design targeted at higher clocks. If I had to guess, I would assume Apple did something similar to Qualcomm this generation: go wider without going substantially deeper. Remember Qualcomm moved from a dual-issue mostly in-order architecture to a three-wide out-of-order machine with Krait. ARM went from two-wide OoO to three-wide OoO but in the process also heavily pursued clock speed by dramatically increasing the depth of the machine.

The deeper machine plus much wider front end and execution engines drives both power and performance up. Rumor has it that the original design goal for ARM's Cortex A15 was servers, and it's only through big.LITTLE (or other clever techniques) that the A15 would be suitable for smartphones. Given Apple's intense focus on power consumption, skipping the A15 would make sense but performance still had to improve.

Why not just run the Cortex A9 cores from Apple's A5 at higher frequencies? It's tempting, after all that's what many others have done in the space, but sub-optimal from a design perspective. As we learned during the Pentium 4 days, simply relying on frequency scaling to deliver generational performance improvements results in reduced power efficiency over the long run.

To push frequency you have to push voltage, which has a disproportionate impact on power consumption (dynamic power scales roughly with the square of voltage). Running your cores as close as possible to their minimum voltage is ideal for battery life. The right approach to scaling CPU performance is a combination of increasing architectural efficiency (instructions executed per clock go up), multithreading and conservative frequency scaling. Remember that in 2005 Intel hit 3.73GHz with the Pentium Extreme Edition. Seven years later Intel's fastest client CPU only runs at 3.5GHz (3.9GHz with turbo) but has four times the cores and up to 3x the single threaded performance. Architecture, not just frequency, must improve over time.
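To put rough numbers on the voltage penalty, here is a minimal sketch using the common first-order approximation that dynamic power is proportional to C x V^2 x f. That model, the function name, and the scaling factors below are assumptions for illustration; none of them are measurements of the A5 or A6.

```python
def relative_dynamic_power(freq_scale: float, voltage_scale: float) -> float:
    """Dynamic power relative to a baseline, using P ~ C * V^2 * f.

    Capacitance C is assumed unchanged, so it cancels out of the ratio.
    """
    return (voltage_scale ** 2) * freq_scale


# Hypothetical scenario A: a 1.5x clock that needs 1.2x voltage to stay stable.
clock_with_voltage = relative_dynamic_power(freq_scale=1.5, voltage_scale=1.2)

# Hypothetical scenario B: the same 1.5x clock with no voltage increase.
clock_only = relative_dynamic_power(freq_scale=1.5, voltage_scale=1.0)

print(f"1.5x clock at 1.2x voltage: {clock_with_voltage:.2f}x power")
print(f"1.5x clock at 1.0x voltage: {clock_only:.2f}x power")
```

Under these assumed factors the voltage bump alone takes a 1.5x power increase to roughly 2.16x, which is why staying near minimum voltage matters so much for battery life.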

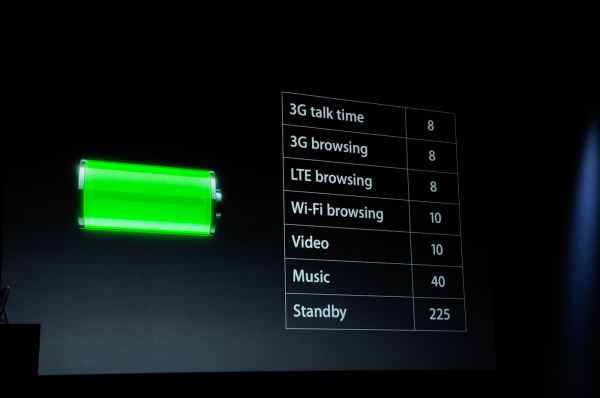

At its keynote, Apple promised longer battery life and 2x better CPU performance. It's clear that the A6 moved to 32nm but it's impossible to extract 2x better performance from the same CPU architecture while improving battery life over only a single process node shrink.
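As a back-of-the-envelope check, single-threaded performance can be approximated as instructions per clock (IPC) multiplied by frequency. The sketch below uses that simplification, which ignores memory and storage effects, along with a hypothetical helper name and hypothetical clock multipliers, to show how much IPC would have to improve to reach a 2x target.

```python
def required_ipc_gain(target_speedup: float, freq_scale: float) -> float:
    """IPC multiplier needed to hit target_speedup, given a clock multiplier.

    Assumes performance ~ IPC * frequency, a deliberate simplification.
    """
    if freq_scale <= 0:
        raise ValueError("freq_scale must be positive")
    return target_speedup / freq_scale


# Hypothetical clock multipliers; none of these are confirmed A6 figures.
for freq_scale in (1.0, 1.25, 1.5, 2.0):
    gain = required_ipc_gain(2.0, freq_scale)
    print(f"{freq_scale:.2f}x clock -> needs {gain:.2f}x IPC for 2x performance")
```

With an unchanged architecture (1.0x IPC) the only way to reach 2x in this model is to double the clock, which is the expensive path in power terms.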

Despite all of this, had it not been for some external confirmation, I would've probably settled on a pair of higher clocked A9s as the likely option for the A6. In fact, higher clocked A9s were what we originally claimed would be in the iPhone 5 in our NFC post.

I should probably give Apple's CPU team more credit in the future.

The bad news is I have no details on the design of Apple's custom core. Given Apple's willingness to spend on die area, I believe an A15/Krait class CPU core is a likely target: slightly wider front end, more execution resources, more flexible OoO execution engine, deeper buffers, bigger windows, etc. Support for VFPv4 guarantees a bigger core size than the Cortex A9, so it only makes sense that Apple would push the envelope everywhere else as well. I'm particularly interested in frequency targets and whether there's any clever dynamic clock work happening. Someone needs to run Geekbench on an iPhone 5 pronto.

I also have no indication how many cores there are. I am assuming two but Apple was careful not to report core count (as it has in the past). We'll get more details as we get our hands on devices in a week. I'm really interested to see what happens once Chipworks and UBM go to town on the A6.

163 Comments

secretmanofagent - Sunday, September 16, 2012 - link

Same here. I've enjoyed the Krait in-depth reviews as well. Keep up the good work.

abishekmuw - Sunday, September 16, 2012 - link

It seems that all the performance gains shown off by Apple are storage bound (launching app, saving files, viewing files).. there doesn't seem to be a performance data point for CPU bound tasks (browsing, imovie, garage band, etc). doesn't this make it more likely that the iphone could be faster because of improved storage (or filesystem), and not just a faster CPU?

jwcalla - Sunday, September 16, 2012 - link

Well that new Samsung NAND flash has like 4x the performance of previous flash (140 MB/s reads, 50 MB/s writes, plus good IOPS), but who knows if Apple decided to dip into that territory.

The "up to 2x faster" claim is probably a combination of improvements in the CPU, memory, storage, and compiler.

Unfortunately, the benchmarks won't be able to isolate the CPU performance alone since there's no control / standard to compare it to. They'll show A6 vs. Krait vs. Exynos, etc., but one can't really draw much of a conclusion about the CPU itself. You're basically benching the "total package".

The LLVM / Clang compiler that Apple uses is actually very well-tuned for ARM architectures.

UpSpin - Sunday, September 16, 2012 - link

Exactly. If the A6 is really 2 times faster than A5, they easily could have added a synthetic benchmark result which shows that the A6 computes 2 times faster, just to satisfy tech sites. But they didn't, they focused on things which could be caused by software optimizations (accelerated through GPU) or other tweaks as you said (storage).

I expected that the iPhone 5 will have an A15 to remain competitive with next gen Android smartphones. Now I think the A6 is even less impressive than Anand believes it is. No A15, just an A9 with A15 like improvements, maybe worse than Qualcomm Krait. The biggest improvement: die shrink.

darkcrayon - Sunday, September 16, 2012 - link

They also said 2x graphics performance, and none of the examples had *anything* to do with GPU. It won't take long for us to find out at least.

Shamesung-korean-made - Sunday, September 16, 2012 - link

LOL! I think talk of A15 had fandroid fans running scared! Galaxy s3 - designed by KOREANS!

cacca - Sunday, September 16, 2012 - link

Strange that in this article you don't comment that all the previous iPhone compatible gadgets/hardware can be thrown out the window.

So your alarm clock, hi-fi system, speakers, docking stations... everything is not compatible.

Obviously you can buy, for the usual high price, an adaptor (that will not be physically compatible with all your previous generation gadgets). Remember to buy the cable too.

So you end up paying 100$ more (check the prices for adaptor and Lightning cables), just to connect it to your PC/Apple.

Are all apple users so used to being swindled? Are they mentally impaired? There was no real technical reason to change, excluding the usual one.... grab zealot money.

Welcome to Apple, a company dedicated to the study of fanboyism and logic limits.

asendra - Sunday, September 16, 2012 - link

Are you for real?

First, you don't need 100$ in order to connect it to your pc, it comes with the usb cable. I assume your pc does have usb, right?

No technical reason? Besides the fact that it is 5 times smaller, symmetrical and all around a better connector more suited to modern technologies..

Besides, could you care to point to ANY company that has kept the same connector for nearly 9 years? I think it was long overdue and people have had more than enough time to benefit from their purchases.

I could point to many OEM who see fit to change their charger/connector every 6 months, but hey, all of us know they aren't screwing anyone because no one buys accessories for those devices.

UpSpin - Sunday, September 16, 2012 - link

If they changed the connector, thus made every iPhone 4S and previous accessory incompatible, why have they introduced a new proprietary connector and haven't made use of the standard micro USB connector?

True, the iPhone 5 connector, thanks to its symmetry, is more handy. But that's the only advantage, the disadvantage of introducing a new proprietary connector, if a standard connector is established already, is much larger. You still have to carry a USB micro adapter with you, you additionally have to carry an adapter for your old accessories which you won't upgrade in the near term (car dock, hifi station), so why not just move to USB and get compatible with all the USB standards? (MHL)

doobydoo - Sunday, September 16, 2012 - link

You don't need to carry any USB micro adapter with you, at all. What on earth are you on about.

You don't need microUSB full stop. I've honestly never used it - not once, in any device.

You take the SUPPLIED cable and plug it into your pc, or use the SUPPLIED cable to charge your phone. How are you struggling with that?

Regarding lightning vs microUSB, unless you've extensively benchmarked both and know the absolute limitations and performance of both - you simply cannot make any claims regarding any benefits it may or may not have.