Intel's Haswell: 20x Lower Platform Idle Power than Sandy Bridge, 2x GPU Performance of Ivy Bridge

by Anand Lal Shimpi on September 11, 2012 12:58 PM EST - Posted in

- IT Computing

- CPUs

- Intel

- Haswell

- Trade Shows

- IDF 2012

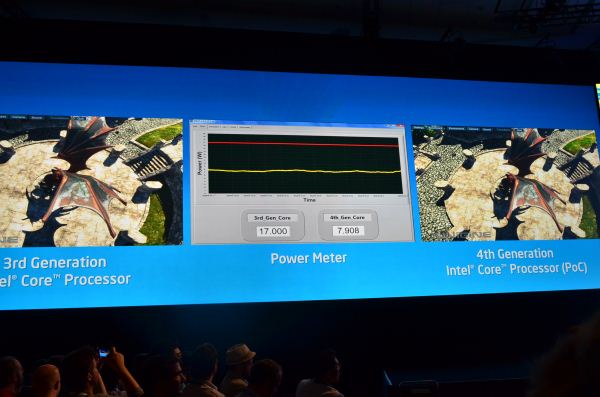

If you've been following our IDF Live Blog you've already seen this, but for everyone else: Intel gave us a hint at what Haswell will bring next year. It promises 20x lower platform idle power compared to Sandy Bridge, and up to 2x the GPU performance of Ivy Bridge.

Intel ran Unigine on a Haswell reference platform at 2x the frame rate of an Ivy Bridge system. Alternatively, Haswell could run at the same performance while using half the power.

23 Comments

csroc - Tuesday, September 11, 2012

Hmm, it's coming up on the five-year mark, time for me to build a new workstation. I'm not in any rush, and every time I read new news I flip-flop on whether I should just do it this winter or wait for Haswell. There's always the next chip to wait for, but my plan was to have a new system built by next summerish regardless, and it wouldn't happen any sooner than towards the end of this one.

Increased GPU performance isn't too important to me. Any gaming I do would still run off a dedicated GPU.

Lower power consumption, particularly at idle, is nice, but if it's just the CPU, as others are already wondering, how much of a dent is that really going to make? I leave my system on all the time, so every watt is nice, but the rest of the system may already draw more than an Ivy Bridge CPU at idle.

Decisions decisions.

csroc - Tuesday, September 11, 2012

"wouldn't happen any sooner than towards the end of this one." I changed what I was saying but didn't fix that. It was supposed to say "towards the end of this year".

Magichands8 - Tuesday, September 11, 2012

Well, if you don't care much for graphics performance and you are looking for a workstation, then I'm not sure Haswell has anything interesting to offer, since graphics and low power consumption are what Haswell is all about. I was thinking of waiting until 2013 for Intel to release high-performance six-core Haswell parts, but from what I've read that just isn't going to happen. The kiddies have to be able to watch 4K video on their postage-stamp smartphone screens, and that seems to be what Intel is most concerned with these days. Haswell could be really interesting for long-battery-life productivity work on a laptop, though. If I were building a system today for performance, I'd be looking at maybe multiple AMD Opterons. From what I've read, Intel is refusing to even comment on Haswell's CPU performance. To me that's a bad sign and implies that it's not so hot. I wouldn't be surprised if it were just a couple of percent better than Ivy Bridge.

csroc - Tuesday, September 11, 2012

That's the overall impression I've been getting as well. Most of the information I've been reading hasn't really warmed me up to Haswell. Since I commented, I have been rethinking things, and on the whole I'm not that convinced Haswell would be too important. It could be great for mobile, but on a desktop it just doesn't sound like a big step.

I wonder if there will be an Ivy Bridge-E.

azazel1024 - Tuesday, September 11, 2012

It may also include the chipset. I don't recall what process Cougar Point was built on, but I think it was 60nm (maybe 45nm). It certainly isn't even 32nm, and I know Panther Point is on the same process node as Cougar Point, so it is possible that Shark Bay (that is Haswell's new chipset, right?) might actually be on 22nm and jump a couple of process nodes. Chipsets usually seem to lag 1-2 node generations behind the processors.

So that could be where the bulk of the power savings is, though I am sure there are a lot of processor power savings as well.

For a desktop, the impact is very small. In a notebook that is in S1 (i.e., possibly idle, but not asleep), the other motherboard components, drive idle/sleep power consumption, Wi-Fi adapter draw, display power, DRAM, etc. already add up to a fair amount more than the chipset and CPU at idle. Sure, that might still get you an extra 10-15% battery life. Where it will really help is in tablets, where less is connected to begin with and the display is likely smaller and lower power. It also gives a huge boost to the "connected" sleep mode, where nearly everything else is powered down. Compared to trying to do that with a current processor, it could be the difference between a 2-3 W "connected sleep" power draw and a couple of hundred milliwatts (maybe 200-400 mW), or roughly 20-30 hours versus a week of standby. This is of course compared to current-day S3, which probably draws around 150-300 mW to keep the RAM in its lower-power refresh mode with basically everything else off.
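The standby figures above follow from simple arithmetic: standby time is battery capacity divided by average power draw. The sketch below assumes a 60 Wh battery, a figure back-derived from the 2-3 W giving 20-30 hours estimate (the comment itself gives no capacity), and it ignores conversion losses and battery self-discharge.

```python
# Assumption: a 60 Wh battery, inferred from the comment's own numbers
# (2-3 W lasting 20-30 hours). Not a measured or quoted value.
BATTERY_WH = 60.0


def standby_hours(draw_watts: float, battery_wh: float = BATTERY_WH) -> float:
    """Hours of standby from a full battery at a constant average draw.

    Ignores regulator losses and battery self-discharge.
    """
    return battery_wh / draw_watts


# Draw estimates taken from the comment: 2-3 W for connected sleep on a
# current processor versus roughly 200-400 mW for a Haswell-class platform.
cases = [
    ("current CPU, connected sleep (high)", 3.0),
    ("current CPU, connected sleep (low)", 2.0),
    ("Haswell-class estimate (high)", 0.4),
    ("Haswell-class estimate (low)", 0.2),
]

for label, watts in cases:
    hours = standby_hours(watts)
    print(f"{label:38s} {watts:5.2f} W -> {hours:6.1f} h ({hours / 24:4.1f} days)")
```

At the assumed 60 Wh, 200-400 mW works out to roughly 6 to 12.5 days, which is consistent with the "a week" estimate.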

Frankly, Haswell is sounding pretty impressive, both for x86 tablets and for notebooks. I am sure it won't hurt for desktops either, but in that kind of power envelope the improved GPU is superfluous for most users (though it might just make a mean HTPC for me), and most don't care about the lower power draw.

dishayu - Tuesday, September 11, 2012

All thanks to the on-die VR and little tweaks here and there?

Wolfpup - Tuesday, September 11, 2012

Okay, so the power thing is cool, really even for desktops. The video is cool maybe for tablets...maybe.

But for desktops and notebooks, it continues to piss me off that they're blowing hundreds of millions of transistors on their stupid video that could be used towards a more powerful CPU...or heck, I'd sooner they just chopped that part off and took the profit!

Ugh, what AMD is doing is actually okay-ish at the low end, but for a mid-range system? There's just no excuse for this integrated stuff...

Magichands8 - Tuesday, September 11, 2012

Agreed, it's very disappointing to see Intel essentially halt any development on the performance side. There's no way I'd build a system today without a standalone GPU, and as it stands I can't even think about building a system with Ivy Bridge without feeling like I'm being cheated by being forced to pay for the useless half of the chip.

Although honestly, I don't totally blame Intel for all of that. I understand that Intel has to adapt to the market and that they are being forced to compete with ARM on the mobile end whether they like it or not. What I don't quite understand is why the market is moving in that direction. Heavy-duty graphics power makes sense to me for large-screen displays for watching HD content at home or multi-LCD gaming setups, but doesn't make any sense to me at all for mobile devices. Honestly, I don't even own a smartphone or tablet, but aren't current chips already powerful enough to handle what the average user wants to do with them? How much smoother can Jelly Bean get? Where exactly does Intel think it's going to go with its processors after they build one that can run Battlefield 6 and Windows 9 Ultra Super Duper Edition on my clamshell cell phone?

DanNeely - Tuesday, September 11, 2012

While they're hyping the IGP and low power, since geeks with desktops and discrete GPUs are a shrinking minority of the market, take a look at slides 11-13: they've doubled theoretical FP throughput with the new AVX2 instructions (which will need app recompiles at a minimum to benefit), doubled cache bandwidth, and added miscellaneous branch prediction improvements, a larger OOO buffer, and reduced latencies for virtualization.

aicom - Wednesday, September 12, 2012

Let's put this in perspective. Haswell DOES increase CPU performance per clock. They've doubled the L2 bandwidth by allowing L2 loads once per clock instead of once every two clocks. They've even added a whole new ALU pipeline! The Intel Core microarchitectures have been 3-issue on the ALU side since the original Core 2 Duo. With Haswell, Intel will be able to dispatch 4 ALU operations per clock and 8 total micro-ops (compared to 3 and 6 in Ivy Bridge). This is certainly a major improvement compared to the usual "make caches bigger" strategy.