Building the 2012 AnandTech SMB / SOHO NAS Testbed

by Ganesh T S on September 5, 2012 6:00 PM EST. Posted in IT Computing, Storage, NAS

Motherboard

A number of vendors exist in the dual processor workstation motherboard market. At the time of the build, LGA 2011 Xeons had already been introduced, and we decided to focus on boards supporting those processors. Since we wanted to devote one physical disk and one network interface to each VM, it was essential that the board have enough PCIe slots for multiple quad-port server NICs as well as enough native SATA ports. For our build, we chose the Asus Z9PE-D8 WS motherboard, which uses the SSI EEB form factor.

Based on the C602 chipset, this dual LGA 2011 motherboard supports 8 DIMMs and has 7 PCIe 3.0 slots. The lanes can be organized as either 2 x16 + 1 x16 + 1 x8 or 4 x8 + 1 x16 + 1 x8. All the slots are physically 16 lanes wide. The Intel C602 chipset provides two 6 Gbps SATA ports and eight 3 Gbps SATA ports. A Marvell PCIe 9230 controller provides four extra 6 Gbps ports, for a total of 14 SATA ports. This allows us to devote two ports to the host OS of the workstation and one port to each of the twelve planned VMs. The Z9PE-D8 WS motherboard also has two GbE ports based on the Intel 82574L. Two Gigabit LAN controllers are not going to be sufficient for all our VMs. We will address this issue further down in the build.
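The port arithmetic in this paragraph can be checked with a short sketch. The port and slot counts come from the text; the variable names are illustrative, and the idea that every VM gets its port from a quad-port add-in card occupying a single PCIe slot is an assumption made here for the calculation, not a description of the final NIC choice.

```python
import math

# SATA ports listed for the Z9PE-D8 WS in the paragraph above.
sata_ports = {
    "C602 6 Gbps": 2,
    "C602 3 Gbps": 8,
    "Marvell 9230 6 Gbps": 4,
}

HOST_SATA_PORTS = 2  # ports reserved for the host OS
PLANNED_VMS = 12     # one physical disk and one network port per VM

total_sata = sum(sata_ports.values())
spare_sata = total_sata - HOST_SATA_PORTS - PLANNED_VMS

# Assumption for illustration: each VM uses one port on a quad-port
# add-in NIC, and each card takes one of the seven PCIe slots.
PORTS_PER_NIC = 4
PCIE_SLOTS = 7
nic_cards = math.ceil(PLANNED_VMS / PORTS_PER_NIC)

print(f"SATA ports: {total_sata} total, {spare_sata} spare")
print(f"Quad-port NICs needed: {nic_cards} (board has {PCIE_SLOTS} slots)")
```

Under these assumptions the SATA budget is exactly used up, while the NICs occupy fewer than half of the available slots.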

The motherboard also has 4 USB 3.0 ports, thanks to an ASMedia USB 3.0 controller. The Marvell SATA-to-PCIe bridge and the ASMedia USB 3.0 controller are connected to the 8 PCIe lanes in the C602. All the PCIe 3.0 lanes come from the processors. The motherboard also supports Asus's SSD caching, in which any installed SSD can be used as a cache for frequently accessed data without any size limitation. The Z9PE-D8 WS also has a Realtek ALC898 HD audio codec. Neither SSD caching nor the audio codec is relevant to our build.

CPUs

One of the main goals of the build was to ensure low power consumption. At the same time, we wanted to run twelve VMs simultaneously. In order to ensure smooth operation, each VM needs at least one vCPU allocated exclusively to it. The Xeon E5-2600 family (Sandy Bridge-EP) has CPUs with core counts ranging from 2 to 8, with TDPs from 60 W to 150 W. Each core has two threads. Keeping in mind the number of VMs we wanted to run, we specifically looked at the 6- and 8-core variants, as two of those processors would give us 12 or 16 cores. Within these, we restricted ourselves to the low power variants: the hexa-core E5-2630L (60 W TDP) and the octa-core E5-2648L / E5-2650L (70 W TDP).
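As a rough illustration of this sizing exercise, the sketch below totals cores, threads and TDP for a dual-socket configuration of each low power candidate. The core counts and TDPs are the ones quoted above; the `Cpu` class and `dual_socket_totals` helper are hypothetical names, and treating one vCPU as one physical core is a simplification made for the calculation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cpu:
    model: str
    cores: int
    tdp_watts: int


SOCKETS = 2
THREADS_PER_CORE = 2
PLANNED_VMS = 12  # simplification: one physical core per VM

candidates = [
    Cpu("E5-2630L", cores=6, tdp_watts=60),
    Cpu("E5-2648L", cores=8, tdp_watts=70),
    Cpu("E5-2650L", cores=8, tdp_watts=70),
]


def dual_socket_totals(cpu: Cpu) -> dict:
    """Return aggregate figures for two identical CPUs."""
    cores = cpu.cores * SOCKETS
    return {
        "cores": cores,
        "threads": cores * THREADS_PER_CORE,
        "tdp_watts": cpu.tdp_watts * SOCKETS,
        "spare_cores": cores - PLANNED_VMS,
    }


for cpu in candidates:
    totals = dual_socket_totals(cpu)
    print(
        f"2 x {cpu.model}: {totals['cores']} cores, "
        f"{totals['threads']} threads, {totals['tdp_watts']} W TDP, "
        f"{totals['spare_cores']} cores beyond the {PLANNED_VMS} VMs"
    )
```

All three candidates cover twelve VMs under this simplification, so the comparison comes down to TDP and spare cores.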

CPU decisions for machines meant to run VMs usually have to be made after taking the requirements of the workload into consideration. In our case, the workload for each VM involved IOMeter and Intel NASPT (more on these in the software infrastructure section). Both of these programs tend to be I/O-bound rather than CPU-bound, and can run reliably on even Pentium 4 processors. Therefore, the per-core performance of the three processors was not a factor that we were worried about.

Out of the three processors, we decided to go ahead with the hexa-core Xeon E5-2630L. The cores run at 2 GHz, but can Turbo up to 2.5 GHz when just one core is active. Each core has a 256 KB L2 cache, with a common 15 MB L3. With a TDP of just 60 W, it enabled us to focus on energy efficiency. Two Xeon E5-2630Ls (a total of 120 W TDP) enabled us to proceed with our plan to run 12 VMs concurrently.

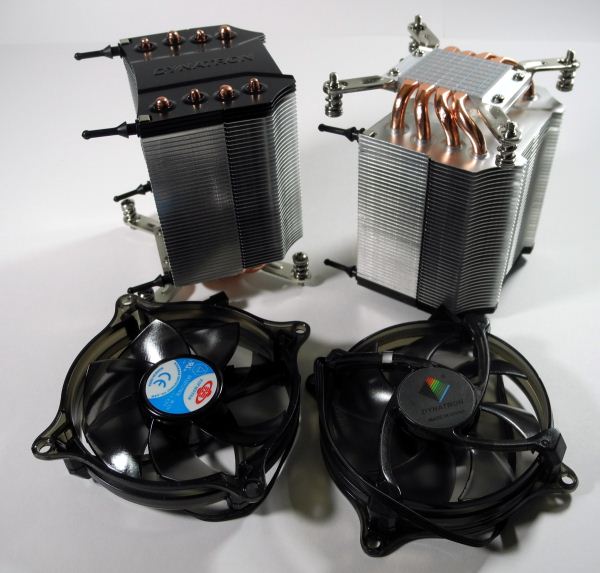

Coolers

The choice of coolers for the processors is dictated by the chassis used for the build. At the start of the build, we decided to go with a tower desktop configuration. Asus recommended the Dynatron R17 for use with the Z9PE-D8 WS, and we went ahead with their suggestion.

The R17 coolers are designed for LGA 2011 sockets in 3U and larger rackmount form factors as well as tower desktop and workstation solutions. They are made of aluminium fins with four copper heat pipes. Thermal compound is pre-applied at the base. Installation of the R17s was quite straightforward, but care had to be taken to ensure that the side meant to mount the cooler’s fans didn’t face the DIMM slots on the Z9PE-D8 WS.

The fans on the R17 operate between 1000 and 2500 rpm, and consume between 0.96 W and 3 W at these speeds. Noise levels are respectable and range from 17 dBA to 32 dBA. The R17 can cool CPUs with TDPs of up to 160 W. The Dynatron R17s kept the 60 W E5-2630Ls between 45 °C and 55 °C even under our full workloads.

74 Comments

Tor-ErikL - Thursday, September 6, 2012

As always a great article and a sensible testbench which can be scaled to test everything from small setups to larger setups. Good choice!

However, I would also like some type of test that is less geared towards technical performance and more towards real world scenarios.

So to help out, I give you my real world scenario:

Family of two adults and two teenagers...

Equipment in my house is:

4 laptops running on wifi network

1 workstation for work

1 mediacenter running XBMC

1 Synology NAS

Laptops stream music/movies from my NAS - usually, I guess, no more than two of these run at the same time

MediaCenter also streams music/movies from the same NAS at the same time

In addition, some of the laptops browse all the family pictures which are stored on the NAS and do light file copying to and from the NAS.

The NAS itself downloads movies/music/TV shows and does unpacking and internal file transfers

My guess is that in a typical home use scenario there is not that much intensive file copying going on, usually only light transfers through mainly either wifi or 100 Mb links

I think the key factor is that there are usually multiple clients connecting and streaming different stuff, at most 4-5 clients

Also, as mentioned, the differences between sharing protocols like SMB/CIFS would be interesting to see more details about.

Looking forward to the next chapters in your testbench :)

Jeff7181 - Thursday, September 6, 2012

I'd be very curious to see tests involving deduplication. I know deduplication is found more on enterprise-class type storage systems, but WHS used SIS, and FreeNAS uses ZFS, which supports deduplication.

_Ryan_ - Thursday, September 6, 2012

It would be great if you guys could post results for the Drobo FS.

Pixelpusher6 - Thursday, September 6, 2012

Quick Correction - On the last page under specs for the memory do you mean 10-10-10-30 instead of 19-10-10-30?

I was wondering about the setup with the CPUs for this machine. If each of the 12 VMs use 1 dedicated real CPU core then what is the host OS running on? With 2 Xeon E5-2630Ls that would be 12 real CPU cores.

I'm also curious about how hyper-threading works in a situation like this. Does each VM have 1 physical thread and 1 HT thread for a total of 2 threads per VM? Is it possible to run a VM on a single HT core without any performance degradation? If the answer is yes then I'm assuming it would be possible to scale this system up to run 24 VMs at once.

ganeshts - Thursday, September 6, 2012

Thanks for the note about the typo in the CAS timings. Fixed it now.

We took a punt on the fact that I/O generation doesn't take up much CPU. So, the host OS definitely shares CPU resources with the VMs, but the host OS handles that transparently. When I mentioned that one CPU core is dedicated to each VM, I meant that the Hyper-V settings for the VM indicated 1 vCPU instead of the allowed 2, 3 or 4 vCPUs.

Each VM runs only 1 thread. I am still trying to figure out how to increase the VM density in the current set up. But, yes, it looks like we might be able to hit 24 VMs because the CPU requirements from the IOMeter workloads are not extreme.

dtgoodwin - Thursday, September 6, 2012

Kudos on excellent choice of hardware for power efficiency. 2 CPUs, 14 network ports, 8 sticks of RAM, and a total of 14 SSDs idling at just over 100 watts is very impressive.

casteve - Thursday, September 6, 2012

Thanks for the build walkthrough, Ganesh. I was wondering why you used a 850W PSU when worst case DC power use is in the 220W range? Instead of the $180 Silverstone Gold rated unit, you could have gone with a lower power 80+ Gold or Platinum PSU for less $'s and better efficiency at your given loads.

ganeshts - Thursday, September 6, 2012

Just a hedge against future workloads :)

haxter - Thursday, September 6, 2012

Guys yank those NICs and get a dual 10gbe card in place. SOHO is 10Gbe these days. What gives? How are you supposed to test SOHO NAS with each VM so crippled?

extide - Thursday, September 6, 2012

10GBe is certainly not SOHO.