The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

by Ryan Smith on August 16, 2012 9:00 AM EST

It’s hard not to notice that NVIDIA has a bit of a problem right now. In the months since the launch of their first Kepler product, the GeForce GTX 680, the company has introduced several other Kepler products into the desktop 600 series. With the exception of the GeForce GT 640 – their only budget part – all of those 600 series parts have been targeted at the high end, where they became popular, well-received products that significantly tilted the market in NVIDIA’s favor.

The problem with this is almost paradoxical: these products are too popular. Between the GK104-heavy desktop GeForce lineup, the GK104 based Tesla K10, and the GK107-heavy mobile GeForce lineup, NVIDIA is selling every 28nm chip they can make. For a business prone to boom and bust cycles this is not a bad problem to have, but it means NVIDIA has been unable to expand their market presence as quickly as customers would like. For the desktop in particular this means NVIDIA has a very large, very noticeable hole in their product lineup between $100 and $400, which composes the mainstream and performance market segments. These market segments aren’t quite the high margin markets NVIDIA is currently servicing, but they are important to fill because they’re where product volumes increase and where most of their regular customers reside.

Long-term NVIDIA needs more production capacity and a wider selection of GPUs to fill this hole, but in the meantime they can at least begin to fill it with what they have to work with. This brings us to today’s product launch: the GeForce GTX 660 Ti. With nothing between GK104 and GK107 at the moment, NVIDIA is pushing out one more desktop product based on GK104 in order to bring Kepler to the performance market. Serving as an outlet for further binned GK104 GPUs, the GTX 660 Ti will be launching today as NVIDIA’s $300 performance part.

| | GTX 680 | GTX 670 | GTX 660 Ti | GTX 570 |
|---|---|---|---|---|
| Stream Processors | 1536 | 1344 | 1344 | 480 |
| Texture Units | 128 | 112 | 112 | 60 |
| ROPs | 32 | 32 | 24 | 40 |
| Core Clock | 1006MHz | 915MHz | 915MHz | 732MHz |
| Shader Clock | N/A | N/A | N/A | 1464MHz |
| Boost Clock | 1058MHz | 980MHz | 980MHz | N/A |
| Memory Clock | 6.008GHz GDDR5 | 6.008GHz GDDR5 | 6.008GHz GDDR5 | 3.8GHz GDDR5 |
| Memory Bus Width | 256-bit | 256-bit | 192-bit | 320-bit |
| VRAM | 2GB | 2GB | 2GB | 1.25GB |
| FP64 | 1/24 FP32 | 1/24 FP32 | 1/24 FP32 | 1/8 FP32 |
| TDP | 195W | 170W | 150W | 219W |
| Transistor Count | 3.5B | 3.5B | 3.5B | 3B |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 40nm |
| Launch Price | $499 | $399 | $299 | $349 |

In the Fermi generation, NVIDIA filled the performance market with GF104 and GF114, the backbone of the very successful GTX 460 and GTX 560 series of video cards. Given Fermi’s 4 chip product stack – specifically the existence of the GF100/GF110 powerhouse – this is a move that made perfect sense. However it’s not a move that works quite as well for NVIDIA’s (so far) 2 chip product stack. In a move very reminiscent of the GeForce GTX 200 series, with GK104 already serving the GTX 690, GTX 680, and GTX 670, it is also being called upon to fill out the GTX 660 Ti.

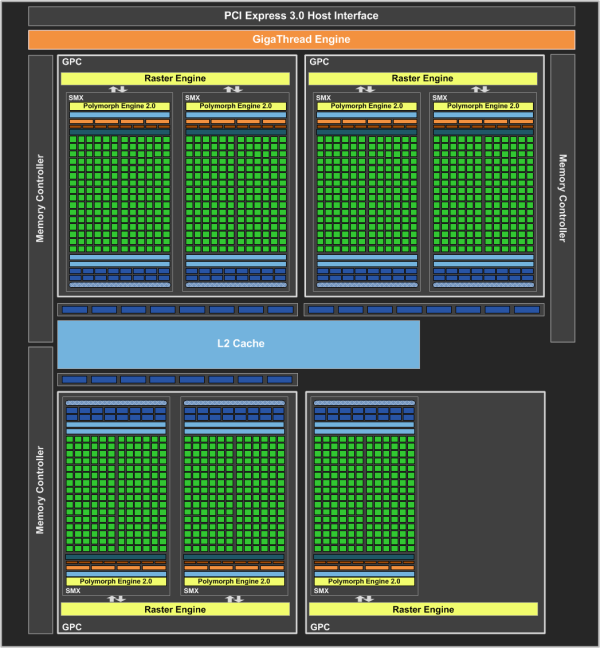

All things considered the GTX 660 Ti is extremely similar to the GTX 670. The base clock is the same, the boost clock is the same, the memory clock is the same, and even the number of shaders is the same. In fact there’s only a single significant difference between the GTX 670 and GTX 660 Ti: the GTX 660 Ti surrenders one of GK104’s four ROP/L2/Memory clusters, reducing it from a 32 ROP, 512KB L2, 4 memory channel part to a 24 ROP, 384KB L2, 3 memory channel part. With NVIDIA already binning chips for assignment to GTX 680 and GTX 670, this allows NVIDIA to further bin those GTX 670 parts without much additional effort. Though given the relatively small size of a ROP/L2/Memory cluster, it’s a bit surprising they have all that many chips that don’t meet GTX 670 standards.

In any case, as a result of these design choices the GTX 660 Ti is a fairly straightforward part. The 915MHz base clock and 980MHz boost clock of the chip along with the 7 SMXes means that GTX 660 Ti has the same theoretical compute, geometry, and texturing performance as GTX 670. The real difference between the two is on the render operation and memory bandwidth side of things, where the loss of the ROP/L2/Memory cluster means that GTX 660 Ti surrenders a full 25% of its render performance and its memory bandwidth. Interestingly NVIDIA has kept their memory clocks at 6GHz – in previous generations they would lower them to enable the use of cheaper memory – which is significant for performance since it keeps the memory bandwidth loss at just 25%.
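To put rough numbers on that 25%, here is a minimal Python sketch of the usual back-of-the-envelope formulas, fed with the specifications from the table above. The function names are our own, and the ROP figure assumes an idealized one pixel per ROP per clock at the base clock, which real workloads will not necessarily sustain.

```python
# Back-of-the-envelope peak figures from the spec table.
# Function names are illustrative; the formulas are idealized upper bounds.

def memory_bandwidth_gbs(bus_width_bits: int, data_rate_ghz: float) -> float:
    """Peak memory bandwidth in GB/s: bytes per transfer x effective data rate."""
    return bus_width_bits / 8 * data_rate_ghz


def pixel_fillrate_gpix(rops: int, core_clock_mhz: float) -> float:
    """Peak ROP throughput in Gpixels/s, assuming one pixel per ROP per clock."""
    return rops * core_clock_mhz / 1000


def fp32_gflops(stream_processors: int, core_clock_mhz: float) -> float:
    """Peak FP32 rate in GFLOPS, counting a fused multiply-add as two operations."""
    return stream_processors * 2 * core_clock_mhz / 1000


# Values taken from the spec table (base clocks, effective memory data rate).
cards = {
    "GTX 670":    {"sps": 1344, "rops": 32, "bus": 256, "core": 915, "mem": 6.008},
    "GTX 660 Ti": {"sps": 1344, "rops": 24, "bus": 192, "core": 915, "mem": 6.008},
}

for name, c in cards.items():
    print(
        f"{name}: "
        f"{memory_bandwidth_gbs(c['bus'], c['mem']):.1f} GB/s, "
        f"{pixel_fillrate_gpix(c['rops'], c['core']):.1f} Gpix/s, "
        f"{fp32_gflops(c['sps'], c['core']):.0f} GFLOPS"
    )
```

At base clocks this works out to roughly 192GB/s versus 144GB/s of memory bandwidth with identical shader throughput, which is the 25% gap described above.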

How this loss of render operation performance and memory bandwidth will play out is going to depend heavily on the task at hand. We’ve already seen GK104 struggle with a lack of memory bandwidth in games like Crysis, so coming from GTX 670 this is only going to exacerbate that problem; a full 25% drop in performance is not out of the question here. However in games that are shader heavy (but not necessarily memory bandwidth heavy) like Portal 2, this means that GTX 660 Ti can hang very close to its more powerful sibling. There’s also the question of how NVIDIA’s nebulous asymmetrical memory bank design will impact performance, since 2GB of RAM doesn’t fit cleanly into 3 memory banks. All of these are issues where we’ll have to turn to benchmarking to better understand.
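To illustrate why 2GB on three channels is awkward, the following Python sketch models one plausible arrangement. It is an assumption on our part, not NVIDIA's documented scheme: we suppose two 64-bit controllers carry 512MB each and the third carries 1GB, and that addresses are striped across every controller that still has capacity left. The function name and the capacity split are hypothetical.

```python
# A simple model of an asymmetric memory layout. The per-controller
# capacities and the striping policy are assumptions for illustration only.

def interleave_regions(capacities_mb, channel_width_bits=64):
    """Return (region_size_mb, effective_bus_width_bits) tuples.

    Each region is striped across every controller that still has unused
    capacity, so later regions are served by fewer controllers.
    """
    regions = []
    remaining = sorted(capacities_mb)
    served = 0  # capacity per controller already consumed by earlier regions
    while remaining:
        smallest = remaining[0]
        depth = smallest - served
        if depth > 0:
            active = len(remaining)
            regions.append((depth * active, active * channel_width_bits))
        served = smallest
        # Controllers that are now full drop out of later regions.
        remaining = [c for c in remaining if c > smallest]
    return regions


# Assumed GTX 660 Ti layout: 512MB + 512MB + 1024MB = 2GB on three controllers.
for size_mb, width in interleave_regions([512, 512, 1024]):
    print(f"{size_mb}MB reachable over a {width}-bit path")
```

Under these assumptions the first 1.5GB would be reachable over the full 192-bit bus while the final 512MB would sit behind a single 64-bit controller. Whether the hardware actually behaves this way is exactly what the benchmarks have to tell us.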

The impact on power consumption on the other hand is relatively straightforward. With clocks identical to the GTX 670, power consumption has only been reduced marginally due to the disabling of the ROP cluster. NVIDIA’s official TDP is 150W, with a power target of 134W. This compares to a TDP of 170W and a power target of 141W for the GTX 670. Given the mechanisms at work for NVIDIA’s GPU boost technology, it’s the power target that is a far better reflection of what to expect relative to the GTX 670. On paper this means that GK104 could probably be stuffed into a sub-150W card with some further functional units being disabled, but in practice desktop GK104 GPUs are probably a bit too power hungry for that.

Moving on, this launch will be what NVIDIA calls a “virtual” launch, which is to say that there aren’t any reference cards being shipped to partners to sell or to press to sample. Instead all of NVIDIA’s partners will be launching with semi-custom and fully-custom cards right away. This means we’re going to see a wide variety of cards right off the bat, however it also means that there will be less consistency between partners since no two cards are going to be quite alike. For that reason we’ll be looking at a slightly wider selection of partner designs today, with cards from EVGA, Zotac, and Gigabyte occupying our charts.

As for the launch supply, with NVIDIA having licked their GK104 supply problems a couple of months ago the supply of GTX 660 Ti cards looks like it should be plentiful. Some cards are going to be more popular than others and for that reason we expect we’ll see some cards sell out, but at the end of the day there shouldn’t be any problem grabbing a GTX 660 Ti on today’s launch day.

Pricing for GTX 660 Ti cards will start at $299, continuing NVIDIA’s tidy hierarchy of a GeForce 600 at every $100 price point. With the launch of the GTX 660 Ti NVIDIA will finally be able to start clearing out the GTX 570, a not-unwelcome thing as the GTX 660 Ti brings with it the Kepler family features (NVENC, TXAA, GPU boost, and D3D 11.1) along with nearly twice as much RAM and much lower power consumption. However this also means that despite the name, the GTX 660 Ti is a de facto replacement for the GTX 570 rather than the GTX 560 Ti. The sub-$250 market the GTX 560 Ti launched into will continue to be served by Fermi parts for the time being. NVIDIA will no doubt see quite a bit of success even at $300, but it probably won’t be quite the hot item that the GTX 560 Ti was.

Meanwhile for a limited period of time NVIDIA will be sweetening the deal by throwing in a copy of Borderlands 2 with all GTX 600 series cards as a GTX 660 Ti launch promotion. Borderlands 2 is the sequel to Gearbox’s 2009 FPS/RPG hybrid, and is a TWIMTBP game that will have PhysX support along with planned support for TXAA. Like their prior promotions this is being done through retailers in North America, so you will need to check and ensure your retailer is throwing in Borderlands 2 vouchers with any GTX 600 card you purchase.

On the marketing front, as a performance part NVIDIA is looking to not only sell the GTX 660 Ti as an upgrade to 400/500 series owners, but to also entice existing GTX 200 series owners to upgrade. The GTX 660 Ti will be quite a bit faster than any GTX 200 series part (and cooler/quieter than all of them), with the question being whether it’s going to be enough to spur those owners to upgrade. NVIDIA did see a lot of success last year with the GTX 560 driving the retirement of the 8800GT/9800GT, so we’ll see how that goes.

Anyhow, as with the launch of the GTX 670 cards virtually every partner is also launching one or more factory overclocked models, so the entire lineup of launch cards will be between $299 and $339 or so. This price range will put NVIDIA and its partners smack-dab between AMD’s existing 7000 series cards, which have already been shuffling in price some due to the GTX 670 and the impending launch of the GTX 660 Ti. Reference-clocked cards will sit right between the $279 Radeon HD 7870 and $329 Radeon HD 7950, which means that factory overclocked cards will be going head-to-head with the 7950.

On that note, with the launch of the GTX 660 Ti we can finally shed some further light on this week’s unexpected announcement of a new Radeon HD 7950 revision from AMD. As you’ll see in our benchmarks the existing 7950 maintains an uncomfortably slight lead over the GTX 660 Ti, which has spurred on AMD to bump up the 7950’s clockspeeds at the cost of power consumption in order to avoid having it end up as a sub-$300 product. The new 7950B is still scheduled to show up at the end of this week, with AMD’s already-battered product launch credibility hanging in the balance.

For this review we’re going to include both the 7950 and 7950B in our results. We’re not at all happy with how AMD is handling this – it’s the kind of slimy thing that has already gotten NVIDIA in trouble in the past – and while we don’t want to reward such actions it would be remiss of us not to include it since it is a new reference part. And if AMD’s credibility is worth anything it will be on the shelves tomorrow anyhow.

Summer 2012 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| Radeon HD 7970 GHz Edition | $469/$499 | GeForce GTX 680 |
| Radeon HD 7970 | $419/$399 | GeForce GTX 670 |
| Radeon HD 7950 | $329 | |
| | $299 | GeForce GTX 660 Ti |
| Radeon HD 7870 | $279 | |
| | $279 | GeForce GTX 570 |
| Radeon HD 7850 | $239 | |

313 Comments


TheJian - Sunday, August 19, 2012

You forgot to mention the extra heat, noise and watts it takes to do that 1250mhz. Catching NV's cards isn't free in any of these regards as the 7950 BOOST edition already shows vs. even the zotac Amp version of the 660ti.

AMD fanboys might call these features these days I guess...

Galidou - Monday, August 20, 2012

7950 boost is a reference board, as we said before, 4 out of 18 boards are reference on newegg. It's unlikely people will buy reference cooler from AMD because they're plain bad if you overclock. Will people putting the reference design out of the way when speaking about overclock....Noise and temperature when overclocked on either for example Twin Frozr3 from MSI or sapphire OC are ALOT more silent and cool even fully overclocked....

TheJian - Monday, August 20, 2012

Nearly every card on newegg is OC'd by manufacturer and the top listed boost isn't even the top it can do by default which os a Nice.

CeriseCogburn - Thursday, August 23, 2012

The GTX 580 was already way ahead, so it didn't have to catch up to 6970. When OC'ed it was so far ahead it was ridiculous.

That's why all the amd fans had a hissy fit when the 680 came out - it won on power - frames, features, price - all they could whine about was it wasn't "greatly faster" than last gen (finally admitting of course the 580 STOMPED the pedal to the floor, held it there, and blew the doors off everything before it).

See, these are the types of bias that always rear their ugly little hate filled heads.

Galidou - Thursday, August 23, 2012

''ugly little hate filled heads''

The sentence speaks for itself about who's writing it, you're one of them sorry, if it wasn't so filled with hate maybe I could pass but.... no.

It won for the first time on power/performance, let's not do like it has always been like that.... and before even if they didn't win on that front you speak like they won on everything forever. There's nothing good about AMD we already know your opinion, you don't have to spread some more hatred on the subject, we know it.

Just do yourself a favor, stop lacking respect to others for something everyone already knows about you, you hate AMD end of the line.

CeriseCogburn - Thursday, August 23, 2012

You were wrong again and made a completely false comparison, and got called on it.

Should I just call you blabbering idiot instead of hate filled biased amd fanboy ?

How about NEITHER, and you keeping the facts straight ?

HEY !

That would actually be nice, but you're not nice.

Galidou - Tuesday, August 21, 2012

You didn't get the point of the memory controller/memory quantity they said here, poor newbie, let me explain things for you. On GCN there's 6 64bit memory bus, now divide 3gb of memory into 6, bingo 512mb each controller. Now take 2gb of memory and divide it by 3 memory bus of the 660 ti(192bit), ohhh you can't do this, what that means is there are 2 64 bit buses that takes care of a 512mb chip and the 2 other memory buses take care of 2x512 memory each.

Asynchronous memory may not be that bad, it isn't a weirdo theory either, my little girl that's 10 years old would understand it if I would explain her that simple mathematical equation. Mr Cogburn the expert with less logic than a 10 y old girl... what are you doing here, you're so good, maybe you should join us at overclock.net and discuss with some pro overclockers and show us your results of your cpu/gpu on nitrogen if you're so pro about hardware.

CeriseCogburn - Thursday, August 23, 2012

Wow, and to think you just said you couldn't support the hatred, but there you go spreading your hatred again.

The author is WRONG. Be a man and face the facts.

Galidou - Thursday, August 23, 2012

I didn't use a damn word that lacked of respect to you in all my previous posts unlike you calling me dummy, stupid, and so on in some previous post. You used words nazi, evil, and so on against AMD but I'm the one who spread the HATE LOL COMON.

Don't try me, you are the most disrespectful, but still knowledgeable, person I've ever seen at your times. You expect me to stay totally cold all the time in face of the fact you diminish and attack people calling them names because they are Fanboys.... COMON.

I speak once against your logic but every post you have is full of hatred and attack to people but ohhh when I say something ONCE, it's BAD. COMON.... Well I know it wasn't my best one and I offer you my dearest excuses, I truly am sorry. I was at the end of the roll, you're hard to follow, using everything you can to make us feel like AMD is related to cancer, hell, nazis and such while I have a 4870 and 6850 crossfire that served me well.

Imagine someone attacking and diminishing the user of a product because he uses the said product and you're one owner of that said product. Someone lacking of respect to YOU in every way because you use that thing and you actually like it and it served you well, you had no problem with it but still he can't stop and almost tries to make you believe that if you bought that, it's because you are plain stupid. You'd be mad, well that's what you make feel to most of AMD users the way you speak of them.

I'm sorry for being such an ass comparing your logic to my 10 years old daughter while I know you're more logical than her. But you should really be sorry to any AMD video card owner that reads you because you really make em believe AMD is the devil and their products are worthless while they're not.

CeriseCogburn - Thursday, August 23, 2012

Don't start out with a big lie, and you won't hear from me in the way you don't like to hear or see.

You've got yourself convinced, you already did your research, you've said so, you've told yourself a pile of lies, WELL KEEP YOUR LIES TO YOURSELF THEN, in your own head, swirling around, instead of laying them on here then making up every excuse when you get called on them !

Pretty simple dude.

Here, let me help you, this is you talking:

" I've been brainwashed at overclockers and all the amd fanboys there have convinced me to "get into OC" and told me a dozen lies, half of which I blindly and unthinkingly repeated here as I attacked the guy who knew what he was talking about and proved me wrong, again and again. I hate him, and want him to do what I say, not what he does. I want to remain wrong and immensely biased for all the wrong reasons, because being an amd fanboy is my fun no matter how many falsehoods I spew that are very easily smashed by someone, whom I claim, doesn't know a thing after they prove me wrong, again and again".

Hey dude, be an amd fanboy, just don't spew out those completely incorrect falsehoods, that's all. Not that hard is it ?

LOL

Yeah, it's really, really hard not to, otherwise, you'd have a heckuva time having a single point.

I get it. I expect it from you people. You've got no other recourse.

Honestly it would be better to just say I like AMD and I'm getting it because I like AMD and I don't care about anything but that.