The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

by Ryan Smith on August 16, 2012 9:00 AM EST

That Darn Memory Bus

Among the entire GTX 600 family, the GTX 660 Ti’s one unique feature is its memory controller layout. NVIDIA built GK104 with 4 memory controllers, each 64 bits wide, giving the entire GPU a combined memory bus width of 256 bits. These memory controllers are tied into the ROPs and L2 cache, with each controller forming part of a ROP partition containing 8 ROPs (or rather 1 ROP unit capable of processing 8 operations), 128KB of L2 cache, and the memory controller. Disabling any one of those things means taking out a whole ROP partition, which is exactly what NVIDIA has done.

The impact on the ROPs and the L2 cache is rather straightforward – render operation throughput is reduced by 25% and there’s 25% less L2 cache to store data in – but the loss of the memory controller is a much tougher concept to deal with. This goes for both NVIDIA on the design end and for consumers on the usage end.

256 is a nice power-of-two number. For video cards with power-of-two memory bus widths, it’s very easy to equip them with a similarly power-of-two memory capacity such as 1GB, 2GB, or 4GB of memory. For various minor technical reasons (mostly the sanity of the engineers), GPU manufacturers like sticking to power-of-two memory buses. And while this is by no means a true design constraint in video card manufacturing, there are ramifications for deviating from it.

The biggest consequence of deviating from a power-of-two memory bus is that under normal circumstances this leads to a card’s memory capacity not lining up with the bulk of the cards on the market. To use the GTX 500 series as an example, NVIDIA had 1.5GB of memory on the GTX 580 at a time when the common Radeon HD 5870 had 1GB, giving NVIDIA a 512MB advantage. Later on, however, the common Radeon HD 6970 had 2GB of memory, leaving NVIDIA behind by 512MB. This also had one additional consequence for NVIDIA: they needed 12 memory chips where AMD needed 8, which generally inflates the bill of materials more than the price of higher speed memory in a narrower design does. This ended up not being a problem for the GTX 580 since 1.5GB was still plenty of memory for 2010/2011 and the high price tag could easily absorb the BoM hit, but this is not always the case.

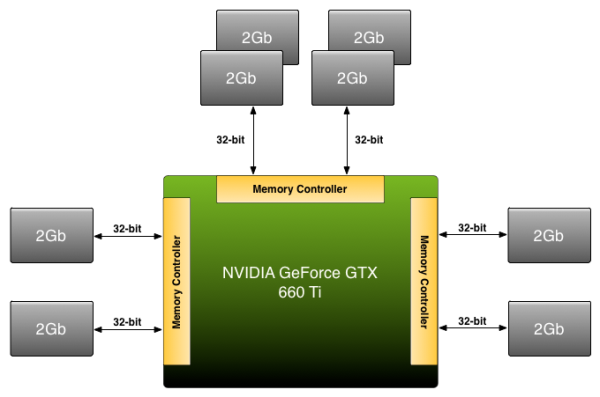

Because NVIDIA has disabled a ROP partition on GK104 in order to make the GTX 660 Ti, they’re dropping from a power-of-two 256bit bus to an off-size 192bit bus. Under normal circumstances this means that they’d need to either reduce the amount of memory on the card from 2GB to 1.5GB, or double it to 3GB. The former is undesirable for competitive reasons (AMD has 2GB cards below the 660 Ti and 3GB cards above) not to mention the fact that 1.5GB is too small for a $300 card in 2012. The latter on the other hand incurs the BoM hit as NVIDIA moves from 8 memory chips to 12 memory chips, a scenario that the lower margin GTX 660 Ti can’t as easily absorb, not to mention how silly it would be for a GTX 680 to have less memory than a GTX 660 Ti.

Rather than take either of the usual routes, NVIDIA is going to take a third route of their own: put 2GB of memory on the GTX 660 Ti anyhow. By putting more memory on one controller than the other two – in effect breaking the symmetry of the memory banks – NVIDIA can have 2GB of memory attached to a 192bit memory bus. This is a technique that NVIDIA has had available to them for quite some time, but it’s also something they rarely pull out, using it only when necessary.

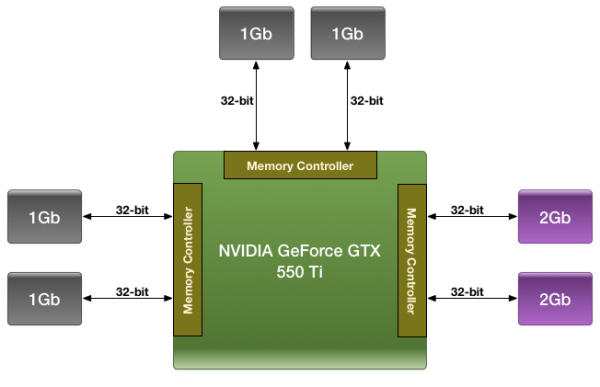

We were first introduced to this technique with the GTX 550 Ti in 2011, which likewise had a 192bit memory bus. By using a mix of 2Gb and 1Gb modules, NVIDIA could outfit the card with 1GB of memory rather than the 1.5GB/768MB that a 192bit memory bus would typically dictate.

For the GTX 660 Ti in 2012, NVIDIA is once again going to use their asymmetrical memory technique in order to outfit the GTX 660 Ti with 2GB of memory on a 192bit bus, but they’re going to be implementing it slightly differently. Whereas the GTX 550 Ti mixed memory chip density in order to get 1GB out of 6 chips, the GTX 660 Ti will mix up the number of chips attached to each controller in order to get 2GB out of 8 chips. Specifically, there will be 4 chips instead of 2 attached to one of the memory controllers, while the other controllers will continue to have 2 chips. Doing it this way allows NVIDIA to use the same Hynix 2Gb chips they already use in the rest of the GTX 600 series, with the only high-level difference being the width of the bus connecting them.
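The capacity arithmetic behind these layouts can be checked with a short Python sketch. It assumes three 64-bit controllers and chip densities in gigabits (1Gb = 128MB); the helper name `capacity_mb` is hypothetical. The GTX 550 Ti entry is also partly an assumption: 6 chips totaling 8Gb implies four 1Gb and two 2Gb chips, but which controller gets the 2Gb pair is our guess, since the text only says the densities were mixed.

```python
# Minimal sketch: total and per-controller memory capacity for a chip layout.
# Assumptions: three 64-bit controllers; densities are in gigabits (1Gb = 128MB).

MB_PER_GBIT = 128

def capacity_mb(layout):
    """Return (total MB, MB per controller) for a list of per-controller chip densities."""
    per_controller = [sum(chips) * MB_PER_GBIT for chips in layout]
    return sum(per_controller), per_controller

layouts = {
    # Symmetric 192bit options with 2Gb chips: 1.5GB from 6 chips or 3GB from 12.
    "symmetric, 6 chips": [[2, 2] for _ in range(3)],
    "symmetric, 12 chips": [[2, 2, 2, 2] for _ in range(3)],
    # GTX 660 Ti: 4 chips on one controller, 2 on each of the others (8 x 2Gb).
    "GTX 660 Ti": [[2, 2, 2, 2], [2, 2], [2, 2]],
    # GTX 550 Ti: 6 chips mixing densities; placement of the 2Gb pair is assumed.
    "GTX 550 Ti": [[2, 2], [1, 1], [1, 1]],
}

for name, layout in layouts.items():
    total, per_controller = capacity_mb(layout)
    print(f"{name}: {total}MB total, per controller {per_controller}")
```

The uneven per-controller totals of the two asymmetric layouts (1024/512/512MB and 512/256/256MB) are what stand in the way of simple uniform interleaving across the whole address range.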

Of course at a low level it’s more complex than that. In a symmetrical design with an equal amount of RAM on each controller it’s rather easy to interleave memory operations across all of the controllers, which maximizes performance of the memory subsystem as a whole. However, complete interleaving requires that kind of symmetrical design, which means it’s not quite suitable for use on NVIDIA’s asymmetrical memory designs. Instead NVIDIA must start playing tricks. And when tricks are involved, there’s always a downside.

The best case scenario is always going to be that the entire 192bit bus is in use by interleaving a memory operation across all 3 controllers, giving the card 144GB/sec of memory bandwidth (192bit * 6GHz / 8). But that can only be done for up to 1.5GB of memory; the final 512MB of memory is attached to a single memory controller. This invokes the worst case scenario, where only one 64-bit memory controller is in use, reducing memory bandwidth to a much more modest 48GB/sec (64bit * 6GHz / 8).
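Both bandwidth figures come from the same formula: bus width times effective data rate, divided by 8 bits per byte. A minimal Python sketch, assuming the 6GHz effective memory clock quoted above; the function name is illustrative only:

```python
# Peak memory bandwidth in GB/sec: bus width (bits) * effective data rate (GHz) / 8.
def peak_bandwidth_gb_s(bus_width_bits, effective_rate_ghz):
    return bus_width_bits * effective_rate_ghz / 8

EFFECTIVE_RATE_GHZ = 6.0  # GTX 660 Ti memory clock as quoted in the text

best_case = peak_bandwidth_gb_s(192, EFFECTIVE_RATE_GHZ)   # all 3 controllers interleaved
worst_case = peak_bandwidth_gb_s(64, EFFECTIVE_RATE_GHZ)   # only the controller holding the final 512MB
print(f"best case: {best_case:.0f}GB/sec, worst case: {worst_case:.0f}GB/sec")
```

This prints `best case: 144GB/sec, worst case: 48GB/sec`, a 3:1 ratio that simply mirrors the 3:1 ratio of active bus width.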

How NVIDIA spreads out memory accesses will have a great deal of impact on when we hit these scenarios. In the past we’ve tried to divine how NVIDIA is accomplishing this, but even with the compute capabilities of CUDA, memory appears to be too far abstracted for us to test any specific theories. And because NVIDIA continues to treat the internal details of their memory bus as a competitive advantage, they’re unwilling to share the details of its operation with us. Thus we’re largely dealing with a black box here, one where poking and prodding doesn’t produce much in the way of meaningful results.

As with the GTX 550 Ti, all we can really say at this time is that the performance we get in our benchmarks is the performance we get. Our best guess remains that NVIDIA is interleaving the lower 1.5GB of address space while pushing the last 512MB of address space into the larger memory bank, but we don’t have any hard data to back it up. For most users this shouldn’t be a problem (especially since GK104 is so wishy-washy at compute), but the fact remains that there’s always a downside to an asymmetrical memory design. With any luck one day we’ll find that downside and be able to better understand the GTX 660 Ti’s performance in the process.
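To make that guess concrete, here is a hypothetical Python model of it. Everything in it is an assumption rather than NVIDIA's undisclosed design: the 256-byte interleave stride is an arbitrary illustrative value, controller 0 stands in for the controller with 4 chips, and the bandwidth estimate naively treats traffic to the two regions as back to back with no overlap.

```python
# Hypothetical model of the address-space guess above; not NVIDIA's disclosed design.
MB = 1024 * 1024
INTERLEAVED_LIMIT = 1536 * MB  # lower 1.5GB: 512MB on each of the 3 controllers
TOTAL = 2048 * MB              # final 512MB sits only on the 4-chip controller
STRIDE = 256                   # illustrative interleave granularity in bytes (assumed)
BIG_CONTROLLER = 0             # stands in for the controller with 4 chips

def controller_for(addr):
    """Return which controller (0-2) would serve a byte address under this model."""
    if not 0 <= addr < TOTAL:
        raise ValueError("address outside the 2GB range")
    if addr < INTERLEAVED_LIMIT:
        return (addr // STRIDE) % 3   # round-robin across all 3 controllers
    return BIG_CONTROLLER             # overflow region: one 64-bit controller only

def effective_bandwidth(upper_fraction, full=144.0, single=48.0):
    """Naive GB/sec estimate when upper_fraction of bytes hit the last 512MB.

    Assumes the two kinds of traffic never overlap, so time per byte is a
    weighted sum of 1/bandwidth (a weighted harmonic mean).
    """
    time_per_byte = (1 - upper_fraction) / full + upper_fraction / single
    return 1 / time_per_byte

print([controller_for(a) for a in (0, 256, 512, 768)])
print(controller_for(1600 * MB))
print(f"{effective_bandwidth(0.25):.0f}GB/sec")
```

This prints `[0, 1, 2, 0]`, then `0`, then `96GB/sec`. Under this model controller 0 ends up holding 1GB (512MB of interleaved space plus the 512MB overflow), consistent with it having 4 chips, and a workload that sends a quarter of its bytes to the last 512MB would fall from 144GB/sec to roughly 96GB/sec. Real hardware could overlap the two kinds of traffic, so treat this as a pessimistic sketch.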

313 Comments


Galidou - Monday, August 20, 2012

I play on either my 1080p TV or my three 1920*1200 monitors (mainly on the monitors now). I only had the TV before, so it was OK with my 6850 CrossFire, but now I'll need more memory, and the main game I play, almost the only one, is Skyrim. I can't use the texture pack and some high-detail settings because in some caves and other places the memory limits me badly. I plan to replace the TV with one of those new high-resolution ones when they come out. In CrossFire you don't add up the memory; you have the same video memory as only one card, so no, it's not 2GB, it's 1GB, thanks. I want the 7950 OC with the 950MHz core; I already did my research prior to the 660 Ti review. They've been out for many months, I just work too much during the summer, so I wasn't in a hurry and I wanted to see the competition.

There you go, they even have thermal pictures of the whole system/card, which is something I was looking for. It's not a performance review against the competition; it only covers overclocking and power usage. I don't care too much about the extra 80 watts, because I was ready to buy a GTX 580 at a low enough price, and its power usage at stock clocks is similar to an overclocked 7950's.

http://www.behardware.com/articles/853-18/roundup-...

Page 11 is the one I'm interested in. I just want to get 1100MHz, and it seems everyone gets 1150 with this card.

Before the AMD driver update, Nvidia had a clear advantage, so the 660 Ti looked like the obvious choice once it came out (or so I thought). Then these drivers and optimized games mixed everything up... If only they would care about us and not only about their products' performance, and thus their profit, but I know it will never happen. Money makes wars everywhere and it will NEVER stop.

TheJian - Monday, August 20, 2012

In SLI/CrossFire this card will give you 2GB (double your 1GB now; in either single-card or SLI/CrossFire mode you'll get 2GB), that's what I meant. I was talking about the 660 Ti, or whatever upgrade you do. You get 2GB (one card's worth; SLI is the same thing). Two 2GB cards should be fine for Skyrim at 1920x1200 (no card here was punished with 2GB at 1920x1200). I already pointed to an article that shows NO difference from 2GB to 4GB in ANY game they tested: http://www.guru3d.com/article/palit-geforce-gtx-68...

"But 2GB really covers 98% of the games in the highest resolutions." It even worked at 5760x1200 below :) Look at HardOCP, who tested on 3 monitors at 5760x1200: 30fps min, 58.6fps avg, 104fps max, on a single GTX 680. Two 660s should smoke this single 680.

Not quite sure I understand your comment about gaming on 3 monitors... You mean 5760x1200? Or are you only gaming on ONE of them at 1920x1200? Or do you mean something else?

So you are planning on buying 2 video cards again? I thought you were just replacing them with one 2GB card, but if you're gaming at 5760x1200 that's another story. I'd just buy a GTX 680 and OC it.

http://www.hardocp.com/article/2012/03/22/nvidia_k...

"For the apples-to-apples test we lowered the AA setting down to 4X AA, just in case there were some hidden bottlenecks. Lowering the AA setting to 4X AA only made things worse for the Radeon HD 7970. The GeForce GTX 680 increased its advantage to 29% performance advantage over the HD 7970!"

Granted, as shown below in AnandTech's testing with the 12.7 drivers, AMD got better but still lost, so don't expect NV to fall behind in the above test even with newer drivers. It didn't help below. NV won both tests anyway in AnandTech's testing... :)

AnandTech, 12.7 drivers, Skyrim: the 7970 GHz Edition slaughtered at 1920x1200

http://www.anandtech.com/show/6025/radeon-hd-7970-...

All NV cards are CPU-limited at that resolution (GTX 580/670/680), but they all win by 10fps with 4xMSAA/16xAF. Even at the useless 2560x1600 resolution the 680 still wins... And that's a REF model they're comparing against. The 680s you actually BUY do much better than this, but this is vs. the GHz Edition, so don't expect much more from your overclock, and heat/noise will get worse. At 1150 you'll only get about another 15% over what they're already benching here (if that; scaling isn't perfect).

From your own quoted article, voltages vary, and as I pointed out, another guy needed 1.25V to hit 1150.

"Secondly, PowerTune doesn’t register increases in the GPU voltage and the big energy consumption increases that come with it. The technology is therefore incapable of fully protecting the GPU and the card. AMD says that OVP (Over Voltage Protection) and OCP (Over Current Protection) are still in place but, as we were able to observe, these technologies cut all power to the card when limits are exceeded."

YOU can damage your card; as I stated before, this can't happen on the 600s.

http://www.guru3d.com/article/radeon-hd-7950-overc...

1.25V for 1150. Not all cards are the same.

Look at the chart in your own link. Scroll down to where they show 1.20V+ being REQUIRED to back up this statement:

"The maximum clock on the Radeon HD 7900s generally seems to be between 1125 and 1200 MHz when the GPU voltage is adjusted." Look at the chart above that line... 1.20V is REQUIRED in most cases to hit anything over 1100. That is the FIRST voltage at which they all reach 1100. At 1.174V two couldn't even hit 1100 (the HIS and the reference card). ONLY 3 cards hit 1125 at 1.25V, and only ONE went above that. RUSSIAN, are you listening?... LOL. There are 11 cards in the list; be careful assuming all Sapphire OCs are the same. They are not. From page 19:

"When the GPU voltage is changed this goes up to an increase of between 21 and 78%, which is enough to put the power stages of these cards under stress."

78% more power draw is a lot of watts, and they'll produce a lot of heat/noise. Thanks for the link, it's a good article; I look forward to a 660 Ti article there to see the comparison (hopefully he'll make one; there were only Radeons in this article). Also note his page 14 comments and the charts showing heat stuck inside the PC, frying other components, since these cards don't expel the heat out the rear well: "The reference HIS, MSI Twin Frozr III and Sapphire OverClock Radeon HD 7950s and Radeon HD 7970 Lightning however tend to direct more hot air towards the hard drives." Your card is in there... Only two cards didn't do that. Another downer for the 7950 cards in my mind. With your OC card, the CPU in your case would run 5C hotter than with the reference model, as shown in the chart. That's a lot of degrees added to your CPU. Paying attention, Russian? All of this affects your glorious 1150 speeds.

Galidou has a better article than the one I used, as it is more complete regarding the "OTHER" effects a 1150MHz overclock on your Radeon will get you (CPU temperature rises, HDs, memory sticks, etc.), not to mention these cards don't expel the heat. I'm wondering if his 660 Ti article will show bad case temps too now... ROFL. Thanks again, Galidou. GOOD INFO. Right now my decision is the same, but once he does a 660 article I hope the issue doesn't become muddied :)

Galidou - Monday, August 20, 2012

Lol, you're so fun. People overclocked the GTX 580, and it gave out more heat and ate more current than anything this generation can ever imagine, and no system has died from it yet... I gave you an example showing it can easily hit 1100-1150 without drawing more than 80W extra, and still you have to keep talking, always saying the same thing and trying to make me believe that if I ever buy a Radeon card I will be disappointed, eventually catch cancer and die of it.... Thanks, your fanaticism is appreciated. The more I speak with fanboys from both sides, the more I think I'll have to stop playing on the computer for fear of becoming like them. For Skyrim on 3 monitors, the 7950 is clearly ahead. I really thought you'd see sense after I told you the games I play, but it seems you're as stubborn as someone can be. My wallet is speaking: my Radeon 6850 CrossFire made more heat in my system than this overclocked 7950 alone will, and yet I'll gain performance.

Skyrim with mods isn't shown anywhere; it fills over 2GB of RAM as soon as you ramp up the mods. I'm playing with 30, and my 1GB of RAM is crying at me to stop. If you don't know the game you're speaking of, please just don't comment.

You can't damage your 660 or 670? Lol, fun stuff. I'm a fan of reading 1-egg reviews on Newegg, and there are plenty on both sides (AMD and Nvidia) claiming that their card died; for ATI they died in the first week. The 670 is newer than the 7950, and still there are numerous cards with 10 to 30% 1-egg or 2-egg reviews because of fried cards, with and without overclocking, after anywhere from 1 day to 3 months of ownership.

I guess 28nm isn't at its peak yet, and that is reflected here. One guy claimed he overclocked his 670, and when the boost went past 1290 it would black-screen and shut down in Battlefield 3 (verified owner in the forums). Why would someone who owns the card say stuff like that?

The Skyrim articles you've shown me predate the big AMD driver update. Don't take my word for it: look at AnandTech's review of the GTX 680 (4 months ago, I think) and the review of the GTX 660 Ti, and see the difference. You obviously didn't do the research I did. The driver is about a month old and it gave VERY big improvements in Skyrim; just look at any new review from the end of July to now and you will understand.

Seeing how much you had to say again, and seeing the lack of information you have, this is ending now. Thanks anyway, but your stubbornness made me lose my temper, and I'm tired of speaking with someone who has already chosen a side that blinds him to the point that he cannot bring arguments that are valid on every front. It's been nice though.

Galidou - Monday, August 20, 2012

I meant over 2GB of VRAM with the texture pack. I'm an overclocker; I've been overclocking things for the past 15 years, and still you try to explain things about heat to me while you can't even find articles on AMD's real performance with the newer drivers. I'm the kind of enthusiast you can find on the overclock.net forums; you've argued the wrong way with the wrong person. Sorry if my English wasn't perfect all along, but I'm French Canadian.

Still, component temperature rises like the ones you describe have been around for years. My Radeon 4870 runs super hot and is still working in my wife's system, and nothing has died; it leaves more heat in my whole system than the Radeon 7950 alone will, and I overclocked the darn thing.

You're talking like you're putting on a show for everyone reading, but no one is reading because it's too long for them to bear, and it isn't even up to date. So cut the show and the ''Galidou has a better article than I used'' as if you're speaking to an audience; we're arguing with each other, and the fact that you got lost in your information and didn't even know about the BIG performance improvements in the 12.8 Catalyst drivers shows your inexperience. GG

Galidou - Monday, August 20, 2012

Oh, and I forgot one last thing, then you'll NEVER ever hear from me again on this forum, so you're free to talk into the void; the more we add, the fewer people will read it.

2560*1600 = 4 million pixels

1920*1200 x 3 monitors = 7 million pixels

2560*1600 is CPU-limited on AnandTech, meaning all the video cards in the review that are stuck at 84-86fps will go higher, so the 7950 will be ahead of the 7970 and ahead of the GTX 670, which costs $100 more than the price I will pay, because I'll wait for the sale to come back, and there's never any sale on Nvidia cards because they are too good.

Galidou - Monday, August 20, 2012

And stop showing overclocked results and damage stories about lousy, stupid reference boards. My system is watercooled, and the video card will surely end up watercooled too. And stop showing me GTX 680 results; it's above what I want to pay. I'm replacing my CrossFire setup with one 7950, and watercooled they EASILY get to a 1300 core clock, which trounces even a stock-clocked GTX 680 ANYWHERE.

Galidou - Monday, August 20, 2012

http://www.techpowerup.com/reviews/Gigabyte/HD_797...

Now look at 5760*1080 (a lower resolution than I'll use) and look at where the 7950 (a non-overclocked, lousy reference board) is; just get the picture with the new drivers now. I leave it up to you to find any 5760*1080 results from before the driver release, and in the future, learn to find results by yourself.

CeriseCogburn - Thursday, August 23, 2012

Enjoy nVidia 660 Ti's sweet victory over the 7950 you planned to buy, at your triple-monitor resolution, there bucky LOL

http://www.bit-tech.net/hardware/2012/08/16/nvidia...

HAHHAHAHAHAHAHAHA

OMG ! HAHAHA

Galidou - Monday, August 20, 2012

I know you don't want any AMD cards, it's pretty obvious, while I don't care about the brand. It's only the fanboys from each side who seem convinced the gap is SO IMMENSE, while I don't see much of a gap when you consider price/performance, and as always it depends on the game. Considering I'll play Skyrim on 3 monitors, unless you're a freaking blind fanboy, it would be hard to recommend the 660 Ti... The Sapphire OC at 950MHz isn't even on this site; it's simply a reference 7970 board with a 6+8 pin PEG connector, which easily supports an overclock to a 1000MHz core at the reference voltage.

If I didn't have the knowledge of and desire for overclocking that I have now, the choice would be freaking obvious: for 1080p gaming without overclocking, welcome 660 Ti, the card would be on its way. But I want to overclock, and everyone in the forums at overclock.net already knows it: however big your doubts are, 90% of them report super overclocks.

Now don't bring me any more of your comparisons with the 7950B and reference coolers, or I just won't answer you. AMD has as many non-reference boards as Nvidia has. WTF, wake up.... Guru3D overclocking a reference board with the lousy, worthless, unpopular reference fan when 75% of their cards have way better coolers: good way of representing reality..... COME ON.

BTW, I live in Canada, in the province of Quebec, and where I live, electricity is quite cheap, like really cheap.

CeriseCogburn - Thursday, August 23, 2012

"Considering I'll play Skyrim on 3 monitors, unless you're a freaking blind fanboy, it would be hard to recommend the 660 Ti."

http://www.bit-tech.net/hardware/2012/08/16/nvidia...

EVEN THE 7970 LOSES LMAO !!!

So, you're saying you're a totally freaking blind fanboy...

Cool.