Khronos Announces OpenGL ES 3.0, OpenGL 4.3, ASTC Texture Compression, & CLU

by Ryan Smith on August 6, 2012 9:00 AM ESTOpenGL 4.3 Specification Also Released

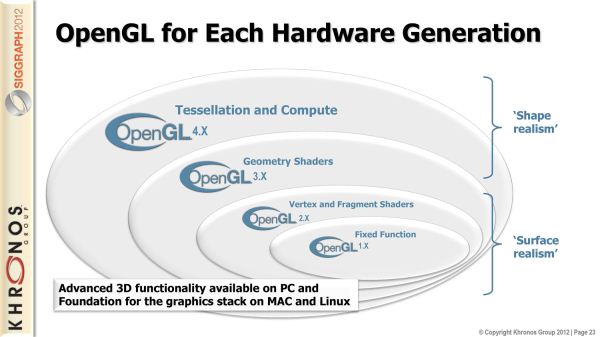

Alongside OpenGL ES 3.0, desktop OpenGL is also being iterated upon today with the launch of OpenGL 4.3. Unlike OpenGL ES this is only a much smaller point update. This is not to say that it’s unimportant, but it will bring a smaller list of new features to the table.

At the same time though, this also marks what may potentially be an interesting inflection point for OpenGL gaming on the desktop. On Windows OpenGL usage has never been lower; the only AAA game engine still based on OpenGL is id Tech 5, which with the termination of licensing by id is only used internally. Khronos of course would like to change that, so when Valve Software says that they’re going to be porting Source over to Linux (thereby increasing the audience for OpenGL games) it’s of quite some interest to Khronos. At the same time desktop OpenGL still has a long way to go to recapture its glory days in the late 90’s and early 2000’s, so Khronos is doing what they can to spur that on.

OpenGL ES 3.0 Superset & ETC Texture Compression

Moving on to looking at OpenGL 4.3’s features, unsurprisingly, one of the big additions to OpenGL 4.3 is to add the necessary features to make it a proper superset of OpenGL ES 3.0. One of Khronos’s missteps with OpenGL ES 2.0 was that it wasn’t until OpenGL 4.1 in 2010 that desktop OpenGL become a proper superset of OpenGL ES 2.0, which they’re rectifying this time around by launching OpenGL ES and its equivalent OpenGL at the same time.

Because OpenGL ES 3.0 is largely taken from desktop OpenGL in the first place, there aren’t a ton of changes due to this. But there is one: texture compression. The aforementioned standardization around ETC applies to both OpenGL ES and OpenGL, which means that starting with OpenGL 4.3, desktop OpenGL will have a standard texture compression format.

Practically speaking, this won’t make a huge difference to desktop developers right now. Because S3TC is a required part of the Direct3D specification and all desktop GPUs support Direct3D, S3TC has been a de-facto OpenGL standard for nearly 10 years now. And because few developers will target OpenGL 4.3 right away, that won’t change. But this means that developers targeting 4.3 do finally have a choice in texture compression, and developers doing cross-platform development with OpenGL ES can use the same texture compression format in both cases.

It’s worth noting though that just because a GPU “supports” ETC doesn’t mean it has hardware support. NVIDIA has told us that they’ll be 4.3 compliant, but they’re handling ETC by decompressing the texture in their drivers before sending it over to the GPU in an uncompressed format, and while AMD wasn’t able to get back to us in time it’s almost certainly the same story over there. For ports of OpenGL ES games this isn’t going to be a problem (dGPUs have plenty of high-bandwidth memory), but it means S3TC will remain the de-facto standard desktop OpenGL texture compression format for now.

OpenGL Compute Shaders

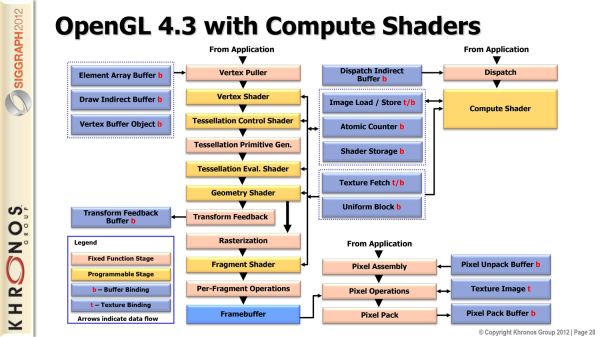

Moving on, while OpenGL ES 3.0 compatibility is a big deal for OpenGL, it’s actually not the biggest feature addition for OpenGL 4.3. For that we turn to compute shaders.

As a bit of background, when meaningful compute functionality was introduced for GPUs, Microsoft and Khronos went in two separate directions. Khronos of course created OpenCL, a full-featured ANSI C based API for compute. Microsoft on the other hand introduced compute shaders, which was a special class of HLSL designed for compute. OpenCL is far more flexible, but flexibility has its price. Specifically, implementing compute functionality in OpenGL games often wasn’t as easy as the equivalent functionality using a Direct3D compute shader, and the overhead of OpenCL limited performance.

The end result is that Khronos has decided to implement compute shaders in OpenGL in order to bridge this gap. OpenCL of course remains as Khronos’s premiere compute API for both stand-alone compute applications and OpenGL games/applications that need the full flexibility, for but games that don’t need that level of flexibility and only want to run compute work on a GPU there is now another option.

Like Direct3D’s compute shader functionality, OpenGL’s compute shader functionality is geared towards relatively simple pixel operations, where approaching an algorithm in a compute manner (instead of a graphics manner) allows for faster execution. The compute shaders themselves will be written in GLSL rather than C, underscoring the fact that this is an extension of OpenGL’s shading framework rather than their compute framework. The target for this functionality will be games and other applications which perform compute “close to the pixel”, taking advantage of the faster shared memory and greater thread count that compute shaders offer.

Since OpenGL’s compute shader functionality is being spurred on by Direct3D, it should come as no surprise that OpenGL’s compute shaders are going to be a very close implementation of Direct3D’s compute shaders. Specifically, OpenGL’s compute shader functionality is being advertised by Khronos as matching D3D11’s compute shader functionality. The differences between HLSL and GLSL mean that there’s no straightforward portability, but it underscores the fact that this is the OpenGL analog of D3D’s compute shader functionality.

New Texture & Buffer Features

Moving on, OpenGL 4.3 will also be introducing some new texture and buffer features. On the texture side of things, one new feature will be texture views, which allow for a texture to be “viewed” in a different way by interpreting its results in a different manner, all without needing to duplicate the texture for modification. As for buffers, 4.3 introduces support for reading and writing to very large buffers across all shader types and all stages. The idea behind this is that it’s an efficient way for those new compute shaders to communicate with the graphics pipeline, given the large amount of data that can be in flight with a compute shader.

Wrapping things up, for some time now Khronos has been working on bringing OpenGL into alignment with Direct3D in order to close the feature and developer gap that has been created between the two. As Khronos correctly notes, with the addition of compute shader functionality OpenGL is now a true superset of Direct3D. If desktop OpenGL is going to see a resurgence in the next few years, it’s now in a far better position to pull that off than it was in before.

46 Comments

View All Comments

djgandy - Tuesday, August 7, 2012 - link

I agree, I did say 'like apple' though, but yes, no one is really interested in desktop GL anymore.At least apple went 3.2 core though and explicitly state in their documentation that deprecated features are removed

https://developer.apple.com/library/mac/#documenta...

Tessellation (Burning energy) aside I don't think there are many headline features that desperately need adding from 4.0 onwards. Apple did only support 2.1 until they released 3.2 support!

Jumangi - Monday, August 6, 2012 - link

Yea DX isn't the stagnated one. OpenGL is a spec has been behind DirectX for years now. The stagnation is from allot of developers who don't use the latest stuff since they stay with the lowest denominator we still have with the PS3 and 360. The features are there if developers want to use them.sheh - Monday, August 6, 2012 - link

And WinXP.ltcommanderdata - Monday, August 6, 2012 - link

It's mentioned in the article that nVidia desktop GPUs don't support ETC in hardware. Do we know whether ETC support in hardware is universal across OpenGL ES 2.0 GPUs from various vendors like PowerVR, ARM, Qualcomm, and nVidia? In the transition, games will no doubt include both OpenGL ES 2.0 and 3.0 render paths to support more devices, and it'd be preferable if both paths could share texture assets.Since ASTC is optional right now which GPU makers have announced they support it in hardware?

It'll be interesting to see what Apple does with ETC on iOS. The existing PVRTC is more efficient on PowerVR GPUs than ETC. I can't see Apple happy to support a less efficient texture format whose benefit to developers but not Apple is to ease portability of games to Android. I suppose Apple will enable ETC support for compatibility, but won't be investing effort in optimizing it's performance. IMG just released a new PVRTC2 texture format for Series5XT and Series6 GPU that improves upon PVRTC, so I expect Apple will be pushing that format soon on iOS.

Ryan Smith - Monday, August 6, 2012 - link

Most current OpenGL ES 2.0 GPUs do not support ETC. I do not have a good list, but I know that PowerVR, Tegra, and Adreno do not support it. So until OpenGL ES 3.0 is the baseline, developers will still have to deal with disjoint texture compression formats. But at least there's finally a way out.As for ASTC, none of the GPU vendors have announced that they'll be supporting it in hardware. This is part of the reason why ASTC is currently being floated as an extension, in order to gather feedback. Now that the standard is out, GPU vendors can look at integrating hardware support. Since this is a joint ARM/NV proposal, I'd expect both of them to integrate support a the earliest opportunity.

pebrb - Monday, August 6, 2012 - link

You are confusing ETC and ETC2 in the entire article.All current OpenGL ES 2.0 GPUs _do_ support ETC (not ETC2) as it is the standard in ES 2.0

The only vendor that doesn't support it is Apple (even though the PowerVS SGX has support for it)

The reason for developers support different texture formats is because ETC is very low quality and lack of alpha channel support.

In OpenGL ES 3.0 they made ETC2 the standard, we'll see how it'll work out.

But I'm waiting for ASTC it has very good features. And from what I heard it will be supported by Microsoft as well, which means that everyone needs to implement it anyway.

name99 - Monday, August 6, 2012 - link

"I can't see Apple happy to support a less efficient texture format whose benefit to developers but not Apple is to ease portability of games to Android."Is any of this conspiracy theory in the slightest grounded in reality?

Apple has generally been happy to support standards, even when that's not directly relevant to its goals. The web world (HTML5 and h264) are obvious examples, but an example more relevant to what we're discussing here is Apple's aggressive support for C++11 (both language and library) in the latest version of XCode. And this is not just passive checkbox support, it is genuine hard work to make C++11 interoperate well with Objective C, and to perform well.

ltcommanderdata - Monday, August 6, 2012 - link

My doubt isn't about Apple supporting standards in general, it's about them supporting a weaker technology as a standard. Apple promotes H.264 over WebM, because it has superior image quality (yes there is some debate) and superior hardware support. With the existing PVRTC texture format on iOS offering better performance and image quality than ETC on the GPUs Apple uses I can't see them singing ETC's praises as a standard. They'll no doubt add iOS support for ETC as it's required for OpenGL ES 3.0, but I don't doubt they'll be strongly encouraging iOS developers to keep using PVRTC and soon PVRTC2.Alexvrb - Monday, August 6, 2012 - link

PVRTC2 will likely wipe the floor with ETC2. Heck it'll probably be better than ASTC too.iwodo - Tuesday, August 7, 2012 - link

Would love to see PVRTC2 Vs ASTC