AMD Radeon HD 7970 GHz Edition Review: Battling For The Performance Crown

by Ryan Smith on June 22, 2012 12:01 AM EST

Posted in: GPUs, AMD, GCN, Radeon HD 7000

Power, Temperature, & Noise

As always, we’re wrapping up our look at a video card’s stock performance with a look at power, temperature, and noise. Officially AMD is holding the 7970GE’s TDP and PowerTune limits at the same level they were at for the 7970 – 250W – however unofficially because of the higher voltages, higher clockspeeds, and Digital Temperature Estimation eating into the remaining power headroom, we’re expecting power usage to increase. The question then is “how much?”

Radeon HD 7970 Series Voltages

| Ref 7970GE Base Voltage | Ref 7970GE Boost Voltage | Ref 7970 Base Voltage |
|---|---|---|
| 1.162v | 1.218v | 1.175v |

Because of chip-to-chip variation, the load voltage of 7970 cards varies with the chip and how leaky it is. Short of a large sample size there’s no way to tell what the voltage of an average 7970 or 7970GE is, so we can only look at what we have.

Unlike the 7970, the 7970GE has two distinct voltages: a voltage for its base clock, and a higher voltage for its boost clock. For our 7970GE sample the base clock voltage is 1.162v, which is 0.013v lower than our reference 7970’s base clock voltage (load voltage). On the other hand our 7970GE’s boost clock voltage is 1.218v, which is 0.056v higher than its base clock voltage and 0.043v higher than our reference 7970’s load voltage. In practice this means that even with chip-to-chip variation, we’d expect the 7970GE to consume a bit more power than the reference 7970 when it can boost, but equal (or less) power when it’s stuck at its base clock.
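To make the voltage comparison concrete, here is a minimal Python sketch that reproduces the deltas quoted above. The `voltage_only_power_ratio` helper is an illustrative assumption, not something measured in this review: it applies the common approximation that dynamic power scales with the square of voltage at a fixed clockspeed, and it ignores leakage, the 7970GE's higher clocks, and PowerTune throttling.

```python
# Voltages reported for the review samples (volts), taken from the table above.
GE_BASE_V = 1.162
GE_BOOST_V = 1.218
REF_7970_V = 1.175


def voltage_delta(v, v_ref):
    """Difference in volts, rounded to the table's three-decimal precision."""
    return round(v - v_ref, 3)


def voltage_only_power_ratio(v, v_ref):
    """Assumed model: dynamic power ~ V^2 at a fixed clockspeed.

    Ignores leakage, clockspeed differences, and PowerTune throttling,
    so treat the result as a rough indicator only.
    """
    return (v / v_ref) ** 2


if __name__ == "__main__":
    print("GE base  vs 7970:", voltage_delta(GE_BASE_V, REF_7970_V), "V")
    print("GE boost vs base:", voltage_delta(GE_BOOST_V, GE_BASE_V), "V")
    print("GE boost vs 7970:", voltage_delta(GE_BOOST_V, REF_7970_V), "V")
    print("V^2 ratio at base :", voltage_only_power_ratio(GE_BASE_V, REF_7970_V))
    print("V^2 ratio at boost:", voltage_only_power_ratio(GE_BOOST_V, REF_7970_V))
```

Under that voltage-only assumption the base state comes out roughly 2% below the reference 7970 and the boost state roughly 7% above it, which lines up with the expectation that extra power draw comes mainly from boosting.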

So how does this play out for power, temperature, and noise? Let’s find out.

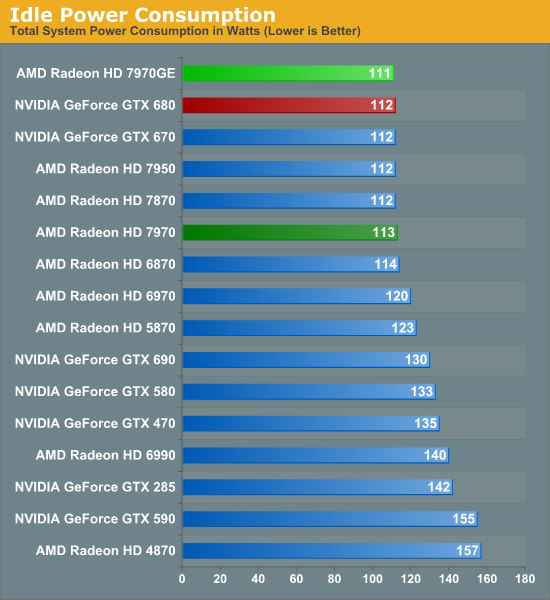

Starting with idle power, because it’s the same GPU on the same board there are no surprises here. Idle power consumption is actually down by 2W at the wall, but in practice this is such a small difference that it is almost impossible to separate from other sources. Though we wouldn’t be surprised if improving TSMC yields combined with AMD’s binning meant that real power consumption has actually decreased a hair.

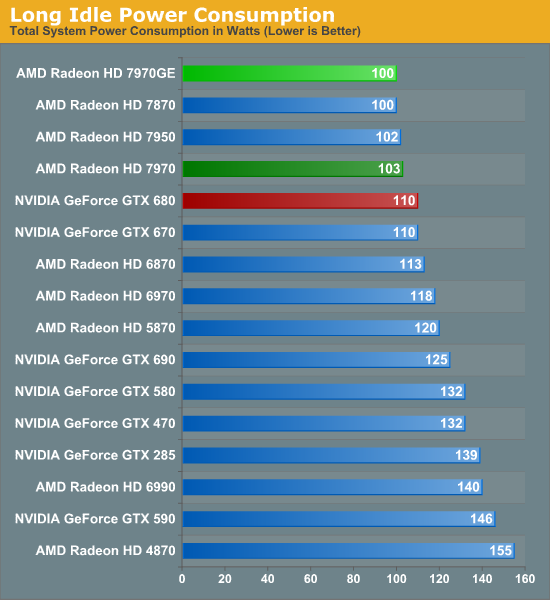

Similar to idle, long idle power consumption is also slightly down. NVIDIA doesn’t have anything to rival AMD’s ZeroCore Power technology, so the 7970GE is drawing a full 10W less at the wall, a difference that will become more pronounced when we compare SLI and CF in the future.

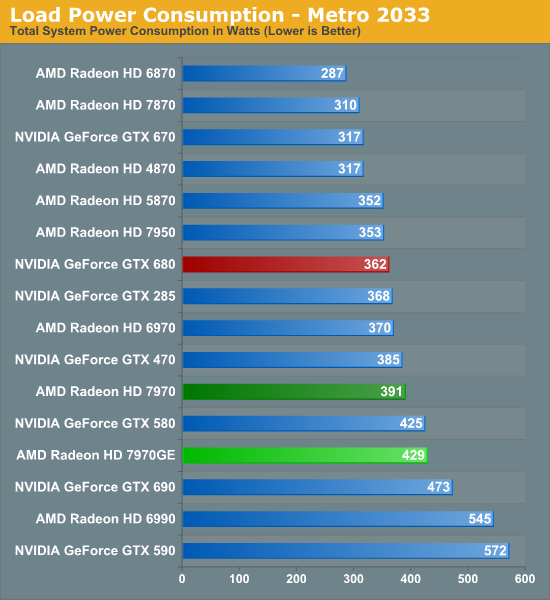

Moving on to our load power we finally see our first 7970GE power results, and while it’s not terrible it’s not great either. Power at the wall has definitely increased, with our testbed pulling 429W with the 7970GE versus 391W with the 7970, a 38W increase. Now not all of this is due to the GPU – a certain percentage is the CPU getting to sleep less often because it needs to prepare more frames for the faster GPU – but in practice most of the difference is consumed (and exhausted) by the GPU. So the fact that the 7970GE is drawing 67W more than the GTX 680 at the wall is not insignificant.

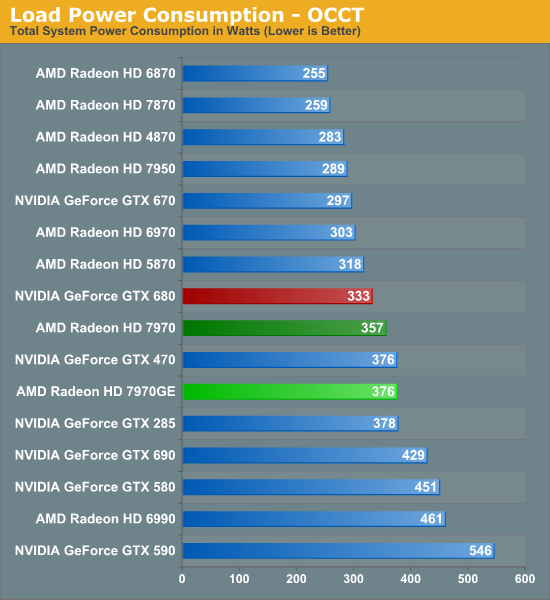

For a change of perspective we shift over to OCCT, which is our standard pathological workload and almost entirely GPU-driven. Compared to Metro, the power consumption increase from the 7970 to the 7970GE isn’t as great, but it’s definitely still there. Power has increased by 19W at the wall, which is actually more than we would have expected given the fact that the two have the same PowerTune limit and the fact that PowerTune should be heavily throttling both cards. Consequently this means that the 7970GE creates an even wider gap between the GTX 680 and AMD’s top card, with the 7970GE pulling 43W more at the wall.
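The wall-power gaps quoted for Metro and OCCT can be put side by side with a short Python sketch. The figures come from the text; the PSU efficiency used to estimate DC-side power is a hypothetical placeholder (`ASSUMED_PSU_EFFICIENCY`), since the review only reports power at the wall.

```python
# Wall-power readings quoted in the text (watts, whole testbed, Metro).
METRO_7970GE_W = 429
METRO_7970_W = 391
METRO_GE_OVER_GTX680_W = 67  # quoted gap; the GTX 680 reading is derived below

# OCCT gaps quoted in the text (watts at the wall).
OCCT_GE_OVER_7970_W = 19
OCCT_GE_OVER_GTX680_W = 43

# Hypothetical PSU efficiency, NOT measured in the review.
ASSUMED_PSU_EFFICIENCY = 0.87


def dc_side_delta(wall_delta_w, efficiency=ASSUMED_PSU_EFFICIENCY):
    """Estimate how much of a wall-power delta reaches the components."""
    return wall_delta_w * efficiency


def percent_increase(new_w, old_w):
    """Relative increase of new_w over old_w, in percent."""
    return 100.0 * (new_w - old_w) / old_w


metro_ge_over_7970_w = METRO_7970GE_W - METRO_7970_W                # 38W
implied_metro_gtx680_w = METRO_7970GE_W - METRO_GE_OVER_GTX680_W    # 362W
occt_7970_over_gtx680_w = OCCT_GE_OVER_GTX680_W - OCCT_GE_OVER_7970_W  # 24W

if __name__ == "__main__":
    print("Metro, 7970GE over 7970:", metro_ge_over_7970_w, "W at the wall")
    print("Metro, implied GTX 680 reading:", implied_metro_gtx680_w, "W")
    print("Metro, system power increase: %.1f%%"
          % percent_increase(METRO_7970GE_W, METRO_7970_W))
    print("Metro, estimated DC-side delta: %.1f W"
          % dc_side_delta(metro_ge_over_7970_w))
    print("OCCT, 7970 over GTX 680:", occt_7970_over_gtx680_w, "W at the wall")
```

Because these are whole-system numbers, the percentage increase describes the testbed rather than the card alone, and the DC-side estimate moves with whatever efficiency value is assumed.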

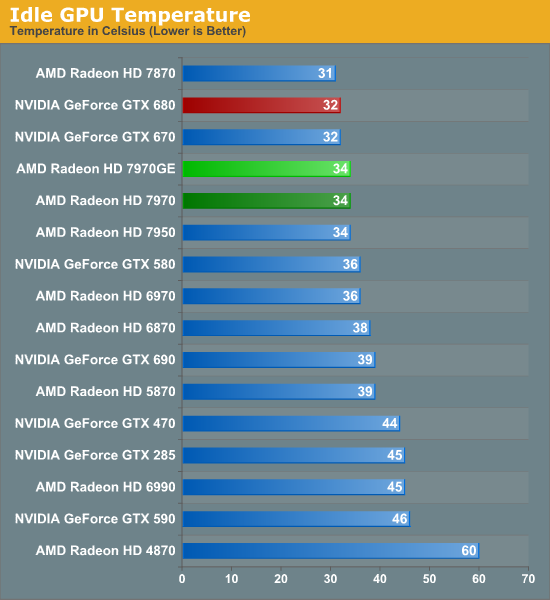

Moving on to temperatures, we don’t see a major change here. Identical hardware begets identical idle temperatures, which for the 7970GE means a cool 34C. Though the GTX 680 is a smidge cooler at 32C.

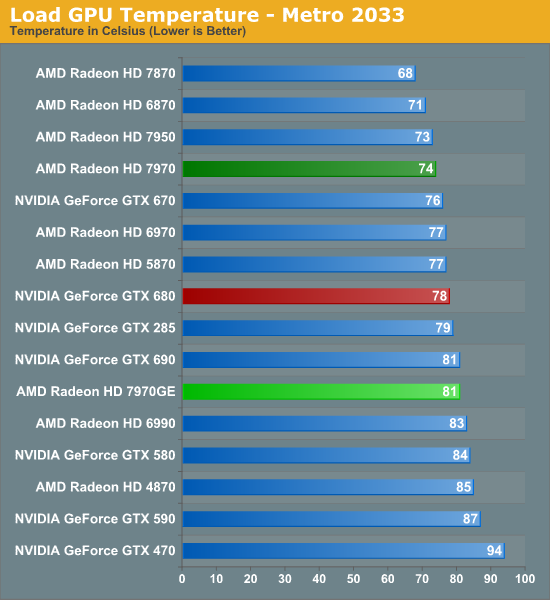

Since we’ve already seen that GPU power consumption has increased under Metro, we would expect temperatures to also increase under Metro and that’s exactly what’s happened. And actually, temperatures have increased by quite a lot, from 74C on the 7970 to 81C on the 7970GE. Since both 7970 cards share the same cooler, the 7970GE has to work harder to dissipate that extra power the card consumes, and even then temperatures will still increase some. 81C is still rather typical for a high end card, but it means there’s less thermal headroom to play with when overclocking when compared to the 7970. Furthermore it means the 7970GE is now warmer than the GTX 680.

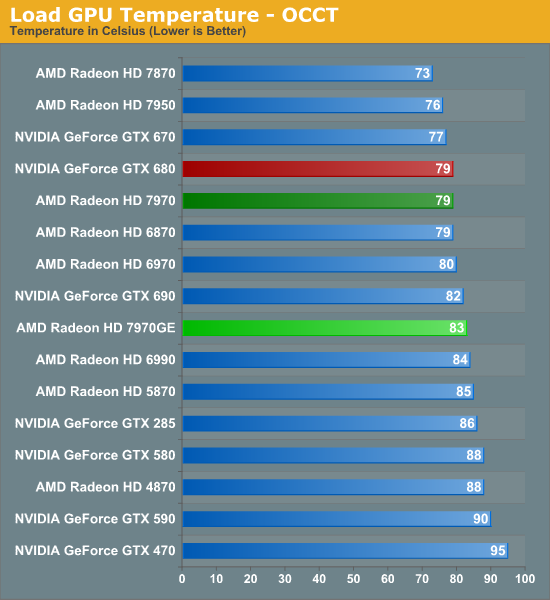

Thanks to PowerTune throttling the 7970GE doesn’t increase in temperature by nearly as much under OCCT as it does under Metro, but we still see a 4C rise, pushing the 7970GE to 83C. Again this is rather normal for a high-end card, but it’s a sign of what AMD had to sacrifice to reach this level of gaming performance.

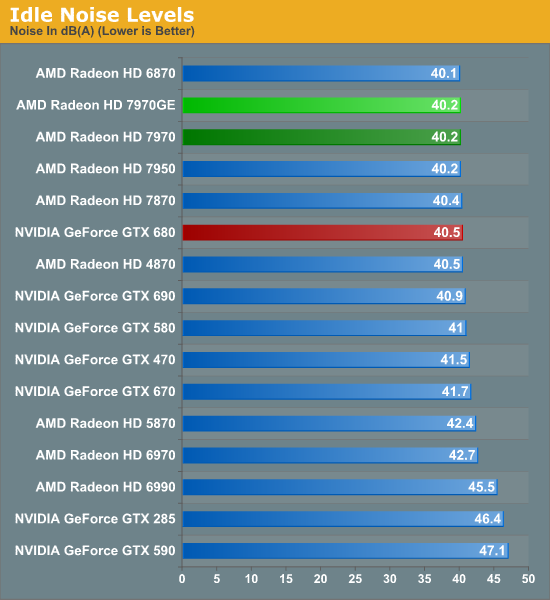

Last but not least we have our look at noise. Again with the same hardware we see no shift in idle noise, with the 7970GE registering at a quiet 40.2dBA.

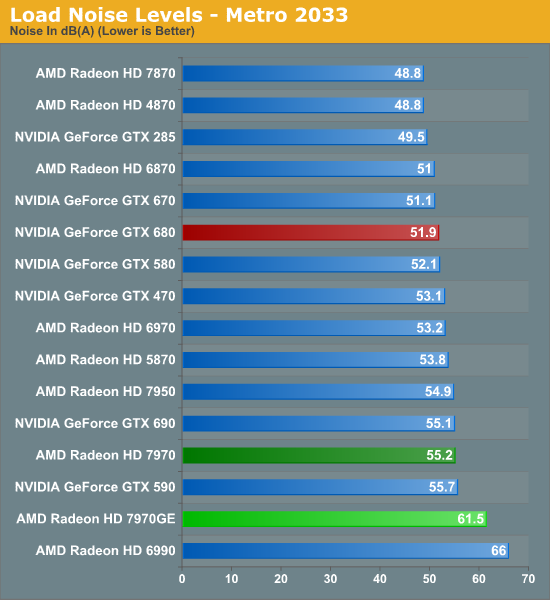

Unfortunately for AMD, this is where the 7970GE starts to come off the rails. It’s not just power consumption and temperatures that have increased for the 7970GE, but load noise too. And it’s by quite a lot. 61.5dBA is without question loud for a video card. In fact the only card in our GPU 12 database that’s louder is the Radeon HD 6990, a dual-GPU card that was notoriously loud. The fact of the matter is that the 7970GE is significantly louder than any other card in our benchmark suite, and in all likelihood the only card that could surpass it would be the GTX 480. As a result the 7970GE isn’t only loud but it’s in a category of its own, exceeding the GTX 680 by nearly 10dBA! Even the vanilla 7970 is 6.3dBA quieter.

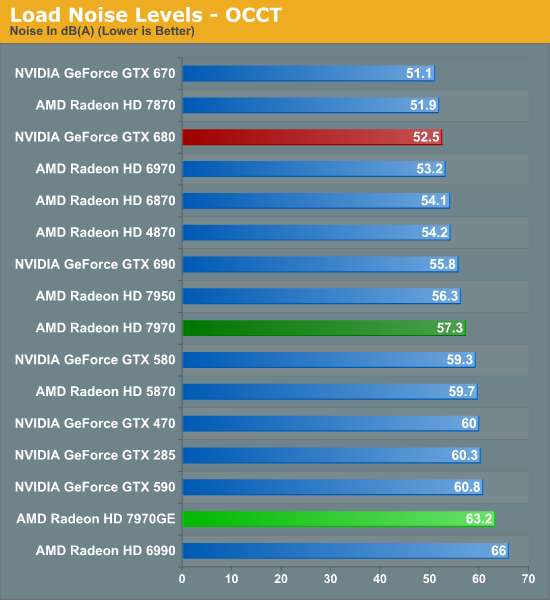

Does OCCT end up looking any better? Unfortunately the answer is no. At 63.2dBA it’s still the loudest single-GPU card in our benchmark suite by nearly 3dBA, and far, far louder than either the GTX 680 or the 7970. We’re looking at a 10.7dBA gap between the 7970GE and the GTX 680, and a still sizable 5.9dBA gap between the 7970GE and 7970.
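Because dBA is a logarithmic scale, the gaps above are larger than the raw numbers suggest. The following Python sketch converts a level difference into a sound power ratio using the standard decibel definition. The `perceived_loudness_ratio` helper relies on the common rule of thumb that a 10dB increase sounds roughly twice as loud, which is an approximation and not something this review measured.

```python
def sound_power_ratio(delta_db):
    """Ratio of sound power for a level difference given in dB."""
    return 10 ** (delta_db / 10)


def perceived_loudness_ratio(delta_db):
    """Rule-of-thumb estimate: +10 dB is perceived as about twice as loud."""
    return 2 ** (delta_db / 10)


# Gaps quoted in the text (dBA): 7970GE versus the named card.
GAPS_DBA = {
    "Metro, vs 7970": 6.3,
    "OCCT, vs 7970": 5.9,
    "OCCT, vs GTX 680": 10.7,
}

if __name__ == "__main__":
    for label, gap in GAPS_DBA.items():
        print("%-18s %4.1f dBA -> %.1fx sound power, ~%.1fx perceived loudness"
              % (label, gap, sound_power_ratio(gap),
                 perceived_loudness_ratio(gap)))
```

By this measure the 6.3dBA gap to the vanilla 7970 under Metro corresponds to roughly four times the sound power, and the 10.7dBA gap to the GTX 680 under OCCT to more than ten times.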

From these results it’s clear where AMD has had to make sacrifices to achieve performance that could rival the GTX 680. By using the same card and cooler and at the same time letting power consumption increase to feed that speed, they have boxed themselves into a very ugly situation where the only solution is to run their cooler fast and to run it loud. Maybe, maybe with a better cooler they could have kept noise levels similar to the 7970 (which would have meant it would still be louder than the GTX 680), but that’s not what we’re looking at.

The 7970GE is without question the loudest single-GPU video card we have seen in quite some time, and that’s nothing for AMD to be proud of. Everyone’s limit for noise differs, but when we’re talking about single-GPU cards exceeding 60dBA in Metro we have to seriously ponder whether it’s something many gamers would be willing to put up with.

110 Comments

Ammaross - Friday, June 22, 2012 - link

So, since the 7970 GE is essentially a tweaked OCed 7970, why not include a factory-overclocked nVidia 680 for fairness? There's a whole lot of headroom on those 680s as well that these benches leave untouched and unrepresented.

elitistlinuxuser - Friday, June 22, 2012 - link

Can it run pong and at what frame rates

Rumpelstiltstein - Friday, June 22, 2012 - link

Why is Nvidia red and AMD Green?

Galcobar - Friday, June 22, 2012 - link

Standard graph colouring on Anandtech is that the current product is highlighted in green, specific comparison products in red. The graphs on page 3 for driver updates aren't a standard graph for video card reviews.

Also, typo noted on page 18 (OC Gaming Performance), the paragraph under the Portal 2 1920 chart: "With Portal 2 being one of the 7970GE’s biggest defEcits" -- deficits

mikezachlowe2004 - Sunday, June 24, 2012 - link

Compute performance is a big factor in deciding on a purchase as well and I am disappointed to not see any mention of this in the conclusion. AMD blows nVidia out of the water when it comes to compute performance and this should not be taken lightly seeing as games right now are implementing more and more compute capabilities, as are many other things. Compute performance has been growing and growing and today at a rate higher than ever and it is very disappointing to see no mention of this in Anand's conclusion.

I use autoCAD for work all the time but I also enjoy playing games as well and with a workload like this, AMD's GPUs provide a huge advantage over nVidia simply because nVidia's GK104 compute performance is nowhere near that of AMD's. AMD is the obvious choice for someone like me.

As far as the noise and temps go, I personally feel if you're spending $500 on a GPU and obviously thousands on your system there is no reason not to spend a couple hundred on water cooling. Water cooling completely eliminates any concern for temps and noise which should make AMD's card the clear choice. Same goes for power consumption. If you're spending thousands on a system there is no reason you should be worried about a couple extra dollars a month on your bill. This is just how I see it. Now don't get me wrong, nVidia has a great card for gaming, but gaming only. AMD offers the best of both worlds, both gaming and compute, and to me this makes the 7000 series the clear winner.

CeriseCogburn - Sunday, June 24, 2012 - link

It might help if you had a clue concerning what you're talking about.

" CAD Autodesk with plug-ins are exclusive on Cuda cores Nvidia cards. Going crossfire 7970 will not change that from 5850. Better off go for GTX580."

" The RADEON HD 7000 series will work with Autodesk Autocad and Revitt applications. However, we recommend using the Firepro card as it has full support for the applications you are using as it has the certified drivers. For the list of compatible certified video cards, please visit http://support.amd.com/us/gpudownload/fire/certifi... "

nVidia works out of the box, amd does NOT - you must spend thousands on Firepro.

Welcome to reality, the real one that amd fanboys never travel in.

spdrcrtob - Tuesday, July 17, 2012 - link

It might help if you knew what you are talking about...

CAD as inferred is AutoCAD by Autodesk and it doesn't have any CUDA dedicated plugins. You are thinking of 3DS Max's method of rendering called iRay. That's even fairly new from the 2011 release.

There isn't anything else that uses CUDA processors on a dedicated scale unless it's a 3rd party program or plugin. But not in AutoCAD, AutoCAD barely needs anything. So get it straight.

R-E-V-I-T ( with one T) requires more as there's rendering engine built in not to mention its mostly worked in as a 3D application, unlike AutoCAD which is mostly used in 2D.

Going Crossover won't help because most mid-range and high end single GPU's (AMD & NVIDIA) will be fine for ANY surface modeling and/ or 3D Rendering. If you use the application right you can increase performance numbers instead of increasing card count.

All Autodesk products work with any GPU really, there are supported or "certified" drivers and cards, usually only "CAD" cards like Fire Pro or Quadro's.

Nvidia's and AMD's work right out of the Box, just depends on the Add In Board partner and build quality NVIDIA fan boy. If you're going to state facts , then get your facts straight where it matters. Not your self thought cute remarks.

Do more research or don't state something you know nothing about. I have supported CAD and Engineering Environments and the applications they use for 8yrs now, before that 5 yrs more of IT support experience.

aranilah - Monday, June 25, 2012 - link

please put up a graph of the 680 overclocked to its maximum potential versus this to its maximum oc, that would be a different story i believe , not sure though. Please do it because on your 680 review there is no OC testing :/

MrSpadge - Monday, June 25, 2012 - link

- AMD's boost assumes the stock heatsink - how is this affected by custom / 3rd party heat sinks? Will the chip think it's melting, whereas in reality it's cruising along just fine?

- A simple fix would be to read out the actual temperature diode(s) already present within the chip. Sure, not deterministic.. but AMD could let users switch to this mode for better accuracy.

- AMD could implement a calibration routine into the control panel to adjust the digital temperature estimation to the actual heat sink present -> this might avoid the problem altogether.

- Overvolting just to reach 1.05 GHz? I don't think this is necessary. Actually, I think AMD is generously overvolting most CPUs and some GPUs in the recent years. Some calibration for the actual chip capability would be nice as well - i.e. test if MY GPU really needs more voltage to reach the boost clock.

- 4 digit product numbers and only fully using 2 of them, plus the 3rd one to a limited extent (only 2 states to distinguish - 5 and 7). This is ridiculous! The numbers are there to indicate performance!!!

- Bring out cheaper 1.5 GB versions for us number crunchers.

- Bring an HD7960 with approx. the same amount of shaders as the HD7950, but ~1 GHz clock speeds. Most chips should easily do this.. and AMD could sell the same chip for more, since it would be faster.

Hrel - Monday, June 25, 2012 - link

How can you write a review like this, specifically to test one card against another, then only overclock one of them in the "OC gaming performance" section. Push the GTX680 as far as you can too otherwise those results are completely meaningless; for comparison.