Intel Dual-Core Mobile Ivy Bridge Launch and i5-3427U Ultrabook Review

by Jarred Walton on May 31, 2012 12:01 AM EST - Posted in

- Laptops

- CPUs

- Intel

- Ivy Bridge

- Ultrabook

Ivy Bridge Ultrabook Quick Sync and 3DMark Performance

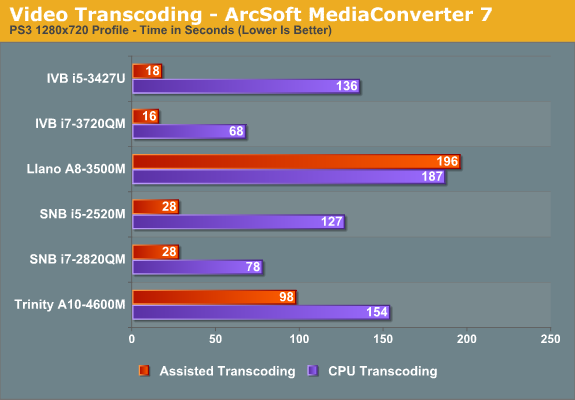

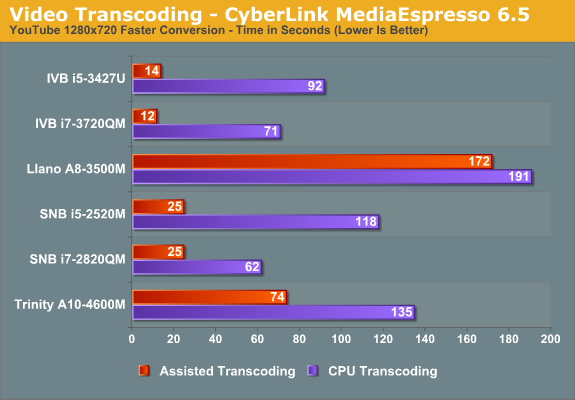

Buying a laptop isn’t just about generic office and Internet applications, naturally. Intel (and AMD and NVIDIA) have been pushing video and image processing applications as increasingly important in our digital Facebook/YouTube/etc. world. We’ve looked at two video transcoding applications from ArcSoft and CyberLink several times already, so let’s see how Ivy Bridge Ultrabooks rate. We ran a video transcode, converting a 3-minute, 43-second 1080p24 clip taken with a Nikon D3100 camera into a 720p video, and timed how long the process takes.

Quick Sync continues to be the undisputed champion in these two applications, beating AMD’s accelerated transcode on Trinity by a factor of five. However, among people who are really into video transcoding and want more control over quality, we don’t know many who use MediaEspresso or MediaConverter. For free software, Handbrake is probably the most popular option right now, and as we showed previously, AMD has been working on a beta OpenCL-accelerated version of Handbrake that comes very close to quad-core Ivy Bridge performance.

It remains to be seen when the public release of Handbrake will get such support, and Intel and NVIDIA will also want the OpenCL version to run well on their hardware. Still, it’s important to keep these other developments in mind. For now, the best-quality transcodes still come by way of the CPU, and in that case ULV Ivy Bridge offers better performance than AMD’s Trinity A10, never mind the standard voltage parts. We expect additional software companies to start looking at ways to leverage OpenCL, GPUs, APUs, and Quick Sync to help with this sort of workload going forward.

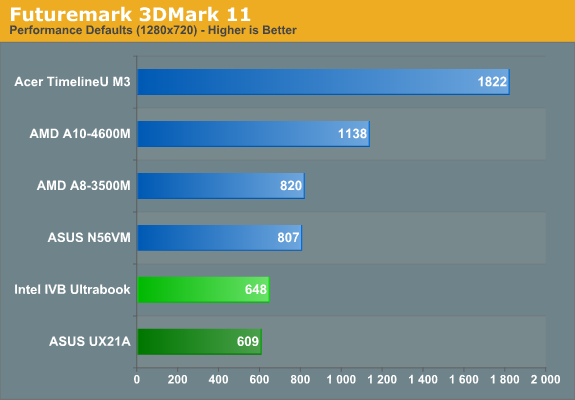

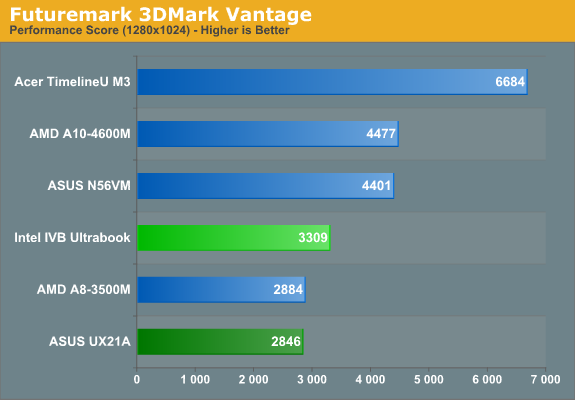

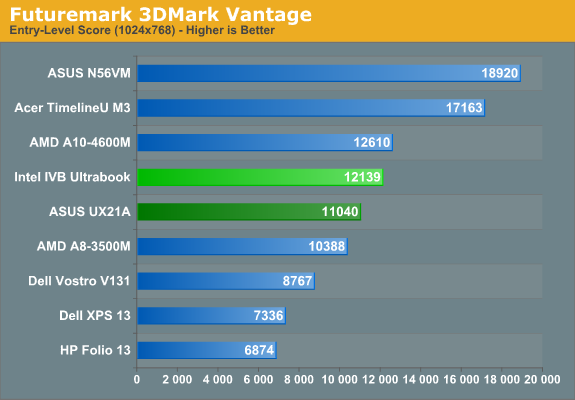

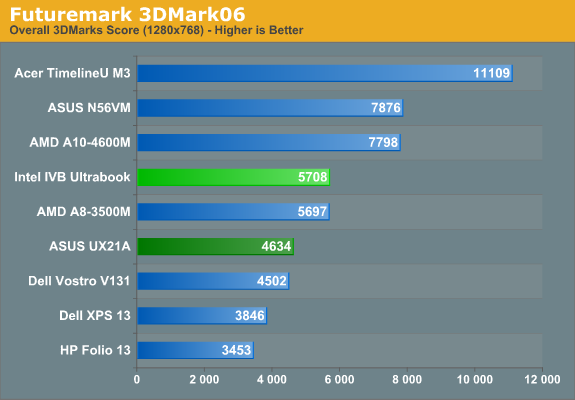

As for synthetic graphics performance, Intel has performed quite well in 3DMark for several years now; we’d argue their 3DMark scores are often far better optimized than their actual gaming performance. Still, the 3DMark suites are a nice way to compare across several generations of hardware, so we continue to run them on laptops. Again we see the UX21A trail the IVB ULV prototype, despite having a higher-performance i7 CPU; thermal issues are the most likely cause, and the difference ranges from a rather minor 6% gap in 3DMark11 up to 23% in 3DMark06. Also interesting is that the quad-core i7-3720QM, whose GPU is clocked only up to 9% higher, leads the ULV IVB part by 25% (3DMark11) to as much as 33% or more! The extra (and faster) CPU cores might be a factor, but it’s also likely that the i7-3720QM is able to hit its maximum 1250MHz GPU clock far more often than the i5-3427U can hit its maximum 1150MHz clock.
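As a quick sanity check on the clock figures above, here is a minimal Python sketch that uses only the two maximum HD 4000 clocks quoted in the text (1250MHz for the i7-3720QM, 1150MHz for the i5-3427U); the helper name `percent_higher` is our own, purely for illustration. It shows the peak clock gap works out to roughly 8.7%, which rounds to the 9% cited, so a 25-33% performance lead is about three times larger than peak clocks alone would suggest.

```python
def percent_higher(a: float, b: float) -> float:
    """Return how much higher a is than b, expressed as a percentage of b."""
    return (a / b - 1.0) * 100.0

# Maximum HD 4000 GPU clocks quoted in the text, in MHz
I7_3720QM_MAX_GPU_MHZ = 1250
I5_3427U_MAX_GPU_MHZ = 1150

clock_gap = percent_higher(I7_3720QM_MAX_GPU_MHZ, I5_3427U_MAX_GPU_MHZ)
print(f"Peak GPU clock advantage: {clock_gap:.1f}%")
```

This only compares peak clocks; it says nothing about the clocks each chip actually sustains under load, which is exactly the factor the text points to as the more likely explanation.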

Once we get beyond the Intel IGP comparisons, however, things don’t look nearly as good for ULV Ivy Bridge. Llano is around 25% faster in 3DMark11, though ULV IVB comes out ahead in the other results; Trinity, on the other hand, is 35-75% faster in the three main results (i.e. not counting 3DMark Vantage Entry, which does very little to stress the GPU). Again, the most interesting comparison is unfortunately one we can’t make yet: how will the 25W A10-4655M Trinity APU (with GPU clocks almost 30% lower than the 35W A10) compare with ULV IVB? We’ll have to take a wait-and-see approach on that one, but depending on the game it may or may not be a close matchup.

64 Comments


Oatmeal25 - Thursday, May 31, 2012

Shift+End.

Ultrabooks are almost there (for anyone doing more than web browsing). They just need to take fewer shortcuts with the screen, GPU, and storage, and put less emphasis on the CPU. Consistent build quality and lower prices wouldn't hurt either.

Wish Intel would work harder on their integrated GPUs. I have an HD3000 in my Lenovo Y570 (it also has a GT 555M with Optimus switching), and when the HD3000 is in use, dragging windows in Win7 is choppy.

JarredWalton - Thursday, May 31, 2012

But there's no "End" key, which is why I list the Fn+Right key combination. Shift+Right or Control+Shift+Right alone rarely, if ever, triggers the issue I experienced; it's when I have to press a lot of keys at once that it gets iffy. For example, using Fn+Control+Shift+Right to "select to end of document" frequently ends up with the Control key registered as pressed when I'm done, so I have to tap it again just to let the OS know I've released the key.

mikk - Thursday, May 31, 2012

JarredWalton: "The HD 4000 ULV clocks are interesting. Base clocks are very low, but maximum clocks are quite high. With better cooling and configurable TDP (e.g. TDP Up or whatever it's called), it's possible there will be Ultrabooks that manage to get within 10% of the quad-core HD 4000 for graphics performance. However, Intel is only guaranteeing a rather low 350MHz iGPU clock, so in practice I bet average gaming clocks will be in the 700-900MHz range."

Why don't you record the frequency used in games with GPU-Z? That would be interesting. It would also be interesting to see how it performs with CPU turbo disabled, to give more headroom to the iGPU.

JarredWalton - Thursday, May 31, 2012

Working on it (see above). I'll update the article when I have some results.

thejoelhansen - Thursday, May 31, 2012

I see the next-gen quad-core part, the 3720QM (2.6GHz), in the mix. However, there wasn't a quad-core from last gen. Any chance of an update with a 26xx-27xx part?

I realize the article was more about the ULV and new dual-core IVB chips for Ultrabooks, but I'm kinda curious how all these new dual-cores stack up to last gen's quads. Might be interesting...?

Anyway, thanks for the well written and documented article and benchmarks (as always). :)

JarredWalton - Thursday, May 31, 2012

There's always Mobile Bench. Here's the comparison you're after: http://www.anandtech.com/bench/Product/608?vs=327

Yangorang - Thursday, May 31, 2012

So do you guys know what frequencies the GPU was running at while benchmarking games? I am curious whether better cooling or lower ambient temps could actually net you significantly better or worse framerates.

name99 - Thursday, May 31, 2012

"There is an unused mini-PCIe slot just above the SSD, which might also support mSATA"

The last time we went through this (with comments complaining about companies using their own SSD connectors instead of mSATA), the informed conclusion seemed to be that mSATA was, at least right now, a "proto-spec": a nice idea that was not actually well-defined enough to translate into real, inter-compatible products. The Wikipedia section on mSATA, while not exactly clear, seems to confirm this impression.

So what's up here? Is mSATA a real standard (as in, I can go buy an mSATA drive from vendor A, slot it into an mSATA slot from vendor B, and have it work)? If not, then why bother speculating about whether slots do or don't support it?

JarredWalton - Thursday, May 31, 2012

It was more a thought along the lines of: "If this were a retail laptop, instead of an SSD they could use an HDD and put an mSATA caching drive right here." Can you buy mSATA drives and use them in different laptops? I don't know; Apple and ASUS certainly have incompatible "gumstick drive" connections. I was under the impression that mSATA was a standard, but apparently it's not very strict if that's the case. It would benefit the drive makers to all agree on something, though, as right now they might end up having to make several different SSD models if they want to support the MBA, Zenbook, other mSATA, etc.

Penti - Friday, June 1, 2012

mSATA is a standard; Apple and ASUS just don't use it. You can normally use an mSATA slot for a retail mSATA SSD or any conforming product. Some mSATA slots can also support PCIe, obviously, but there should be few of them in today's laptops. Lenovo, HP, and Dell machines should be just fine running a real SSD instead of a cache drive there; just look at what other users have done with the same model to be sure. They only appear to be stupid about it; an mSATA SSD + HDD is an almost perfect solution.

mSATA is specified by SATA-IO in the SATA 3.1 specification, and an earlier JEDEC specification defines the mechanical design, i.e. the same size as a normal mini-PCIe card. Not all mSATA slots will have PCIe, though, and not all mini-PCIe slots will support SATA, so you really have to know beforehand; a switchable/multi-signal slot requires some additional circuitry.

It's not like it is costly for ASUS and Apple to order their custom designs. You can question ASUS's decision, though; Apple made theirs before mSATA had made any headway. It's not like 256GB mSATA SSDs aren't around. SanDisk (which ASUS uses) offers its drives in various form factors, both custom and standardized, including mSATA: sandisk.com/business-solutions/ssd/form-factor-development. They finance and produce different boards for different customers; these are fairly simple PCBs and they already know the electrical requirements, so it's not a big deal.