Intel Core i5 3470 Review: HD 2500 Graphics Tested

by Anand Lal Shimpi on May 31, 2012 12:00 AM EST

Posted in: CPUs, Intel, Ivy Bridge, GPUs

Intel's first 22nm CPU, codenamed Ivy Bridge, is off to an odd start. Intel unveiled many of the quad-core desktop and mobile parts last month, but only sampled a single chip to reviewers. Dual-core mobile parts are announced today, as are their ultra-low-voltage counterparts for use in Ultrabooks. One dual-core desktop part gets announced today as well, but the bulk of the dual-core lineup won't surface until later this year. Furthermore, Intel only revealed the die size and transistor count of a single configuration: a quad-core with GT2 graphics.

Compare this to the Sandy Bridge launch a year prior, where Intel sampled four different CPUs and gave us a detailed breakdown of die size and transistor counts for quad-core, dual-core and GT1/GT2 configurations. Why the change? Various sects within Intel management have different feelings on how much or how little information should be shared. It's also true that at the highest levels there's a bit of paranoia about the threat ARM poses to Intel in the long run. Combine the two and you can see how some folks at Intel might feel it's better to be a bit more guarded. I don't agree, but this is the hand we've been dealt.

Intel also introduced a new part into the Ivy Bridge lineup while we weren't looking: the Core i5-3470. At the Ivy Bridge launch we were told about a Core i5-3450, a quad-core CPU clocked at 3.1GHz with Intel's HD 2500 graphics. The 3470 is nearly identical, but runs 100MHz faster. We're often hard on AMD for introducing SKUs separated by only 100MHz and a handful of dollars, so it's worth pointing out that Intel is doing exactly the same thing here. It's possible that 22nm yields are doing better than expected and the 3470 will simply take the place of the 3450 before long. The two are technically priced the same, so I can see this happening.

Intel 2012 CPU Lineup (Standard Power)

| Processor | Core Clock | Cores / Threads | L3 Cache | Max Turbo | Intel HD Graphics | TDP | Price |
|---|---|---|---|---|---|---|---|
| Intel Core i7-3960X | 3.3GHz | 6 / 12 | 15MB | 3.9GHz | N/A | 130W | $999 |
| Intel Core i7-3930K | 3.2GHz | 6 / 12 | 12MB | 3.8GHz | N/A | 130W | $583 |
| Intel Core i7-3820 | 3.6GHz | 4 / 8 | 10MB | 3.9GHz | N/A | 130W | $294 |
| Intel Core i7-3770K | 3.5GHz | 4 / 8 | 8MB | 3.9GHz | 4000 | 77W | $332 |
| Intel Core i7-3770 | 3.4GHz | 4 / 8 | 8MB | 3.9GHz | 4000 | 77W | $294 |
| Intel Core i5-3570K | 3.4GHz | 4 / 4 | 6MB | 3.8GHz | 4000 | 77W | $225 |
| Intel Core i5-3550 | 3.3GHz | 4 / 4 | 6MB | 3.7GHz | 2500 | 77W | $205 |
| Intel Core i5-3470 | 3.2GHz | 4 / 4 | 6MB | 3.6GHz | 2500 | 77W | $184 |
| Intel Core i5-3450 | 3.1GHz | 4 / 4 | 6MB | 3.5GHz | 2500 | 77W | $184 |
| Intel Core i7-2700K | 3.5GHz | 4 / 8 | 8MB | 3.9GHz | 3000 | 95W | $332 |
| Intel Core i5-2550K | 3.4GHz | 4 / 4 | 6MB | 3.8GHz | 3000 | 95W | $225 |
| Intel Core i5-2500 | 3.3GHz | 4 / 4 | 6MB | 3.7GHz | 2000 | 95W | $205 |
| Intel Core i5-2400 | 3.1GHz | 4 / 4 | 6MB | 3.4GHz | 2000 | 95W | $195 |
| Intel Core i5-2320 | 3.0GHz | 4 / 4 | 6MB | 3.3GHz | 2000 | 95W | $177 |

The 3470 does support Intel's vPro, SIPP, VT-x, VT-d, AES-NI and Intel TXT, so you're getting a fairly full-featured SKU with this part. It isn't fully unlocked, meaning the max overclock is only four bins (400MHz) above the max turbo frequencies. The table below summarizes what you can get out of a 3470:

Intel Core i5-3470

| Number of Cores Active | 1C | 2C | 3C | 4C |
|---|---|---|---|---|
| Default Max Turbo | 3.6GHz | 3.6GHz | 3.5GHz | 3.4GHz |
| Max Overclock | 4.0GHz | 4.0GHz | 3.9GHz | 3.8GHz |
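The relationship between the two rows of the table can be sketched in a few lines of Python. This is only an illustration: it assumes each multiplier bin is worth 100MHz (which is what the table's 400MHz gap implies), and the names `TURBO_MHZ`, `BIN_MHZ`, `EXTRA_BINS` and `max_overclock` are made up for this example rather than taken from any Intel tool.

```python
# Default max turbo per number of active cores, in MHz (from the table above).
TURBO_MHZ = {1: 3600, 2: 3600, 3: 3500, 4: 3400}

BIN_MHZ = 100    # assumption: one multiplier bin corresponds to 100MHz
EXTRA_BINS = 4   # partially unlocked: four bins above max turbo


def max_overclock(turbo_mhz, extra_bins=EXTRA_BINS, bin_mhz=BIN_MHZ):
    """Return the highest selectable frequency (MHz) per active-core count."""
    return {cores: mhz + extra_bins * bin_mhz for cores, mhz in turbo_mhz.items()}


if __name__ == "__main__":
    for cores, mhz in max_overclock(TURBO_MHZ).items():
        print(f"{cores}C: {mhz / 1000:.1f}GHz")
```

Under those assumptions the computed ceilings match the Max Overclock row for every active-core count.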

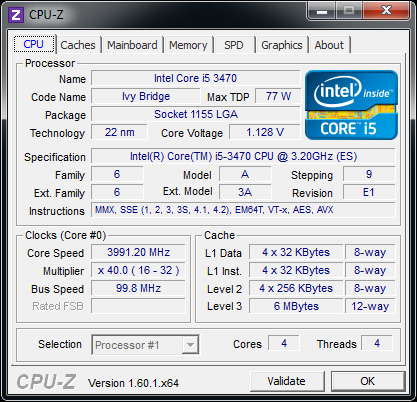

In practice I had no issues running at the max overclock, even without touching the voltage settings on my testbed's Intel DZ77GA-70K board:

It's really an effortless overclock, but you have to be OK with the knowledge that your chip could likely go even faster were it not for the artificial multiplier limitation. Performance and power consumption at the overclocked frequency are both reasonable:

Power Consumption Comparison

| Intel DZ77GA-70K | Idle | Load (x264 2nd pass) |
|---|---|---|
| Intel Core i7-3770K | 60.9W | 121.2W |
| Intel Core i5-3470 | 54.4W | 96.6W |
| Intel Core i5-3470 @ Max OC | 54.4W | 110.1W |
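For a rough sense of what the overclock costs, the load figures above can be compared directly. The sketch below is a minimal Python example that uses only the numbers from the table; the helper name `pct_increase` and the dictionary keys are illustrative.

```python
# Power figures in watts, copied from the table above.
IDLE_W = {"i5-3470": 54.4, "i5-3470 @ Max OC": 54.4}
LOAD_W = {"i5-3470": 96.6, "i5-3470 @ Max OC": 110.1}


def pct_increase(before, after):
    """Relative increase from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


if __name__ == "__main__":
    stock = LOAD_W["i5-3470"]
    overclocked = LOAD_W["i5-3470 @ Max OC"]
    delta_w = overclocked - stock
    print(f"Load power: +{delta_w:.1f}W ({pct_increase(stock, overclocked):.1f}%)")
    print(f"Idle power change: {pct_increase(IDLE_W['i5-3470'], IDLE_W['i5-3470 @ Max OC']):.1f}%")
```

By these figures the overclock adds about 13.5W under load, a little under 14%, while idle power is unchanged.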

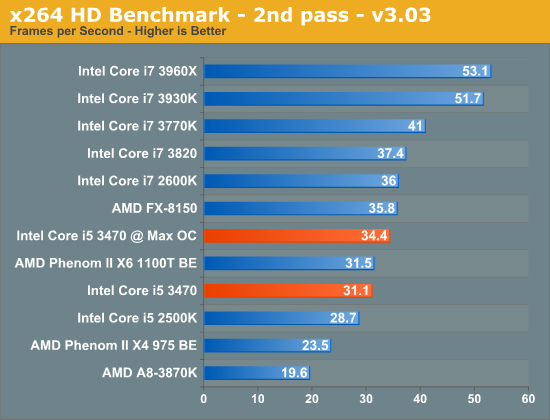

Power consumption doesn't go up by all that much because we aren't scaling the voltage up significantly to get to these higher frequencies. Performance isn't as good as a stock 3770K in this well-threaded test simply because the 3470 lacks Hyper-Threading support:

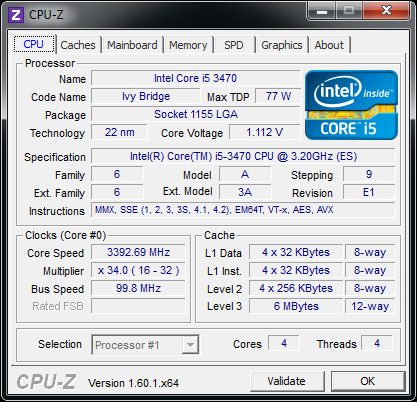

Overall we see a 10% increase in performance for a roughly 14% increase in load power consumption (96.6W to 110.1W). Power-efficient frequency scaling is difficult to attain at higher frequencies. Although I didn't increase the default voltage settings for the 3470, at 3.8GHz (the max 4C overclock) the 3470 is selecting much higher voltages than it would have at its stock 3.4GHz turbo frequency:

67 Comments

BSMonitor - Thursday, May 31, 2012

If they truly were interested in building the best APU. And by that, a knockout iGPU experience.

Where are the dual-core Core i7's with 30-40 EU's??

Or the AMD <whatevers> (not sure anymore what they call their APU) Phenom X2 CPU with 1200 Shaders??

When we are talking about a truly GPU intense application, a LOT of times single/dual core CPU is enough. Heck, if you were to take a dual-core Core 2, and stick it with a GeForce 670 or Radeon 7950.. You would see very similar numbers in terms of gaming performance to what's in the BENCH charts. ESP at the 1920x1080 and below.

Surely Intel can afford another die that aims a ton of transistors at just the GPU side of things. AMD, maybe. Why do we get from BOTH, their top end iGPU stuck with the most transistors dedicated to the CPU??

I find it hard to believe anyone shopping for an APU is hoping for amazing CPU performance to go with their average iGPU performance. That market would be the opposite. Sacrifice a few threads on the CPU side for amazing iGPU.

Am I missing something technically limiting?? Is that many GPU units overkill in terms of power/heat dissipation of the CPU socket??

tipoo - Thursday, May 31, 2012

Well, their chips have to work in a certain set of thermal limits. Maybe at this point 1200 shader cores would not be possible on the same die as a quad core CPU for power consumption and heat reasons. I think Haswell will have 64 EUs though if the rumours are true.

Roland00Address - Thursday, May 31, 2012

There is no point of a 1200 shaders apu due to memory bandwidth. You couldn't feed a beast of an apu with only dual channel 1600 mhz memory when that same memory limits the performance of llano and trinity compared to their gpu cousins which have the same calculation units and core clocks but the gpus perform significantly better.

silverblue - Thursday, May 31, 2012

Possibly, but at the moment, bandwidth is a surefire performance killer.

BSMonitor - Thursday, May 31, 2012

Good Points. But currently, Intel has Quad Channel DDR3-1600 up on the Socket 2011. I am sure AMD could get more bandwidth there too, if they step up the memory controller.

My overall point is that neither is even trying for a low-medium transistor CPU and a high transistor GPU.

It's either Low-Medium CPU with Low-Medium GPU (disabled cores and what have you), or High End CPU with "High End" GPU.

There is no attempt at giving up CPU die space for more GPU transistors from either.. None. If someone spends $$ on the High End of the CPU (Quad Core i7), the implementation of iGPU is not even close to worth using for that much CPU.

Roland00Address - Thursday, May 31, 2012

Quad Channel is not a "free upgrade": it requires many more traces on the motherboard as well as more pins on the cpu socket. This dramatically increases costs for the motherboard and the cpu. Both of those go against what AMD is trying to do with their APUs, which will be both laptop as well as desktop chips. They are trying to increase their margins on their chips, not decrease them.

You have a large number of OEMs only putting a single 4gb ddr3 stick in laptops and desktops (thus not achieving dual channel) in the current apus. You think those same vendors are suddenly going to put 16gbs of memory on an apu (and it is going to be 16gbs since 2gb ddr3 sticks are being phased out by the memory manufacturers)?

tipoo - Thursday, May 31, 2012

I'm curious why the HD4000 outperforms something like the 5450 by nearly double in Skyrim, yet falls behind in something like Portal or Civ, or even Minecraft? Is it immature drivers or something in the architecture itself?

ShieTar - Thursday, May 31, 2012

For Minecraft, read the article and what it has to say about OpenGL.

For Portal or Civ, it might very well be related to Memory Bandwidth. The HD2500 can have 25.6 GB/s (with DDR3-1600), or even more. The 5450 generally comes with half as much (12.8 GB/s), or even a quarter of it since there are also 5450s with DDR2.

As a matter of fact, I remember reading several reports on how much the Llano-Graphics would improve with faster Memory, even beyond DDR3-1600. I haven't seen any tests on the impact of memory speed from Ivy Bridge or Trinity yet, but that would be interesting given their increased computing powers.

silverblue - Thursday, May 31, 2012

I'm sure it'll matter for both, more so for Trinity. I'm not sure we'll see much in the way of a comparison until the desktop Trinity appears, but for IB, I'm certainly waiting.

tipoo - Thursday, May 31, 2012

Having half the memory bandwidth would lead to the reverse expectation, the 5450 is close to or even surpasses the HD4000 with twice the bandwidth in those games, yet the 4000 beats it by almost double in games like Skyrim, even the 2500 beats it there.