NVIDIA Launches Fermi Based GeForce GT 610, GT 620, GT 630 Into Retail

by Ryan Smith on May 19, 2012 8:00 PM EST

While we were off at NVIDIA’s GTC 2012 conference seeing NVIDIA’s latest professional products, NVIDIA’s GeForce group was busy with some launches of their own. The company has quietly launched the GeForce GT 610, GT 620, and GT 630 into the retail market. Unfortunately these are not the Kepler GeForce cards you were probably looking for.

| | GT 630 GDDR5 | GT 630 DDR3 | GT 620 | GT 610 |
|---|---|---|---|---|
| Previous Model Number | GT 440 GDDR5 | GT 440 DDR3 | N/A | GT 520 |
| Stream Processors | 96 | 96 | 96 | 48 |
| Texture Units | 16 | 16 | 16 | 8 |
| ROPs | 4 | 4 | 4 | 4 |
| Core Clock | 810MHz | 810MHz | 700MHz | 810MHz |
| Shader Clock | 1620MHz | 1620MHz | 1400MHz | 1620MHz |
| Memory Clock | 3.2GHz GDDR5 | 1.8GHz DDR3 | 1.8GHz DDR3 | 1.8GHz DDR3 |
| Memory Bus Width | 128-bit | 128-bit | 64-bit | 64-bit |
| Frame Buffer | 1GB | 1GB | 1GB | 1GB |
| GPU | GF108 | GF108 | GF108/GF117? | GF119 |
| TDP | 65W | 65W | 49W | 29W |
| Manufacturing Process | TSMC 40nm | TSMC 40nm | TSMC 40nm | TSMC 40nm |

As NVIDIA was already reusing Fermi GPUs for GeForce 600 series parts for the OEM laptop and desktop market, it was only a matter of time until this came over to the retail market, and that’s exactly what has happened. The GT 610, GT 620, and GT 630 are all based on Fermi GPUs, and in fact two of them are straight-up rebadges of existing GeForce 400 and 500 series cards. Worse, they’re not even consistent with their OEM counterparts – the OEM GT 620 and GT 630 are based on entirely different chips and specs.

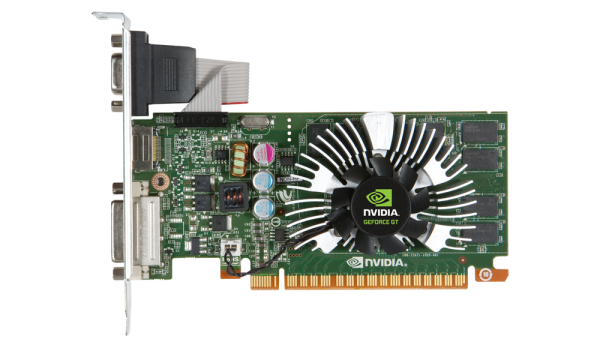

At the bottom of the 600 series retail stack is the GeForce GT 610, which is a rebadge of the GT 520. This means it’s either a GF119 GPU or a cut-down GF108 GPU featuring a meager 48 CUDA cores and a 64-bit memory bus, albeit with a low 29W TDP as a result. This is truly a rock-bottom card meant to be an as-cheap-as-possible upgrade for older computers, as even an Ivy Bridge HD4000 iGPU should be able to handily surpass it.

The second card is the GT 620, which is a variant of the OEM-only GT 530. With 96 CUDA cores we’re not 100% sure that this is GF108 as opposed to the 28nm GF117, but as NVIDIA currently has a 28nm capacity bottleneck we can’t see them placing valuable 28nm chips in low-end retail cards. Furthermore, the 49W TDP perfectly matches the GF108-based GT 530. Compared to the OEM GT 620, the retail model has twice as many CUDA cores, so it has twice as much shader performance on paper, but because of the 64-bit memory bus it’s going to be significantly starved for memory bandwidth.
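To put rough numbers on that trade-off, here is a minimal Python sketch that derives peak shader throughput and memory bandwidth from the figures in the spec table. It rests on two assumptions that the table itself doesn't spell out: that the listed memory clocks are effective data rates, and that each CUDA core retires one fused multiply-add (2 FLOPs) per shader clock. The `Card` class and its field names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    """Hypothetical container for one column of the spec table."""
    name: str
    cores: int
    shader_clock_mhz: float
    mem_rate_ghz: float      # assumed to be the effective data rate
    bus_width_bits: int

    @property
    def gflops(self) -> float:
        # Assumption: one FMA (2 FLOPs) per CUDA core per shader clock.
        return self.cores * self.shader_clock_mhz * 2 / 1000

    @property
    def bandwidth_gbs(self) -> float:
        # Data rate (GT/s) times bus width in bytes gives GB/s.
        return self.mem_rate_ghz * self.bus_width_bits / 8

    @property
    def bytes_per_flop(self) -> float:
        return self.bandwidth_gbs / self.gflops


# Values copied from the spec table above.
CARDS = [
    Card("GT 630 GDDR5", 96, 1620, 3.2, 128),
    Card("GT 630 DDR3", 96, 1620, 1.8, 128),
    Card("GT 620", 96, 1400, 1.8, 64),
    Card("GT 610", 48, 1620, 1.8, 64),
]

for card in CARDS:
    print(
        f"{card.name:<13} {card.gflops:7.1f} GFLOPS  "
        f"{card.bandwidth_gbs:5.1f} GB/s  "
        f"{card.bytes_per_flop:.3f} bytes/FLOP"
    )
```

Under those assumptions the GT 620 works out to 14.4GB/s of memory bandwidth against roughly 269 GFLOPS, the lowest bandwidth per FLOP of the four retail cards.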

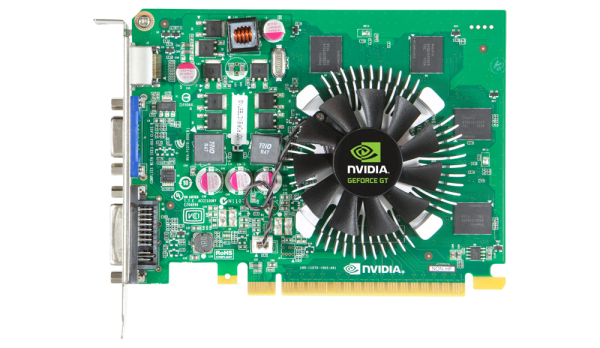

The final new 600 series card is the GT 630, which is a rebadge of the GT 440. Like the GT 440 this card comes in two variants, a model with DDR3 and a model with GDDR5. Both models are based on GF108 and have all 96 CUDA cores enabled, and have the same core clock of 810MHz. At the same time this is going to be the card that deviates from its OEM counterpart the most. The OEM GT 630 was a Kepler GK107 card, so this rules out getting a Kepler based GT 630 retail card any time in the near future.

As always, rebadging doesn’t suddenly make a good card bad – or vice versa – but it’s disappointing to once again see this mess transition over to the retail market. We hold to our belief that previous generation products are perfectly acceptable as they were, and that the desire to have yearly product numbers in an industry that is approaching two-year product cycles is silly at best and confusing at worst.

44 Comments


Scali - Sunday, May 20, 2012 - link

Which may be exactly why they're not bothering to introduce new GPUs at the low-end?

I guess this level of performance will be phased out as it is now being surpassed by APUs. So why develop shiny new 28 nm GPUs for that? They probably wouldn't sell enough to make it worthwhile.

Wwhat - Sunday, May 20, 2012 - link

They should make mixed mode wafers where the central part has the high end thus large GPU's and then the edge has the small low end ones.

I wonder if they ever did the calculation on that, what with the lousy yield of 28nm that might be a real good idea.

Scali - Sunday, May 20, 2012 - link

Not sure about that...

Firstly it would require the extra effort to design and test these low-end parts before it would work.

Secondly, as far as I know it is not possible, or at least not cost-effective to build multiple chips on a single wafer.

Namely you normally re-use a single mask for all chips on a wafer. When you mix them, the mask has to be replaced halfway during lithography.

Lastly, the wafer has the least defects in the center, and the most defects at the edges. The yields may be too low for it to be worthwhile anyway. So it's just as well when they go to waste.

Lonyo - Sunday, May 20, 2012 - link

Except they already have low end GPUs which are being used in the OEM versions of these cards, so they have already developed shiny new 28nm GPUs, they just aren't using them in retail.

CeriseCogburn - Saturday, May 26, 2012 - link

Why thank you yannigr for the 1st bit of sense so far.(although you could be sporting a racist name, so anything you say is not worthy, and should be ignored, and you lost all credibility, and if you had a nicer name maybe someone would listen to you or think you could possibly be telling the plain and obvious truth.)

rolls eyes

Oh sorry, I started fitting in and acting like the children here who taught me all too well.

vision33r - Sunday, May 20, 2012 - link

It's not the enthusiast market that keeps Nvidia afloat it's these low end solutions. I order them by the hundreds for businesses. Maybe a few more years Intel and AMD's internal GPU will be powerful enough to replace them.

MrSpadge - Monday, May 21, 2012 - link

Trinity is more than a match for these low end cards.

CeriseCogburn - Saturday, May 26, 2012 - link

Yes it appears trinity is a slight bit faster than a GTX 525, but so what ?

You know how many millions of Athlon 2 / pentium D / Pentium 900 / Cedar Mill / Phenom / Phenom 2 systems are out there with an empty PCI-E slot in them - that could use a DX11 vid card where Trinity can't fit any of them at all ever ?

I'm kind of sick of the mindset that is about this place, where the only thought that comes to mind is ripping away with the clueless singular notion that fps in a game compared to any amd fan spew gpu of the moment is what counts even when no comparison in any dream world is pertinent, and added to that the endless ranting about nVidia making money or deceiving everyone.

It's just amazing how all of you are so much more intelligent than the rest of the world, that after an article you can spew out some amd fan piece, then proclaim everyone else is fooled because you the brightest bulbs on earth know all the tricks...

Man what a downer you people are. Really, what a freakin downer.

None of you ever seem to have a system you could use a card like one of these in. I say you probably all have a core2 or lesser around that could, but you're too busy bashing and ripping to even think. Maybe with your vast experiences you've never even opened your walmart box and have zero clue it has a 16X pci-e slot ready and waiting.

Yes, the top end Trinity is likely faster, so go buy the non -existent $800 + laptop and tell us how bad amd is spanking a $40 Dx11 card.

Surprisingly, when it's cheap as dirt, the usual amd fanboy reaction is massive greed kicks in and they drool to yank one off the shelf somewhere especially if some other release will drive the price down to pauper pleasing level.

Wow guess I freaked out. Good job amd fan.

tipoo - Tuesday, May 22, 2012 - link

They already are. Trinity will already be ahead of these for sure, and the HD4000 is probably ahead of them too, at least the bottom two.

CeriseCogburn - Saturday, May 26, 2012 - link

Not with DX11.

Amd dropped support on the HD4000 too.

Great job amd fan.