The AMD Trinity Review (A10-4600M): A New Hope

by Jarred Walton on May 15, 2012 12:00 AM EST

Improved Turbo

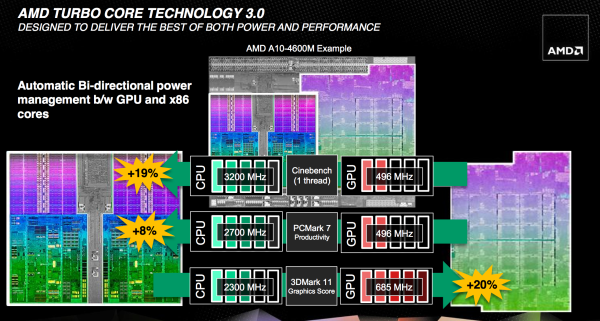

Trinity features a much improved version of AMD's Turbo Core technology compared to Llano. First and foremost, both CPU and GPU turbo are now supported. In Llano only the CPU cores could turbo up if there was additional TDP headroom available, while the GPU cores ran no higher than their max specified frequency. In Trinity, if the CPU cores aren't using all of their allocated TDP but the GPU is under heavy load, it can exceed its typical max frequency to capitalize on the available TDP. The same obviously works in reverse.
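As a rough illustration of the shared budget described above, here is a minimal sketch in Python. The 35 W TDP, the per-block power figures, and the linear `watts_per_mhz` cost are assumptions made up for the example and are not AMD's actual power management algorithm; only the 497MHz and 686MHz GPU clocks come from this review.

```python
def gpu_clock_mhz(tdp_watts, cpu_power_watts, gpu_power_at_base_watts,
                  base_mhz=497.0, turbo_mhz=686.0, watts_per_mhz=0.03):
    """Return a GPU clock given how much of the shared TDP is left over.

    Hypothetical model: every extra MHz above base costs a fixed number of
    watts (watts_per_mhz). Real silicon is not linear like this.
    """
    # Power left in the package budget once CPU and base-clock GPU are paid for.
    headroom = tdp_watts - cpu_power_watts - gpu_power_at_base_watts
    # Negative headroom means no turbo; it never pushes the clock below base here.
    extra_mhz = max(0.0, headroom) / watts_per_mhz
    # Turbo is capped at the specified maximum turbo frequency.
    return min(turbo_mhz, base_mhz + extra_mhz)


# CPU mostly idle: plenty of headroom, so the GPU reaches its turbo ceiling.
light_cpu = gpu_clock_mhz(35.0, 10.0, 15.0)
# CPU consuming its full share: no headroom, GPU stays at its nominal clock.
heavy_cpu = gpu_clock_mhz(35.0, 20.0, 15.0)
```

The same function with the roles swapped would describe the CPU borrowing budget from an idle GPU.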

Under the hood, the microcontroller that monitors all power consumption within the APU is much more capable. In Llano, the Turbo Core microcontroller looked at activity on the CPU/GPU and performed a static allocation of power based on this data. In Trinity, AMD implemented a physics-based thermal calculation model using fast transforms. The model takes power and translates it into a dynamic temperature calculation. Power is still estimated from workload, with what AMD claims is less than a 1% error rate, but the new model derives accurate temperatures from those estimates. The thermal model is accurate to within 2C, in real time. Having more accurate thermal data allows the turbo microcontroller to respond more quickly, which should allow frequencies to scale up and down more effectively.
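AMD has not published the details of its model, so the following is only a sketch of the general idea of turning a power estimate into a running temperature estimate. It uses a single-node RC thermal model stepped with forward Euler rather than the fast-transform approach AMD describes, and the resistance, capacitance, and ambient values are hypothetical.

```python
def simulate_temperature(power_watts, dt=0.001, r_th=1.5, c_th=0.02,
                         t_ambient=45.0):
    """Step a first-order thermal model over a sequence of power samples.

    Model: dT/dt = (P * R - (T - T_ambient)) / (R * C)
    r_th is thermal resistance in C/W and c_th is thermal capacitance in J/C.
    Both are made-up values, not Trinity measurements.
    """
    temps = []
    temp = t_ambient
    for power in power_watts:
        # Forward Euler update; stable because dt is much smaller than R * C.
        temp += dt * (power * r_th - (temp - t_ambient)) / (r_th * c_th)
        temps.append(temp)
    return temps


# One second of a constant 20 W load, sampled every millisecond.
trace = simulate_temperature([20.0] * 1000)
# The model settles at T_ambient + P * R.
steady_state = 45.0 + 20.0 * 1.5
```

A turbo controller built on an estimate like this could raise clocks while the predicted temperature stays under a limit and back off as it approaches it.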

At the end of the day this should improve performance, although it's difficult to compare directly to Llano since so much has changed between the two APUs. Just as with Llano, AMD specifies nominal and max turbo frequencies for the Trinity CPU/GPU.

A Beefy Set of Interconnects

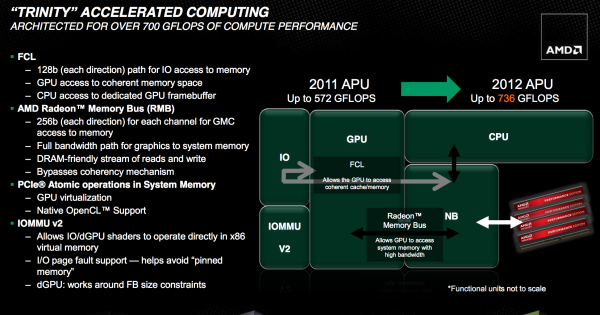

The holy grail for AMD (and Intel for that matter) is a single piece of silicon with CPU and GPU style cores that coexist harmoniously, each doing what they do best. We're not quite there yet, but in pursuit of that goal it's important to have tons of bandwidth available on chip.

Trinity still features two 64-bit DDR3 memory controllers with support for up to DDR3-1866 speeds. The controllers add support for 1.25V memory. Notebook bound Trinities (Socket FS1r2 and Socket FP2) support up to 32GB of memory, while the desktop variants (Socket FM2) can handle up to 64GB.
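The peak theoretical bandwidth implied by those figures is simple arithmetic, sketched below. The helper name is ours, the data rate is taken as a round 1866 MT/s, and sustained bandwidth in practice will be lower than this ceiling.

```python
def peak_bandwidth_gbs(channels, bus_width_bits, megatransfers_per_sec):
    """Peak theoretical DRAM bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits // 8
    bytes_per_sec = channels * bytes_per_transfer * megatransfers_per_sec * 1e6
    return bytes_per_sec / 1e9


# Two 64-bit channels at DDR3-1866: 2 * 8 bytes * 1866 MT/s, about 29.9 GB/s.
trinity_peak = peak_bandwidth_gbs(channels=2, bus_width_bits=64,
                                  megatransfers_per_sec=1866)
```

That figure is shared between the CPU cores and the GPU, which is why memory speed matters so much for integrated graphics.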

HyperTransport is gone as an external interconnect, leaving only PCIe for off-chip IO. The Fusion Control Link is a 128-bit (each direction) interface giving off-chip IO devices access to system memory. Trinity also features a 256-bit (in each direction, per memory channel) Radeon Memory Bus (RMB) that provides direct access to the DRAM controllers. The excessive width of this bus likely implies that it's also used for CPU/GPU communication.

IOMMU v2 is also supported by Trinity, giving supported discrete GPUs (e.g. Tahiti) access to the CPU's virtual memory. In Llano, you used to take data from disk, copy it to memory, then copy it from the CPU's address space to pinned memory that's accessible by the GPU, then the GPU gets it and brings it into its frame buffer. By having access to the CPU's virtual address space now the data goes from disk, to memory, then directly to the GPU's memory—you skip that intermediate mem to mem copy. Eventually we'll get to the point where there's truly one unified address space, but steps like these are what will get us there.

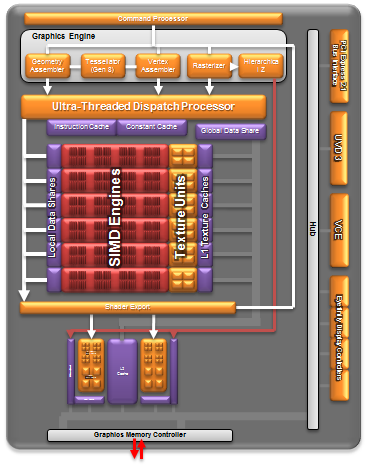

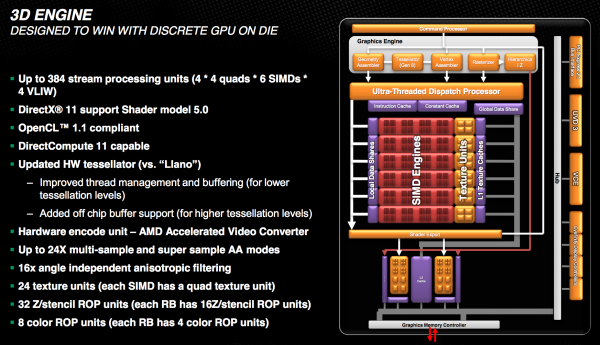

The Trinity GPU

Trinity's GPU is probably the best understood part of the chip, seeing as how it's basically a cut down Cayman from AMD's Northern Islands family. The VLIW4 design features 6 SIMD engines, each with 16 VLIW4 arrays, for a total of up to 384 cores. The A10 SKUs get 384 cores while the lower end A8 and A6 parts get 256 and 192, respectively. FP64 is supported but at 1/16 the FP32 rate.
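The core counts follow directly from that layout, as the short sketch below shows. The SIMD counts for the A8 and A6 are only what the arithmetic implies (256 / 64 and 192 / 64); this page does not state how those parts are actually configured, and the function names are ours.

```python
LANES_PER_VLIW4_ARRAY = 4    # each VLIW4 array has four ALU lanes ("cores")
VLIW4_ARRAYS_PER_SIMD = 16   # per the description above
CORES_PER_SIMD = LANES_PER_VLIW4_ARRAY * VLIW4_ARRAYS_PER_SIMD  # 64


def shader_cores(simd_engines):
    """Total cores for a given number of SIMD engines."""
    return simd_engines * CORES_PER_SIMD


def simd_engines_for(cores):
    """Number of SIMD engines implied by a core count."""
    engines, remainder = divmod(cores, CORES_PER_SIMD)
    if remainder:
        raise ValueError("core count is not a whole number of SIMD engines")
    return engines


a10_cores = shader_cores(6)           # 384
a8_engines = simd_engines_for(256)    # 4, inferred
a6_engines = simd_engines_for(192)    # 3, inferred
```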

As AMD never released any low-end Northern Islands VLIW4 parts, Trinity's GPU is a bit unique. It technically has fewer cores than Llano's GPU, but as we saw with AMD's transition from VLIW5 to VLIW4, the loss didn't really impact performance but rather drove up efficiency. Remember that most of the time that 5th unit in AMD's VLIW5 architectures went unused.

The design features 24 texture units and 8 ROPs, in line with what you'd expect from what's effectively 1/4 of a Cayman/Radeon HD 6970. Clock speeds are obviously lower than a full blown Cayman, but not by a ton. Trinity's GPU runs at a normal maximum of 497MHz and can turbo up as high as 686MHz.
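To put those clocks in context, the sketch below works out peak theoretical throughput and the ratio to Cayman. It assumes the usual convention of 2 FLOPs per core per clock (one multiply-add), and the Cayman figures (1536 cores, 96 texture units, 32 ROPs) are the Radeon HD 6970's published specifications rather than numbers from this page. These are ceilings, not measured performance.

```python
def peak_gflops(cores, clock_mhz, flops_per_core_per_clock=2):
    """Peak theoretical single-precision GFLOPS."""
    return cores * flops_per_core_per_clock * clock_mhz / 1000.0


TRINITY = {"cores": 384, "texture_units": 24, "rops": 8}
CAYMAN = {"cores": 1536, "texture_units": 96, "rops": 32}  # Radeon HD 6970

fp32_base = peak_gflops(TRINITY["cores"], 497)    # about 381.7 GFLOPS
fp32_turbo = peak_gflops(TRINITY["cores"], 686)   # about 526.8 GFLOPS
fp64_turbo = fp32_turbo / 16                      # FP64 runs at 1/16 the FP32 rate
turbo_uplift = 686 / 497                          # about 1.38x over nominal

# Each resource is exactly one quarter of a full Cayman.
ratios = {name: TRINITY[name] / CAYMAN[name] for name in TRINITY}
```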

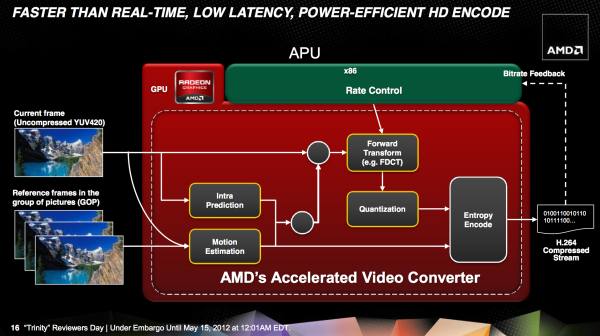

Trinity includes AMD's HD Media Accelerator, which includes accelerated video decode (UVD3) and encode components (VCE). Trinity borrows Graphics Core Next's Video Codec Engine (VCE), and the engine is actually functional in the hardware/software we have here today. Don't get too excited though; the VCE enabled software we have today won't take advantage of the identical hardware in discrete GCN GPUs. AMD tells us this is purely a matter of having the resources to prioritize Trinity first, and that discrete GPU VCE support is coming.

271 Comments

zepi - Tuesday, May 15, 2012 - link

You've got it backwards.

Stuff is priced according to the value it has for customers. To get as much money from their product as possible, regardless of manufacturing costs. Or that's what everybody is aiming for. Trinity is going to be cheap only because it's not good enough to get sales if priced higher.

Best possible outcome for everybody would have been that cheapest Trinity-based laptops would cost about $1500, but they'd be about as fast as Ivy Bridge Quadcore-desktops with Geforce GTX680 and still achieve a battery-life of about 8min per Wh. And performance & price would both have only gone upwards from there on.

That kind of performance-dominance would force Intel and Nvidia to drop their prices considerably (getting us the cheap laptops regardless of trinity being pricey) and we'd still have to option to go for über Trinity's if we'd have the cash.

And it would save AMD from bankruptcy, ensuring that we'd have competition in future as well.

Llano, Brazos and Bulldozer are all horrible products for AMD. Good product is characterized by the fact, that it has considerably more worth to the customer than it costs to manufacture it. If a product is good, it's easy to price it accordingly, and people will still buy it. AMD's CPU's are apparently very bad products, because AMD is making huge losses at the moment. And I don't think it's the GPU-division that's causing those losses.

JarredWalton - Tuesday, May 15, 2012 - link

Products are priced according to where the marketing folks think they'll sell. All you have to do is walk into Best Buy and talk to a sales person to realize that they'll push whatever they can on you, even if it's not faster/better. And I think the bean counters feel they can sell Trinity at $700 or more--and for many people, they're probably right. We'll see $600 and $500 Trinity as well, but that will be the A8 and A6 models, with less RAM and smaller HDDs.

As far as competition, propping up an inferior product in the hope of having more competition isn't healthy, and if AMD has a superior product they simply charge as much as Intel. NVIDIA is the same. If someone came out with a chip that had the CPU performance of IVB and the GPU performance of a GTX card, all while using the power of Brazos...well, you can bet they'd charge an arm and a leg for it. They wouldn't sell it for $1500, they'd sell it for $2500--and some people would buy it.

Ultimately, they're all big businesses, and they (try to) do what's best for the business, so I buy whatever product fits my needs best. I wish Trinity were more impressive, particularly on the CPU side of the equation. I think if Trinity's CPU were as fast as Ivy Bridge, the GPU portion would probably end up being 50% faster than HD 4000; unfortunately, there are titles that require more CPU work (Skyrim for instance) and that starts to level the playing field. But wishing for something that isn't here, or playing the "what if" game, just doesn't really accomplish anything.

Targon - Wednesday, May 16, 2012 - link

And you can get a quad-core A6 laptop for under $500 right now. If you pay attention, you generally get what you pay for. For most users, going with an AMD quad-core laptop does provide a decent product for the price. For some, CPU power is more important, and for others, a more well rounded machine is more important. I expect that A10-4600 laptops will start closer to $600 than $700, unless you are looking at machines with a large screen, discrete graphics, or something else that increases the prices.

CeriseCogburn - Wednesday, May 23, 2012 - link

What you're all missing is all the then second tier Optimus laptops that will have much deflated pricing, as well as the load of $599 amd discrete laptops that will sell like wildfire and please those who waited - just like the amd fans are constantly waiting for nVidia to release so they can snag a second tier deflated price amd card.

Since the "cpu doesn't matter !" as we have been told, there's no excuse to not snag a fine and cheap Optimus that won't have an IB.

This is the "best time in the world" for all the amd fans to forget all prior generations of laptops and pretend, quite unlike in the video card area, that nothing else exists.

I love how amd fans do that crap.

evolucion8 - Tuesday, May 15, 2012 - link

Also remember that Penryn was launched on 2007-2008 and until late 2009, several Core 2 Duo laptops were released. I have a Gateway MD7309u and it was launched on October 2009 and still feeling very snappy and has good battery life, I hate its GMA 4500M with my whole heart.....

Nfarce - Tuesday, May 15, 2012 - link

Yeah well I don't understand the point of buying a low-mid range laptop expecting to be enjoying playing games at basic laptop 1366x768 resolutions. What's the point?

You can spend around $1,200 on a mid-range i5 turbo boost laptop with a discrete GPU and 1600x900 resolution screen that plays games decently without completely shutting down the eye candy sliders. Save up and get a better laptop - an Intel with a dedicated AMD or Nvidia GPU. If you can afford $600 now, you can afford $1,200 down the road and enjoy things much better.

CeriseCogburn - Wednesday, May 23, 2012 - link

I agree but the famdboy loves to torture itself and claim everyone else loves cheap frustrating crap - often characterized as a "mobile employee on the road, in the airplane, or at the hotel spot" needing a "game fix"...(in other words someone flush enough to buy +discrete) as you pointed out.

The rest of the tremendous and greatly pleased "light gamers" will purportedly be playing at work( no scratch that) or on their couch at home (that sounds like the crew) ... and then one has to ask why aren't they using one of the desktops at home for gaming... a $100 vidcard in that will smoke the crap out of "the light gamer".

That leaves "enthusiasts" who just want to play with it and see for a few minutes if they can OC it, and "how it does" with games... and after that they will want to throw it at a wall for how badly it sucks - not to mention their online multi-player avatar will get smoked so badly their stats will plummet... so that will last all of two days.

So we get down to who this thing is really good for - and I suppose that's the young teen to pre teen brat - as a way to get the kid off mommy's or daddy's system so they can have the reins uninterrupted... so the teeny bopper gets the crud low $ cheap walmart lappy system that should also keep them tamed since being too rough with it means the thing snaps in half as the plastic crumbles.

Yep - there it is - teeny bopper punkster will just have to live with the jaggied pixelized low end no eye candy crawler - and why not they still love it much more than homework and have no problem eyeballing the screen.

Latzara - Tuesday, May 15, 2012 - link

While i agree with the 'nothing earthshattering' part I have to wonder what kind of average Internet browsing usage are you commenting on when you say 'People want their laptop to be responsive when doing work, watching movies and browsing' -- Most of the CPUs on the entire board presented here are enough for work - not graphics modelling mind you - Excel, DB, mail, presentations, average calculation load, and even smaller programming projects - which constitutes most of the workload an average worker is gonna get, movies stopped being an issue way before, and what kind of browsing are we talking about that will make your platform unresponsive (i don't mean frozen)? 25 tabs at once? Cause i've done that with a much weaker platform and had no issues...

The main problem i see is that the platform hasn't moved as much as ppl hoped, but enough to be a new iteration in terms of progress - and with the right pricing it could be the sweet spot for many of the broader average consumers - not just the '1% of the 1% of people looking for great gaming' ...

BSMonitor - Tuesday, May 15, 2012 - link

Load up a couple Java runtime environments in those browsers. Some flash. I did have an etc in there. I am a multi-tasker, and cannot stand waiting any amount of time. For the majority of real laptop owners, a late Pentium M, Athlon 64/X2, is not enough power for any real work.

Spunjji - Wednesday, May 16, 2012 - link

Please define a "real" laptop owner? I own an Alienware and I don't do any of that sort of crap. Mind you, most users I have met express more patience than you do, too. Regardless, in none of these metrics do you appear to represent the majority, which is the target market for this chip.