NVIDIA GeForce GTX 690 Review: Ultra Expensive, Ultra Rare, Ultra Fast

by Ryan Smith on May 3, 2012 9:00 AM EST

Meet The GeForce GTX 690

Much like the GTX 680 launch and the GTX 590 before it, the first generation of GTX 690 cards are reference boards built by NVIDIA, with NVIDIA using its partners for distribution and support. In fact NVIDIA is enforcing some pretty strict standards on its partners to maintain a consistent image of the GTX 690: not only will all of the launch cards be based on NVIDIA’s reference design, but NVIDIA’s partners will be severely restricted in how they can dress up their cards, with stickers not being allowed anywhere on the shroud. Partners will only be able to put their mark on the PCB, meaning the bottom and the rear of the card. In the future we’d expect to see NVIDIA’s partners do some customizing through waterblocks and such, but for the most part this will be the face of the GTX 690 throughout its entire run.

And with that said, what a pretty face it is.

Let’s get this clear right off the bat: the GTX 690 is truly a luxury video card. If the $1000 price tag didn’t sell that point, NVIDIA’s design choices will. There are a lot of design choices based on technical reasons, but at the same time NVIDIA has gone out of their way to build the GTX 690 out of metals instead of plastics not for major performance or quality reasons, but rather just because they can. The GTX 690 is a luxury video card and NVIDIA intends to make that fact unmistakable.

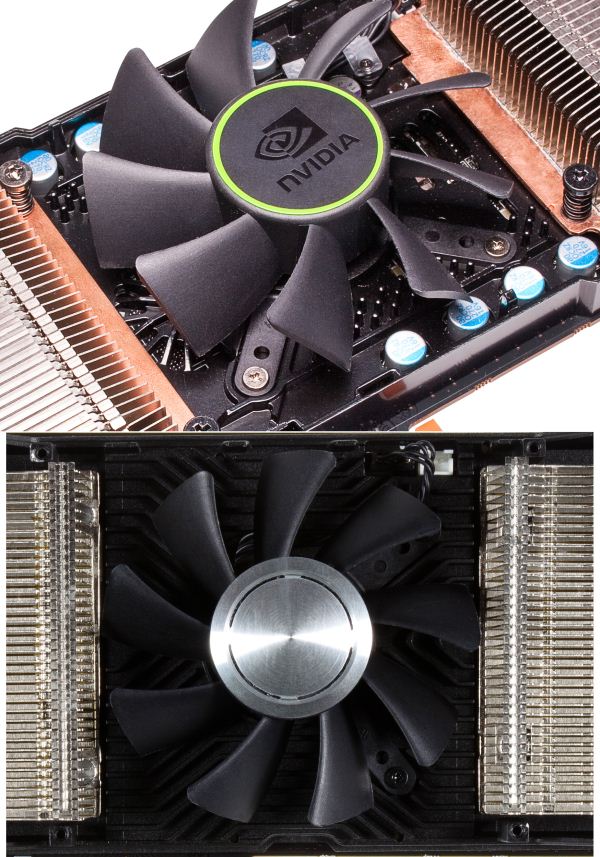

But before we get too far ahead of ourselves, let’s talk about basic design. At its most basic level, the GTX 690 is a reuse of the design principles of the GTX 590. With the exception of perhaps overclocking, the GTX 590 was a well-designed card that greatly improved on the design of past NVIDIA dual-GPU cards and managed to dissipate 365W of heat without sounding like a small hurricane. Since the GTX 690 is designed around the same power constraints and at the same time is a bit simpler in some regards – the GPUs are smaller and the memory busses narrower – NVIDIA has opted to reuse the GTX 590’s basic design.

The reuse of the GTX 590’s design means that the GTX 690 is a 10” long card with a double-wide cooler, making it the same size as the single-GPU GTX 680. The basis of the GTX 690’s cooler is a single axial fan sitting at the center of the card, with a GPU and its RAM on either side. Heat from one GPU goes out the rear of the card, while the heat from the other GPU goes out the front. Heat transfer is once again provided by a pair of nickel tipped aluminum heatsinks attached to vapor chambers, which also marks the first time we’ve seen a vapor chamber used with a 600 series card. Meanwhile a metal baseplate runs along the card at the same height as the top of the GPUs, providing not only structural rigidity but also cooling for the VRMs and RAM.

Compared to the GTX 590 NVIDIA has made a couple of minor tweaks however. The first is that NVIDIA has moved the baseplate a bit higher on the GTX 690 so that it covers all of the components other than the GPU, so that those components don’t need to stick through the baseplate. The idea here is that turbulence is reduced as airflow doesn’t need to deal with those obstructions, instead being generally driven by small channels in the baseplate. The second change is that NVIDIA has rearranged the I/O port configuration so that the stacked DVI connector is moved to the very bottom of the bracket rather than being in roughly the middle, maximizing just how much space is available for venting hot air out of the front of the card. In practice these aren’t huge differences – our test results don’t find the GTX 690 to be significantly quieter than the GTX 590 under gaming loads – but every bit helps.

Of course this design means that you absolutely need an airy case – you’re effectively dissipating 150W to 170W not just into your case, but straight towards the front of your case. As we saw with the GTX 590 and the Radeon HD 6990 this has a detrimental effect on anything that may be directly behind the video card, which for most cases is going to be the HDD cage. As we did with the GTX 590, we took some quick temperature readings with a hard drive positioned directly behind the GTX 690 in order to get an idea of the impact of exhausting hot air in this fashion.

Seagate 500GB Hard Drive Temperatures

| Video Card | Temperature |
| --- | --- |
| GeForce GTX 690 | 38C |
| Radeon HD 7970 | 28C |
| GeForce GTX 680 | 27C |
| GeForce GTX 590 | 42C |
| Radeon HD 6990 | 37C |
| Radeon HD 5970 | 31C |

Unsurprisingly the end result is very similar to the GTX 590. The temperature increase is reduced some thanks to the lower TDP of the card, but we’re still driving up the temperature of our HDD by over 10C. This is still well within the safety range of a HDD and in principle should work, but our best advice to GTX 690 buyers is to keep any drive bays directly behind the GTX 690 clear, just in case. That’s the tradeoff for making it quieter and capable of dissipating more heat than older blower designs.
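To put the "over 10C" figure in context, here is a minimal sketch that computes each card's hard drive temperature rise from the readings in the table. The choice of the GTX 680 as the baseline is our assumption for illustration (it produced the lowest reading), and the names `hdd_temps_c` and `rise_over_baseline` are hypothetical.

```python
# Hard drive temperatures in degrees C, copied from the table above.
hdd_temps_c = {
    "GeForce GTX 690": 38,
    "Radeon HD 7970": 28,
    "GeForce GTX 680": 27,
    "GeForce GTX 590": 42,
    "Radeon HD 6990": 37,
    "Radeon HD 5970": 31,
}

# Assumption: use the coolest result (GTX 680) as the reference point.
BASELINE = "GeForce GTX 680"


def rise_over_baseline(temps, baseline=BASELINE):
    """Return each card's HDD temperature rise relative to the baseline card."""
    base = temps[baseline]
    return {card: temp - base for card, temp in temps.items()}


deltas = rise_over_baseline(hdd_temps_c)

# Print the cards from largest to smallest temperature rise.
for card, delta in sorted(deltas.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{card:<16} +{delta}C")
```

Against that baseline the GTX 690 raises the drive temperature by 11C, compared to 15C for the GTX 590.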

Moving on, let’s talk about the technical details of the GTX 690. GPU power is supplied by 10 VRM phases, divided up into 5 phases per GPU. Like many other aspects of the GTX 690 this is the same basic design as the GTX 590, which means that it should be enough to push up to 365W, but it’s no more designed for overvolting than the GTX 590 was. Any overclocking potential with the GTX 690 will be based on the fact that the card’s default configuration is for 300W, allowing for some liberal adjustment of the power target.

Meanwhile the RAM on the GTX 690 is an interesting choice. NVIDIA is using Samsung 6GHz GDDR5 as opposed to the Hynix 6GHz GDDR5 they used on the GTX 680. We haven’t seen much of Samsung lately, and in fact the last time we had a product with Samsung GDDR5 cross our path was on the GTX 590. This may or may not be significant, but it’s something to keep in mind for when we’re talking about overclocking.
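As a rough illustration of what 6GHz GDDR5 means for memory bandwidth, the sketch below applies the usual formula of bus width times per-pin data rate. The 256-bit bus per GPU is an assumption on our part, since this page only says the busses are narrower than the GTX 590's, and the function name is hypothetical.

```python
def gddr5_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# Assumption: each GK104 GPU has a 256-bit memory bus (not stated on this page).
PER_GPU_BUS_BITS = 256
# "6GHz" GDDR5 is an effective data rate of 6 Gbps per pin.
DATA_RATE_GBPS = 6.0

per_gpu = gddr5_bandwidth_gb_s(PER_GPU_BUS_BITS, DATA_RATE_GBPS)
print(f"Per-GPU peak memory bandwidth: {per_gpu:.0f} GB/s")
```

Each GPU has its own memory pool, so this is a per-GPU figure rather than something that simply adds up across the card.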

Elsewhere NVIDIA’s choice of PCIe bridge is a PLX PCIe 3.0 bridge, which is the first time we’ve seen NVIDIA use a 3rd party bridge. With the GTX 590 and earlier dual-GPU cards NVIDIA used their NF200 bridge, which was a PCIe 2.0 capable bridge chip designed by NVIDIA’s chipset group. However as NVIDIA no longer has a chipset group they also no longer have a group to design such chips, and with NF200 now outdated in the face of PCIe 3.0, NVIDIA has turned to PLX to provide a PCIe 3.0 bridge chip.

It’s worth noting that by using a 3rd party PCIe 3.0 bridge here, NVIDIA has opened up PCIe 3.0 support compared to the GTX 680. Whereas the GTX 680 officially only supported PCIe 3.0 on Ivy Bridge systems, specifically excluding Sandy Bridge-E, NVIDIA is enabling PCIe 3.0 on SNB-E systems thanks to the use of the PLX bridge. So SNB-E system owners won’t need to resort to registry hacks to enable PCIe 3.0, and there don’t appear to be any stability concerns on SNB-E with the PLX bridge. Meanwhile for users with PCIe 2.0 systems such as SNB, the PLX bridge supports the simultaneous use of PCIe 3.0 and PCIe 2.0, so regardless of the system used the GK104 GPUs will always be communicating with each other over PCIe 3.0.
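To illustrate why the mixed-generation support matters, here is a small sketch of the theoretical per-direction bandwidth on each side of the bridge. The per-lane signaling rates and encoding overheads are the standard PCIe 2.0 and 3.0 figures; the x16 link width on every link and the simple "slower side wins" negotiation model are our assumptions, and all names are hypothetical.

```python
# Per-lane signaling rate (GT/s) and encoding efficiency for each PCIe generation.
PCIE_GENS = {
    2: (5.0, 8 / 10),     # PCIe 2.0: 5 GT/s with 8b/10b encoding
    3: (8.0, 128 / 130),  # PCIe 3.0: 8 GT/s with 128b/130b encoding
}


def link_bandwidth_gb_s(gen: int, lanes: int = 16) -> float:
    """Theoretical one-direction link bandwidth in GB/s (x16 is an assumption)."""
    rate_gt_s, efficiency = PCIE_GENS[gen]
    return rate_gt_s * efficiency * lanes / 8


def negotiated_gen(side_a: int, side_b: int) -> int:
    """Simplified model: a link runs at the slower of its two endpoints."""
    return min(side_a, side_b)


BRIDGE_GEN = 3  # the PLX bridge is PCIe 3.0 capable
GPU_GEN = 3     # the GK104 GPUs are PCIe 3.0 capable

for host_name, host_gen in [("PCIe 2.0 host (e.g. SNB)", 2), ("PCIe 3.0 host", 3)]:
    upstream = negotiated_gen(host_gen, BRIDGE_GEN)   # host <-> bridge
    downstream = negotiated_gen(BRIDGE_GEN, GPU_GEN)  # bridge <-> each GPU
    print(
        f"{host_name}: host link {link_bandwidth_gb_s(upstream):.2f} GB/s, "
        f"GPU links {link_bandwidth_gb_s(downstream):.2f} GB/s"
    )
```

In this model the host link drops to PCIe 2.0 rates on an older platform, while the links between the bridge and the two GPUs stay at PCIe 3.0 rates either way.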

Next, let’s talk about external connectivity. On the power side of things the GTX 690 features two 8-pin PCIe power sockets, allowing the card to safely draw up to 375W. The overbuilt power delivery system allows NVIDIA to sell the card as a 300W card while giving it some overclocking headroom for enthusiasts that want to play with the card’s power target. Meanwhile at the front of the card we find the sole SLI connector, which allows for the GTX 690 to be connected to another GTX 690 for quad-SLI.
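The 375W figure follows from the standard PCIe power limits: 75W from the slot plus 150W from each 8-pin connector. The short sketch below works through that arithmetic and the resulting headroom over the 300W default; the constant and function names are hypothetical.

```python
# Standard PCIe power delivery limits, in watts.
PCIE_SLOT_W = 75   # x16 slot
EIGHT_PIN_W = 150  # each 8-pin PCIe power connector


def max_board_power_w(eight_pin_connectors: int) -> int:
    """In-spec power available from the slot plus the given 8-pin connectors."""
    return PCIE_SLOT_W + eight_pin_connectors * EIGHT_PIN_W


DEFAULT_TDP_W = 300  # the GTX 690's default configuration, per the text

max_draw_w = max_board_power_w(2)
headroom_w = max_draw_w - DEFAULT_TDP_W
headroom_pct = headroom_w / DEFAULT_TDP_W * 100

print(f"Max in-spec draw: {max_draw_w}W")
print(f"Headroom over default: {headroom_w}W ({headroom_pct:.0f}%)")
```

Note that this is only the connector budget. The VRM design discussed earlier, which is good for roughly 365W, is the tighter limit in practice.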

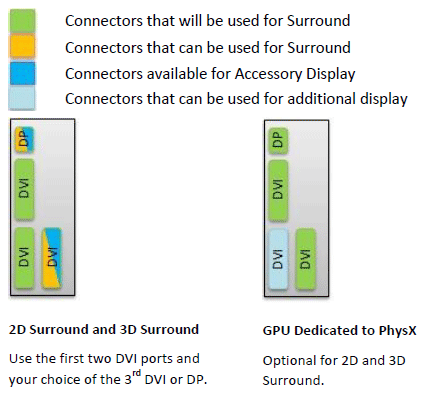

As for display connectivity NVIDIA is reusing the same port configuration we first saw with the GTX 590. This means 3 DL-DVI ports (2 I-type and 1 D-type) and a mini-DisplayPort for a 4th display. Interestingly NVIDIA is tying the display outputs to both GPUs rather than to a single GPU, and seeing as how NVIDIA still lacks display flexibility on par with AMD, this means that the GTX 690 has display configuration limitations similar to the GTX 590 and GTX 680 SLI. We’ve attached the relevant diagrams below, but in short you can’t use one of the DVI ports for a 4th monitor unless you’re in surround mode. It’s not clear at this time where DisplayPort 1.2’s multiple display capability fits into this, but since the MST hubs are still not available it’s not something that can be used at this time anyhow.

Last but certainly not least we have the luxury aspects of the GTX 690. While the basic design of the GTX 690 resembles the GTX 590, NVIDIA has replaced virtually every bit of plastic with metal for aesthetic/perceptual purposes. The basic shroud is composed of cast aluminum while the fan housing is made out of injection molded magnesium. In fact the only place you’ll find plastics on the shroud is on the polycarbonate windows over the heatsinks, which allow you to see the heatsinks just because.

To be clear the GTX 590 was a solid card and NVIDIA could have just as well used plastic again to no detriment, but the use of metal is definitely a noticeable change. The GTX 690 takes the “solid” concept to a completely different level, and while I have no intention of testing it, you could probably clock someone with the card and cause more damage to them than the GTX 690. Coupled with the return of the LED backlit GeForce logo – this time even larger and in the center of the card – and it’s clear that NVIDIA not only wants buyers to feel like they’ve purchased a solid card, but to be able to show it off in a case with a windowed side panel.

Surprisingly, there’s one place where NVIDIA didn’t put a metal part on the GTX 690 that they did on the GTX 590: the back. The GTX 590 shipped with a pair of partial backplates to serve as heatsinks for the RAM on the back of the card, and while the GTX 690 doesn’t have any RAM on its backside thanks to the smaller number of chips required, I’m genuinely surprised NVIDIA didn’t throw in a backplate for the same reason as the metal shroud: just because. Backplates are the scourge of video cards when it comes to placing them directly next to each other because of the space occupied, but with the GTX 690 you need at least 1 free slot anyhow, so this is one of the few times where a backplate wouldn’t get in the way.

200 Comments


CeriseCogburn - Thursday, May 10, 2012

The GTX680 by EVGA in a single sku outsells the combined total sales of the 7870 and 7850 at newegg.

nVidia "vaporware" sells more units than the proclaimed "best deal" 7000 series amd cards.

ROFL

Thanks for not noticing.

Invincible10001 - Sunday, May 13, 2012

Maybe a noob question, but can we expect a mobile version of the 690 on laptops anytime soon?

trumpetlicks - Thursday, May 24, 2012

Compute performance in this case may have to do with 2 things:

- Amount of memory available for the threaded computational algorithm being run, and

- the memory IO throughput capability.

From the rumor-mill, the next NVidia chip may contain 4 GB per chip and a 512 bit bus (which is 2x larger than the GK104).

If you can't feed the beast as fast as it can eat it, then adding more cores won't increase your overall performance.

Joseph Gubbels - Tuesday, May 29, 2012

I am a new reader and equally new to the subject matter, so sorry if this is a dumb question. The second page mentioned that NVIDIA will be limiting its partners' branding of the cards, and that the first generation of GTX 690 cards are reference boards. Does NVIDIA just make a reference design that other companies use to make their own graphics cards? If not, then why would anyone but NVIDIA have any branding on the cards?

Dark0tricks - Saturday, June 2, 2012

anyone who sides with AMD or NVIDIA are retards - side with yourself as a consumer - buy the best card at the time that is available AND right for your NEEDs.fact is the the 690 is trash regardless of whether you are comparing it to a NVIDIA card to a AMD card - if im buying a card like a 690 why the FUCK would i want anything below 1200 P

even if it is uncommon its a mfing trash of a $1000 card considering:

$999 GeForce GTX 690

$499 GeForce GTX 680

$479 Radeon HD 7970

and that SLI and CF both beat(or equal) the 690 at higher res's and cost less(by 1$ for NVIDIA but still like srsly wtf NVIDIA !? and 40$ for AMD) ... WHAT !?

furthermore you guys fighting over bias when the WHOLE mfing GFX community (companies, software developers is built on bias) is utterly ridiculous, GFX vendoers (AMD and NVIDA) have skewed results for games for the last decade + , and software vendors two - there needs to laws against specfically building a software for a particular graphics card in addition to making the software work worse on the other (this applies to both companies)

hell workstation graphics cards are a very good example of how the industry likes to screw over consumers ( if u ever bios modded - not just soft modded a normal consumer card to a work station card , you would know all that extra charge(up-to 70% extra for the same processor) of a workstation card is BS and if the government cleaned up their shitty policies we the consumer would be better for it)

nyran125 - Monday, June 4, 2012

yep........Ultra expensive and Ultra pointless.

kitty4427 - Monday, August 20, 2012

I can't seem to find anything suggesting that the beta has started...

trameaa - Friday, March 1, 2013

I know this is a really old review, and everyone has long since stopped the discussion - but I just couldn't resist posting something after reading through all the comments. Understand, I mean no disrespect to anyone at all by saying this, but it really does seem like a lot of people haven't actually used these cards first hand.

I see all this discussion of nVidia surround type setups with massive resolutions and it makes me laugh a little. The 690 is obviously an amazing graphics card. I don't have one, but I do use 2x680 in SLI and have for some time now.

As a general rule, these cards have nowhere near the processing power necessary to run those gigantic screen resolutions with all the settings cranked up to maximum detail, 8xAA, 16xAF, tessellation, etc....

In fact, my 680 SLI setup can easily be running as low as 35 fps in a game like Metro 2033 with every setting turned up to max - and that is at 1920x1080.

So, for all those people that think buying a $1000 graphics card means you'll be playing every game out there with every setting turned up to max across three 1920x1200 displays - I promise you, you will not - at least not at a playable frame rate.

To do that, you'll be realistically looking at 2x$1000 graphics cards, a ridiculous power supply, and by the way you better make sure you have the processing power to push those cards. Your run of the mill i5 gaming rig isn't gonna cut it.

Utomo - Friday, October 25, 2013

More than 1 year since it is announced. I hope new products will be better. My suggestion: 1. Add HDMI, it is standard. 2. consider to allow us to add memory / SSD for better/ faster performance, especially for rendering 3D animation, and other

TPLVG - Sunday, March 5, 2017

GTX 690 is known as "The nuclear bomb" in the Chinese IT communities because of its power consumption and temperature.