The Intel Ivy Bridge (Core i7 3770K) Review

by Anand Lal Shimpi & Ryan Smith on April 23, 2012 12:03 PM EST - Posted in CPUs, Intel, Ivy Bridge

Quick Sync Image Quality & Performance

Intel obviously focused on increasing GPU performance with Ivy Bridge, but a side effect of that increased GPU performance is more compute available for Quick Sync. As you may recall, Sandy Bridge's secret weapon was an on-die hardware video transcode engine (Quick Sync), designed to keep Intel's CPUs competitive in the face of the onslaught of GPU computing applications. At the time, video transcode seemed to be the most likely candidate for significant GPU acceleration, so the move made sense. Plus, it doesn't hurt that video transcoding is an extremely popular thing to do with a PC these days.

The power of Quick Sync came from how it combined fixed-function decode (and some encode) hardware with the on-die GPU's EU array. Together, the two delivered some pretty incredible performance gains, not only over traditional software-based transcoding but also over the fastest GPU-based solutions.

Intel put to rest any concerns about image quality when Quick Sync launched, and thankfully the situation hasn't changed today with Ivy Bridge. In fact, you get a bit more flexibility than you had a year ago.

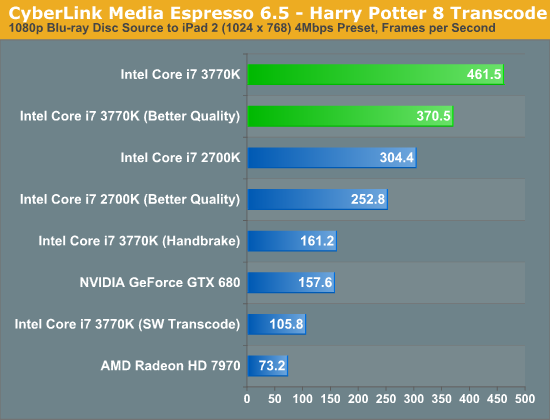

Intel's latest drivers now allow for a selectable tradeoff between image quality and performance when transcoding using Quick Sync. The option is exposed in Media Espresso and ultimately corresponds to an increase in average bitrate. To test image quality and performance, I took the last Harry Potter Blu-ray, stripped it of its DRM and used Media Espresso to make it playable on an iPad 2 (1024 x 768 preset).

In the case of our Harry Potter transcode, selecting the Better Quality option increased average bitrate from 3.86Mbps to 5.83Mbps, and the resulting file size for the entire movie grew from 3.78GB to 5.71GB. Both options produced a good quality transcode; picking one over the other really depends on how much time (and space) you have, as well as the screen size of the device you'll be watching on. For most phone/tablet use I'd say the faster option is ideal.
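Because both settings encode the same movie, file size should scale directly with average bitrate. The short Python sketch below checks that relationship using the figures above. It assumes "GB" means 10^9 bytes and that the quoted average bitrate covers the whole file (video plus audio); neither is stated in the review, and the helper names are made up for illustration.

```python
# Sketch: average bitrate vs. file size for a fixed-length encode.
# Assumptions (not stated in the review): "GB" means 10**9 bytes, and the
# quoted average bitrate covers everything in the file (video plus audio).

def implied_duration_s(size_gb: float, avg_mbps: float) -> float:
    """Duration in seconds implied by a file size and an average bitrate."""
    bits = size_gb * 1e9 * 8          # decimal gigabytes -> bits
    return bits / (avg_mbps * 1e6)    # bits / (bits per second) -> seconds


def size_gb(duration_s: float, avg_mbps: float) -> float:
    """File size in decimal GB for a given duration and average bitrate."""
    return duration_s * avg_mbps * 1e6 / 8 / 1e9


default = (3.78, 3.86)  # (GB, Mbps), default Quick Sync setting
better = (5.71, 5.83)   # (GB, Mbps), Better Quality setting

for label, (gb, mbps) in (("default", default), ("better", better)):
    minutes = implied_duration_s(gb, mbps) / 60
    print(f"{label}: {minutes:.1f} min implied")

# At a fixed duration, size scales linearly with bitrate,
# so these two ratios should be nearly identical.
print(f"bitrate ratio {better[1] / default[1]:.3f}, "
      f"size ratio {better[0] / default[0]:.3f}")
```

Under those assumptions, both settings imply roughly 130 minutes of content, and the size ratio (about 1.51x) tracks the bitrate ratio, which is consistent with the Better Quality option changing only the average bitrate.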

Image quality comparison (panels): Intel Core i7 3770K (x86); Intel Quick Sync (SNB); Intel Quick Sync (IVB); Intel Quick Sync, Better (IVB); NVIDIA GeForce GTX 680; AMD Radeon HD 7970

While AMD has yet to enable VCE in any publicly available software, NVIDIA's hardware encoder built into Kepler is alive and well. Cyberlink Media Espresso 6.5 will take advantage of the GTX 680's NVENC engine, which is why we standardized on it for these tests. Once again, Quick Sync's transcoding abilities are limited to applications like Media Espresso or ArcSoft's Media Converter; there's still no support in open source applications like Handbrake.

Compared to the output from Quick Sync, NVENC appears to produce a softer image. However, if you compare the NVENC output to what we got from the software/x86 path you'll see that the two are quite similar. It seems that Quick Sync, at least in this case, is sharpening/adding more noise beyond what you'd normally expect. I'm not sure I'd call it bad, but I need to do some more testing before I know whether or not it's a good thing.

The good news is that NVENC doesn't pose any of the horrible image quality issues that NVIDIA's CUDA transcoding path gave us last year. For getting videos onto your phone, tablet or game console I'd say the output of either of these options, NVENC or Quick Sync, is good enough.

Unfortunately, AMD's solution hasn't improved. The washed-out images we saw last year, particularly in dark scenes just before a significant change in brightness, are back. While NVENC delivers acceptable image quality, AMD does not.

The performance story is unfortunately not much different from last year either. The chart below shows average frame rate over the entire encode process.

Just as we saw with Sandy Bridge, Quick Sync continues to be an incredible way to get video content onto devices other than your PC. One thing I wanted to make sure of was that Media Espresso wasn't somehow holding x86 performance back to make the GPU accelerated transcodes look much better than they actually are. I asked our resident video expert, Ganesh, to clone Media Espresso's settings in a Handbrake profile. We took that profile and performed the same transcode; the result is listed above as the Core i7 3770K (Handbrake). The Handbrake x86/x264 path is definitely faster than Cyberlink's software path, by more than 50%. However, even with Handbrake as the reference, Quick Sync transcodes over 2x faster.
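The two comparisons can be chained to relate Quick Sync to Media Espresso's own x86 path. A minimal Python sketch, treating the quoted "over 50%" and "over 2x" as floors of 1.5x and 2x in average frame rate (an interpretation of the text, not a separately measured value); `chain_speedups` is a hypothetical helper.

```python
# Sketch: chaining relative speedups. Throughput ratios multiply, so
# "A is r1 times faster than B" and "B is r2 times faster than C"
# give "A is r1 * r2 times faster than C".
# The 1.5x and 2x inputs are lower bounds ("over 50%", "over 2x"),
# so the combined figure is also only a lower bound.

def chain_speedups(*ratios: float) -> float:
    """Combine successive throughput ratios (each > 0) into one ratio."""
    total = 1.0
    for r in ratios:
        if r <= 0:
            raise ValueError("speedup ratios must be positive")
        total *= r
    return total


handbrake_vs_cyberlink = 1.5  # Handbrake x264 vs. Media Espresso x86 path
quicksync_vs_handbrake = 2.0  # Quick Sync vs. Handbrake x264

print(chain_speedups(handbrake_vs_cyberlink, quicksync_vs_handbrake))
```

Since both inputs are floors, the result is one too: under these assumptions Quick Sync transcodes at least about 3x faster than Media Espresso's software path in this test. Multiplying is the right operation because each figure is a ratio of throughputs; simply adding the percentages (50% + 100%) would understate the gap.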

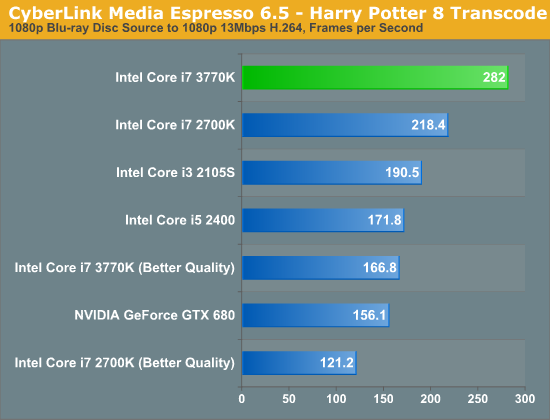

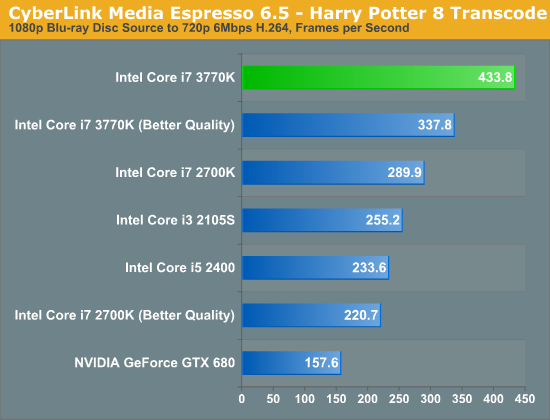

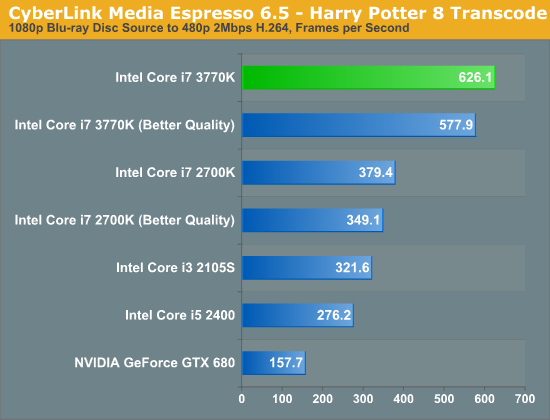

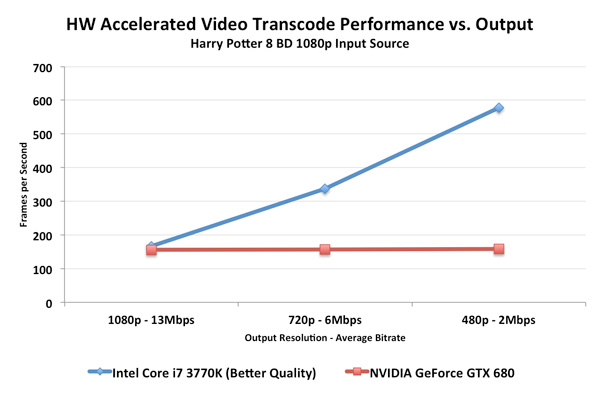

In the tests below I took the same source and varied the output quality with some custom profiles. I targeted 1080p, 720p and 480p at decreasing average bitrates to illustrate the relationship between compression demands and performance:

Unfortunately, NVENC performance does not scale the way Quick Sync's does. When asked to preserve a good amount of data, both NVENC and Quick Sync perform similarly in our 1080p/13Mbps test. However, ask for more aggressive compression ratios for lower resolution/bitrate targets, and the Intel solution quickly distances itself from NVIDIA. One theory is that NVIDIA's entropy encode block could be the limiting factor here.

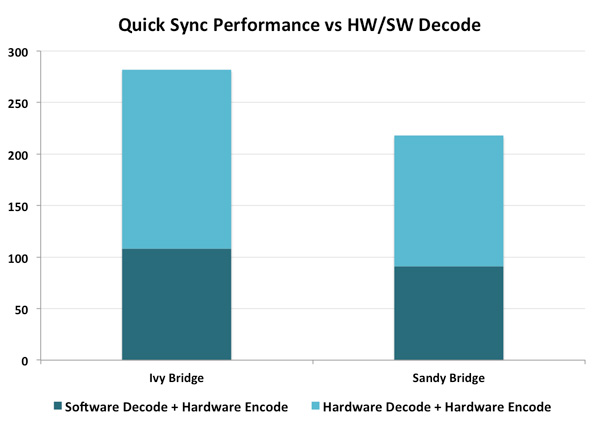

Ivy Bridge's improved Quick Sync appears to be aided both by an improved decoder and the HD 4000's faster/larger EU array. The graph below helps illustrate:

If we rely on software decoding but use Intel's hardware encode engine, Ivy Bridge is 18% faster than Sandy Bridge in this test (1080p 13Mbps output from BD source, same as above). If we turn on both hardware decode and encode, the advantage grows to 29%. The faster decode engine on Ivy Bridge accounts for the remaining 11 percentage points, while the larger share of the advantage is already there with software decoding.
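Taking the two percentages at face value, the decode engine's contribution can be separated from the rest. A rough Python sketch, assuming the software-decode run captures everything except the hardware decoder's gain (the CPU cores also differ between the two chips, so this is only an approximation). It shows both an additive (percentage-point) view and a multiplicative one.

```python
# Sketch: splitting Ivy Bridge's Quick Sync gain over Sandy Bridge into an
# encode-side part and a decode-side part, using the two figures from the
# text. Assumption: the software-decode run isolates everything except the
# hardware decoder, so any extra speed with hardware decode on is
# attributed to the decoder.

encode_only_gain = 0.18  # IVB vs. SNB, software decode + hardware encode
full_gain = 0.29         # IVB vs. SNB, hardware decode + hardware encode

# Additive view: percentage points on top of the encode-only result.
decode_points = full_gain - encode_only_gain
decode_share_additive = decode_points / full_gain

# Multiplicative view: speedups compose as ratios, so the decode-side
# factor is the ratio of the two overall speedups.
decode_factor = (1 + full_gain) / (1 + encode_only_gain)

print(f"decode adds {decode_points * 100:.0f} points "
      f"({decode_share_additive:.0%} of the 29% total)")
print(f"decode-side speedup factor: {decode_factor:.3f}x")
```

Either way the decode side is the smaller piece: about 11 of the 29 points (roughly 38% of the total) in the additive view, or about a 1.09x factor on top of the 1.18x seen with software decode in the multiplicative view.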

173 Comments


hechacker1 - Monday, April 23, 2012

VT-d is interesting if you run ESXi or a Linux based hypervisor, as they let you use VT-d to directly assign hardware to virtual machines. I think you can even share hardware with it.

In Linux, for example, you could host Windows and assign it a real GPU and get full performance from it.

A while ago I built a machine with that idea in mind, but the software bits weren't in place just yet.

I too wish for an overclockable VT-d part.

terragb - Monday, April 23, 2012

Just to add to this, all the processors do support VT-x which is the potentially performance enhancing spec for virtualization.

JimmiG - Monday, April 23, 2012

Really annoying how Intel decides seemingly at random which parts get VT-d and which don't.

Why do you get it with the $174 i5 3450, but not with the "one CPU to rule them all", everything-but-the-kitchen-sink, $313 i7 3770K?

It's also a stupid way to segment your product line, since 99% of the people buying systems with these CPUs won't even know what it does.

This means AMD also gets some of my money when I upgrade - I'll just build a cheap Bulldozer system for my virtualization needs. I can't really use my Phenom II X4 for that after upgrading - it uses too much power and it's dependent on DDR2 RAM, which is hard to find and expensive.

dcollins - Monday, April 23, 2012

VT-d is required to support Intel's Trusted Execution Technology, which is used by many OEMs to provide business management tools. That's why the low end CPUs have support and the enthusiast SKUs do not. VT-d provides no benefit to desktop users right now because desktop virtualization packages do not support it.

I agree that it is frustrating having to sacrifice future-proofing for overclocking, but Intel's logic kind of makes sense. Remember, any features that can be disabled will increase yields, which means lower prices (or higher margins).

JimmiG - Tuesday, April 24, 2012

VirtualBox, which is one of the most popular desktop virtualization packages, does support VT-d. In fact it's required for 64-bit guests and guests with more than one CPU being virtualized.

Does VT-d really use so many transistors that disabling it increases yields? AMD keep their hardware virtualization features enabled even in their lowest-end CPUs (even those where entire cores have been disabled to increase yields)

dgingeri - Monday, April 23, 2012

"I took the last Harry Potter Blu-ray, stripped it of its DRM and used Media Espresso to make it playable on an iPad 2 (1024 x 768 preset)."

I wouldn't admit that in print, if I were you. The DMCA goblins will come and get you.

p05esto - Monday, April 23, 2012

They can say they're just kidding and used it as an example, because they would "never" actually do that. I think pirate cops would need more than talk to go to court. Imagine how bad this site would rip into them if they said anything, lol.

XJDHDR - Monday, April 23, 2012

Why? No-one loses money from transcode benchmarks. Besides, piracy is the real problem. If it didn't exist, there would be no DRM to strip away.

dgingeri - Monday, April 23, 2012

Sure, nobody loses any money, but the entertainment industry pushed DMCA through, and they will use it if they think they could get any profit out of it. It's one law, out of many, that isn't there to protect anyone. It's there so the MPAA and RIAA can screw people over.

copyrightforreal - Monday, April 23, 2012

Don't pretend you know shit about copyright law when you don't.

Ripping a DVD you own is NOT illegal under the DMCA or Copyright act.

Wikipedia article that even you will be able to comprehend:

http://en.wikipedia.org/wiki/Ripping#Circumvention...