The Intel Ivy Bridge (Core i7 3770K) Review

by Anand Lal Shimpi & Ryan Smith on April 23, 2012 12:03 PM EST

Posted in: CPUs, Intel, Ivy Bridge

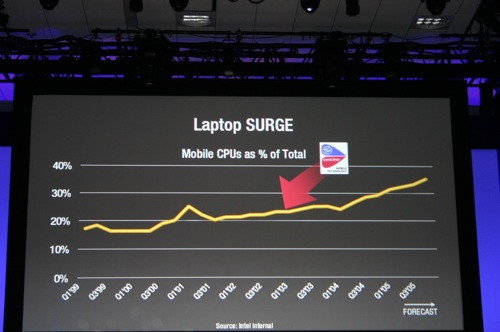

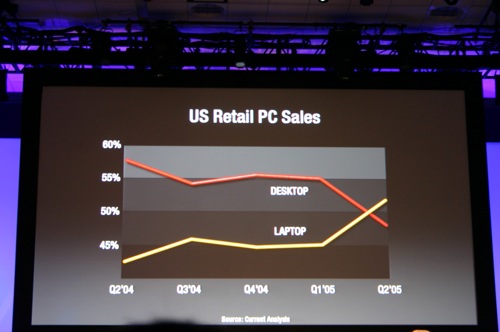

The times, they are changing. In fact, the times have already changed; we're just waiting for the results. I remember the first time Intel brought me into a hotel room to show me their answer to AMD's Athlon 64 FX: the Pentium 4 Extreme Edition. Back then the desktop race was hotly contested. Pushing the absolute limits of what could be done without a concern for power consumption was the name of the game. In the mid-2000s, the notebook started to take over. Just like the famous day when Apple announced that it was no longer a manufacturer of personal computers but a manufacturer of mobile devices, Intel came to a similar realization years prior when these slides were first shown at an IDF in 2005:

IDF 2005

IDF 2005

Intel is preparing for another major transition, similar to the one it brought to light seven years ago. The move will once again be motivated by mobility, and the transition will be away from the giant CPUs that currently power high-end desktops and notebooks to lower power, more integrated SoCs that find their way into tablets and smartphones. Intel won't leave the high-end market behind, but the trend towards mobility didn't stop with notebooks.

The fact of the matter is that everything Charlie has said on the big H is correct. Haswell will be a significant step forward in graphics performance over Ivy Bridge, and will likely mark Intel's biggest generational leap in GPU technology of all time. Internally Haswell is viewed as the solution to the ARM problem. Build a chip that can deliver extremely low idle power, to the point where you can't tell the difference between an ARM tablet running in standby and one with a Haswell inside. At the same time, give it the performance we've come to expect from Intel. Haswell is the future, and this is the bridge to take us there.

In our Ivy Bridge preview I applauded Intel for executing so well over the past few years. By limiting major architectural shifts to known process technologies, and keeping design simple when transitioning to a new manufacturing process, Intel took what once was a five-year design cycle for microprocessor architectures and condensed it into two. Sure, the nature of the changes every two years was simpler than what we used to see every five, but like most things in life, smaller but frequent progress often works better than putting big changes off for a long time.

It's Intel's tick-tock philosophy that kept it from having a Bulldozer, and the lack of such structure that left AMD in the situation it is in today (on the CPU side at least). Ironically, what we saw happen between AMD and Intel over the past ten years is really just a matter of the same mistake being made by both companies, just at different times. Intel's complacency and lack of an aggressive execution model led to AMD's ability to outshine it in the late K7/K8 days. AMD's similar lack of an execution model and executive complacency allowed the tides to turn once more.

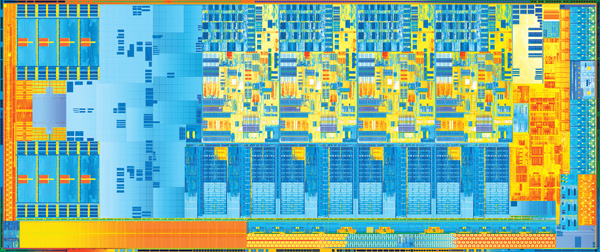

Ivy Bridge is a tick+, as we've already established. Intel took a design risk and went for greater performance all while transitioning to the most significant process technology it has ever seen. The end result is a reasonable increase in CPU performance (for a tick), a big step in GPU performance, and a decrease in power consumption.

Today is the day that Ivy Bridge gets official. Its name truly embodies its purpose. While Sandy Bridge was a bridge to a new architecture, Ivy connects a different set of things. It's a bridge to 22nm, warming the seat before Haswell arrives. It's a bridge to a new world of notebooks that are significantly thinner and more power efficient than what we have today. It's a means to the next chapter in the evolution of the PC.

Let's get to it.

Additional Reading

Intel's Ivy Bridge Architecture Exposed

Mobile Ivy Bridge Review

Undervolting & Overclocking on Ivy Bridge

Intel's Ivy Bridge: An HTPC Perspective

173 Comments


Shadowmaster625 - Monday, April 23, 2012

I would like to start using quicksync, but 2 mbps for a tablet is way too much for me. I just want to quickly take a video and transcode it. There is nothing quick about copying a 1+ gigabyte file onto a tablet or phone. It does no good to be able to transcode faster than you can even copy it LOL. Can quicksync go lower? I want no more than 800 kbps, 400-600 ideally.

Also, is it possible to transcode and copy at the same time? Is anyone doing that?

BVKnight - Tuesday, April 24, 2012

When you mention "2 mbps," I think you are referring to the bitrate, which is generally synonymous with the quality of the encoding.

"It does no good to be able to transcode faster than you can even copy" <--- I think this is completely false. The transcoding is a separate file conversion step that creates the final version which you will move to your device. Your machine won't even start copying until transcoding is complete, which means that every little bit of speed you can add to the transcoding process will directly reduce the amount of time it takes to get your file on your device.

Getting quicksync will make a huge difference for your encoding.
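The size concern in this exchange comes down to simple arithmetic: at a constant bitrate, file size is bitrate times duration. The Python sketch below is only an illustration under stated assumptions. The 90-minute running time and the 10 MB/s copy speed are hypothetical figures that do not appear in the thread, the helper names are invented for this example, and audio tracks and container overhead are ignored.

```python
def file_size_mb(bitrate_kbps: float, duration_s: float) -> float:
    """Approximate size in megabytes (10^6 bytes) of a constant-bitrate stream."""
    total_bits = bitrate_kbps * 1000 * duration_s
    return total_bits / 8 / 1_000_000


def copy_time_s(size_mb: float, throughput_mb_per_s: float) -> float:
    """Time to copy a file of size_mb at a sustained throughput."""
    return size_mb / throughput_mb_per_s


if __name__ == "__main__":
    DURATION_S = 90 * 60   # assumption: a 90-minute video
    COPY_MB_PER_S = 10.0   # assumption: sustained copy speed to the device

    # Bitrates mentioned in the thread: 2 mbps, 800 kbps, and the 400-600 kbps range.
    for kbps in (2000, 800, 600, 400):
        size = file_size_mb(kbps, DURATION_S)
        seconds = copy_time_s(size, COPY_MB_PER_S)
        print(f"{kbps:>5} kbps -> {size:7.1f} MB, about {seconds:5.1f} s to copy")
```

Under these assumptions the 2 mbps encode comes to roughly 1.35 GB, in line with the "1+ gigabyte" figure above, while 800 kbps brings the same video down to about 540 MB.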

ncrubyguy - Monday, April 23, 2012

"Features like VT-d and Intel TXT are once again reserved for regular, non-K-series parts alone."

Why do they keep doing that?

JarredWalton - Monday, April 23, 2012

Because those are mostly for business users, and business users don't overclock and thus don't need K-series.

Old_Fogie_Late_Bloomer - Monday, April 23, 2012

I have a feeling that the real reason is that, if business users could get those features on a K-series processor, it would largely obviate the need/demand for SB-E. A 2600K/2700K overclocked up to, say, 4.5 GHz--which seems consistently achievable, even conservative--would compare very favorably to the 3930K, given the prices of both.

Yes, I know you can overclock the 3930K, and yes, I know it has six cores and four memory controllers and more cache. But I bet that overclocked SB or IB with VT-d, &c., would make a lot of sense for a lot of applications, given price/performance considerations.

piroroadkill - Monday, April 23, 2012

I'd be very interested in seeing overclocked 2500K and 2600K benchmarks tossed in, because let's be honest, one of those is the most popular CPU at the high end right now, and anyone with one has bumped it to at least 4.3GHz, often about 4.4-4.5.

I think it would be nice to have a visual aid to see how that fares, but I understand the impracticality of doing so.

Rasterman - Monday, April 23, 2012

Thank you for including this section, it is great. I think it would be more relevant for people though if it were a much smaller test. I think pretty much anyone is going to know that a project of that size is going to be faster with more cores and speed. What isn't so obvious though are smaller projects, where you are compiling only a few files and debugging. A typical cycle for almost all developers is: making changes, compiling, debugging to test them out. Even though you are only talking times of a few seconds, add this up to 100s-1000s of iterations per day and it makes a difference. I base my entire computer hardware selection around this workflow. For now I use the single threaded benchmarks you post as a guide.

iGo - Monday, April 23, 2012

The features table has put me in a great dilemma. I'm very much interested in running multiple virtual machines on my desktop, for debugging and testing purposes. Although I won't be running these virtual boxes 24x7, it would be great to have processor support for any kind of hardware acceleration that I can get, whenever I fire up these for testing. On the other hand, the ability to overclock the K series processor is really tempting, and yes, a decent/modest overclock of say, 4.2-4.5GHz sounds lovely for 24x7 use.

Can anyone using SNB/Intel processors with VT-d share whether it's worth going for a non-K processor to get better virtualization performance? To be more clear, my primary job involves web-application development with UX development, for which I require varied testing under different browsers. Currently I've set up 4 different virtual machines on my desktop with different browsers installed on different Windows OS versions, although these machines will never run 24x7 and never all at once (max 2 at once when testing). Apart from that, I also do a lot of photo editing (RAW files, Lightroom and the works) and a bit of video editing/encoding stuff on my desktop (mostly personal projects, rarely commercial work). Is it better to opt for the 3770 for better virtual machine performance or the 3770K with the chance to boost overall performance by overclocking?

dcollins - Monday, April 23, 2012

At the moment, VT-d will not give you any additional performance on your VMs using desktop virtualization programs like VMware Workstation or VirtualBox. Neither supports VT-d right now. Based on progress this year, I expect VT-d support is still a year away in VirtualBox, which is what I use.

VT-d doesn't help performance in general; instead, VT-d allows VMs to directly access computer hardware. This is essential for high performance networking on servers or for accessing certain hardware like sound cards where low latency is crucial. For your workload, the only advantage will be slightly higher network speeds using native drivers versus a bridged connection. It may facilitate testing GPU accelerated browsers in the future as well.

If you plan on overclocking, the K series is worth losing VT-d.

iGo - Monday, April 23, 2012

Thanks, that helps a lot. I've been reading about VT-d and your comment confirms where my thinking was going. I guess, 3770K it is then. :)