The Intel Ivy Bridge (Core i7 3770K) Review

by Anand Lal Shimpi & Ryan Smith on April 23, 2012 12:03 PM EST

Posted in: CPUs, Intel, Ivy Bridge

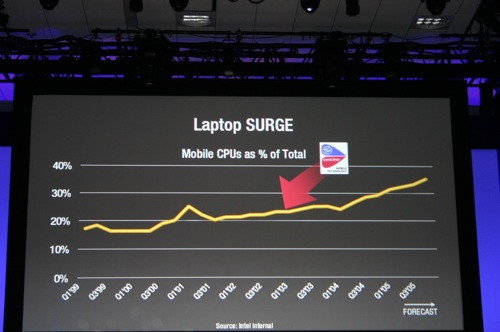

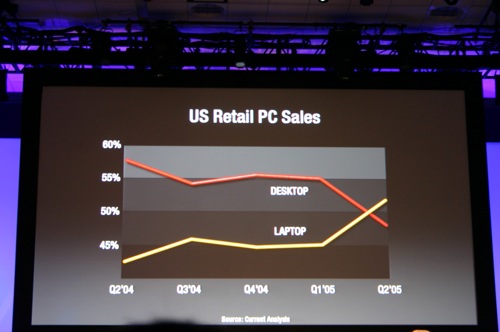

The times, they are changing. In fact, the times have already changed; we're just waiting for the results. I remember the first time Intel brought me into a hotel room to show me its answer to AMD's Athlon 64 FX: the Pentium 4 Extreme Edition. Back then the desktop race was hotly contested. Pushing the absolute limits of what could be done without a concern for power consumption was the name of the game. In the mid-2000s, the notebook started to take over. Just like the famous day when Apple announced that it was no longer a manufacturer of personal computers but a manufacturer of mobile devices, Intel came to a similar realization years prior when these slides were first shown at an IDF in 2005:

IDF 2005


Intel is preparing for another major transition, similar to the one it brought to light seven years ago. The move will once again be motivated by mobility, and the transition will be away from the giant CPUs that currently power high-end desktops and notebooks to lower-power, more integrated SoCs that find their way into tablets and smartphones. Intel won't leave the high-end market behind, but the trend towards mobility didn't stop with notebooks.

The fact of the matter is that everything Charlie has said on the big H is correct. Haswell will be a significant step forward in graphics performance over Ivy Bridge, and will likely mark Intel's biggest generational leap in GPU technology of all time. Internally Haswell is viewed as the solution to the ARM problem. Build a chip that can deliver extremely low idle power, to the point where you can't tell the difference between an ARM tablet running in standby and one with a Haswell inside. At the same time, give it the performance we've come to expect from Intel. Haswell is the future, and this is the bridge to take us there.

In our Ivy Bridge preview I applauded Intel for executing so well over the past few years. By limiting major architectural shifts to known process technologies, and keeping design simple when transitioning to a new manufacturing process, Intel took what once was a five-year design cycle for microprocessor architectures and condensed it into two. Sure, the nature of the changes every two years was simpler than what we used to see every five, but like most things in life, smaller but frequent progress often works better than putting big changes off for a long time.

It's Intel's tick-tock philosophy that kept it from having a Bulldozer, and the lack of such structure that left AMD in the situation it is in today (on the CPU side at least). Ironically, what we saw happen between AMD and Intel over the past ten years is really just a matter of the same mistake being made by both companies, just at different times. Intel's complacency and lack of an aggressive execution model led to AMD's ability to outshine it in the late K7/K8 days. AMD's similar lack of an execution model and executive complacency allowed the tides to turn once more.

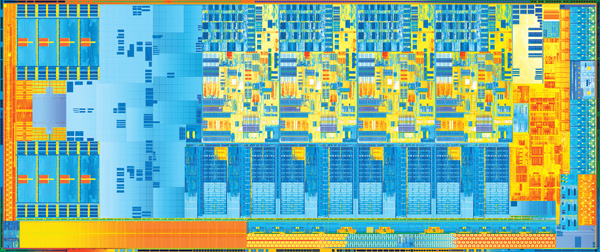

Ivy Bridge is a tick+, as we've already established. Intel took a design risk and went for greater performance all while transitioning to the most significant process technology it has ever seen. The end result is a reasonable increase in CPU performance (for a tick), a big step in GPU performance, and a decrease in power consumption.

Today is the day that Ivy Bridge gets official. Its name truly embodies its purpose. While Sandy Bridge was a bridge to a new architecture, Ivy connects a different set of things. It's a bridge to 22nm, warming the seat before Haswell arrives. It's a bridge to a new world of notebooks that are significantly thinner and more power efficient than what we have today. It's a means to the next chapter in the evolution of the PC.

Let's get to it.

Additional Reading

Intel's Ivy Bridge Architecture Exposed

Mobile Ivy Bridge Review

Undervolting & Overclocking on Ivy Bridge

Intel's Ivy Bridge: An HTPC Perspective

173 Comments


cjb110 - Tuesday, April 24, 2012 - link

Considering that on most games both the Xbox and PS3 tend to be sub 720p, the iGPU in Ivy Bridge is impressive. Has anybody compared the 3?

tipoo - Tuesday, April 24, 2012 - link

You have a unified shader GPU with similar performance to the X1900 series but more flexible, and something like a GeForce 7800 with some parts of the 7600 like lower ROPs and memory bandwidth... 7 years later, if even an IGP didn't beat those, it would be pretty sad. Those were ~200 GFLOPS cards; today's top end is over 3000, and a lower-mid range chip like this I would expect to be in the upper hundreds.

gammaray - Tuesday, April 24, 2012 - link

Why did Intel and AMD even start building IGPs in the first place? Why can't they just put a video card in every desktop and laptop?

And if they continue making IGPs, what's their goal?

Do they eventually wanna get rid of video card makers?

versesuvius - Tuesday, April 24, 2012 - link

Better yet, why not just put the graphics on a chip like the CPU? That way the "upgrade path" is a lot clearer, not to mention "possible". It will also offer the possibility of having those chips in different flavors, for example good video transcoder or good gamer. There is room for that on the motherboard now that the north bridge is gone. Or they can review the IBM boards from 286 days and learn from their clean and very efficient design.

Unfortunately the financial model of the IT industry from a collective viewpoint entails throwing a lot of good hardware away just for a small advantage. Just the way many will throw away their HD3000 IGPs without having ever used them. The comparison is cruel but that should not be what distinguishes them from the toilet paper industry.

As of late, Anand has taken to reminding us that technology has taken leaps ahead of our wishes and that we need time to absorb it. That is not the case. No wish is ever materialized. We only have to take whatever is offered and marvel at the only parameter that can be measured: speed. Less energy consumption is fine, but I suppose that comes with the territory (i.e. can Intel or AMD produce 22 nm chips that consume the same watts as 65 nm chips with the same number of transistors?).

tipoo - Wednesday, April 25, 2012 - link

Cost, size, power draw. All reduced by putting everything on one chip. I'm not sure if AMD wants to get rid of discrete graphics cards considering that's their one profitable division, but Intel sure does :)

klmccaughey - Tuesday, April 24, 2012 - link

I've tried every setup possible and have never got Quick Sync to work at all. It won't even work with discrete graphics enabled and my monitor hooked up to the Intel chip on my Z68 board.

I have tried MediaCoder (error 14), Media Converter 7, MediaEspresso. I have downloaded the Media SDK, I have tried the new FFmthingy from the Intel engineer. Nothing, nada. It has never ever worked. AMD media converter will convert a few limited formats that went out of fashion 5 years ago (of all my 1.5TB of video the only thing it would touch was old episodes of Becker).

All in all I have got nothing whatsoever from any video accelerated encoding and I have always had to go back to my tried and trusted HandBrake.

I don't think it actually works - I've never heard of anyone getting it working and the forums on MediaCoder are full of people who have given up.

klmccaughey - Tuesday, April 24, 2012 - link

*disabled (for discrete graphics)

JarredWalton - Tuesday, April 24, 2012 - link

What input format are you using? I've only tried it on laptops, and I've done MOV input from my Nikon D3100 camera with no issues whatsoever. I've also done a WMV input file (the sample Wildlife.WMV file from Windows 7) and it didn't have any trouble. If you're trying to do a larger video, that might be an issue, or it might just be a problem with the codec used on the original video.

dealcorn - Tuesday, April 24, 2012 - link

Intel has big eyes for the workstation graphics market and has had some success at the bottom of this market. Will IB's IGD advances enhance Intel's access to this market?

chizow - Tuesday, April 24, 2012 - link

Hope you're not counting yourself, you've proven long ago your opinion isn't worth paying attention to.