The 2012 iPad Followup: Galaxy Tab 10.1 LTE Comparison

by Anand Lal Shimpi on April 2, 2012 8:01 PM EST

As with all things in life, the job of reviewing a product spans a spectrum of options. At one end of the spectrum you have the quick hands-on preview that masquerades as a review. At the other end you have the long-term road test, spanning months of usage and truly addressing what the product is like to live with. Balancing needs on both ends of the spectrum is very difficult. Go too far to one side and you end up with nothing more than press release talking points. Go too far to the other and you end up with a review that’s potentially irrelevant by the time it’s published. My goal is to always strike a balance in delivering something deep that’s timely as well. Usually it comes at the expense of sleep, seeing as how there are a finite number of hours in a day.

Our recent review of the new iPad went into great detail on a number of topics – ranging from the display to the SoC, as well as discussing usability. I’ve got another post that I’ll do to talk about some findings on the usability side, but today I want to focus on something I left out of the original review: a comparison to Samsung’s Galaxy Tab 10.1 LTE. In the interest of not taking even longer to get the iPad review out, I trimmed the comparison points down to ASUS’ Transformer Prime and Motorola’s Xyboard for battery life and performance. Both are newer tablets, and the TF Prime is our favorite Android tablet, so the comparison made sense. As many of you pointed out, however, the Galaxy Tab 10.1 is also offered in an LTE flavor and would have made a great comparison. Before the TF Prime there was the Galaxy Tab 10.1, and it was our most desired Android tablet for a while.

The Display

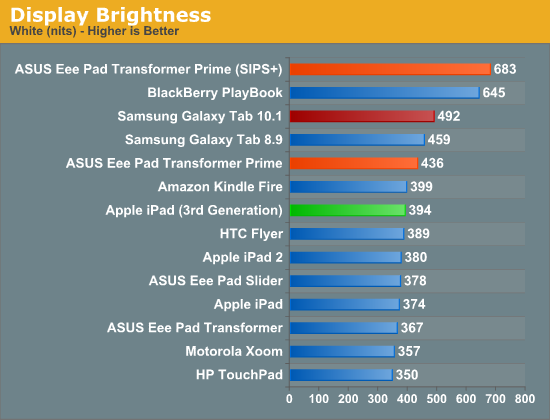

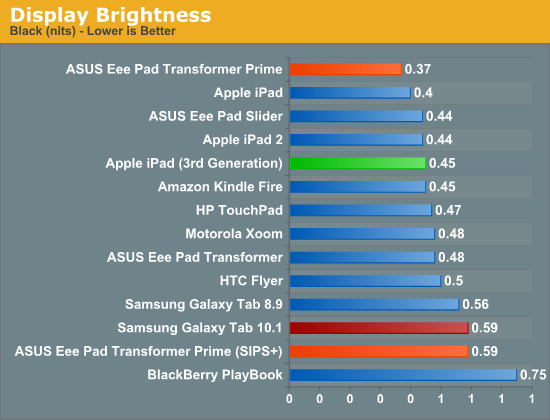

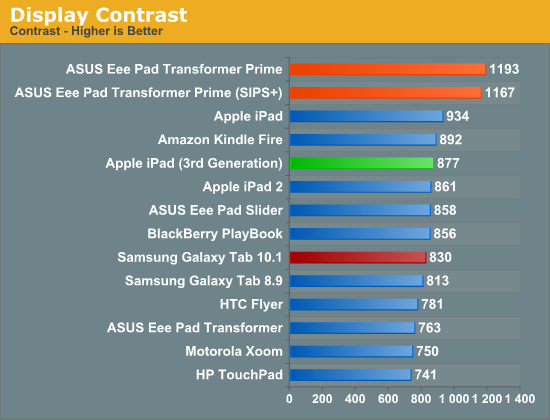

The Galaxy Tab 10.1 LTE uses Samsung’s own 1280 x 800 Super PLS display, which a year ago we loved. How does it stack up to the iPad’s Retina Display? In brightness and contrast it’s definitely competitive:

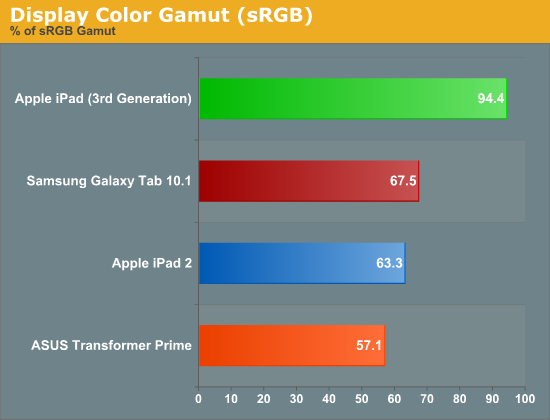

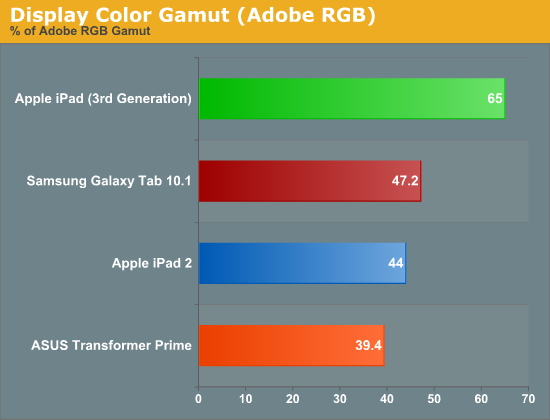

However, once you start looking at color quality and gamut, the Galaxy Tab falls in line with the old standard. Samsung delivers a similar color gamut percentage to the iPad 2’s display, but the coverage area is different, as you can see in the gallery below.

Both are short of the new iPad’s nearly full coverage of the sRGB space.

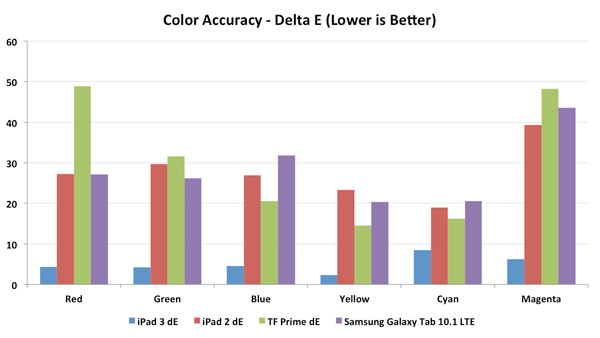

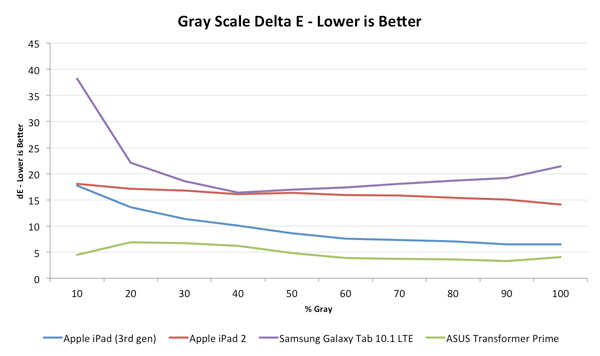

The delta E values echo what our CIE charts tell us: color and grayscale accuracy is simply better on the new iPad.

Note that ASUS’ TF Prime actually does better in the grayscale dE tests than the iPad. Apple may have raised the bar, but we’re still not anywhere close to perfection here.
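For anyone unfamiliar with the metric, delta E is a distance between the color a display was asked to show and the color it actually produced, measured in a perceptual color space. Lower is better. Here is a minimal sketch, assuming the simple CIE76 formula in CIELAB space, which may not be the dE variant behind the charts in this article. The function name and the sample Lab values are hypothetical and are not measurements from this review.

```python
import math


def delta_e_cie76(lab_reference, lab_measured):
    """Return the CIE76 color difference: the Euclidean distance
    between two colors given as (L*, a*, b*) tuples."""
    return math.sqrt(
        sum((ref - meas) ** 2 for ref, meas in zip(lab_reference, lab_measured))
    )


# Hypothetical values for illustration only, not data from this review.
target = (50.0, 0.0, 0.0)     # a neutral mid-gray: L*=50, a*=0, b*=0
measured = (52.0, 1.0, -2.0)  # slightly too bright, with a small color tint

print(f"dE = {delta_e_cie76(target, measured):.2f}")
```

A perfect display would score 0 on every patch, which is why even the best results here still leave room for improvement.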

Once again we have shots, taken at the same magnification, of the subpixel structure of all of these displays in order of increasing pixel density:


Apple iPad 2, 1024 x 768, 9.7-inches


ASUS Eee Pad Transformer Prime, 1280 x 800, 10.1-inches

Samsung Galaxy Tab 10.1, 1280 x 800, 10.1-inches


Apple iPad Retina Display (2012), 2048 x 1536, 9.7-inches


Apple iPhone 4S, 960 x 640, 3.5-inches
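The ordering above follows directly from the listed resolutions and diagonal sizes. As a rough sketch, pixel density can be estimated by dividing the diagonal resolution in pixels by the diagonal size in inches. The helper name `pixels_per_inch` is my own, and the results are approximations from the nominal specs rather than measured values.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Estimate pixel density from resolution and nominal diagonal size."""
    return math.hypot(width_px, height_px) / diagonal_in


# Resolutions and diagonals as listed in the captions above.
displays = [
    ("Apple iPad 2", 1024, 768, 9.7),
    ("ASUS Eee Pad Transformer Prime", 1280, 800, 10.1),
    ("Samsung Galaxy Tab 10.1", 1280, 800, 10.1),
    ("Apple iPad Retina Display (2012)", 2048, 1536, 9.7),
    ("Apple iPhone 4S", 960, 640, 3.5),
]

for name, width, height, diagonal in displays:
    print(f"{name}: {pixels_per_inch(width, height, diagonal):.0f} ppi")
```

This works out to roughly 132 ppi for the iPad 2, 149 ppi for both 1280 x 800 tablets, 264 ppi for the new iPad and 330 ppi for the iPhone 4S.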

Performance

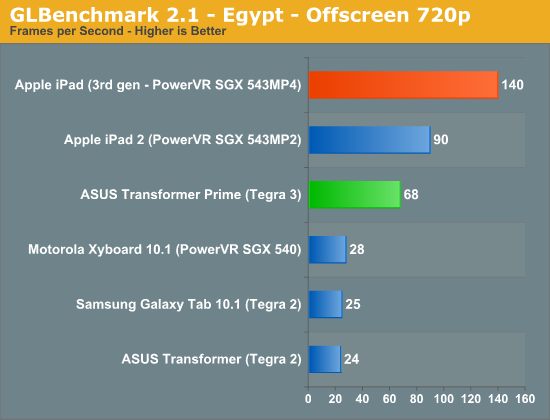

The Galaxy Tab 10.1 takes us back to a time when NVIDIA’s Tegra 2 was king. It was just a year ago that this was true. OMAP 4 was late, Tegra 3 was an eternity away and no one else had a dual-core Cortex A9 based SoC in shipping products. Unfortunately, by today’s standards, Tegra 2 is pretty slow. Not so much on the CPU side, but on the GPU side. Tegra 2 lacked the extra compute and efficiency improvements needed to really drive a 1280 x 800 display. Couple that with the bloat from Samsung’s TouchWiz update to Honeycomb and you don’t get a very smooth experience on the Galaxy Tab 10.1, especially compared to ASUS’ ICS-enabled, Tegra 3-equipped Transformer Prime.

The GPU performance numbers support our subjective findings (more numbers here):

In our iPad review I talked about the gaming experience on Tegra 3 being pretty good using Tegra Zone titles. In fact, if a game is available for both iOS and Tegra Zone, the Tegra version typically looks better thanks to NVIDIA’s investment of additional developer resources for the title. Despite the visual gap, both platforms tend to offer good gaming experiences. The iOS app store is easier to navigate and compatibility with devices is less of a concern there, but developers on both sides of the fence try to deliver a ~30 fps experience regardless of platform. The Tegra 2 experience isn’t bad, but you do have to run games like GTA3 at lower quality settings to get similar frame rates to Tegra 3.

Battery Life

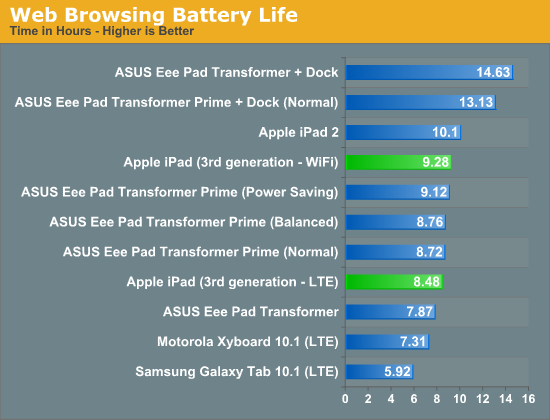

The iPad’s gigantic battery allowed it to last a bit longer on LTE than Motorola’s Xyboard 10.1, but how does it compare to the Galaxy Tab 10.1? On LTE the Galaxy Tab 10.1 doesn’t fare as well as the Xyboard:

A few of you asked about video playback battery life of the new iPad. Using our old 720p, no-bframes test I managed 10.02 hours on the new iPad – tangibly less than the iPad 2 but above what Apple claims you should expect from the new tablet. I need to put together a 1080p, high profile video playback test now that more tablets can play higher quality streams. Perhaps I’ll do that in preparation for the TF700 review...

Final Words

Ask and you shall receive (time permitting). For those of you who asked, I hope the data shared here is what you were looking for. I've updated our original iPad review with all of this data as well. In short, the Galaxy Tab 10.1 LTE is a useful but not dramatically different comparison point from the Android camp. In the near term, ASUS' Transformer Pad Infinity is what I'm hoping will be some better competition.

On to the next one…

52 Comments


pxavierperez - Tuesday, April 3, 2012 - link

The name is not "the New iPad". It's just simply iPad. And this is not something entirely new from Apple. I'm surprised that Anand did not mention this in his more comprehensive new iPad review, since he himself owns a MacBook Pro, which always remains the MacBook Pro through different generational upgrades.

The iMac, since its introduction in 1998, has always been officially called iMac by Apple. The same goes for the MacBook Pro and iPod, and almost all of Apple's products have no distinct numeration or version appended to their names. This year when Apple releases the new MacBook Pro (and it will be called the MacBook Pro) the old version will no longer be available for sale.

kevith - Tuesday, April 3, 2012 - link

Yeps, that's how it is!

web2dot0 - Tuesday, April 3, 2012 - link

So you are saying that you are not buying the "New iPad" because the name sucks? Alrighty then .....

To burst your bubble, why not call it iPad 2013? Isn't that what we do with cars?

I'm just not following your logic. I don't like the name either, but that's not going to stop me from evaluating objectively whether the product is good or not.

solipsism - Tuesday, April 3, 2012 - link

Do you get confused by today becoming yesterday tomorrow? I surely hope not. The word 'new' works in the same way except that it's not the spinning of the Earth that determines the change, but the release cycle of the iPad.

We see this with products all the time. "The new Ford Ranger." I can't imagine someone getting confused by this who isn't living moment at the same point in time.

chemist1 - Monday, April 2, 2012 - link

The article has a series of close-ups showing the individual pixels on various displays. Of all of these, the iPad3 seems to have the worst fill factor (also known as aperture ratio: http://www.epson.jp/e/products/device/htps/tech/te...). The fill factor is the percent of the display that is actually transmitting light (i.e., if you've got a lot of black around each pixel, you have a low fill factor). At least for video displays (TVs, projectors), increasing the fill factor significantly improves the quality of the picture -- and this holds even if you are in a regime where you can't see the individual pixels (e.g., sitting 12 feet away from a 1080p image projected on a 100" screen). That, for instance, is one of the reasons the JVC LCoS projectors provide such pleasing, natural-looking images -- the fill factor of the LCoS chip is ~.9. I've not seen the iPad3 display, but I wonder if the mediocre fill factor might take something away from the picture quality potentially achievable with such a high pixel density. Note also that a low fill factor will not affect any of the measurements done in this article (contrast, brightness, etc.). Thus it has an effect that can only be evaluated subjectively.

ajcarroll - Tuesday, April 3, 2012 - link

For any given manufacturing process, as pixel density increases, aperture ratio decreases.

For example, suppose hypothetically there is a 10 micron gap between pixels. A display with 1000 pixels horizontally would have 999 x 10 micron vertical gaps between pixels, while a 2000 pixel display would have 1999 vertical gaps between pixels - the same concept applies vertically. Thus doubling the pixels in each dimension roughly doubles the amount of gap space.

This is just how things are. Obviously manufacturers attempt to pack the pixels more tightly, but it's not as though there's all this excessive space - thus for a given manufacturing process increasing pixel density decreases aperture ratio.

chemist1 - Tuesday, April 3, 2012 - link

Thanks for your comment, but I'm afraid I disagree. Yes, it is geometrically correct to say that "for any given *pixel spacing*, as pixel density increases, aperture ratio decreases." However, it is *not* the case that, when the manufacturing process changes to increase pixel density, pixel spacing must necessarily be maintained. Indeed, if you look at the close-up of the iPhone 4S display, you will see that, compared to the iPad3, the former has both a higher pixel density and a higher aperture ratio. This thus serves as a clear counterexample to your assertion.

More broadly, the formulation of your argument -- that "for any given manufacturing process, as pixel density increases, aperture ratio decreases" -- didn't make sense to me since, by definition, a change in the pixel density means a change in the manufacturing process.

just6979 - Tuesday, April 3, 2012 - link

Why would you ever test off-screen rendering on a device whose single differentiating feature is the screen resolution? Of course the 4-core SGX543 is going to blow away the dual-core version, and the single-core SGX540 doesn't stand a chance. To me, the iPad 2012 should really have an eight-core SGX543, 4 times more raw power than the iPad 2, because the new screen has 4 times the pixels.

Everyone is saying how great the iPad 2012 is going to be for gaming, but I don't see the numbers adding up. If you look back at the previous graphics tests this site did on the iPad 2012, you'll notice that the iPad 2 either matches or beats the new one when doing textured and lit triangles at native resolutions. Sure, games can render off-screen to non-native buffer sizes, but then they're going to need a special iPad 2012 render path to take advantage of the faster GPU, and then why not do a special version that takes advantage of the hi-res screen? Oh yeah, because the GPU won't be able to keep up trying to fill that many pixels, so the special versions either: a) run faster at the same res as the iPad2 versions, except it's probably already refreshing faster than most folks can perceive; or b) run slower than the iPad2 version, but have 4 times the pixels, pixels which most folks wouldn't be able to discern during fast action even at the lower resolution.

So now apps on the Apple App Store could potentially exist in 4 different versions: 2 (iPad versions) which would not work on the lowest supported iDevice, the iPhone 3GS, and 1 (iPhone Retina version) which would work, but not look or feel any differently (although it could potentially be slower due to the 4x larger graphics). This is pretty much the definition of fragmentation.

vision33r - Tuesday, April 3, 2012 - link

Go back to that fragmented Google Play store and read how many reviews are complaints in every single Android game with various devices not being able to run.

That is the definition of fragmentation.

Today, devs only need to support iPad 1 and 2. New iPad can run them all, it won't be as pretty but it works.

Unlike the fragmented world of Android. You'll be lucky just to get a game to work.

Add TegraZone games to the fragmented mix and it gets worse when games say "Requires Tegra."

duffman55 - Tuesday, April 3, 2012 - link

Despite the small number of OS versions for iOS and the small number of different hardware configurations, I still have plenty of problems and crashes. Since developers can now easily update their software after it has been released, I think it's led to the mentality of "We'll fix that bug later, it doesn't have to be perfect". I'm not sure how much of an advantage less software and hardware fragmentation actually provides when developers just want to get their software to market as quickly as possible without testing it thoroughly.

Oddly enough, developers seem to be able to make reliable applications for desktop/laptop computers that have thousands of different hardware configurations and many OSes.