NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM ESTThe Kepler Architecture: Efficiency & Scheduling

So far we’ve covered how NVIDIA has improved upon Fermi for; now let’s talk about why.

Mentioned quickly in our introduction, NVIDIA’s big push with Kepler is efficiency. Of course Kepler needs to be faster (it always needs to be faster), but at the same time the market is making a gradual shift towards higher efficiency products. On the desktop side of matters GPUs have more or less reached their limits as far as total power consumption goes, while in the mobile space products such as Ultrabooks demand GPUs that can match the low power consumption and heat dissipation levels these devices were built around. And while strictly speaking NVIDIA’s GPUs haven’t been inefficient, AMD has held an edge on performance per mm2 for quite some time, so there’s clear room for improvement.

In keeping with that ideal, for Kepler NVIDIA has chosen to focus on ways they can improve Fermi’s efficiency. As NVIDIA's VP of GPU Engineering, Jonah Alben puts it, “[we’ve] already built it, now let's build it better.”

There are numerous small changes in Kepler that reflect that goal, but of course the biggest change there was the removal of the shader clock in favor of wider functional units in order to execute a whole warp over a single clock cycle. The rationale for which is actually rather straightforward: a shader clock made sense when clockspeeds were low and die space was at a premium, but now with increasingly small fabrication processes this has flipped. As we have become familiar with in the CPU space over the last decade, higher clockspeeds become increasingly expensive until you reach a point where they’re too expensive – a point where just distributing that clock takes a fair bit of power on its own, not to mention the difficulty and expense of building functional units that will operate at those speeds.

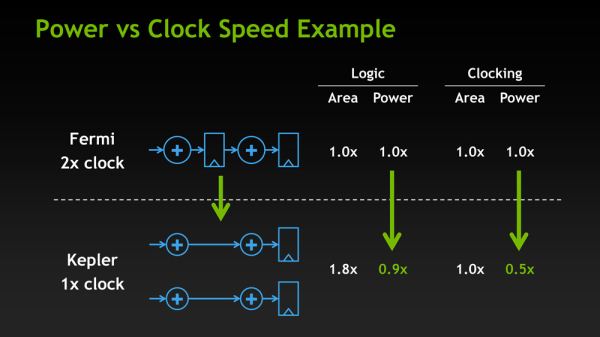

With Kepler the cost of having a shader clock has finally become too much, leading NVIDIA to make the shift to a single clock. By NVIDIA’s own numbers, Kepler’s design shift saves power even if NVIDIA has to operate functional units that are twice as large. 2 Kepler CUDA cores consume 90% of the power of a single Fermi CUDA core, while the reduction in power consumption for the clock itself is far more dramatic, with clock power consumption having been reduced by 50%.

Of course as NVIDIA’s own slide clearly points out, this is a true tradeoff. NVIDIA gains on power efficiency, but they lose on area efficiency as 2 Kepler CUDA cores take up more space than a single Fermi CUDA core even though the individual Kepler CUDA cores are smaller. So how did NVIDIA pay for their new die size penalty?

Obviously 28nm plays a significant part of that, but even then the reduction in feature size from moving to TSMC’s 28nm process is less than 50%; this isn’t enough to pack 1536 CUDA cores into less space than what previously held 384. As it turns out not only did NVIDIA need to work on power efficiency to make Kepler work, but they needed to work on area efficiency. There are a few small design choices that save space, such as using 8 SMXes instead of 16 smaller SMXes, but along with dropping the shader clock NVIDIA made one other change to improve both power and area efficiency: scheduling.

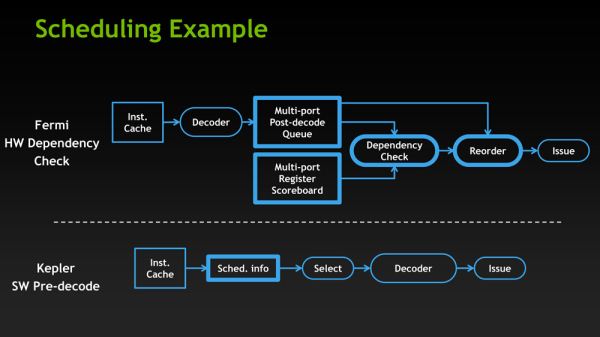

GF114, owing to its heritage as a compute GPU, had a rather complex scheduler. Fermi GPUs not only did basic scheduling in hardware such as register scoreboarding (keeping track of warps waiting on memory accesses and other long latency operations) and choosing the next warp from the pool to execute, but Fermi was also responsible for scheduling instructions within the warps themselves. While hardware scheduling of this nature is not difficult, it is relatively expensive on both a power and area efficiency basis as it requires implementing a complex hardware block to do dependency checking and prevent other types of data hazards. And since GK104 was to have 32 of these complex hardware schedulers, the scheduling system was reevaluated based on area and power efficiency, and eventually stripped down.

The end result is an interesting one, if only because by conventional standards it’s going in reverse. With GK104 NVIDIA is going back to static scheduling. Traditionally, processors have started with static scheduling and then moved to hardware scheduling as both software and hardware complexity has increased. Hardware instruction scheduling allows the processor to schedule instructions in the most efficient manner in real time as conditions permit, as opposed to strictly following the order of the code itself regardless of the code’s efficiency. This in turn improves the performance of the processor.

However based on their own internal research and simulations, in their search for efficiency NVIDIA found that hardware scheduling was consuming a fair bit of power and area for few benefits. In particular, since Kepler’s math pipeline has a fixed latency, hardware scheduling of the instruction inside of a warp was redundant since the compiler already knew the latency of each math instruction it issued. So NVIDIA has replaced Fermi’s complex scheduler with a far simpler scheduler that still uses scoreboarding and other methods for inter-warp scheduling, but moves the scheduling of instructions in a warp into NVIDIA’s compiler. In essence it’s a return to static scheduling.

Ultimately it remains to be seen just what the impact of this move will be. Hardware scheduling makes all the sense in the world for complex compute applications, which is a big reason why Fermi had hardware scheduling in the first place, and for that matter why AMD moved to hardware scheduling with GCN. At the same time however when it comes to graphics workloads even complex shader programs are simple relative to complex compute applications, so it’s not at all clear that this will have a significant impact on graphics performance, and indeed if it did have a significant impact on graphics performance we can’t imagine NVIDIA would go this way.

What is clear at this time though is that NVIDIA is pitching GTX 680 specifically for consumer graphics while downplaying compute, which says a lot right there. Given their call for efficiency and how some of Fermi’s compute capabilities were already stripped for GF114, this does read like an attempt to further strip compute capabilities from their consumer GPUs in order to boost efficiency. Amusingly, whereas AMD seems to have moved closer to Fermi with GCN by adding compute performance, NVIDIA seems to have moved closer to Cayman with Kepler by taking it away.

With that said, in discussing Kepler with NVIDIA’s Jonah Alben, one thing that was made clear is that NVIDIA does consider this the better way to go. They’re pleased with the performance and efficiency they’re getting out of software scheduling, going so far to say that had they known what they know now about software versus hardware scheduling, they would have done Fermi differently. But whether this only applies to consumer GPUs or if it will apply to Big Kepler too remains to be seen.

404 Comments

View All Comments

mm2587 - Thursday, March 22, 2012 - link

Well I guess we can expect AMD to slash price $50 across the top of their line to fall back into competition. Competion is always good for us consumers.While theres no arguing gtx680 looks like a great card I am a bit dissapointed this generation didn't push the boundries further on both the red and the green side. Hopefully gk100/gk110 is still brewing and we will still see a massive performance increase on the top end of the market this generation.

Unfortunatly I predict the gk100 is either scrapped or will be launched as a gtx 780 a year from now.

MarkusN - Thursday, March 22, 2012 - link

As far as I know, GK100 got scrapped due to issues but the GK110 is still cooking. ;)CeriseCogburn - Thursday, March 22, 2012 - link

Competition isn't "the same price with lesser features and lesser performance". I suppose with hardcore fanboys it is, but were talking about reality, and reality dictates the amd card needs to be at least $50 less than the 680..Lepton87 - Thursday, March 22, 2012 - link

http://translate.google.ca/translate?hl=en&sl=...So they are basically even at 2560 4xMSAA yet anand doesn't hesitate to call GTX680 indisputable king of the heel. It's strange because 7950 is at least the same amount faster than 580 yet he only implicitly said that it is faster than 580.

http://www.hardwarecanucks.com/forum...review-27.h...

http://www.guru3d.com/article/geforce-gtx-680-revi...

http://translate.google.ca/translate...itt_einleit...

judging from those results OC7970 is at least as fast if not faster than OC680. Wonder why they didn't directly compare oc numbers, probably this wasn't in nvidia reviewiers guide. Also it's not any better in tandem

http://www.sweclockers.com/recension...li/18#pageh...

Sabresiberian - Friday, March 23, 2012 - link

Someone needs to learn how to read charts.What, did you post a bunch of links and think no one was going to check them out?

I'll quote one of your sources, HardwareCanucks:

"After years of releasing inefficient, large and expensive GPUs, NVIDIA's GK104 core - and by association the GTX 680 - is not only smaller and less power hungry than Tahiti but it also outperforms the best AMD can offer by a substantial amount. "

Personally, I don't much care who comes out on top today, what I want is the battle for leadership to continue, for AMD and Nvidia to truly compete with each other, and not fall into some game of appearances.

"Big Kepler" should really establish how much better it is than Tahiti, though I wouldn't be surprised if some AMD fanboys will still turn the charts upside down and backwards to try to make Tahiti the leader - just as they are doing now. Of course, the 7990 dual GPU board will be out by then, and they will claim AMD has the best architecture based on it performing better than Big Kepler (assuming it does).

I don't know what AMD has planned, but I hope they come out with something right after Kepler (September? Too long, Nvidia, too long!) that is new, that will re-establish them. Of course, then there's Maxwell for next year . . .

;)

jigglywiggly - Thursday, March 22, 2012 - link

it's slower in crysis, it's slowerSabresiberian - Friday, March 23, 2012 - link

Yeah? What if it's faster in Crysis 2?http://www.tomshardware.com/reviews/geforce-gtx-68...

(While I get why using a venerable bench that Crysis provides gives us a performance base we're more familiar with, the marks for Crysis 2 show why an older model may not be such a good idea. Clearly, the GTX 680 beats out the Radeon 7970 in most DX9 benchmarks, and ALL DX11 Crysis 2 benches.)

So, your troll doesn't just fail, it epic fails.

SlyNine - Saturday, March 24, 2012 - link

My problem is the tests are run without AA. I'd rather seen some results with AA as I suspect that would cause the 680GTX to trade blows with the 7970.On the other hand 1920x1200 with 2x AA is AA enough for me.

HighTech4US - Thursday, March 22, 2012 - link

In the review all the slides are missing.For example: instead of a slide what is seen is this: [efficiency slide] or this [scheduler]

HighTech4US - Thursday, March 22, 2012 - link

OK, looks like it just got fixed