The Radeon HD 7970 Reprise: PCIe Bandwidth, Overclocking, & The State Of Anti-Aliasing

by Ryan Smith on January 27, 2012 4:30 PM EST. Posted in: GPUs, AMD, Radeon, Radeon HD 7000

With the release of AMD’s Radeon HD 7970 it’s clear that AMD has once again regained the single-GPU performance crown. But while the 7970’s place in the current GPU hierarchy is well established, we’re still trying to better understand the ins and outs of AMD’s new Graphics Core Next Architecture. What does it perform well at and what is it weak at? How might GCN scale with future GPUs? Etc.

Next week we’ll be taking a look at CrossFire performance and the performance of AMD’s first driver update. But in the meantime we wanted to examine a few other facets of the 7970: the impact of PCIe bandwidth on performance, overclocking our reference 7970 (and the performance impact thereof), and what AMD is doing for anti-aliasing with the surprise addition of SSAA for DX10+ along with an interesting technical demo implementing MSAA and complex lighting side-by-side. So let’s get started.

PCIe Bandwidth: When Do You Have Enough?

With the release of PCIe 3 we wanted to take a look at what impact the additional bandwidth would have. Historically new PCIe revisions have come out well ahead of hardware that truly needs the bandwidth, and with the 7970 and PCIe 3 this once again appears to be the case. In our original 7970 review we saw that there were a small number of existing computational applications that could immediately benefit from the greater bandwidth, but what about gaming? We sat down with our benchmark suite and ran it at a number of different PCIe bandwidths in order to find an answer.

PCIe Bandwidth Comparison (Each Direction)

| Lanes | PCIe 1.x | PCIe 2.x | PCIe 3.0 |
|---|---|---|---|
| x1 | 250MB/sec | 500MB/sec | 1GB/sec |
| x2 | 500MB/sec | 1GB/sec | 2GB/sec |
| x4 | 1GB/sec | 2GB/sec | 4GB/sec |
| x8 | 2GB/sec | 4GB/sec | 8GB/sec |
| x16 | 4GB/sec | 8GB/sec | 16GB/sec |
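The figures in the table follow from each generation's per-lane signaling rate and line encoding. As a rough sketch (the function and dictionary names are illustrative, not from any library), the per-direction bandwidth can be derived as shown here. Note that PCIe 3.0's 128b/130b encoding works out to roughly 985MB/sec per lane, which the table rounds to 1GB/sec.

```python
# Per-lane signaling rate (gigatransfers/sec) and encoding efficiency for
# each PCIe generation. 1.x and 2.x use 8b/10b encoding; 3.0 uses 128b/130b.
PCIE_GENERATIONS = {
    "1.x": (2.5, 8 / 10),
    "2.x": (5.0, 8 / 10),
    "3.0": (8.0, 128 / 130),
}


def pcie_bandwidth_mb_per_sec(generation: str, lanes: int) -> float:
    """Approximate usable bandwidth in one direction, in MB/sec (1MB = 10^6 bytes)."""
    gigatransfers, efficiency = PCIE_GENERATIONS[generation]
    bits_per_sec = gigatransfers * 1e9 * efficiency * lanes
    return bits_per_sec / 8 / 1e6


if __name__ == "__main__":
    # Print one row per lane count, one column per generation.
    for lanes in (1, 2, 4, 8, 16):
        row = [f"{pcie_bandwidth_mb_per_sec(g, lanes):8.0f}" for g in PCIE_GENERATIONS]
        print(f"x{lanes:<2}", *row)
```

Protocol overhead from packet headers is ignored here, so real-world throughput will be somewhat lower than these link-level numbers.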

For any given game the amount of data sent per frame is largely constant regardless of resolution, so we’ve opted to test everything at 1680x1050. At the higher framerates this resolution offers on our 7970, this should generate more PCIe traffic than higher, more GPU-limited resolutions, and make the impact of different amounts of PCIe bandwidth more obvious.
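The reasoning is that if per-frame data is roughly constant, bus traffic scales with framerate. A minimal sketch of that relationship, using purely hypothetical numbers (the 20MB per frame figure and both framerates are illustrative assumptions, not measurements from any game):

```python
def pcie_traffic_mb_per_sec(mb_per_frame: float, frames_per_sec: float) -> float:
    """Bus traffic when every frame sends about the same amount of data."""
    return mb_per_frame * frames_per_sec


# Hypothetical values for illustration only; not measured on any game.
MB_PER_FRAME = 20.0

# Lower resolution: the GPU finishes frames faster, so more frames cross the bus.
low_res_traffic = pcie_traffic_mb_per_sec(MB_PER_FRAME, 120.0)

# Higher resolution: the GPU is the limit, so fewer frames cross the bus.
high_res_traffic = pcie_traffic_mb_per_sec(MB_PER_FRAME, 60.0)

print(low_res_traffic, high_res_traffic)
```

Under these assumed numbers, halving the framerate halves the bus traffic, which is why the lower resolution is the more demanding case for the PCIe link.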

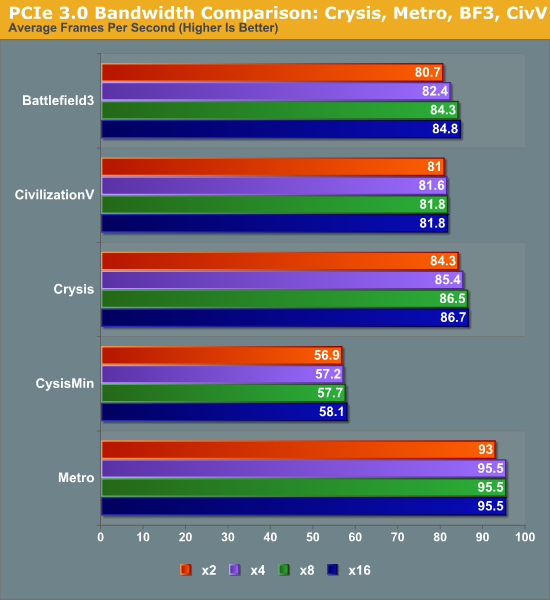

At the high end the results are not surprising. In our informal testing ahead of the 7970 launch we didn’t see any differences between PCIe 2 and PCIe 3 worth noting, and our formal testing backs this up. Under gaming there is absolutely no appreciable difference in performance between PCIe 3 x16 (16GB/sec) and PCIe 2 x16 (8GB/sec). Nor was there any difference between PCIe 3 x8 (8GB/sec) and the other aforementioned bandwidth configurations.

Going forward, for Ivy Bridge owners this will be good news. Even with only 16 PCIe 3 lanes available from the CPU, there should be no performance penalty from utilizing x8 configurations in order to enable CrossFire or other uses that would rob a 7970 of 8 lanes. But how about existing Sandy Bridge systems that can only support PCIe 2? As it turns out things aren’t quite as good.

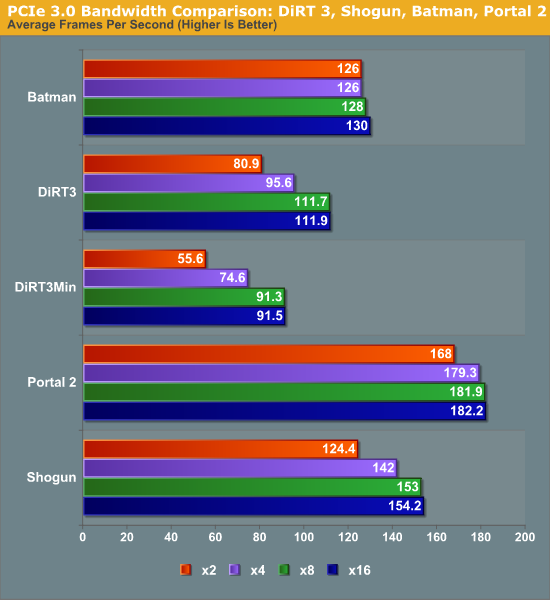

Moving from PCIe 2 x16 (8GB/sec) to PCIe 2 x8 (4GB/sec) does incur a generally small penalty on the 7970. However, as with most tests, this is entirely dependent on the game itself. With games like Metro 2033 the difference is non-existent, while Battlefield 3 and Crysis only lose 2-3%, and DiRT3 suffers the most, losing 14% of its performance. DiRT3’s minimum framerates look even worse, dropping by 19%. As DiRT3 is one of our higher performing games in the first place the real world difference is not going to be that great – it’s still well above 60fps at all times – but it’s clear that in the wrong situation only having 4GB/sec of PCIe bandwidth can bottleneck a 7970.

Finally, if we take one further step to PCIe 3 x2 (2GB/sec), we see performance continue to drop on a game-by-game basis. Crysis, Metro, Civilization V, and Battlefield 3 still hold rather steady, having lost less than 5% of their performance versus PCIe 3 x16, but DiRT3 continues to fall, while Total War: Shogun and Portal 2 begin to buckle. At these speeds DiRT3 is only 72% of its original performance, while Shogun and Portal 2 are at 81% and 92% respectively.

Ultimately what is clear is that 8GB/sec of bandwidth, either in the form of PCIe 2 x16 or PCIe 3 x8, will be necessary to completely feed the 7970. 16GB/sec (PCIe 3 x16) appears to be overkill for a single card at this time, and 4GB/sec or 2GB/sec will bottleneck the 7970 depending on the game. The good news is that even at 2GB/sec the bottlenecking is rather limited, and based on our selection of benchmarks it looks like only a handful of games will be bottlenecked. Still, there’s a good argument here that 7970CF owners are going to want a PCIe 3 system to avoid bottlenecking their cards – in fact this may be the greatest benefit of PCIe 3 right now, as it should provide enough bandwidth to make an x8/x8 configuration every bit as fast as an x16/x16 configuration, allowing for maximum GPU performance with Intel’s mainstream CPUs.
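To summarize these conclusions, here is a small sketch that sorts the tested link configurations into the three bands described above. The thresholds come from this article's single-card 7970 results; the function name and the wording of the labels are our own illustrative choices, and other cards or games may well behave differently.

```python
def classify_link(gb_per_sec: float) -> str:
    """Classify a PCIe link by the bands observed for a single 7970 in this article."""
    if gb_per_sec >= 16:
        return "overkill for a single card"
    if gb_per_sec >= 8:
        return "enough to fully feed the card"
    return "may bottleneck, depending on the game"


# Nominal per-direction bandwidth (GB/sec) of the configurations discussed.
CONFIGS = {
    "PCIe 3 x16": 16,
    "PCIe 3 x8": 8,
    "PCIe 2 x16": 8,
    "PCIe 2 x8": 4,
    "PCIe 3 x2": 2,
}

for name, bandwidth in CONFIGS.items():
    print(f"{name} ({bandwidth}GB/sec): {classify_link(bandwidth)}")
```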

47 Comments


evilspoons - Friday, January 27, 2012 - link

MSI is working on that already :) http://www.anandtech.com/show/5352/msis-gus-ii-ext...

tipoo - Friday, January 27, 2012 - link

Yeah, that looks sweet... Now for non-Mac laptops to get Thunderbolt. I think some Sony's already have it, but Ivy Bridge laptops for sure.

repoman27 - Saturday, January 28, 2012 - link

TB controllers have a PCIe 2.0 x4 back end, but the protocol adapter can only pump at 10Gbps, so Thunderbolt devices essentially share the equivalent of 2.5 lanes of PCIe 2.0. I was hoping that PCIe 3.0 x1 performance would be tested as well, since that would show bottlenecking very similar to what could be expected from a Thunderbolt connected GPU.

Torrijos - Sunday, January 29, 2012 - link

I was wondering this too... Is there any word on Thunderbolt adapting to newer versions of PCIe?

With the first release using PCIe 2, are we going to see (with Ivy Bridge) TB using PCIe 3 with more than an effective doubling of bandwidth (since they reduced overhead with PCIe 3)?

All of a sudden we would end up closer to external graphics in docking stations (or directly with large high res displays) for ultra light laptops.

DanNeely - Sunday, January 29, 2012 - link

We'd see less than a doubling of bandwidth if TB2.0 just went from PCIe2.0 to 3.0 clocks because TB already incorporates a high efficiency encoding like 3.0 does. That's why a TB1.0 connection can carry 2.5x PCIe 2.0 lanes of data over a channel whose raw capacity is only 2 lanes wide.

tynopik - Friday, January 27, 2012 - link

If your main conclusion is that x8 3.0 is plenty for crossfire, shouldn't you, you know, ACTUALLY TEST crossfire at x8 3.0?

bumble12 - Friday, January 27, 2012 - link

First sentence of the second paragraph of the first page: "Next week we’ll be taking a look at CrossFire performance and the performance of AMD’s first driver update."

Guspaz - Friday, January 27, 2012 - link

I don't really understand why dumb SSAA would be so hard to implement in a game-independent, API-independent, renderer-independent fashion. The driver can simply present a larger framebuffer to the game (say, 3840x2160 for a 1080p game) and as a final step before swapping the buffer, average the pixel values in 2x2 blocks, supersampling down to the target resolution.

I mean, this is how antialiasing used to work in the days before MSAA, and while there's a big performance penalty there, it has the virtue of working in any scenario, on any content or geometry.

ItsDerekDude - Friday, January 27, 2012 - link

Here it is! http://demo.ovh.com/download/37a53453c137425e584a1...

chizow - Friday, January 27, 2012 - link

So PCIe 4GB/s (2.0x8 or 3.0x4) is where high-end cards start dropping off and showing noticeable differences in performance. That is definitely going to be the big advantage IVB brings to the mainstream as you'll be able to get 8GB/s in an x8/x8 config with PCIe 3.0 cards.

It'd be interesting if you could do a comparison at some point on the impact of VRAM and bandwidth and PCIe bus speeds. An ideal candidate would be a card that has 2xVRAM variants like a GTX 580 or 6970 that's still fast enough to make things interesting.

Also interesting discussion on the MSAA situation. That helps explain why enabling MSAA has caused VRAM amounts to balloon incredibly in recent games, like BF3, Skyrim, Crysis etc. That extra G-buffer with all that geometry data. Is this what Nvidia was doing in the past with their AA override compatibility bits? Telling their driver to store intermediate buffers for MSAA? Also, wasn't DX10.1/11 supposed to help with this with the ability to read back the multisample depth buffer?

In any case, I for one welcome FXAA. While it does have a blurring effect, the AA it provides with virtually no loss in performance is amazing. It allows me to run much lower levels of AA (4xMSAA + 4xTSAA max, or even 2x+2x) in conjunction with FXAA to achieve better overall AA at the expense of slight blurring. MSAA+TSAA+FXAA provides similar full-scene AA results as the much more performance expensive SGSSAA for me.