The Radeon HD 7970 Reprise: PCIe Bandwidth, Overclocking, & The State Of Anti-Aliasing

by Ryan Smith on January 27, 2012 4:30 PM EST - Posted in GPUs, AMD, Radeon, Radeon HD 7000

With the release of AMD’s Radeon HD 7970 it’s clear that AMD has regained the single-GPU performance crown. But while the 7970’s place in the current GPU hierarchy is well established, we’re still trying to better understand the ins and outs of AMD’s new Graphics Core Next architecture. What does it perform well at, and where is it weak? How might GCN scale with future GPUs?

Next week we’ll be taking a look at CrossFire performance and the performance of AMD’s first driver update. But in the meantime we wanted to examine a few other facets of the 7970: the impact of PCIe bandwidth on performance, overclocking our reference 7970 (and the performance impact thereof), and what AMD is doing for anti-aliasing with the surprise addition of SSAA for DX10+ along with an interesting technical demo implementing MSAA and complex lighting side-by-side. So let’s get started.

PCIe Bandwidth: When Do You Have Enough?

With the release of PCIe 3 we wanted to take a look at what impact the additional bandwidth would have. Historically new PCIe revisions have come out well ahead of hardware that truly needs the bandwidth, and with the 7970 and PCIe 3 this once again appears to be the case. In our original 7970 review we saw that there were a small number of existing computational applications that could immediately benefit from the greater bandwidth, but what about gaming? We sat down with our benchmark suite and ran it at a number of different PCIe bandwidths in order to find an answer.

PCIe Bandwidth Comparison (Each Direction)

| Lanes | PCIe 1.x | PCIe 2.x | PCIe 3.0 |
|---|---|---|---|
| x1 | 250MB/sec | 500MB/sec | 1GB/sec |
| x2 | 500MB/sec | 1GB/sec | 2GB/sec |
| x4 | 1GB/sec | 2GB/sec | 4GB/sec |
| x8 | 2GB/sec | 4GB/sec | 8GB/sec |
| x16 | 4GB/sec | 8GB/sec | 16GB/sec |
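Every figure in the table above is the per-lane rate multiplied by the lane count. As a minimal sketch of that arithmetic, assuming the table's rounded per-lane rates (PCIe 3.0 is slightly under 1GB/sec per lane in practice), the function and constant names below are illustrative only:

```python
# Approximate per-lane, per-direction throughput in MB/sec, using the
# rounded figures from the table (assumption: PCIe 3.0 rounded up to 1000).
PER_LANE_MB = {"PCIe 1.x": 250, "PCIe 2.x": 500, "PCIe 3.0": 1000}
LANE_WIDTHS = (1, 2, 4, 8, 16)


def link_bandwidth_mb(generation: str, lanes: int) -> int:
    """Return approximate one-direction bandwidth in MB/sec for a link."""
    return PER_LANE_MB[generation] * lanes


def format_bandwidth(mb: int) -> str:
    """Format like the table: MB/sec below 1000, GB/sec otherwise."""
    return f"{mb}MB/sec" if mb < 1000 else f"{mb // 1000}GB/sec"


if __name__ == "__main__":
    # Print one row per lane width, one column per PCIe generation.
    for lanes in LANE_WIDTHS:
        row = [format_bandwidth(link_bandwidth_mb(g, lanes)) for g in PER_LANE_MB]
        print(f"x{lanes:<3}", *row)
```

Under these figures a PCIe 3.0 x8 link and a PCIe 2.x x16 link come out identical at 8GB/sec, which is why the two can stand in for each other in bandwidth testing.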

For any given game the amount of data sent per frame is largely constant regardless of resolution, so we’ve opted to test everything at 1680x1050. At the higher framerates this resolution offers on our 7970, this should generate more PCIe traffic than higher, more GPU-limited resolutions, and make the impact of different amounts of PCIe bandwidth more obvious.
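The reasoning here can be put as a simple model: if the data sent per frame is roughly fixed, bus traffic scales with framerate. The sketch below uses hypothetical numbers purely for illustration; the per-frame size and framerates are assumptions, not measurements from our testing.

```python
def pcie_traffic_mb_per_sec(mb_per_frame: float, frames_per_sec: float) -> float:
    """Estimate bus traffic assuming a fixed amount of data is sent per frame."""
    return mb_per_frame * frames_per_sec


# Hypothetical values for illustration only (not measured data).
MB_PER_FRAME = 20.0       # assumed constant regardless of resolution
LOW_RES_FPS = 120.0       # assumed framerate at a lower resolution
HIGH_RES_FPS = 60.0       # assumed framerate at a higher, GPU-limited resolution

low_res_traffic = pcie_traffic_mb_per_sec(MB_PER_FRAME, LOW_RES_FPS)
high_res_traffic = pcie_traffic_mb_per_sec(MB_PER_FRAME, HIGH_RES_FPS)

print(f"lower resolution:  {low_res_traffic:.0f} MB/sec")
print(f"higher resolution: {high_res_traffic:.0f} MB/sec")
```

With the per-frame payload held constant, doubling the framerate doubles the traffic, so the lower resolution is the more demanding case for the bus.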

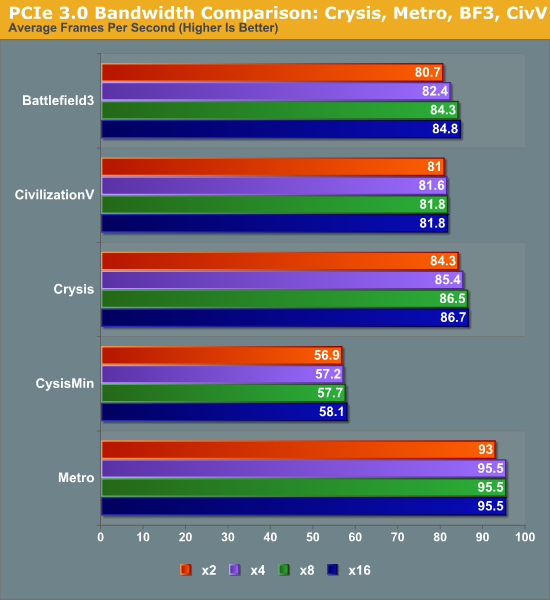

At the high end the results are not surprising. In our informal testing ahead of the 7970 launch we didn’t see any differences between PCIe 2 and PCIe 3 worth noting, and our formal testing backs this up. Under gaming there is absolutely no appreciable difference in performance between PCIe 3 x16 (16GB/sec) and PCIe 2 x16 (8GB/sec). Nor was there any difference between PCIe 3 x8 (8GB/sec) and the other aforementioned bandwidth configurations.

Going forward, for Ivy Bridge owners this will be good news. Even with only 16 PCIe 3 lanes available from the CPU, there should be no performance penalty from utilizing x8 configurations in order to enable CrossFire or other uses that would rob a 7970 of 8 lanes. But how about existing Sandy Bridge systems that can only support PCIe 2? As it turns out things aren’t quite as good.

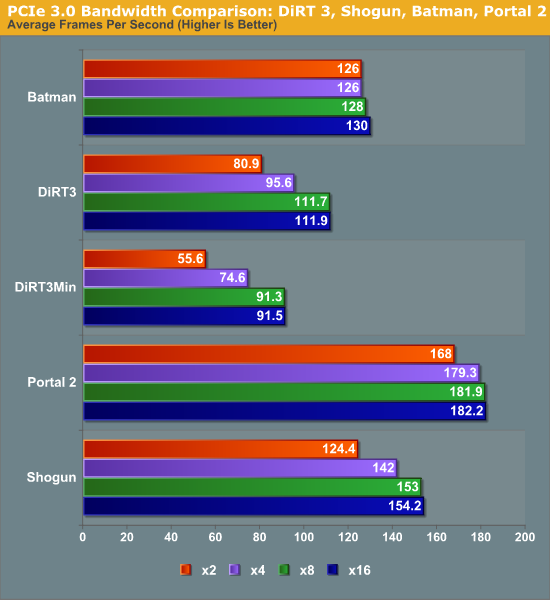

Moving from PCIe 2 x16 (8GB/sec) to PCIe 2 x8 (4GB/sec) does incur a generally small penalty on the 7970. However, like most tests, this is entirely dependent on the game itself. With games like Metro 2033 the difference is non-existent, while Battlefield 3 and Crysis only lose 2-3%, and DiRT3 suffers the most, losing 14% of its performance. DiRT3’s minimum framerates look even worse, dropping by 19%. As DiRT3 is one of our higher performing games in the first place the real world difference is not going to be that great – it’s still well above 60fps at all times – but it’s clear that in the wrong situation only having 4GB/sec of PCIe bandwidth can bottleneck a 7970.

Finally, if we take one further step to PCIe 3 x2 (2GB/sec), we see performance continue to drop on a game-by-game basis. Crysis, Metro, Civilization V, and Battlefield 3 still hold rather steady, having lost less than 5% of their performance versus PCIe 3 x16, but DiRT3 continues to fall, while Total War: Shogun and Portal 2 begin to buckle. At these speeds DiRT3 is only 72% of its original performance, while Shogun and Portal 2 are at 81% and 92% respectively.

Ultimately what is clear is that 8GB/sec of bandwidth, either in the form of PCIe 2 x16 or PCIe 3 x8, will be necessary to completely feed the 7970. 16GB/sec (PCIe 3 x16) appears to be overkill for a single card at this time, and 4GB/sec or 2GB/sec will bottleneck the 7970 depending on the game. The good news is that even at 2GB/sec the bottlenecking is rather limited, and based on our selection of benchmarks it looks like only a handful of games will be bottlenecked. Still, there’s a good argument here that 7970CF owners are going to want a PCIe 3 system to avoid bottlenecking their cards – in fact this may be the greatest benefit of PCIe 3 right now, as it should provide enough bandwidth to make an x8/x8 configuration every bit as fast as an x16/x16 configuration, allowing for maximum GPU performance with Intel’s mainstream CPUs.
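These conclusions can be summarized as a simple lookup. The sketch below is only a restatement of the findings for a single 7970 in our benchmark suite; the function name and wording of the categories are illustrative, and treating every bandwidth below 8GB/sec as a possible bottleneck is an assumption, since only 4GB/sec and 2GB/sec were actually tested.

```python
def classify_link(bandwidth_gb_per_sec: float) -> str:
    """Classify a PCIe link for a single 7970 using the article's conclusions."""
    if bandwidth_gb_per_sec > 8:
        return "overkill for a single card"
    if bandwidth_gb_per_sec >= 8:
        return "enough to fully feed the card"
    # Assumption: anything under 8GB/sec is treated like the tested 4GB/sec
    # and 2GB/sec cases, where the penalty varied by game.
    return "may bottleneck, depending on the game"


CONFIGS = [
    ("PCIe 3 x16", 16),
    ("PCIe 2 x16", 8),
    ("PCIe 3 x8", 8),
    ("PCIe 2 x8", 4),
    ("PCIe 3 x2", 2),
]

for name, gb in CONFIGS:
    print(f"{name} ({gb}GB/sec): {classify_link(gb)}")
```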

47 Comments


CeriseCogburn - Saturday, June 23, 2012

Yep Termie, now the hyper enthusiast experts with their 7970's are noobs unable to be skilled enough to overclock...Can you amd fans get together sometime and agree on your massive fudges once and for all - we just heard no one but the highest of all gamers and end user experts buys these cards - with the intention of overclocking the 7970 to the hilt, as the expert in them demands the most performance for the price...

We just heard MONTHS of that crap - now it's the opposite....

Suddenly, the $579.00 amd fanboy buyers can't overclock...

How about this one- add this one to the arsenal of hogwash...

" Don't void your warranty !" by overclocking even the tiniest bit..

( We know every amd fanboy will blow the crap out of their card screwing around and every tip given around the forums is how to fake out the vendor, lie, and get a free replacement after doing so )

darkswordsman17 - Tuesday, January 31, 2012

First, sorry for this response being several days later.

Fair enough. I didn't mean it as a real criticism, just more of a nitpick. I realize the state of voltage control on video cards isn't exactly stellar and I'm sure AMD/nVidia aren't keen on you doing it.

It's certainly not as robust as CPU voltage adjustment is today, which I didn't mean to confuse as I understand there's a pretty significant disparity.

I should have expanded on my comment a bit more.

I have a hunch AMD is being pretty conservative on voltage with these (in both directions, it's higher than it needs to be, but it's not as high as it could fairly safely be either). Firstly, probably to play it safe with the chips from the new process, but also I think they're giving themselves some breathing room for improvement. After 40nm, they probably didn't want to go for broke right out of the gate and leave some extra that they could push to improve as needed (they have space to release a 7980; something in line with the 4890). Considering the results, it's not like they really need to, especially coupled with the rumored 28nm issues.

Oh, and likewise to Termie, I do still appreciate the work and realize you can't please everyone. I liked the update and actually I think you did enough to touch on the subject in the 7950 review (namely addressing the lack of quality software management for GPUs currently).

mczak - Friday, January 27, 2012

The Leo demo as mentioned in the article has been released (no idea about version): http://developer.amd.com/samples/demos/pages/AMDRa...

Requires 7970 to run (not sure why exactly if it's just DirectX11/DirectCompute?).

mczak - Friday, January 27, 2012

Actually Dave Baumann clarified it should run on other hw as well.

ltcommanderdata - Friday, January 27, 2012

It seemed like we've just finished seeing most major engines like Unreal Engine 3, FROSTBITE 2.0, CryEngine 3 transition to a deferred rendering model. Is it very difficult for developers to modify their existing/previous forward renderers to incorporate the new lighting technique used in the Leo Demo? Otherwise, given the investment developers have put into deferred rendering, I'm guessing they're not looking to transition back to an improved forward renderer anytime soon.

On a related note, you mentioned the lack of MSAA is a common problem to DX10+. Given this improved lighting technique requires compute shaders, is it actually DX11 GPU only, i.e. does it require CS5.0 or can it be implemented in CS4.x to support DX10 GPUs? According to the latest Steam survey, by far the majority of GPUs are still DX10, so game developers won't be dropping support for them for a few years. Some games do support DX11 only features like tessellation, but I presume that having to implement 2 different rendering/lighting models is a lot more work, which could hinder adoption if the technique isn't compatible with DX10 GPUs.

Logsdonb - Friday, January 27, 2012

No one has tested the 7970 in a crossfire configuration under PCI 3.0. I would expect increased bandwidth to benefit the most in that environment. I realize the 7800 series will be better candidates for crossfire given price, heat, and power consumption but a test with the 7900 series would show the potential.

piroroadkill - Friday, January 27, 2012

I'm sorry, I might be pretty drunk, but I'm falling at the first page.

"PCIe Bandwidth..."

There's a clear difference between 8x and 16x PCIe 3.0

Even if it is small, it is there, showing some bottlenecking. If it was inside the margin of error, you'd expect they'd switch places. They didn't. There is clear bottlenecking.

Concillian - Friday, January 27, 2012

I saw some stuff flying around about SMAA a month or two ago... seemed promising and a better alternative to FXAA, but I haven't seen much in the "official" media outlets about it.

It'd be nice to see some analysis on SMAA vs. FXAA vs. Morphological AA in an article covering the current state of AA.

Ryan Smith - Friday, January 27, 2012

As I understand it, SMAA is still a work in progress. It would be premature to comment on it at this time.

tipoo - Friday, January 27, 2012

If I remember correctly, TB provides the bandwidth of a PCIe 4x connection. So if a high end card like this isn't bottlenecked with that much constraint, it sure looks good for external graphics! You'd need a separate power plug of course, but it now looks feasible.