The AMD FX (Bulldozer) Scheduling Hotfixes Tested

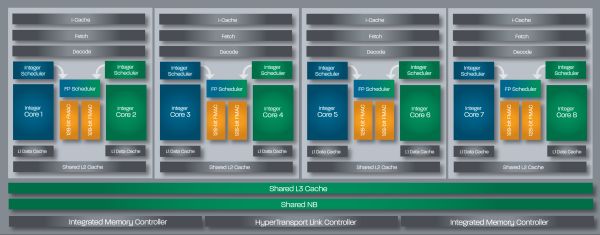

by Anand Lal Shimpi on January 27, 2012 12:47 PM EST

The basic building block of Bulldozer is the dual-core module, pictured below. AMD wanted better performance than simple SMT (à la Hyper-Threading) would allow, but without resorting to the full duplication of resources we get in a traditional dual-core CPU. The result is a duplication of integer execution resources and L1 caches, but a sharing of the front end and FPU. AMD still refers to this module as being dual-core, although it's a departure from the more traditional definition of the word. In the early days of multi-core x86 processors, dual-core designs were simply two single-core processors stuck on the same package. Today we still see simple duplication of identical cores in a single processor, but moving forward it's likely that we'll see more heterogeneous multi-core systems. AMD's Bulldozer architecture may be unusual, but it challenges the conventional definition of a core in a way that we're probably going to face one way or another in the not too distant future.

A four-module, eight-core Bulldozer

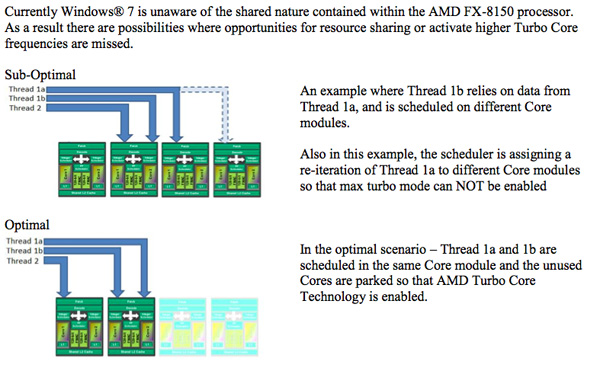

The bigger issue with Bulldozer isn't one of core semantics, but rather how threads get scheduled on those cores. Ideally, threads with shared data sets would get scheduled on the same module, while threads that share no data would be scheduled on separate modules. The former allows more efficient use of a module's L2 cache, while the latter guarantees each thread has access to all of a module's resources when there's no tangible benefit to sharing.
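The ideal policy described above can be illustrated with a small Python sketch. This is a toy model only: it assumes the scheduler already knows which data set each thread works on (given here as made-up labels), and the function name `ideal_placement` is hypothetical. It does not cap the number of modules, so it shows the grouping idea rather than a complete scheduler.

```python
from collections import defaultdict


def ideal_placement(thread_data, cores_per_module=2):
    """Toy model: threads sharing a data set share a module; others get their own.

    thread_data maps a thread name to a label for the data set it touches.
    Returns a list of modules, each a list of thread names.
    """
    groups = defaultdict(list)
    for thread, data_set in thread_data.items():
        groups[data_set].append(thread)

    modules = []
    for threads in groups.values():
        # Split each sharing group into module-sized chunks.
        for i in range(0, len(threads), cores_per_module):
            modules.append(threads[i:i + cores_per_module])
    return modules


# Hypothetical workload: two threads share "textures", two work alone.
workload = {"A1": "textures", "A2": "textures", "B": "audio", "C": "physics"}
for index, threads in enumerate(ideal_placement(workload)):
    print(f"module {index}: {threads}")
```

In this example the two threads that share data land on one module and can reuse its L2 cache, while the two independent threads each get a module to themselves.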

This ideal scenario isn't how threads are scheduled on Bulldozer today. Instead of intelligent core/module scheduling based on the memory addresses touched by a thread, Windows 7 currently just schedules threads on Bulldozer in order. Starting from core 0 and going up to core 7 in an eight-core FX-8150, Windows 7 will schedule two threads on the first module, then move to the next module, etc... If the threads happen to be working on the same data, then Windows 7's scheduling approach makes sense. If the threads scheduled are working on different data sets however, Windows 7's current treatment of Bulldozer is suboptimal.
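To make the in-order behavior concrete, here is a minimal Python sketch. It is a toy model, not Windows' actual scheduler: it assumes cores are numbered 0 through 7 with two consecutive cores per module, as in the FX-8150 description above, and the function name `in_order_placement` is hypothetical.

```python
def in_order_placement(num_threads, num_cores=8, cores_per_module=2):
    """Toy model: assign thread i to core i, filling cores in ascending order."""
    if num_threads > num_cores:
        raise ValueError("toy model assumes at most one thread per core")
    placement = []
    for thread_id in range(num_threads):
        core = thread_id
        module = core // cores_per_module  # cores 0-1 -> module 0, 2-3 -> module 1, ...
        placement.append((thread_id, core, module))
    return placement


# Four threads are packed onto two modules, leaving the other two modules idle.
for thread_id, core, module in in_order_placement(4):
    print(f"thread {thread_id} -> core {core} (module {module})")
```

With four threads, every thread shares a module's front end and FPU with a neighbor even though half the chip is unused.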

AMD and Microsoft have been working on a patch to Windows 7 that improves scheduling behavior on Bulldozer. The result is two hotfixes that should both be installed on Bulldozer systems. Both hotfixes require Windows 7 SP1; they will refuse to install on a pre-SP1 installation.

The first update simply tells Windows 7 to schedule all threads on empty modules first, then on shared cores. The second hotfix increases Windows 7's core parking latency if there are threads that need scheduling. There's a performance penalty you pay to sleep/wake a module, so if there are threads waiting to be scheduled they'll have a better chance to be scheduled on an unused module after this update.
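The first hotfix's "empty modules first" policy can be sketched in Python as follows. This is a toy model under stated assumptions, not the actual Windows implementation: it assumes four modules with two consecutively numbered cores each, and the function name `module_first_placement` is hypothetical.

```python
def module_first_placement(num_threads, num_modules=4, cores_per_module=2):
    """Toy model: use one core in every module before doubling up on any module."""
    num_cores = num_modules * cores_per_module
    if num_threads > num_cores:
        raise ValueError("toy model assumes at most one thread per core")

    # Visit the first core of every module, then the second core of every module.
    core_order = [
        module * cores_per_module + slot
        for slot in range(cores_per_module)
        for module in range(num_modules)
    ]
    return [
        (thread_id, core_order[thread_id], core_order[thread_id] // cores_per_module)
        for thread_id in range(num_threads)
    ]


# Four threads now land on four different modules; a fifth must share module 0.
for thread_id, core, module in module_first_placement(5):
    print(f"thread {thread_id} -> core {core} (module {module})")
```

Up to four threads each get a whole module; only from the fifth thread onward does any module have to share its front end and FPU.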

Note that neither hotfix enables the most optimal scheduling on Bulldozer. Rather than being thread aware and scheduling dependent threads on the same module and independent threads across separate modules, the updates simply move to a better default case of scheduling on modules first. This should improve performance in most cases, but there's a chance that some workloads will see a performance reduction. AMD tells me that it's still working with OS vendors (read: Microsoft) to better optimize for Bulldozer. If I had to guess, I'd say that we may see the next big step forward with Windows 8.

AMD was pretty honest when it described the performance gains FX owners can expect to see from this update. In its own blog post on the topic AMD tells users to expect a 1 - 2% gain on average across most applications. Without any big promises I wasn't expecting the Bulldozer vs. Sandy Bridge standings to change post-update, but I wanted to run some tests just to be sure.

The Test

| Component | Configuration |
| --- | --- |
| Motherboard: | ASUS P8Z68-V Pro (Intel Z68); ASUS Crosshair V Formula (AMD 990FX) |
| Hard Disk: | Intel X25-M SSD (80GB); Crucial RealSSD C300 |
| Memory: | 2 x 4GB G.Skill Ripjaws X DDR3-1600 9-9-9-20 |
| Video Card: | ATI Radeon HD 5870 (Windows 7) |
| Video Drivers: | AMD Catalyst 11.10 Beta (Windows 7) |
| Desktop Resolution: | 1920 x 1200 |
| OS: | Windows 7 x64 SP1 w/ BD Hotfixes |

79 Comments

Ratman6161 - Saturday, January 28, 2012

With an Intel i3 2100. I suspect the gamers might actually be better served with the i3 than AMD's 8150 other than the inability to overclock the i3. Think of how much better a GPU you could get by saving about $150 by going with an i3 over an 8150.

Just sayin...

medi01 - Sunday, January 29, 2012

Did you forget something important?

Taking into account, cough, motherboard prices, cough?

The "you can get much better GPU for XX bucks" stands for pretty much any non low end CPU.

xeridea - Monday, January 30, 2012

AMD did a blind test with the 2700k and 8150. 28% chose 2700k, 51% chose 8150, 20% undecided. It is possibly an anomaly that the 8150 got more votes, but it at least shows that it is competitive as a gaming CPU, even compared to the 2700k which costs $100 more.

IIRC the 8150 is competitive and sometimes beats the 2500k and even beats the 2600k in some highly threaded situations.

Your figures are wrong. Intel has the 2600k for $325 and the 8150 for $270. This means the 8150 is $65 less than the 2600k, not $30. It is $40 more than the 2500k but you have to consider generally higher cost of motherboard/memory with Intel, and guaranteed socket incompatibility with every CPU.

arjuna1 - Saturday, January 28, 2012

Yeah, imagine how pathetic a life can be when you need to sustain your ego by hating the competing brand of your PC's CPU.

Fox5 - Saturday, January 28, 2012

Wasn't the point of the hotfix to consolidate threads onto modules, so that modules could be gated and turbo core enabled? Isn't that where the performance boost is supposed to come from?

KonradK - Sunday, January 29, 2012

The Windows 7 scheduler has, since the beginning, tried to group many threads on a single core. This is mainly to maximize the effect of power gating. I'm not sure whether a core must be completely idle for Turbo Core/Turbo Boost to be enabled.

The purpose of the hotfix was to make the scheduler distinguish between cores belonging to the same module and cores in separate modules.

Mech-Akuma - Monday, January 30, 2012

This is what I thought as well. I remember reading somewhere when Bulldozer launched that Win7 prefers to primarily direct all threads to cores inside different modules. This would cause modules not to be able to enter C6 sleep and therefore the performance improvement of Turbo Core would be drastically cut. However since each module does not need to share resources (while each module is only using 1 core) performance is picked up there.

However this contradicts what this article says about pre-hotfix scheduling. Could someone clarify how pre-hotfix scheduling worked?

silverblue - Saturday, January 28, 2012

Toms did the same thing. However, I'm not sure it'd make much difference.

richaron - Monday, January 30, 2012

As far as I'm aware 1600 is the most developed RAM at the moment. I consider anything faster as simply overclocked 1600; more "bandwidth", more voltage, but lower CAS latency.

I'm not sure what the reviewers were thinking, but they probably know more than most of us...

KonradK - Saturday, January 28, 2012

"No. Bulldozer adds silicon that actually executes instructions. [...]"

The whole idea of Hyper-Threading is to let a second thread utilize the resources of a core wasted by the first thread (and vice versa).

Depending on how much the execution units are replicated and how (un)optimally the code is written, the amount of waste (and the benefit from Hyper-Threading) will be lower or greater.

Maybe the execution units of Bulldozer's FPU are replicated to such an extent that they will be wasted in most cases unless used by two threads simultaneously.

But the performance of two (FPU-intensive) threads running on the same module will never be equal to the performance of two threads running on separate modules.*

Otherwise the hotfixes to the scheduler would be useless.

*) Assuming that the CPU is not fully loaded, i.e. some cores remain idle.