AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST- Posted in

- GPUs

- AMD

- Radeon

- ATI

- Radeon HD 7000

PCI Express 3.0: More Bandwidth For Compute

It may seem like it’s still fairly new, but PCI Express 2.0 is actually a relatively old addition to motherboards and video cards. AMD first added support for it with the Radeon HD 3870 back in 2008, so it’s been nearly 4 years since video cards made the jump. At the same time PCI Express 3.0 has been in the works for some time now, and although it hasn’t been 4 years it feels like it has been much longer. PCIe 3.0 motherboards only became available last month with the launch of the Sandy Bridge-E platform, and now the first PCIe 3.0 video cards are becoming available with Tahiti.

But at first glance it may not seem like PCIe 3.0 is all that important. Additional PCIe bandwidth has proven to be generally unnecessary when it comes to gaming, as single-GPU cards typically only benefit by a couple of percent (if at all) when moving from PCIe 2.1 x8 to x16. There will of course come a time when games need more PCIe bandwidth, but right now PCIe 2.1 x16 (8GB/sec) handles the task with room to spare.

So why is PCIe 3.0 important then? It’s not the games, it’s the computing. GPUs have a great deal of internal memory bandwidth (264GB/sec; more with cache), but shuffling data between the GPU and the CPU is a high-latency, heavily bottlenecked process that tops out at 8GB/sec under PCIe 2.1. And since GPUs are still specialized devices that excel at parallel code execution, many workloads need to constantly move data between the GPU and the CPU so that parallel code runs on the former and serial code on the latter. As it stands today GPUs are really only best suited for workloads that involve sending work to the GPU and keeping it there; heterogeneous computing is a luxury there isn’t bandwidth for.
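To put the gap between on-card memory and the PCIe link in perspective, here is a rough sketch of best-case transfer times. It uses only the two peak rates quoted above; the 1GB buffer size is a hypothetical value chosen for illustration, and real transfers would be slower than these peak figures suggest.

```python
GPU_MEM_GB_PER_SEC = 264.0  # GPU memory bandwidth quoted in the text
PCIE21_GB_PER_SEC = 8.0     # PCIe 2.1 x16 peak quoted in the text

def transfer_ms(size_gb, gb_per_sec):
    """Best-case time in milliseconds to move size_gb at a peak rate."""
    return size_gb / gb_per_sec * 1000

buffer_gb = 1.0  # hypothetical working set, chosen for illustration only

over_pcie = transfer_ms(buffer_gb, PCIE21_GB_PER_SEC)
in_memory = transfer_ms(buffer_gb, GPU_MEM_GB_PER_SEC)

print(f"PCIe 2.1: {over_pcie:.1f} ms, GPU memory: {in_memory:.1f} ms")
print(f"Ratio: {GPU_MEM_GB_PER_SEC / PCIE21_GB_PER_SEC:.0f}x")
```

At peak rates the same buffer takes 33 times longer to cross the bus than to stream out of GPU memory, which is why round trips to the CPU are so costly.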

The long term solution of course is to bring the CPU and the GPU together, which is what Fusion does. CPU/GPU bandwidth just in Llano is over 20GB/sec, and latency is greatly reduced due to the CPU and GPU being on the same die. But AMD also wants to bring some of these same benefits to discrete GPUs, which is where PCIe 3.0 comes in.

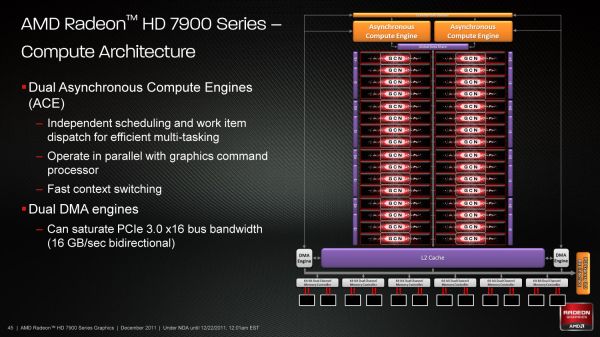

With PCIe 3.0 transport bandwidth is again being doubled, from 500MB/sec per lane in each direction to roughly 1GB/sec per lane in each direction, which for an x16 device means doubling the available bandwidth from 8GB/sec to nearly 16GB/sec. This is accomplished by increasing the signaling rate of the underlying bus from 5 GT/sec to 8 GT/sec, while decreasing encoding overhead from 20% (8b/10b encoding) to roughly 1.5% through the use of the far more efficient 128b/130b encoding scheme. Meanwhile latency doesn’t change (it’s largely a product of physics and physical distances), but merely doubling the bandwidth can greatly improve performance for bandwidth-hungry compute applications.
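The per-lane and x16 figures follow directly from the signaling rate and the efficiency of the line code. A minimal sketch of that arithmetic, assuming one bit per transfer per lane and counting only encoding overhead (packet headers and flow control are ignored; the function name is ours, for illustration only):

```python
def pcie_bandwidth_gbytes(gt_per_sec, payload_bits, coded_bits, lanes=1):
    """Approximate one-direction PCIe bandwidth in GB/sec (10^9 bytes).

    Assumes each transfer carries one bit per lane and accounts only
    for line-encoding overhead, not packet or protocol overhead.
    """
    efficiency = payload_bits / coded_bits
    gbits_per_lane = gt_per_sec * efficiency
    return gbits_per_lane / 8 * lanes

gen2_x16 = pcie_bandwidth_gbytes(5.0, 8, 10, lanes=16)     # 8b/10b
gen3_x16 = pcie_bandwidth_gbytes(8.0, 128, 130, lanes=16)  # 128b/130b

print(f"PCIe 2.x x16: {gen2_x16:.2f} GB/sec")
print(f"PCIe 3.0 x16: {gen3_x16:.2f} GB/sec")
print(f"128b/130b overhead: {(1 - 128 / 130) * 100:.2f}%")
```

Under these assumptions PCIe 3.0 x16 lands slightly below 16GB/sec. The clock only rises by 60%, so the leaner encoding is what carries the link the rest of the way to a near doubling.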

As with any other specialized change like this, the benefit is going to depend heavily on the application being used. However, AMD is confident that there are applications that will completely saturate PCIe 3.0 (and then some), and it’s easy to imagine why.

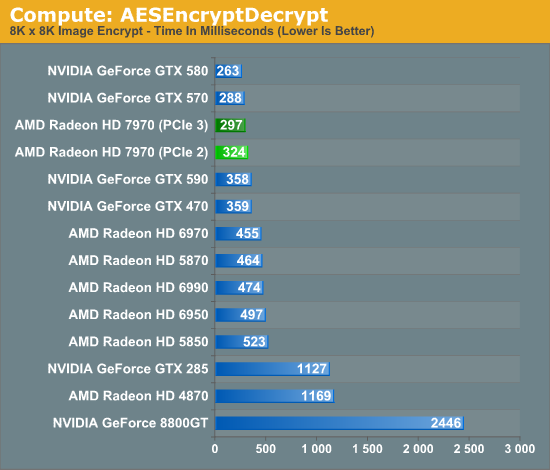

Even among our limited selection of compute benchmarks we found something that directly benefited from PCIe 3.0. AESEncryptDecrypt, a sample application from AMD’s APP SDK, demonstrates AES encryption performance by running it on square image files. Throwing a large 8K x 8K image at it not only creates a lot of work for the GPU, but a lot of PCIe traffic too. In our case simply enabling PCIe 3.0 improved performance by 9%, from 324ms down to 297ms.
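As a quick check on that figure, the two timings can be compared either as a speedup or as a reduction in run time, and the two readings give slightly different percentages. A small sketch using only the numbers quoted above:

```python
pcie2_ms = 324.0  # run time with PCIe 2.1, from the text
pcie3_ms = 297.0  # run time with PCIe 3.0, from the text

speedup_pct = (pcie2_ms / pcie3_ms - 1) * 100     # gain in throughput
time_saved_pct = (1 - pcie3_ms / pcie2_ms) * 100  # cut in run time

print(f"Speedup: {speedup_pct:.1f}%")
print(f"Run time reduction: {time_saved_pct:.1f}%")
```

The 9% figure corresponds to the speedup reading; measured as time saved, the same result is closer to 8%.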

Ultimately having more bandwidth is not only going to improve compute performance for AMD, but will give the company a critical edge over NVIDIA for the time being. Kepler will no doubt ship with PCIe 3.0, but that’s months down the line. In the meantime users and organizations with high bandwidth compute workloads have Tahiti.

292 Comments

mczak - Thursday, December 22, 2011

Oh yes _for this test_ certainly 32 ROPs are sufficient (FWIW it uses FP16 render target with alpha blend). But these things have caches (which they'll never hit in the vantage fill test, but certainly not everything will have zero cache hits), and even more important than color output are the z tests ROPs are doing (which also consume bandwidth, but z buffers are highly compressed these days).

You can't really say if 32 ROPs are sufficient, nor if they are somehow more efficient judged by this vantage test (as just about ANY card from nvidia or amd hits bandwidth constraints in that particular test long before hitting ROP limits).

Typically it would make sense to scale ROPs along with memory bandwidth, since even while it doesn't need to be as bad as in the color fill test they are indeed a major bandwidth eater. But apparently AMD disagreed and felt 32 ROPs are enough (well for compute that's certainly true...)

cactusdog - Thursday, December 22, 2011

The card looks great, undisputed win for AMD. Fan noise is the only negative, I was hoping for better performance out of the new gen cooler but there's always non-reference models for silent gaming.

Temps are good too so there's probably room to turn the fan speed down a little.

rimscrimley - Thursday, December 22, 2011

Terrific review. Very excited about the new test. I'm happy this card pushes the envelope, but doesn't make me regret my recent 580 purchase. As long as AMD is producing competitive cards -- and when the price settles on this to parity with the 580, this will be the market winner -- the technology benefits. Cheers!

nerfed08 - Thursday, December 22, 2011

Good read. By the way there is a typo in final words.

faster and cooler al at once

Anand Lal Shimpi - Thursday, December 22, 2011

Fixed, thank you :)

Take care,

Anand

hechacker1 - Thursday, December 22, 2011

I think most telling is the minimum FPS results. The 7970 is 30-45% ahead of the previous generation in a "worst case" situation where the GPU can't keep up or the program is poorly coded.

Of course they are catching up with Nvidia's already pretty good minimum FPS, but I am glad to see the improvement, because nothing is worse than stuttering during a fast-paced FPS. I can live with 60fps, or even 30fps, as long as it's consistent.

So I bet the micro-stutter problem will also be improved in SLI with this architecture.

jgarcows - Thursday, December 22, 2011

While I know the bitcoin craze has died down, I would be interested to see it included in the compute benchmarks. In the past, AMD has consistently outperformed nVidia in bitcoin work. It would also be interesting to see Anandtech's take as to why, and to see if the new architecture changes that.

dcollins - Thursday, December 22, 2011

This architecture will most likely be a step backwards in terms of bitcoin mining performance. In the GCN architecture article, Anand mentioned that brute-force hashing was one area where a VLIW style architecture had an advantage over a SIMD based chip. Bitcoin mining is based on algorithms mathematically equivalent to password hashing. With GCN, AMD is changing the very thing that made their cards better miners than Nvidia's chips.

The old architecture is superior for "pure," mathematically well defined code while GCN is targeted at "messy," more practical and thus widely applicable code.

wifiwolf - Thursday, December 22, 2011

a bit less than expected, but not really an issue: http://www.tomshardware.co.uk/radeon-hd-7970-bench...

dcollins - Thursday, December 22, 2011

You're looking at a 5% increase in performance for a whole new generation with 35% more compute hardware, increased clock speed and increased power consumption: that's not an improvement, it's a regression. I don't fault AMD for this because Bitcoin mining is a very niche use case, but Crossfire 68x0 cards offer much better performance/watt and performance/$.