AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST - Posted in GPUs, AMD, Radeon, ATI, Radeon HD 7000

Compute: The Real Reason for GCN

Moving on from our game tests, we’ve now reached the compute benchmark segment of our review. While the gaming performance of the 7970 will have the most immediate ramifications for AMD and the product, it is the compute performance that I believe is the more important metric in the long run. GCN is both a gaming and a compute architecture, and while its gaming pedigree is well defined, its real-world compute capabilities have yet to be demonstrated.

With that said, we’re going to open up this section with a rather straightforward statement: the current selection of compute applications for AMD GPUs is extremely poor. This is especially true for anything that would be suitable as a benchmark. Perhaps this is because developers ignored Evergreen and Northern Islands due to their low compute performance, or perhaps this is because developers still haven’t warmed up to OpenCL, but here at the tail end of 2011 there just aren’t very many applications that can make meaningful use of the pure compute capabilities of AMD’s GPUs.

Aggravating matters, some of the most popular applications that can use AMD’s compute capabilities have been hand-tuned for AMD’s previous architectures to the point that they simply will not run on Tahiti right now. Folding@Home, FLACC, and a few other candidates we looked into for use as compute benchmarks all fall under this umbrella, and as a result we only have a limited toolset to work with for proving the compute performance of GCN.

So with that out of the way, let’s get started.

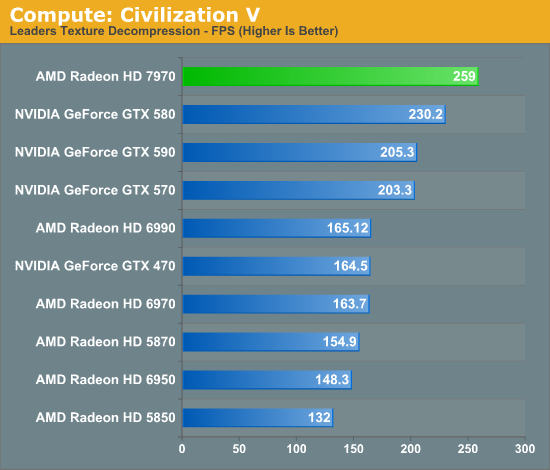

Since we just ended with Civilization V as a gaming benchmark, let’s start with Civilization V as a compute benchmark. We’ve seen Civilization V’s performance skyrocket on the 7970, and we’ve theorized that this is due to improvements in compute shader performance; now we have a chance to prove it.

And there’s our proof. Compared to the 6970, the 7970’s performance on this benchmark has jumped up by 58%, and even the previously leading GTX 580 is now beneath the 7970 by 12%. GCN’s compute ambitions are clearly paying off, and in the case of Civilization V it’s even enough to dethrone NVIDIA entirely. If you’re AMD there’s not much more you can ask for.

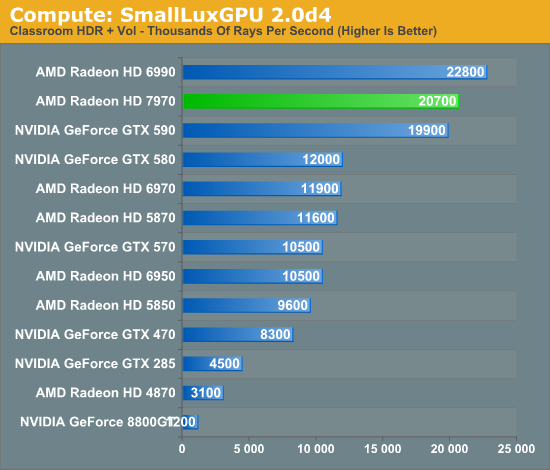

Our next benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. We’re now using a development build from the version 2.0 branch, and we’ve moved on to a more complex scene that hopefully will provide a greater challenge to our GPUs.

Again the 7970 does incredibly well here compared to AMD’s past architectures. AMD already did rather well here even with the limited compute performance of their VLIW4 architecture; with GCN, AMD once again puts their old architectures to shame, and NVIDIA too in the process. Among single-GPU cards the GTX 580 is the closest competitor, and even then the 7970 leads it by 72%. The story is much the same for the 7970 versus the 6970, where the 7970 leads by 74%. If AMD can continue to deliver performance gains like these, GCN is going to be a formidable force in the HPC market when it eventually makes its way there.
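Because the Civilization V and SmallLuxGPU leads are both quoted with the 7970 as the common reference, they can be chained to estimate how the GTX 580 and 6970 compare with each other. The sketch below is only a back-of-the-envelope aid: it assumes each percentage is measured against the slower card's score on a higher-is-better metric, and the helper name `implied_lead` is hypothetical. Rounding in the published percentages carries through to the result.

```python
def implied_lead(lead_a_over_c: float, lead_a_over_b: float) -> float:
    """Given card A's percentage lead over card C and over card B (each measured
    against the slower card's score), return B's implied percentage lead over C."""
    return ((1 + lead_a_over_c / 100) / (1 + lead_a_over_b / 100) - 1) * 100

# Civilization V compute: 7970 is 58% ahead of the 6970 and 12% ahead of the GTX 580.
civ5_580_over_6970 = implied_lead(58, 12)

# SmallLuxGPU: 7970 is 74% ahead of the 6970 and 72% ahead of the GTX 580.
slg_580_over_6970 = implied_lead(74, 72)

print(f"Civ V:       GTX 580 vs 6970 {civ5_580_over_6970:+.1f}%")
print(f"SmallLuxGPU: GTX 580 vs 6970 {slg_580_over_6970:+.1f}%")
```

Under those assumptions the GTX 580 works out to roughly 41% ahead of the 6970 in Civilization V, but only about 1% ahead in SmallLuxGPU, which is consistent with the text's note that VLIW4 already did rather well in the latter.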

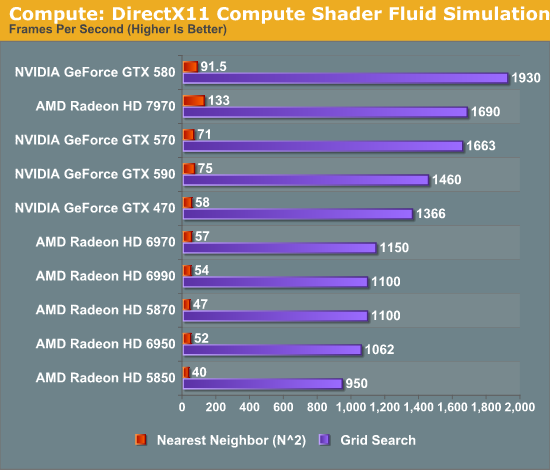

For our next benchmark we’re once again looking at compute shader performance, this time through the Fluid simulation sample in the DirectX SDK. This program simulates the motion and interactions of a 16K-particle fluid using a compute shader, with a choice of several different algorithms. In this case we’re using two of them: a highly optimized grid search that Microsoft based on an earlier CUDA implementation, and an O(n^2) nearest neighbor method that is optimized by using shared memory to cache data.
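To illustrate why the two algorithms stress a GPU so differently, here is a minimal CPU sketch of the two neighbor-search strategies. It is not the DirectX SDK sample's code: it works in 2D for brevity, uses an assumed interaction radius, and the function names are hypothetical. The brute-force version tests every pair (O(n^2) work, which is what the shared-memory variant tiles through on-chip memory), while the grid version bins particles into cells the size of the radius so that only the 3x3 block of neighboring cells needs checking.

```python
import math
import random
from collections import defaultdict

def brute_force_neighbors(points, h):
    """O(n^2): test every particle pair against the interaction radius h."""
    counts = [0] * len(points)
    for i, (xi, yi) in enumerate(points):
        for j, (xj, yj) in enumerate(points):
            if i != j and (xi - xj) ** 2 + (yi - yj) ** 2 < h * h:
                counts[i] += 1
    return counts

def grid_neighbors(points, h):
    """Uniform grid with cell size h: any particle within radius h of another
    must lie in the same cell or one of the 8 adjacent cells."""
    grid = defaultdict(list)
    for idx, (x, y) in enumerate(points):
        grid[(math.floor(x / h), math.floor(y / h))].append(idx)
    counts = [0] * len(points)
    for i, (xi, yi) in enumerate(points):
        cx, cy = math.floor(xi / h), math.floor(yi / h)
        for dx in (-1, 0, 1):
            for dy in (-1, 0, 1):
                for j in grid.get((cx + dx, cy + dy), ()):
                    xj, yj = points[j]
                    if i != j and (xi - xj) ** 2 + (yi - yj) ** 2 < h * h:
                        counts[i] += 1
    return counts

random.seed(0)
particles = [(random.random(), random.random()) for _ in range(500)]
radius = 0.05  # assumed interaction radius for this toy example

brute = brute_force_neighbors(particles, radius)
gridded = grid_neighbors(particles, radius)
print("results match:", brute == gridded)
```

Both functions return identical neighbor counts; the difference is purely in how much work and memory traffic they generate, which is where cache and shared-memory design come into play on a GPU.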

There are many things we can gather from this data, but let’s address the most important conclusions first. Regardless of the algorithm used, AMD’s VLIW4 and VLIW5 architectures had relatively poor performance in this simulation; NVIDIA meanwhile has strong performance with the grid search algorithm, but more limited performance with the shared memory algorithm. The 7970 consequently blows away the 6970 in all cases, and while it can’t beat the GTX 580 at the grid search algorithm, it is 45% faster than the GTX 580 with the shared memory algorithm.

With GCN, AMD put a lot of effort into compute performance, not only in their shader/compute hardware but also in the caches and shared memory that feed that hardware. While I don’t believe we have enough data to say anything definitive about how Tahiti/GCN’s cache compares to Fermi’s, this benchmark does raise the possibility that GCN’s cache design is better suited to less-than-optimal brute force algorithms. If so, the implications for AMD could be huge, as it could open up new HPC market opportunities for them that NVIDIA could never access, and it could certainly help AMD steal market share from NVIDIA.

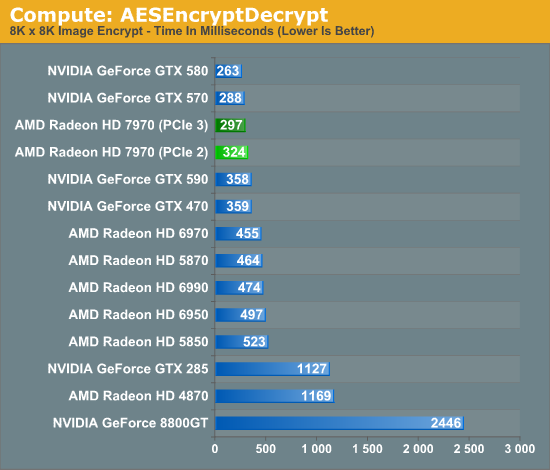

Moving on to our final two benchmarks, we’ve gone spelunking through AMD’s OpenCL archive to dig up a couple more compute scenarios to use to evaluate GCN. The first of these is AESEncryptDecrypt, an OpenCL AES routine that encrypts and decrypts an 8K x 8K pixel square image file. The result of this benchmark is the average time to encrypt the image over a number of iterations of the AES cipher.

We went into the AMD OpenCL sample archives knowing that the projects in it were likely already well suited to AMD’s previous architectures, and there is definitely a degree of that in our results. The 6970 already performs decently in this benchmark, and ultimately the GTX 580 is the top competitor. However, the 7970 still manages to improve on the 6970 by a sizable degree, accomplishing this encryption task in only 65% of the time. Meanwhile it trails the GTX 580 by roughly 12%, which shows that, if nothing else, Fermi and GCN are going to have their own architectural strengths and weaknesses, although there’s obviously some room for improvement.
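Since this benchmark reports time rather than a rate, it is worth spelling out how a time ratio maps to throughput: finishing in 65% of the time is not the same as being 35% faster. A minimal sketch (the helper name is hypothetical):

```python
def speedup_from_time_ratio(time_ratio: float) -> float:
    """A card that finishes in `time_ratio` of the reference card's time
    delivers 1 / time_ratio times the reference throughput."""
    return 1.0 / time_ratio

# From the text: the 7970 completes the AES task in 65% of the 6970's time.
aes_speedup = speedup_from_time_ratio(0.65)
print(f"{aes_speedup:.2f}x the throughput, i.e. {(aes_speedup - 1) * 100:.0f}% faster")
```

By this measure the 7970 processes roughly 1.54x as much data per unit time as the 6970 in this test, or about 54% more.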

One interesting fact we gathered from this compute benchmark is that it benefitted from the increase in bandwidth offered by PCI Express 3.0. With PCIe 3.0 the 7970 improves by about 10%, showcasing just how important transport bandwidth is for some compute tasks. Ultimately we’ll reach a point where even games will be able to take full advantage of PCIe 3.0, but for right now it’s the compute uses that will benefit the most.
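For a sense of scale, the sketch below computes the theoretical one-direction bandwidth of an x16 link for PCIe 2.0 (5 GT/s per lane, 8b/10b encoding) and PCIe 3.0 (8 GT/s per lane, 128b/130b encoding), then estimates how long one copy of the benchmark's 8K x 8K image would take. The 4 bytes per pixel is an assumption, since the sample's pixel format isn't stated here, and the figures ignore packet and protocol overhead, so real transfers will be slower.

```python
# Per-lane signalling rate (GT/s) and line-code efficiency for each PCIe generation.
PCIE = {
    "2.0": (5.0, 8 / 10),     # 8b/10b encoding
    "3.0": (8.0, 128 / 130),  # 128b/130b encoding
}

def link_bandwidth_gbytes(gen: str, lanes: int = 16) -> float:
    """Theoretical one-direction bandwidth in GB/s, ignoring packet overhead."""
    gt_per_s, efficiency = PCIE[gen]
    return gt_per_s * efficiency * lanes / 8  # bits -> bytes

# Hypothetical payload: an 8K x 8K image at an assumed 4 bytes per pixel.
payload_bytes = 8192 * 8192 * 4

for gen in ("2.0", "3.0"):
    bw = link_bandwidth_gbytes(gen)
    ms = payload_bytes / (bw * 1e9) * 1e3
    print(f"PCIe {gen} x16: {bw:.2f} GB/s, ~{ms:.1f} ms per one-way copy")
```

Under these assumptions one copy drops from roughly 34 ms to roughly 17 ms. The measured gain in our benchmark is only about 10%, which suggests host-device transfers make up a modest share of the total runtime, with the encryption kernels accounting for the rest.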

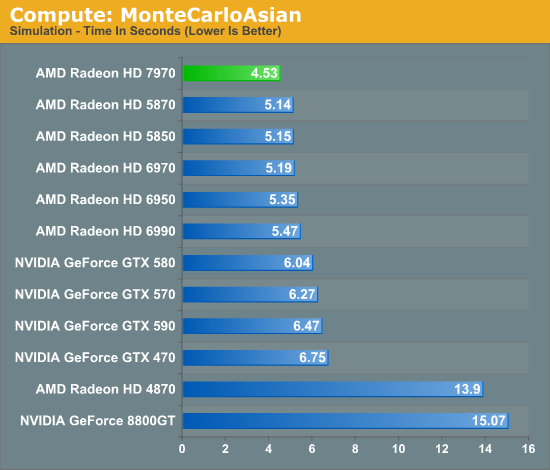

Our final benchmark also comes from the AMD OpenCL archives, and it’s a variant of the Monte Carlo method implemented in OpenCL. Here we’re timing how long it takes to execute a 400 step simulation.
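The article doesn't describe which model AMD's Monte Carlo sample simulates, so the sketch below is a generic stand-in, not the sample itself: it simulates many independent geometric Brownian motion paths of 400 time steps each and averages the terminal values, a quantity with a known closed form we can check against. All parameter values and the function name are assumptions for illustration. The key property is that every path is independent, which is what lets a GPU run thousands of them in parallel, typically one path per work-item.

```python
import math
import random

def mean_terminal_price(s0, mu, sigma, t, steps, paths, seed=0):
    """Monte Carlo estimate of E[S_T] for geometric Brownian motion:
    simulate `paths` independent paths of `steps` steps and average S_T."""
    rng = random.Random(seed)
    dt = t / steps
    drift = (mu - 0.5 * sigma ** 2) * dt  # exact log-space drift per step
    vol = sigma * math.sqrt(dt)
    total = 0.0
    for _ in range(paths):
        log_s = math.log(s0)
        for _ in range(steps):
            log_s += drift + vol * rng.gauss(0.0, 1.0)
        total += math.exp(log_s)
    return total / paths

estimate = mean_terminal_price(s0=100.0, mu=0.05, sigma=0.2, t=1.0, steps=400, paths=2000)
exact = 100.0 * math.exp(0.05)  # closed form: E[S_T] = S0 * exp(mu * T)
print(f"Monte Carlo: {estimate:.2f}, exact: {exact:.2f}")
```

With 2,000 paths the estimate lands within a few tenths of the exact value; more paths shrink the error in proportion to one over the square root of the path count.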

For our final benchmark the 7970 once again takes the lead. The rest of the Radeon pack is close behind so GCN isn’t providing an immense benefit here, but AMD still improves upon the 6970 by 14%. Meanwhile the lead over the GTX 580 is larger at 33%.

Ultimately from these benchmarks it’s clear that AMD is capable of delivering on at least some of the theoretical potential for compute performance that GCN brings to the table. Not unlike gaming performance this is often going to depend on the task at hand, but the performance here proves that in the right scenario Tahiti is a very capable compute GPU. Will it be enough to make a run at NVIDIA’s domination with Tesla? At this point it’s too early to tell, but the potential is there, which is much more than we could say about VLIW4.

292 Comments


CrystalBay - Thursday, December 22, 2011

Hi Ryan, all these older GPUs (i.e. 5870, GTX 570, 580, 6950) were rerun on the new hardware testbed? If so, GJ, lotsa work there.

FragKrag - Thursday, December 22, 2011

The numbers would be worthless if he didn't

Anand Lal Shimpi - Thursday, December 22, 2011

Yep they're all on the new testbed, Ryan had an insane week.

Take care,

Anand

Lifted - Thursday, December 22, 2011

How many monitors on the market today are available at this resolution? Instead of saying the 7970 doesn't quite make 60 fps at a resolution maybe 1% of gamers are using, why not test at 1920x1080, which is available to everyone, on the cheap, and is the same resolution we all use on our TVs?

I understand the desire (need?) to push these cards, but I think it would be better to give us results the vast majority of us can relate to.

Anand Lal Shimpi - Thursday, December 22, 2011

The difference between 1920 x 1200 and 1920 x 1080 isn't all that big (2,304,000 pixels vs. 2,073,600 pixels, about an 11% increase). You should be able to infer 19x10 performance from the 19x12 numbers for the most part.

I don't believe 19x12 is pushing these cards significantly more than 19x10 would; the resolution is simply a remnant of many PC displays originally preferring it over 19x10.

Take care,

Anand

piroroadkill - Thursday, December 22, 2011

Dell U2410, which I have :3

and Dell U2412M

piroroadkill - Thursday, December 22, 2011

Oh, and my laptop is 1920x1200 too, Dell Precision M4400.

My old laptop is 1920x1200 too, Dell Latitude D800..

johnpombrio - Wednesday, December 28, 2011

Heh, I too have 3 Dell U2410 and one Dell 2710. I REALLY want a Dell 30" now. My GTX 580 seems to be able to handle any of these monitors tho Crysis High-Def does make my 580 whine on my 27 inch screen!

mczak - Thursday, December 22, 2011

The text for that test is not really meaningful. Efficiency of ROPs has almost nothing to do with this test; this is (and has always been) a pure memory bandwidth test (with very few exceptions, such as the ill-designed HD5830, which somehow couldn't use all its theoretical bandwidth).

If you look at the numbers, you can see that very well actually; you can pretty much calculate the result if you know the memory bandwidth :-). 50% more memory bandwidth than HD6970? Yep, almost exactly 50% more performance in this test, just as expected.

Ryan Smith - Thursday, December 22, 2011

That's actually not a bad thing in this case. AMD didn't go beyond 32 ROPs because they didn't need to - what they needed was more bandwidth to feed the ROPs they already had.