AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

Crysis: Warhead

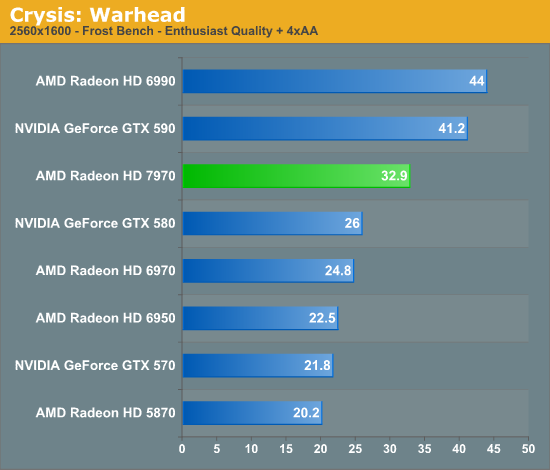

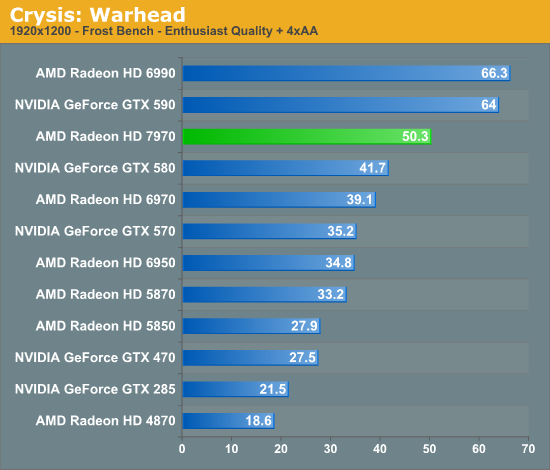

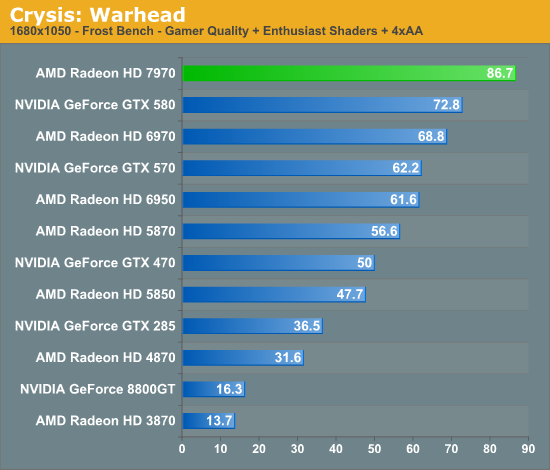

Kicking things off as always is Crysis: Warhead. It’s no longer the toughest game in our benchmark suite, but it’s still a technically complex game that has proven to be a very consistent benchmark. Thus even 4 years after the release of the original Crysis, “but can it run Crysis?” is still an important question, and the answer continues to be “no.” While we’re closer than ever, full Enthusiast settings at 60fps are still beyond the grasp of a single-GPU card.

This year we’ve finally cranked our settings up to full Enthusiast quality for 2560 and 1920, so we can finally see where the bar lies. To that end, the 7970 comes closer than any single-GPU card before it, as we’d expect, but it’s going to take one more jump (~20%) to finally break 60fps at 1920.
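As a rough sanity check on that ~20% figure, the required speedup is simply the target framerate divided by the current one, minus one. The sketch below is only an illustration: `required_gain` is a hypothetical helper, and the 50fps input is back-computed from the ~20% estimate rather than read off the charts.

```python
def required_gain(current_fps: float, target_fps: float = 60.0) -> float:
    """Fractional speedup needed to lift current_fps up to target_fps."""
    if current_fps <= 0:
        raise ValueError("current_fps must be positive")
    return target_fps / current_fps - 1.0


# Assumed input: ~50fps, the average implied by a ~20% shortfall from 60fps.
print(f"{required_gain(50.0):.0%}")  # prints 20%
```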

Looking at the 7970 relative to other cards, there are a few specific points to look at: the GTX 580 is of course its closest competitor, but we can also see how it does compared to AMD’s previous leader, the 6970, and how far we’ve come compared to DX10 generation cards.

One thing that’s clear from the start is that the tendency for leads to scale with the resolution tested still stands. At 2560 the 7970 enjoys a 26% lead over the GTX 580, but at 1920 that’s only a 20% lead and it shrinks just a bit more to 19% at 1680. Even compared to the 6970 that trend holds, as a 32% lead is reduced to 28% and then 26%. If the 7970 needs high resolutions to really stretch its legs that will be good news for Eyefinity users, but given that most gamers are still on a single monitor it may leave AMD closer to 40nm products in performance than they’d like.

Speaking of 40nm products, both of our dual-GPU entries, the Radeon HD 6990 and GeForce GTX 590, are enjoying lofty leads over the 7970, even though the 7970 has the advantage of a smaller fabrication process. To catch up to those dual-GPU cards from the 6970 would require a 70%+ increase in performance, and even with a full node difference it’s clear that this is not going to happen. Not that it’s completely out of reach for the 7970 once you start looking at overclocking, but the reduction in power usage when moving from TSMC 40nm to 28nm isn’t nearly large enough to make that happen while maintaining the 6970’s power envelope. Dual-GPU owners will continue to enjoy a comfortable lead over even the 7970 for the time being, but with the 7970 being built on a 28nm process the power/temp tradeoff for those cards is even greater compared to 40nm products.

Meanwhile it’s interesting to note just how much progress we’ve made since the DX10 generation: at 1920 the 7970 is 130% faster than the GTX 285 and 170% faster than the Radeon HD 4870. Existing users who skip a generation are a huge market for AMD and NVIDIA, and with this kind of performance they’re in a good position to finally convince those users to make the jump to DX11.
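"X% faster" figures are easy to misread, so it can help to convert them into plain multipliers. The snippet below is a minimal sketch using only the two percentages quoted above; `speedup_from_percent_faster` is a hypothetical helper name.

```python
def speedup_from_percent_faster(percent_faster: float) -> float:
    """Convert 'N% faster' into a multiplier (e.g. 100% faster -> 2.0x)."""
    return 1.0 + percent_faster / 100.0


vs_gtx285 = speedup_from_percent_faster(130)  # 7970 relative to GTX 285
vs_hd4870 = speedup_from_percent_faster(170)  # 7970 relative to Radeon HD 4870

print(f"7970 vs GTX 285: {vs_gtx285:.1f}x")
print(f"7970 vs HD 4870: {vs_hd4870:.1f}x")
```

In other words, 130% faster means roughly 2.3 times the framerate, not 1.3 times.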

Finally it should be noted that Crysis is often a good benchmark for predicting overall performance trends, and as you will see it hasn’t let us down here. How well the 7970 performs relative to its competition will depend on the specific game, but 20-25% isn’t too far off from reality.

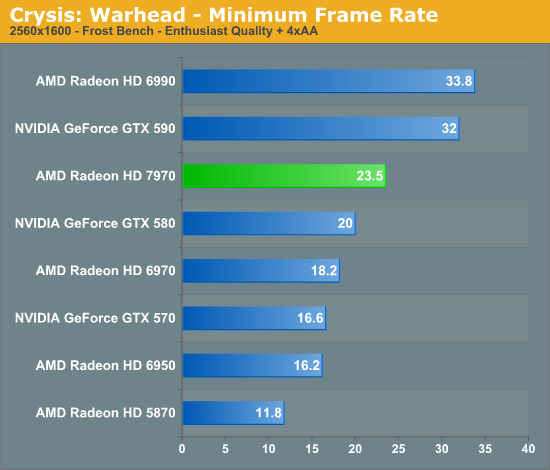

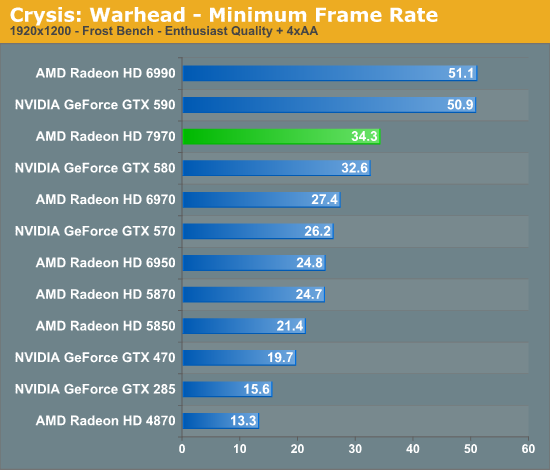

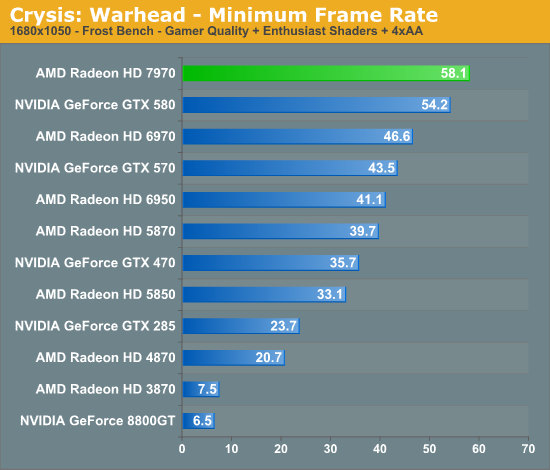

Looking at our minimum framerates, it’s a bit surprising to see that while the 7970 has a clear lead when it comes to average framerates, the minimums are only significantly better at 2560. At that resolution the lowest framerate for the 7970 is 23.5fps versus 20fps for the GTX 580, but at 1920 that becomes a 2fps, 5% difference. It’s not that the 7970 was any less smooth in playing Crysis, but in those few critical moments it looks to be dipping a bit more than the GTX 580.
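To put the minimum-framerate numbers on the same footing as the average-framerate leads, the arithmetic can be sketched as follows. Both helper names are hypothetical, and the baseline implied at 1920 is only approximate because the quoted 2fps and 5% are rounded values.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """How much faster card A is than card B, in percent."""
    return (fps_a / fps_b - 1.0) * 100.0


def implied_baseline(delta_fps: float, lead_percent: float) -> float:
    """Baseline framerate implied by an absolute gap and a percentage gap."""
    return delta_fps / (lead_percent / 100.0)


# 2560: 23.5fps (7970) versus 20fps (GTX 580), as quoted in the text.
print(f"Lead at 2560: {percent_lead(23.5, 20.0):.1f}%")

# 1920: a 2fps gap quoted as 5% implies a GTX 580 minimum near this value.
print(f"Implied GTX 580 minimum at 1920: ~{implied_baseline(2.0, 5.0):.0f}fps")
```

That works out to a 17.5% lead in minimums at 2560, well short of the 26% lead in averages at the same resolution.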

Compared to the 6970 on the other hand the minimum framerate difference is much larger and much more consistent. At 2560 the 7970’s minimums are 29% better, and even at lower resolutions it holds at around 25%. Clearly even with AMD’s new architecture their designs still inherit some of the traits of their old designs.

292 Comments

CrystalBay - Thursday, December 22, 2011

Hi Ryan , All these older GPUs ie (5870 ,gtx570 ,580 ,6950 were rerun on the new hardware testbed ? If so GJ lotsa work there.

FragKrag - Thursday, December 22, 2011

The numbers would be worthless if he didn't

Anand Lal Shimpi - Thursday, December 22, 2011

Yep they're all on the new testbed, Ryan had an insane week.

Take care,

Anand

Lifted - Thursday, December 22, 2011

How many monitors on the market today are available at this resolution? Instead of saying the 7970 doesn't quite make 60 fps at a resolution maybe 1% of gamers are using, why not test at 1920x1080 which is available to everyone, on the cheap, and is the same resolution we all use on our TV's?

I understand the desire (need?) to push these cards, but I think it would be better to give us results the vast majority of us can relate to.

Anand Lal Shimpi - Thursday, December 22, 2011

The difference between 1920 x 1200 vs 1920 x 1080 isn't all that big (2304000 pixels vs. 2073600 pixels, about an 11% increase). You should be able to conclude 19x10 performance from looking at the 19x12 numbers for the most part.

I don't believe 19x12 is pushing these cards significantly more than 19x10 would, the resolution is simply a remnant of many PC displays originally preferring it over 19x10.

Take care,

Anand
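The pixel-count comparison in the reply above is easy to reproduce. This is a minimal sketch of that arithmetic only; it assumes, as the reply does, that pixel count is a reasonable first-order proxy for GPU load, and `pixel_count` is a hypothetical helper name.

```python
def pixel_count(width: int, height: int) -> int:
    """Total number of pixels rendered per frame at a given resolution."""
    return width * height


px_1200 = pixel_count(1920, 1200)
px_1080 = pixel_count(1920, 1080)
extra = px_1200 / px_1080 - 1.0  # fractional increase going from 1080 to 1200 lines

print(px_1200, px_1080)
print(f"{extra:.1%} more pixels at 1920x1200")
```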

piroroadkill - Thursday, December 22, 2011

Dell U2410, which I have :3

and Dell U2412M

piroroadkill - Thursday, December 22, 2011

Oh, and my laptop is 1920x1200 too, Dell Precision M4400.

My old laptop is 1920x1200 too, Dell Latitude D800..

johnpombrio - Wednesday, December 28, 2011

Heh, I too have 3 Dell U2410 and one Dell 2710. I REALLY want a Dell 30" now. My GTX 580 seems to be able to handle any of these monitors tho Crysis High-Def does make my 580 whine on my 27 inch screen!

mczak - Thursday, December 22, 2011

The text for that test is not really meaningful. Efficiency of ROPs has almost nothing to do at all with this test, this is (and has always been) a pure memory bandwidth test (with very few exceptions such as the ill-designed HD5830 which somehow couldn't use all its theoretical bandwidth).

If you look at the numbers, you can see that very well actually, you can pretty much calculate the result if you know the memory bandwidth :-). 50% more memory bandwidth than HD6970? Yep, almost exactly 50% more performance in this test just as expected.

Ryan Smith - Thursday, December 22, 2011

That's actually not a bad thing in this case. AMD didn't go beyond 32 ROPs because they didn't need to - what they needed was more bandwidth to feed the ROPs they already had.
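The "50% more memory bandwidth" figure in the exchange above can be reproduced from bus width and memory data rate. The sketch below is illustrative only: the bus widths and the 5.5Gbps data rate are the cards' public reference specifications, assumed here rather than taken from this page, and `memory_bandwidth_gbs` is a hypothetical helper name.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bytes per transfer times transfers per second."""
    return bus_width_bits / 8 * data_rate_gbps


# Assumed reference specs (not stated on this page):
hd7970 = memory_bandwidth_gbs(384, 5.5)  # 384-bit bus, 5.5Gbps GDDR5
hd6970 = memory_bandwidth_gbs(256, 5.5)  # 256-bit bus, 5.5Gbps GDDR5

print(f"HD 7970: {hd7970:.0f} GB/s, HD 6970: {hd6970:.0f} GB/s")
print(f"Increase: {hd7970 / hd6970 - 1:.0%}")
```

With the memory data rate unchanged, the whole increase comes from the wider bus, which fits the point that AMD needed more bandwidth for the ROPs rather than more ROPs.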