AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

PCI Express 3.0: More Bandwidth For Compute

It may seem like it’s still fairly new, but PCI Express 2.0 is actually a relatively old addition to motherboards and video cards. AMD first added support for it with the Radeon HD 3870 back in 2008, so it’s been nearly 4 years since video cards made the jump. At the same time PCI Express 3.0 has been in the works for some time now, and although it hasn’t been 4 years it feels like it has been much longer. PCIe 3.0 motherboards only became available last month with the launch of the Sandy Bridge-E platform, and now the first PCIe 3.0 video cards are becoming available with Tahiti.

But at first glance it may not seem like PCIe 3.0 is all that important. Additional PCIe bandwidth has proven to be generally unnecessary when it comes to gaming, as single-GPU cards typically only benefit by a couple percent (if at all) when moving from PCIe 2.1 x8 to x16. There will of course come a time when games need more PCIe bandwidth, but right now PCIe 2.1 x16 (8GB/sec) handles the task with room to spare.

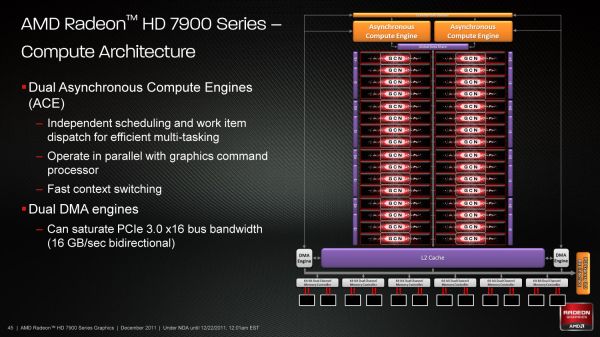

So why is PCIe 3.0 important then? It’s not the games, it’s the computing. GPUs have a great deal of internal memory bandwidth (264GB/sec; more with cache) but shuffling data between the GPU and the CPU is a high latency, heavily bottlenecked process that tops out at 8GB/sec under PCIe 2.1. And since GPUs are still specialized devices that excel at parallel code execution, a lot of workloads exist that will need to constantly move data between the GPU and the CPU to maximize parallel and serial code execution. As it stands today GPUs are really only best suited for workloads that involve sending work to the GPU and keeping it there; heterogeneous computing is a luxury there isn’t bandwidth for.

The long-term solution of course is to bring the CPU and the GPU together, which is what Fusion does. CPU/GPU bandwidth just in Llano is over 20GB/sec, and latency is greatly reduced due to the CPU and GPU being on the same die. But this doesn’t change the fact that AMD also wants to bring some of these same benefits to discrete GPUs, which is where PCIe 3.0 comes in.

With PCIe 3.0 transport bandwidth is again being roughly doubled, from 500MB/sec per lane in each direction to about 1GB/sec per lane in each direction, which for an x16 device means going from 8GB/sec to nearly 16GB/sec. This is accomplished by increasing the signaling rate of the underlying bus from 5 GT/sec to 8 GT/sec, while decreasing encoding overhead from 20% (8b/10b encoding) to roughly 1.5% through the use of a highly efficient 128b/130b encoding scheme. Meanwhile latency doesn’t change – it’s largely a product of physics and physical distances – but merely doubling the bandwidth can greatly improve performance for bandwidth-hungry compute applications.
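For readers who want to check the arithmetic, here is a minimal sketch of how the per-lane and x16 figures fall out of the transfer rate and the encoding scheme. The function names are our own and purely illustrative, and the calculation ignores packet and protocol overhead, so real-world throughput will land somewhat below these numbers.

```python
def lane_bandwidth_mb_s(transfer_rate_gt_s, payload_bits, encoded_bits):
    """Usable bandwidth of one lane in one direction, in MB/sec (1 MB = 10**6 bytes).

    Each transfer carries one bit on the wire, but only payload_bits out of
    every encoded_bits are actual data; the rest is line-encoding overhead.
    """
    usable_bits_per_sec = transfer_rate_gt_s * 1e9 * payload_bits / encoded_bits
    return usable_bits_per_sec / 8 / 1e6


def encoding_overhead(payload_bits, encoded_bits):
    """Fraction of the raw bit rate spent on line encoding."""
    return 1 - payload_bits / encoded_bits


# PCIe 2.x: 5 GT/sec with 8b/10b encoding
pcie2_lane = lane_bandwidth_mb_s(5, 8, 10)
# PCIe 3.0: 8 GT/sec with 128b/130b encoding
pcie3_lane = lane_bandwidth_mb_s(8, 128, 130)

for name, lane in (("PCIe 2.x", pcie2_lane), ("PCIe 3.0", pcie3_lane)):
    x16_gb_s = lane * 16 / 1000
    print(f"{name}: {lane:.1f} MB/sec per lane, {x16_gb_s:.2f} GB/sec for x16")

print(f"8b/10b overhead:    {encoding_overhead(8, 10):.1%}")
print(f"128b/130b overhead: {encoding_overhead(128, 130):.1%}")
```

Under these assumptions PCIe 3.0 comes out at about 985MB/sec per lane rather than a full 1GB/sec, which is why the x16 figure sits just under 16GB/sec.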

As with any other specialized change like this, the benefit is going to depend heavily on the application being used. However, AMD is confident that there are applications that will completely saturate PCIe 3.0 (and then some), and it’s easy to imagine why.

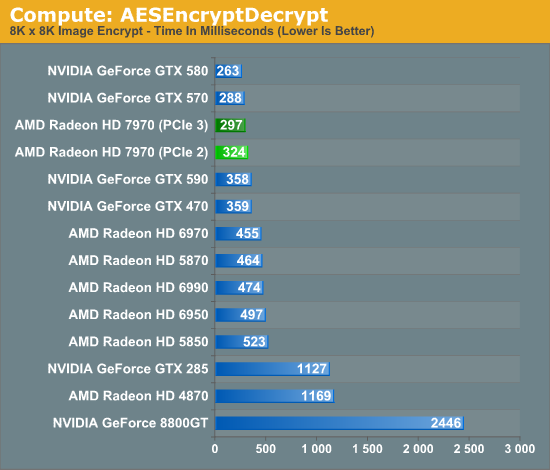

Even among our limited selection of compute benchmarks we found something that directly benefited from PCIe 3.0. AESEncryptDecrypt, a sample application from AMD’s APP SDK, demonstrates AES encryption performance by running it on square image files. Throwing it a large 8K x 8K image not only creates a lot of work for the GPU, but a lot of PCIe traffic too. In our case simply enabling PCIe 3.0 improved performance by 9%, from 324ms down to 297ms.
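As a quick sanity check on that percentage, the sketch below works out the improvement two ways from the two timings quoted above. The helper names are hypothetical and ours alone. The 9% figure matches the throughput reading; measured as a reduction in runtime, the same result is a little over 8%.

```python
def runtime_reduction(before_ms, after_ms):
    """Fraction of the original runtime that was saved."""
    return (before_ms - after_ms) / before_ms


def throughput_gain(before_ms, after_ms):
    """Fractional increase in work completed per unit of time."""
    return before_ms / after_ms - 1


# Timings from the AESEncryptDecrypt run: PCIe 2.x vs. PCIe 3.0
before_ms, after_ms = 324, 297

print(f"Runtime reduction: {runtime_reduction(before_ms, after_ms):.1%}")
print(f"Throughput gain:   {throughput_gain(before_ms, after_ms):.1%}")
```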

Ultimately having more bandwidth is not only going to improve compute performance for AMD, but will give the company a critical edge over NVIDIA for the time being. Kepler will no doubt ship with PCIe 3.0, but that’s months down the line. In the meantime users and organizations with high bandwidth compute workloads have Tahiti.

292 Comments

CeriseCogburn - Sunday, March 11, 2012 - link

We'll have to see if amd "magically" changes that number and informs Anand it was wrong like they did concerning their failed recent cpu.... LOL

That's a whole YEAR of lying to everyone trying to make their cpu look better than its actual fail, and Anand shamefully chose to announce the number change "with no explanation given by amd"...

That's why you should be cautious - we might find out the transistor count is really 33% different a year from now.

piroroadkill - Thursday, December 22, 2011 - link

Only disappointing if you:

a) ignored the entire review

b) looked at only the chart for noise

c) have brain damage

Finally - Thursday, December 22, 2011 - link

In Eyefinity setups the new generation shines: http://tinyurl.com/bu3wb5c

wicko - Thursday, December 22, 2011 - link

I think the price is disappointing. Everything else is nice though.

CeriseCogburn - Sunday, March 11, 2012 - link

The drivers suck

RussianSensation - Thursday, December 22, 2011 - link

Not necessarily. The other possibility is that being 37% better on average at 1080P (from this Review) over HD6970 for $320 more than an HD6950 2GB that can unlock into a 6970 just isn't impressive enough. That should be d).

piroroadkill - Friday, December 23, 2011 - link

Well, I of course have a 6950 2GB that unlocked, so as far as I'm concerned, that has been THE choice since the launch of the 6950, and still is today.

But you have to ignore cost at launch, it's always high.

CeriseCogburn - Thursday, March 8, 2012 - link

I agree RS, as these amd people are constantly screaming price percentage increase vs performance increase... yet suddenly applying the exact combo they use as a weapon against Nvidia to themselves is forbidden, frowned upon, discounted, and called unfair....

Worse yet, according to the same it's all Nvidia's fault now - that amd is overpriced through the roof...

LOL - I have to laugh.

Also, the image quality page in the review was so biased toward amd that I thought I was going to puke.

Amd is given credit for a "perfect algorithm" that this very website has often and for quite some time declared makes absolutely no real world difference in games - and in fact, this very reviewer admitted the 1+ year long amd failure in this area as soon as they released "the fix" - yet argued everyone else was wrong for the prior year.

The same thing appears here.

Today we find out the GTX580 nvidia card has much superior anti-shimmering than all prior amd cards, and that finally, the 7000 high end driver has addressed the terrible amd shimmering....

Worse yet, the decrepit amd low quality impaired screens are allowed in every bench, with the 10% amd performance cheat this very site outlined, then merely stated we hope Nvidia doesn't do this too - then allowed it, since that year plus ago...

In the case of all the above, I certainly hope the high end 797x cards aren't CHEATING LIKE HECK still.

For cripe sakes, get the AA stuff going, stop the 10% IQ cheating, and get our bullet physics or pay for PhysX, and stabilize the drivers .... I am sick of seeing praise for cheating and failures - if they are (amd) so great let's GET IT UP TO EQUIVALENCY !

Wow I'm so mad I don't have a 7970 as supply is short and I want to believe in amd for once... FOR THE LOVE OF GOD DID THEY GET IT RIGHT THIS TIME ?!!?

slayernine - Thursday, December 22, 2011 - link

Holy fan boys batman!

This comment thread reeks of nvidia fans green with jealousy

Hauk - Thursday, December 22, 2011 - link

LOL, Wreckage first!

Love him or hate him, he's got style..