AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

Crysis: Warhead

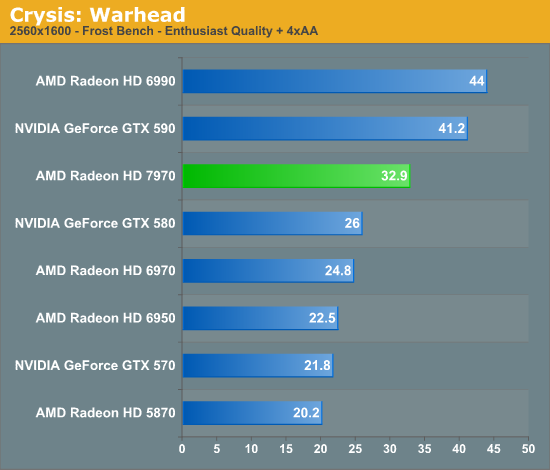

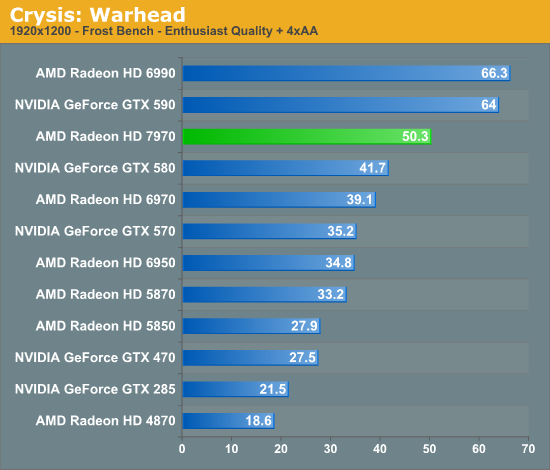

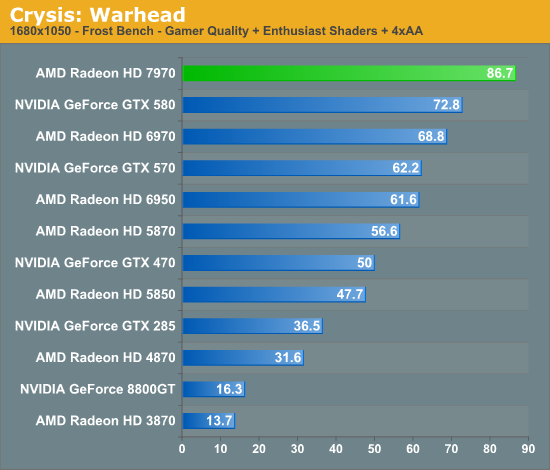

Kicking things off as always is Crysis: Warhead. It’s no longer the toughest game in our benchmark suite, but it’s still a technically complex game that has proven to be a very consistent benchmark. Thus even 4 years after the release of the original Crysis, “but can it run Crysis?” is still an important question, and the answer continues to be “no.” While we’re closer than ever, full Enthusiast settings at 60fps is still beyond the grasp of a single-GPU card.

This year we’ve finally cranked our settings up to full Enthusiast quality for 2560 and 1920, so we can see where the bar lies. To that end the 7970 is, as we’d imagine, closer than any single-GPU card before it, but it’s going to take one more jump (~20%) to finally break 60fps at 1920.
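As a rough sanity check on that ~20% figure, the required uplift can be worked out from a current and a target framerate. This is only a sketch: the function name `required_uplift` is hypothetical, and the 50fps input is simply what a ~20% gap to 60fps implies, not a number read off the charts.

```python
def required_uplift(current_fps: float, target_fps: float) -> float:
    """Return the fractional speedup needed to go from current_fps to target_fps."""
    if current_fps <= 0:
        raise ValueError("current_fps must be positive")
    return target_fps / current_fps - 1.0


# Assumed input: roughly 50fps is what a ~20% shortfall from 60fps implies.
uplift = required_uplift(50.0, 60.0)
print(f"Uplift needed to reach 60fps: {uplift:.0%}")
```

The same helper works for any other target, such as checking how far a card sits from 60fps at 2560.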

Looking at the 7970 relative to other cards, there are a few specific points to look at: the GTX 580 is of course its closest competitor, but we can also see how it does compared to AMD’s previous leader, the 6970, and how far we’ve come compared to DX10 generation cards.

One thing that’s clear from the start is that the tendency for leads to scale with the resolution tested still stands. At 2560 the 7970 enjoys a 26% lead over the GTX 580, but at 1920 that’s only a 20% lead and it shrinks just a bit more to 19% at 1680. Even compared to the 6970 that trend holds, as a 32% lead is reduced to 28% and then 26%. If the 7970 needs high resolutions to really stretch its legs that will be good news for Eyefinity users, but given that most gamers are still on a single monitor it may leave AMD closer to 40nm products in performance than they’d like.

Speaking of 40nm products, both of our dual-GPU entries, the Radeon HD 6990 and GeForce GTX 590, are enjoying lofty leads over the 7970 even with the advantage of its smaller fabrication process. To catch up to those dual-GPU cards from the 6970 would require a 70%+ increase in performance, and even with a full node difference it’s clear that this is not going to happen. Not that it’s completely out of reach for the 7970 once you start looking at overclocking, but the reduction in power usage when moving from TSMC 40nm to 28nm isn’t nearly large enough to make that happen while maintaining the 6970’s power envelope. Dual-GPU owners will continue to enjoy a comfortable lead over even the 7970 for the time being, but with the 7970 being built on a 28nm process the power/temp tradeoff for those cards is even greater compared to 40nm products.

Meanwhile it’s interesting to note just how much progress we’ve made since the DX10 generation; at 1920 the 7970 is 130% faster than the GTX 285 and 170% faster than the Radeon HD 4870. Existing users who skip a generation are a huge market for AMD and NVIDIA, and with this kind of performance they’re in a good position to finally convince those users to make the jump to DX11.

Finally it should be noted that Crysis is often a good benchmark for predicting overall performance trends, and as you will see it hasn’t let us down here. How well the 7970 performs relative to its competition will depend on the specific game, but 20-25% isn’t too far off from reality.

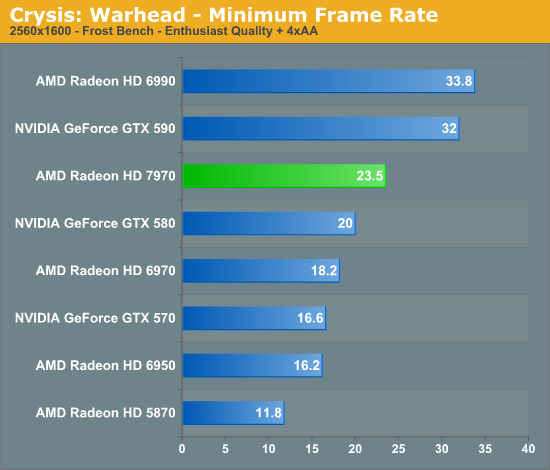

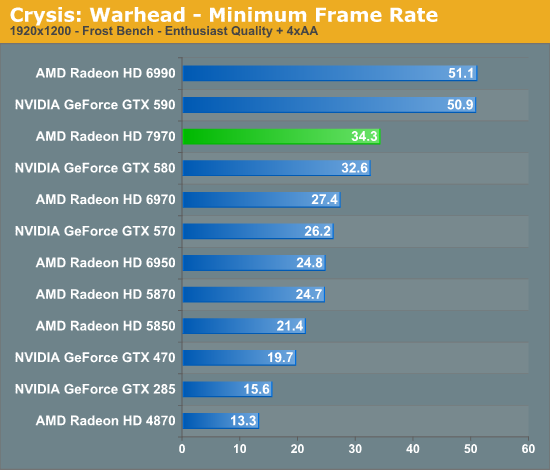

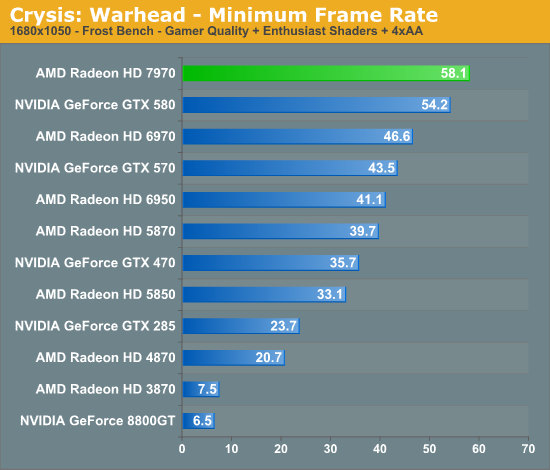

Looking at our minimum framerates it’s a bit surprising to see that while the 7970 has a clear lead when it comes to average framerates the minimums are only significantly better at 2560. At that resolution the lowest framerate for the 7970 is 23.5 versus 20 for the GTX 580, but at 1920 that becomes a 2fps, 5% difference. It’s not that the 7970 was any less smooth in playing Crysis, but in those few critical moments it looks to be dipping a bit more than the GTX 580.
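To put the 2560 minimums in the same percentage terms as the average framerate leads, the calculation can be sketched as below. The function name `percent_lead` is hypothetical; the 23.5fps and 20fps inputs are the minimum framerates quoted above.

```python
def percent_lead(a_fps: float, b_fps: float) -> float:
    """Return the percentage by which a_fps exceeds b_fps."""
    if b_fps <= 0:
        raise ValueError("b_fps must be positive")
    return (a_fps - b_fps) / b_fps * 100.0


# Minimum framerates at 2560 as quoted in the text: 7970 vs. GTX 580.
lead_2560 = percent_lead(23.5, 20.0)
print(f"7970 minimum framerate lead at 2560: {lead_2560:.1f}%")
```

A negative result would mean the first card trails the second, so the same helper covers both directions of a comparison.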

Compared to the 6970 on the other hand the minimum framerate difference is much larger and much more consistent. At 2560 the 7970’s minimums are 29% better, and even at lower resolutions it holds at around 25%. Clearly even with AMD’s new architecture their designs still inherit some of the traits of their old designs.

292 Comments

B3an - Thursday, December 22, 2011

Anyone with half a brain should have worked out that being as this was going to be AMD's Fermi that it would not have had a massive increase for gaming, simply because many of those extra transistors are there for computing purposes. NOT for gaming. Just as with Fermi.

The performance of this card is pretty much exactly as I expected.

Peichen - Friday, December 23, 2011

AMD has been saying for ages that GPU computing is useless and CPU is the only way to go. I guess they just have a better PR department than Nvidia.

BTW, before suggesting I have suffered brain trauma, remember that Nvidia delivered on Fermi 2 and GK100 will be twice as powerful as GF110.

CeriseCogburn - Thursday, March 8, 2012

Well it was nice to see the amd fans with half a heart admit amd has accomplished something huge by abandoning gaming, as they couldn't get enough of screaming it against nvidia... even as the 580 smoked up the top line stretch so many times...

It's so entertaining...

CeriseCogburn - Thursday, March 8, 2012

AMD is the dumb company. Their dumb gpu shaders. Their x86 copying of intel. Now after a few years they've done enough stealing and corporate espionage to "clone" Nvidia architecture and come out with this 7k compute.

If they're lucky Nvidia will continue doing all software groundbreaking and carry the massive load by a factor of ten or forty to one working with game developers, porting open gl and open cl to workable programs and as amd fans have demanded giving them PhysX ported out to open source "for free", at which point it will suddenly be something no gamer should live without.

"Years behind" is the real story that should be told about amd and its graphics - and its cpus as well.

Instead we are fed worthless half truths and lies... a "tesselator" in the HD2900 (while pathetic dx11 perf is still the amd norm)... the ddr5 "groundbreaker" ( never mentioned was the sorry bit width that made cheap 128 and 256 the reason for ddr5 needs)...

Etc.

When you don't see the promised improvement, the radeonites see a red rocket shooting to the outer depths of the galaxy and beyond...

Just get ready to pay some more taxes for the amd bailout coming.

durinbug - Thursday, December 22, 2011

I was intrigued by the comment about driver command lists, somehow I missed all of that when it happened. I went searching and finally found this forum post from Ryan: http://forums.anandtech.com/showpost.php?p=3152067...

It would be nice to link to that from the mention of DCL for those of us not familiar with it...

digitalzombie - Thursday, December 22, 2011

I know I'm a minority, but I use Linux to crunch data and a GPU would help a lot...

I was wondering if you guys can try to use these cards on Debian/Ubuntu or Fedora? And maybe report if 3d acceleration actually works? My current amd card has a bad driver for Linux, with shearing and glitches, which sucks when I try to number crunch and map stuff out graphically in 3d. Hell, I tried compiling the driver's source code and it doesn't work.

Thank you!

WaltC - Thursday, December 22, 2011

Somebody pinch me and tell me I didn't just read a review of a brand-new, high-end ATi card that apparently *forgot* Eyefinity is a feature the stock nVidia 580--the card the author singles out for direct comparison with the 7970--doesn't offer in any form. Please tell me it's my eyesight that is failing, because I missed the benchmark bar charts detailing the performance of the Eyefinity 6-monitor support in the 7970 (but I do recall seeing esoteric bar-chart benchmarks for *PCIe 3.0* performance comparisons, however. I tend to think that multi-monitor support, or the lack of it, is far more an important distinction than PCIe 3.0 support benchmarks at present.)

Oh, wait--nVidia's stock 580 doesn't do nVidia's "NV Surround triple display" and so there was no point in mentioning that "trivial fact" anywhere in the article? Why compare two cards so closely but fail to mention a major feature one of them supports that the other doesn't? Eh? Is it the author's opinion that multi-monitor gaming is not worth having on either gpu platform? If so, it would be nice to know that by way of the author's admission. Personally, I think that knowing whether a product will support multi monitors and *playable* resolutions up to 5760x1200 ROOB is *somewhat* important in a product review. (sarcasm/massive understatement)

Aside from that glaring oversight, I thought this review was just fair, honestly--and if the author had been less interested in apologizing for nVidia--we might even have seen a better one. Reading his hastily written apologies was kind of funny and amusing, though. But leaving out Eyefinity performance comparisons by pretending the feature isn't relevant to the 7970, or that it isn't a feature worth commenting on relative to nVidia's stock 580? Very odd. The author also states: "The purpose of MST hubs was so that users could use several monitors with a regular Radeon card, rather than needing an exotic all-DisplayPort “Eyefinity edition” card as they need now," as if this is an industry-standard component that only ATi customers are "asking for," when it sure seems like nVidia customers could benefit from MST even more at present.

I seem to recall reading the following statement more than once in this review but please pardon me if it was only stated once: "... but it’s NVIDIA that makes all the money." Sorry but even a dunce can see that nVidia doesn't now and never has "made all the money." Heh...;) If nVidia "made all the money," and AMD hadn't made any money at all (which would have to be the case if nVidia "made all the money") then we wouldn't see a 7970 at all, would we? It's possible, and likely, that the author meant "nVidia made more money," which is an independent declaration I'm not inclined to check, either way. But it's for certain that in saying "nVidia made all the money" the author was--obviously--wrong.

The 7970 is all the more impressive considering how much longer nVidia's had to shape up and polish its 580-ish driver sets. But I gather that simple observation was also too far fetched for the author to have seriously considered as pertinent. The 7970 is impressive, AFAIC, but this review is somewhat disappointing. Looks like it was thrown together in a big hurry.

Finally - Friday, December 23, 2011

On AT you have to compensate for their over-steering while reading.

Death666Angel - Thursday, December 22, 2011

"Intel implemented Quick Sync as a CPU company, but does that mean hardware H.264 encoders are a CPU feature?" << Why is that even a question? I cannot use the feature unless I am using the iGPU or use the dGPU with Lucid Virtu. As such, it is not a feature of the CPU in my book.

Roald - Thursday, December 22, 2011

I don't agree with the conclusion. I think it's much more of a perspective thing. Coming from the 6970 to the 7970 it's not a great win in the gaming department. However the same can be said of the change from 4870 to 5870 to 6970. The only real benefit the 5870 had over the 4870 was DX11 support, which didn't mean so much for the games at the time.

Now there is a new architecture that not only manages to increase FPS in current games, it also has growing potential and manages to excel in the compute field as well at the same time.

The conclusion made in the Crysis Warhead part of this review should therefore also have been highlighted as final words.