Intel Core i7 3960X (Sandy Bridge E) Review: Keeping the High End Alive

by Anand Lal Shimpi on November 14, 2011 3:01 AM EST

Posted in: CPUs, Intel, Core i7, Sandy Bridge, Sandy Bridge E

If you look carefully enough, you may notice that things are changing. It first became apparent shortly after the release of Nehalem. Intel bifurcated the performance desktop space by embracing a two-socket strategy, something we'd never seen from Intel and only once from AMD in the early Athlon 64 days (Socket-940 and Socket-754).

LGA-1366 came first, but by the time LGA-1156 arrived a year later it no longer made sense to recommend Intel's high-end Nehalem platform. Lynnfield was nearly as fast and the entire platform was more affordable.

When Sandy Bridge launched earlier this year, all we got was the mainstream desktop version. No one complained because it was fast enough, but we all knew an ultra high-end desktop part was in the works. A true successor to Nehalem's LGA-1366 platform for those who waited all this time.

Left to right: Sandy Bridge E, Gulftown, Sandy Bridge

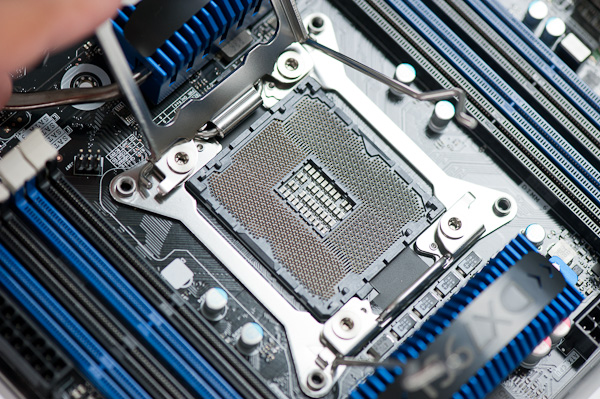

After some delays, Sandy Bridge E is finally here. The platform is actually pretty simple to talk about. There's a new socket (LGA-2011), a new chipset (Intel's X79) and of course the Sandy Bridge E CPU itself. We'll start with the CPU.

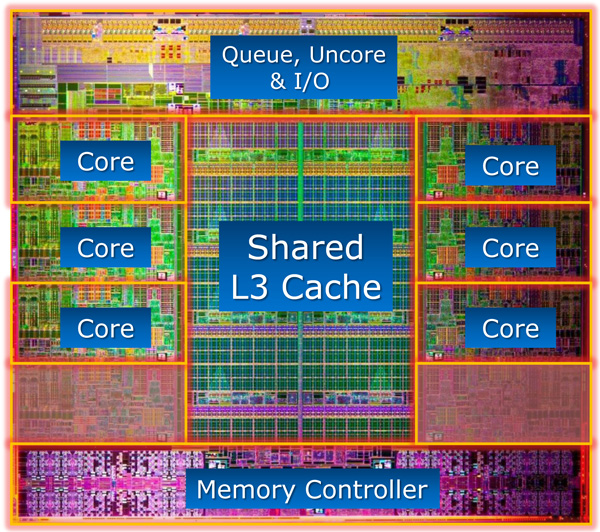

For the desktop, Sandy Bridge E is only available in 6-core configurations at launch. Early next year we'll see a quad-core version. I mention the desktop qualification because Sandy Bridge E is really a die-harvested Sandy Bridge EP, Intel's next generation Xeon part.

If you look carefully at the die shot above, you'll notice that there are actually eight Sandy Bridge cores. The Xeon version will have all eight enabled, but the last two are fused off for SNB-E. The 32nm die is absolutely gigantic by desktop standards: at 20.8 mm x 20.9 mm (~435mm²), Sandy Bridge E is bigger than most GPUs. It also has a ridiculous number of transistors: 2.27 billion.

Around a quarter of the die is dedicated just to the chip's massive L3 cache. Each cache slice has increased in size compared to Sandy Bridge. Instead of 2MB, Sandy Bridge E boasts 2.5MB cache slices. In its Xeon configuration that works out to 20MB of L3 cache, but for desktops it's only 15MB. That's just 1MB shy of how much system memory my old upgraded 386-SX/20 had.
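The cache and die figures above are easy to sanity-check. The short Python sketch below is illustrative only: the names are made up for this example, the numbers come straight from the text, and it assumes each enabled core contributes one full 2.5MB slice (which matches the 20MB and 15MB totals quoted here).

```python
# Figures quoted in the text; the names are illustrative, not Intel terminology.
SLICE_MB = 2.5                   # L3 slice size per core on Sandy Bridge E
DIE_W_MM, DIE_H_MM = 20.8, 20.9  # die dimensions in millimetres


def l3_total_mb(active_slices: int, slice_mb: float = SLICE_MB) -> float:
    """Total L3, assuming every enabled core brings one full cache slice."""
    return active_slices * slice_mb


xeon_l3 = l3_total_mb(8)         # all eight cores and slices enabled
desktop_l3 = l3_total_mb(6)      # two cores and their slices fused off
die_area = DIE_W_MM * DIE_H_MM   # rectangular die area in mm^2

print(f"Xeon L3: {xeon_l3:g}MB, desktop L3: {desktop_l3:g}MB")
print(f"Die area: {die_area:.1f}mm^2")
```

The die area comes out just under the ~435mm² quoted above, so the rounded figure is consistent with the stated dimensions.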

CPU Specification Comparison

| CPU | Manufacturing Process | Cores | Transistor Count | Die Size |
| --- | --- | --- | --- | --- |
| AMD Bulldozer 8C | 32nm | 8 | 1.2B* | 315mm² |
| AMD Thuban 6C | 45nm | 6 | 904M | 346mm² |
| AMD Deneb 4C | 45nm | 4 | 758M | 258mm² |
| Intel Gulftown 6C | 32nm | 6 | 1.17B | 240mm² |
| Intel Sandy Bridge E (6C) | 32nm | 6 | 2.27B | 435mm² |
| Intel Nehalem/Bloomfield 4C | 45nm | 4 | 731M | 263mm² |
| Intel Sandy Bridge 4C | 32nm | 4 | 995M | 216mm² |
| Intel Lynnfield 4C | 45nm | 4 | 774M | 296mm² |
| Intel Clarkdale 2C | 32nm | 2 | 384M | 81mm² |
| Intel Sandy Bridge 2C (GT1) | 32nm | 2 | 504M | 131mm² |
| Intel Sandy Bridge 2C (GT2) | 32nm | 2 | 624M | 149mm² |

Update: AMD originally told us Bulldozer was a 2B transistor chip. It has since told us that the 8C Bulldozer is actually 1.2B transistors. The die size is still accurate at 315mm².
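One rough way to compare the chips in the table is transistor density. The Python sketch below divides the quoted transistor counts by the quoted die sizes for a few of the 32nm parts. Treat it as a back-of-the-envelope illustration rather than a rigorous comparison: the helper name is made up for this example, and vendors do not necessarily count transistors the same way (the Bulldozer revision above is a reminder of that).

```python
# (transistor count, die area in mm^2) copied from the comparison table.
chips = {
    "AMD Bulldozer 8C": (1.2e9, 315),
    "Intel Gulftown 6C": (1.17e9, 240),
    "Intel Sandy Bridge E (6C)": (2.27e9, 435),
    "Intel Sandy Bridge 4C": (995e6, 216),
}


def density_m_per_mm2(transistors: float, area_mm2: float) -> float:
    """Millions of transistors per square millimetre of die."""
    return transistors / area_mm2 / 1e6


densities = {name: density_m_per_mm2(t, a) for name, (t, a) in chips.items()}

for name, d in densities.items():
    print(f"{name}: {d:.2f}M transistors/mm^2")
```

On these numbers Sandy Bridge E is the densest of the four, which fits a die where a large share of the area is cache.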

At the core level, Sandy Bridge E is no different than Sandy Bridge. It doesn't clock any higher, L1/L2 caches remain unchanged and per-core performance is identical to what Intel launched earlier this year.

The Lineup

| Processor | Core Clock | Cores / Threads | L3 Cache | Max Turbo | Max Overclock Multiplier | TDP | Price |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Intel Core i7 3960X | 3.3GHz | 6 / 12 | 15MB | 3.9GHz | 57x | 130W | $990 |
| Intel Core i7 3930K | 3.2GHz | 6 / 12 | 12MB | 3.8GHz | 57x | 130W | $555 |
| Intel Core i7 3820 | 3.6GHz | 4 / 8 | 10MB | 3.9GHz | 43x | 130W | TBD |
| Intel Core i7 2700K | 3.5GHz | 4 / 8 | 8MB | 3.9GHz | 57x | 95W | $332 |
| Intel Core i7 2600K | 3.4GHz | 4 / 8 | 8MB | 3.8GHz | 57x | 95W | $317 |
| Intel Core i7 2600 | 3.4GHz | 4 / 8 | 8MB | 3.8GHz | 42x | 95W | $294 |
| Intel Core i5 2500K | 3.3GHz | 4 / 4 | 6MB | 3.7GHz | 57x | 95W | $216 |
| Intel Core i5 2500 | 3.3GHz | 4 / 4 | 6MB | 3.7GHz | 41x | 95W | $205 |

Those of you buying today only have two options: the Core i7-3960X and the Core i7-3930K. Both have six fully unlocked cores, but the 3960X gives you a 15MB L3 cache vs. 12MB with the 3930K. You pay handsomely for that extra 3MB of L3. The 3960X goes for $990 in 1K unit quantities, while the 3930K sells for $555.

The 3960X has the same 3.9GHz max turbo frequency as the Core i7 2700K; that's with 1 - 2 cores active. With 5 - 6 cores active the max turbo drops to a respectable 3.6GHz. Unlike the old days of many vs. few core CPUs, there are no tradeoffs for performance when you buy a SNB-E. Thanks to power gating and turbo, you get pretty much the fastest possible clock speeds regardless of workload.

Early next year we'll see a Core i7 3820, priced around $300, with only 4 cores and a 10MB L3. The 3820 will only be partially unlocked (max OC multiplier = 4 bins above max turbo).
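The "4 bins above max turbo" rule can be checked against the lineup table. The Python sketch below assumes one multiplier bin equals one step of a 100MHz base clock, the stock setting on these platforms. The function name is hypothetical, and applying the same rule to the non-K 2600 and 2500 is an inference from the table, not something stated in the text.

```python
BCLK_MHZ = 100  # assumed stock base clock; one multiplier bin = one BCLK step


def max_oc_multiplier(max_turbo_ghz: float, extra_bins: int = 4) -> int:
    """Highest multiplier on a partially unlocked part: turbo bins plus extras."""
    turbo_multiplier = round(max_turbo_ghz * 1000 / BCLK_MHZ)
    return turbo_multiplier + extra_bins


# Max turbo frequencies taken from the lineup table.
partially_unlocked = [
    ("Core i7 3820", 3.9),
    ("Core i7 2600", 3.8),
    ("Core i5 2500", 3.7),
]

for name, turbo_ghz in partially_unlocked:
    print(f"{name}: {max_oc_multiplier(turbo_ghz)}x")
```

The results line up with the 43x, 42x and 41x entries in the Max Overclock Multiplier column.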

163 Comments


DanNeely - Monday, November 14, 2011

AMD's been selling 6 core Phenom CPUs since April 2010 (6 core Opterons launched in June 2009). Prior to SB's launch they were very competitive with Intel systems at the same mobo+CPU price points, and while they have fallen behind since then, they are still decent buys for more threaded apps because AMD's slashed prices to compete.

On the Intel side, while hyperthreading isn't 8 real cores, for most workloads 8 threads will run significantly faster than 4.

ClagMaster - Monday, November 14, 2011

This Sandy-Bridge-E is really a desktop supercomputer well-suited for engineering workstations that can solve Abaqus or Monte Carlo programs. With that intent, the Xeon brand of this processor, with eight cores and ECC memory support, is the processor to buy.

The Xeon will very likely have the SAS support that Anand so laments on a specialty chipset based on the X79. And engineering workstations are not made or broken by a lack of native USB 3 controllers.

DDR3 1333 is not slouch memory. With four channels of the memory there will be much faster memory IO than a two channel system on the i7-2700K with the same memory.

This Sandy-Bridge-E consumer chip is for those true, frothing, narcissistic enthusiasts who have thousands of USD to burn and want the bragging rights.

I suppose it's their money to waste and their chests to thump.

As for myself, I would have purchased an ASUS C206 workstation and an E3-1240 Xeon processor.

sylar365 - Monday, November 14, 2011

Everybody is seeing the benchmarks and claiming that this processor is overkill for gaming, but aren't all of these "real world" gaming benchmarks run with the game being the ONLY application open at the time of testing? I understand that you need to reduce the number of variables in order to produce accurate numbers across multiple platforms, but what I really want to know, more than "can it run (insert game) at 60fps", is this:

Can it run (for instance) Battlefield 3 multiplayer on "High" ALONGSIDE Origin, Chrome, Skype, Pandora One and streaming software while giving a decent stream quality?

Streaming gameplay has become popular. Justin.tv has made Twitch.tv as a separate site just to handle all of the gamers streaming themselves in gameplay. Streaming software such as Xsplit Broadcaster is doing REAL TIME video encoding of screen captures or Gamesource and then bundling for streaming all in one swoop and ALL WHILE PLAYING THE GAME AT THE SAME TIME. For streamers who count on ad revenue as a source of income it becomes less about Time = Money and more about Quality = Money since everything is required to happen in real time. I happen to know for a fact that a 2500K @ 4.0GHz chokes on these tasks and it directly impacts the quality of the streaming experience. Don't even get me started on trying to stream Skyrim at 720p, a game that actually taxes the processor. What is the point of running a game at its highest possible settings at 60fps if the people watching only see something like a watercolor re-imagining at the other end? Once you hurdle bandwidth constraints and networking issues the stream quality is nearly 100% dependent on the processor and its immediate subsystem. Video cards need not apply here.

There has got to be a way to determine if multiple programs can be run in different threads efficiently on these modern processors. Or at least a way to see whether or not there would be an advantage to having a 3960x over a 2500k in a situation like I am describing. And I know I can't be the only person who is running more than one program at a time. (Am I?) I mean, I understand that some applications are not coded to benefit from more than one core, but can multi-core or multi-threaded processors even help in situations where you are actually using more than one single threaded (or multi-threaded) application at a time? What would the impact of quad-channel memory be when, heaven forbid, TWO taxing applications are being run at the SAME TIME!? GASP!

N4g4rok - Monday, November 14, 2011

That's a good point, but don't forget that a lot of games are so CPU intensive that it would take more than just background applications to cause the CPU to lose its performance during gameplay. I can't agree with the statement that streaming video will be completely dependent on the processor. The right software will support hardware acceleration, and would likely tax the GPU just as much as the CPU.

However, with this processor, and a lot of Intel processors with hyper-threading, you would be sacrificing just a little bit of its turbo frequency to deal with those background applications, which should not be a problem for this system.

Also, keep in mind that benchmarks are just trying to give a general case. If you know how well one application runs, and you know how well another runs, you should be able to come up with a rough idea of how it will handle both of those tasks at the same time. And it's likely that the system running these games is also running necessary background software. You can assume things like Intel's Turbo Boost controller or the GPU driver software, etc.

N4g4rok - Monday, November 14, 2011

"but don't forget that a lot of games are so CPU intensive that it would take more than...."

My mistake, I meant 'GPU' here.

sylar365 - Monday, November 14, 2011

"The right software will support hardware acceleration, and would likely tax the GPU just as much as the CPU"

In almost every modern game I wouldn't want my streaming software to utilize the GPU(s) since it is already being fully utilized to make the game run smoothly. Besides, most streaming software I know of doesn't even have the option to use that hardware yet. If it did I suppose you could start looking at Tesla cards just to help process the conversion and encoding of stream video, but then you are talking about multiple thousands of dollars just for the Tesla hardware. You should check out Tom's own BF3 performance review and see how much GPU compute power would be left after getting a smooth experience at 1080p for the local machine. It seems like the 3960X could help. But I will evidently need to take the gamble of spending $xxxx myself since I don't get hardware sent to me for review and no review sites are posting any type of results for using two power hungry applications at the same time.

N4g4rok - Tuesday, November 15, 2011

No kidding.

Even with its performance, it's difficult to justify that price.

shady28 - Monday, November 14, 2011

Could rename this article 'Core i7 3960X - Diminishing Returns'

Not impressed at all with this new chip. Maybe if you're doing a ton of multitasking all the time (like constantly doing background decoding) it would be worth it, but even in the multitasking benchmarks it isn't exactly revolutionary.

If multitasking is that big of a deal, you're better off getting a G34 board and popping in a pair of 8 or 12 core Magny Cours AMDs. Or maybe the new 16 core Interlagos G34. Heck, the 16 core is selling for $650 at NewEgg already.

For anything else, it's really only marginally faster while probably being considerably more expensive.

Bochi - Monday, November 14, 2011

Can we get benchmarks that show the potential impact of the greater CPU power & memory bandwidth? This may be overkill for gaming at 1920 x 1080. However, I would like to know what type of performance changes are possible when it's used on a top end Crossfire or SLI system.

rs2 - Monday, November 14, 2011

"I had to increase core voltage from 1.104V to 1.44V, but the system was stable."

Surely that is a typo?